IBM, Meta form AI Alliance: What can it accomplish?

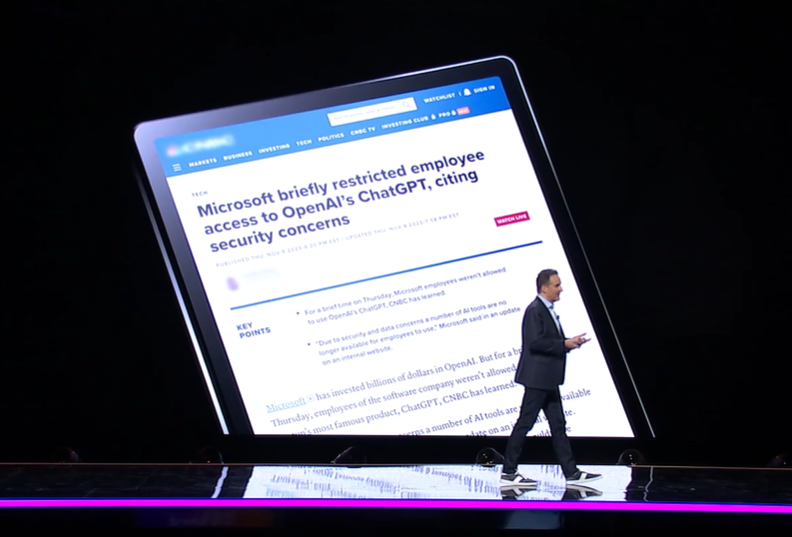

IBM and Meta have formed the AI Alliance with 50 founding members aiming to provide "open and transparent innovation" in artificial intelligence. The catch is that many of the big names--Google, Nvidia and Microsoft--driving the AI advances so far aren't among the founding members.

The concept behind the AI Alliance is notable in that the group wants to bring international players, academics, researchers, governments and developers together to provide an open science and technology ecosystem. The tension around technologies like generative AI comes down to for-profit motives vs. doing what's right for humanity. Security expert Bruce Schneier recently penned a long essay about AI and trust that is worth a read.

And IBM and Meta have a history of contributing to open source as well as initiatives such as Open Compute Project. However, it remains to be seen what the AI Alliance ultimately accomplishes without some key AI leaders.

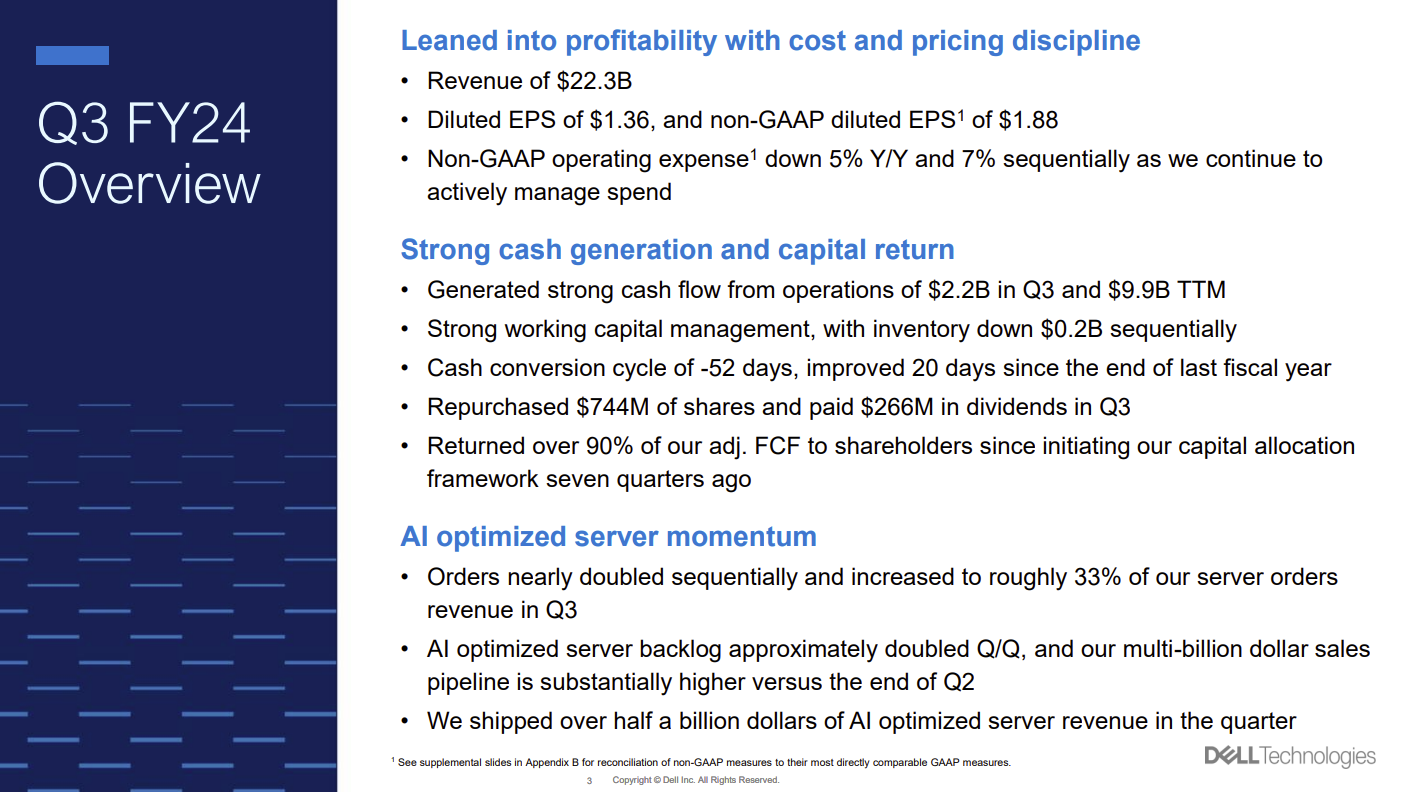

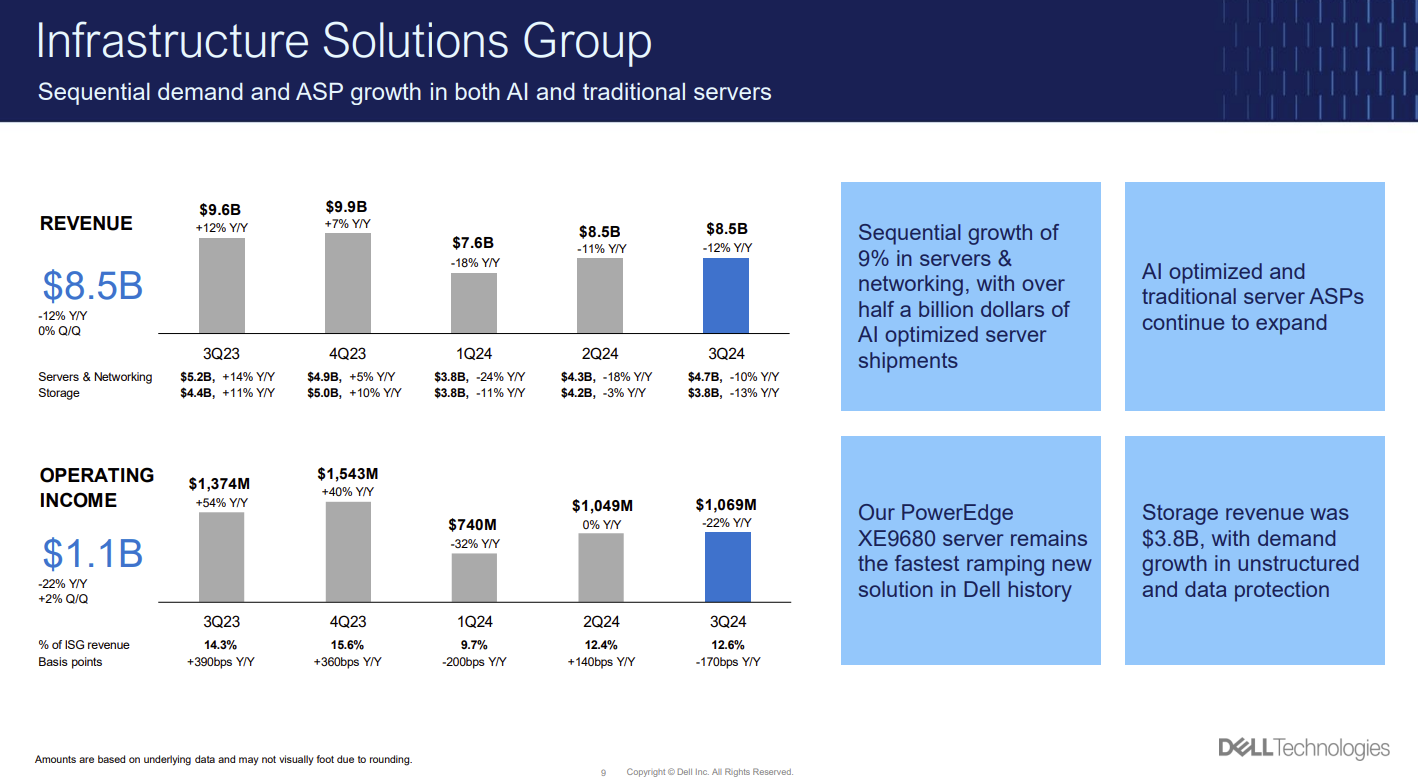

The founding members and collaborators include the following: AMD, Anyscale, CERN, Cerebras, Cleveland Clinic, Cornell University, Dartmouth College, Dell Technologies, EPFL, ETH, Hugging Face, Imperial College London, Intel, INSAIT, Linux Foundation, MLCommons, MOC Alliance operated by Boston University and Harvard University, NASA, NSF, Oracle, Partnership on AI, Red Hat, Roadzen, ServiceNow, Sony Group, Stability AI, University of California Berkeley, University of Illinois, University of Notre Dame, The University of Tokyo, Yale University and others.

Constellation Research analyst Andy Thurai was quick to note who was missing. He said:

"Major A list players such as AWS, Databricks, Snowflake, Dataiku, Data Robot, Domino Data Lab, Scale.AI, NVIDIA, Microsoft, OpenAI, Google, Anthropic, Cohere, AI 21 Labs and many others are missing from the list."

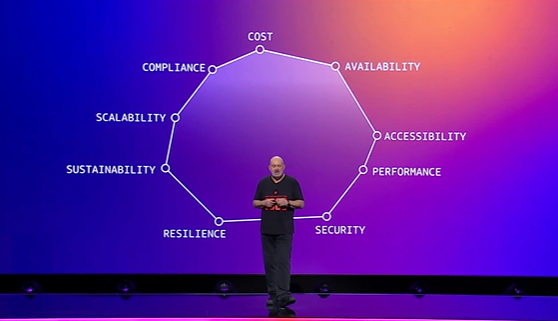

As with any alliance, federation or task force, the jury is out until something actually happens. The AI Alliance indicated that it will be action-oriented and focus on "fostering an open community and enabling developers and researchers to accelerate responsible innovation in AI while ensuring scientific rigor, trust, safety, security, diversity and economic competitiveness."

Focus areas for the AI Alliance include:

- Develop benchmarks and evaluation standards for responsible development of AI systems as well as tools for safety, security and trust.

- Advance an ecosystem of open foundation models with diversity on many fronts.

- Create an AI hardware accelerator ecosystem.

- Support AI skills and research.

- Develop educational content and resources.

Meta and IBM's experience in open source as well as open hardware could come in handy for kicking off the AI Alliance. Simply put, the group is worth watching pending further developments.

Thurai said it's unclear what the AI Alliance will ultimately accomplish. He said:

"There are many tools and software components, both open source and commercial already available focused on AI Alliance initiatives. Depending on what is measured there are standards out there. For example, tools like GAIA, MLPerf, and other tools to measure F1 score, model performance, accuracy, and drift are available. I am not sure what the alliance is offering at this point. There are some promises made which are yet to be seen. Tools like Fairlearn, AI fairness 360, Accenture Fairness Tool, etc. to measure model fairness are already available. Some popular benchmarking tools include DAWNBench, GLUE, and SQuAD."
