ServiceNow's Strategic Portfolio Management Gains Generative AI, Many Key Updates

Vice President and Principal Analyst

Constellation Research

Title: About Dion Hinchcliffe

Dion Hinchcliffe is an internationally recognized business strategist, bestselling author, enterprise architect, industry analyst, and noted keynote speaker. He is widely regarded as one of the most influential figures in digital strategy, the future of work, and enterprise IT. He works with the leadership teams of large enterprises as well as software vendors to raise the bar for the art-of-the-possible in their digital capabilities.

He is currently Vice President and Principal Analyst at Constellation Research. Dion is also currently an executive fellow at the Tuck School of Business Center for Digital Strategies. He is a globally recognized industry expert on the topics of digital transformation, digital workplace, enterprise collaboration, API…...


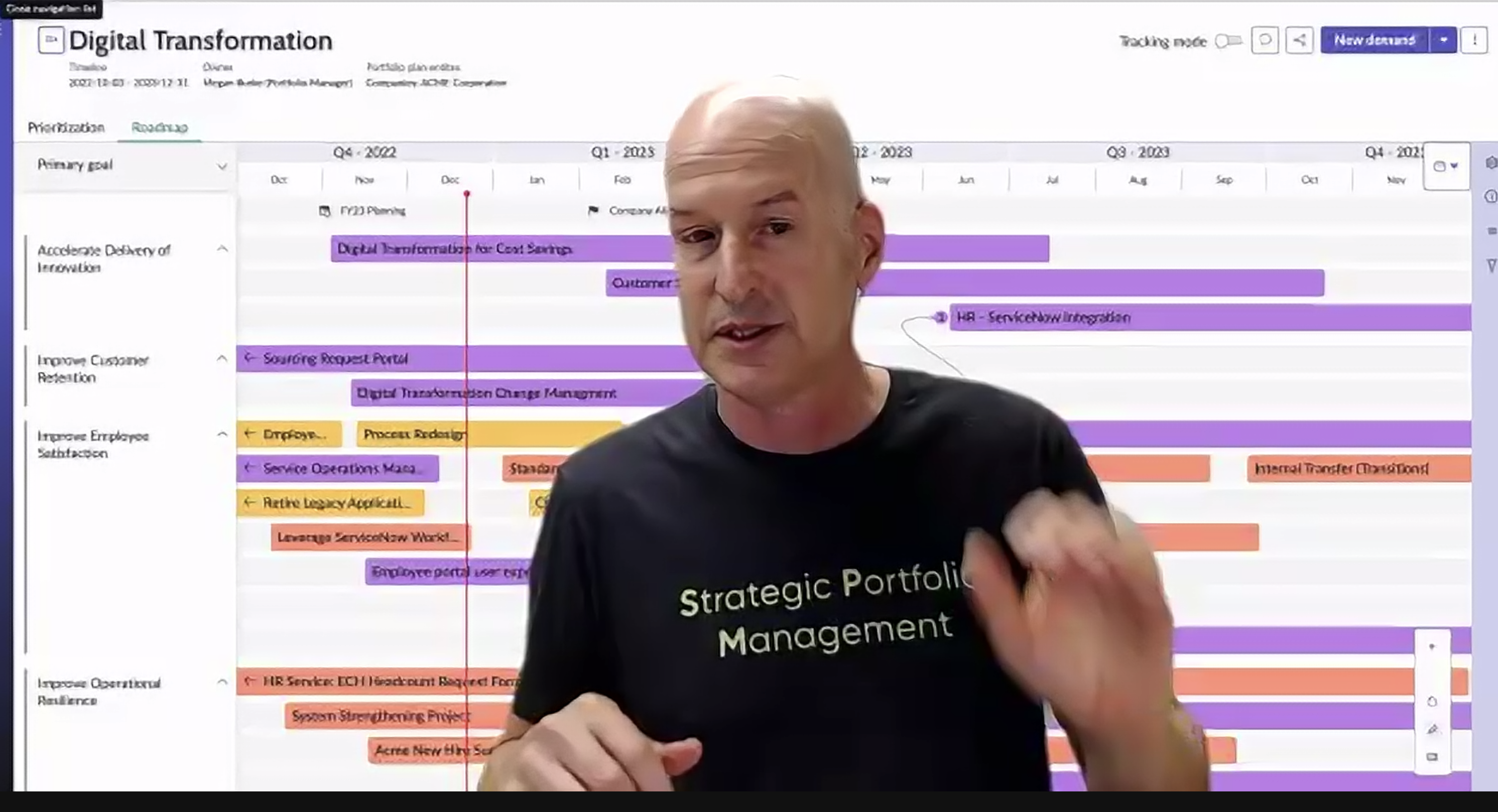

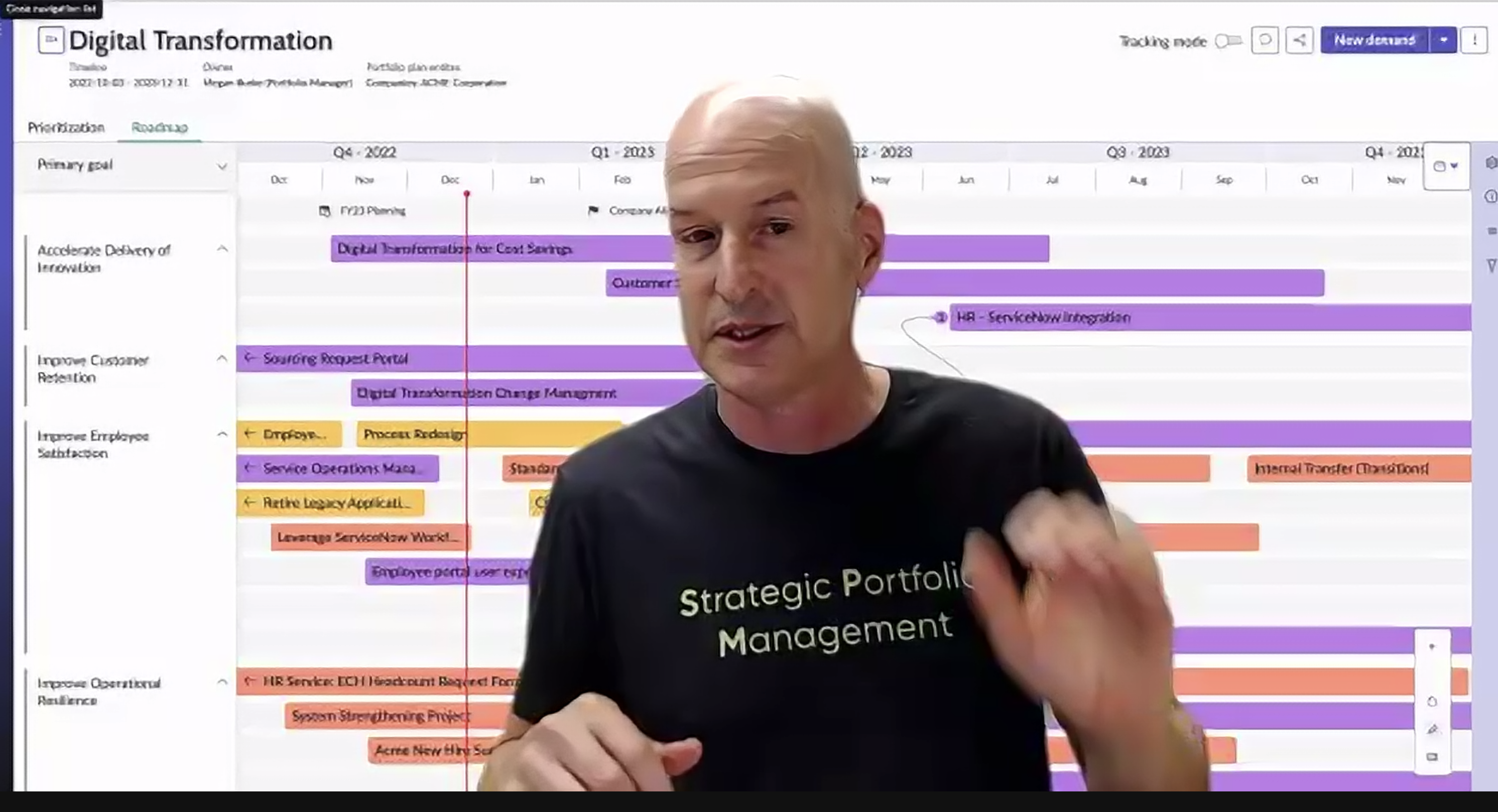

In the March 2024 Vision update delivered today, the team behind ServiceNow's Strategic Portfolio Management (SPM) platform provided a deep dive on the significant recent advancements within the product, which are aimed at empowering organizations to optimize their investment decision-making processes. I'll delve into the most impactful updates from today, with a particular focus on the integration of generative AI, enhanced user experience, and the introduction of collaborative work management features, among a long list of other enhancements that were presented this morning.

It's evident that ServiceNow considers SPM a fundamentally strategic enterprise capability that takes the company well beyond its ITSM roots, helping leaders from CIOs to program managers deliver on their overarching business and IT objectives across portfolios, programs, and projects. Today's announcements continue to expand the platform into broad new areas and capabilities, while thoroughly modernizing it around a contemporary view of value stream management that spans industries, lenses, experience models, and project types.

ServiceNow's SPM Is Intended As a One-Stop-Shop for All Strategic Portfolio, Program, and Project Needs

A Journey From IT Projects to All Enterprise Program Portfolios

ServiceNow's Strategic Portfolio Management (SPM) has undergone a significant evolution since its inception in 2016. Originally, the platform focused primarily on IT portfolio management, and was known then as IT Business Management. During this stage, its core functionality centered on managing the lifecycle of IT projects and investments, ensuring alignment with IT strategy and optimizing resource allocation.

However, in 2022, ServiceNow recognized the growing need for a more comprehensive approach to portfolio management. Businesses were increasingly demanding a solution that could manage not just IT projects but the entire program and project portfolio across the organization, encompassing both IT and business initiatives. This shift reflected the growing cross-functional importance of digital transformation, where IT plays a crucial role in supporting and enabling business goals as organizations broadly move into a more tech-driven future.

To address this need, ServiceNow's IT portfolio management solution transformed into the richer and more robust Strategic Portfolio Management (SPM) platform. This evolution expanded the tool's capabilities well beyond IT-centric projects. SPM now caters to managing the entire program and project portfolio, encompassing both IT and business initiatives. This holistic approach ensures full alignment between business strategy and investment decisions, fostering a more strategic and integrated approach to resource allocation.

The Latest Updates on ServiceNow SPM

Yoav Boaz, VP and General Manager of Strategic Portfolio Management (SPM) for ServiceNow, first delivered an update on the product's latest advancements and customer success stories. "We just want to point out the major investments ServiceNow is making within SPM and within our product line. Some of the innovations that we brought to market last year were around product feedback that some of you are already deploying, with benchmarks where you can compare your KPIs to other [ServiceNow] customers' KPIs, to different industry lenses and process optimizations where you can see what the bottlenecks are happening within the demand or ideation, your resource assignment, your resource management, workspaces and so on," said Boaz, as he underscored how SPM is a workspace to manage nearly every type of major transformation and value stream within an enterprise today.

ServiceNow's Yoav Boaz Kicked Off the March 2024 Vision Update for Strategic Portfolio Management

Here's a breakdown of the most salient trends and changes Boaz explored. The latest product innovations and updates in SPM include:

- ServiceNow has continued investing extensively in SPM, with numerous major new features.

- Benchmarking for comparing KPIs with other customers.

- Industry-specific lenses for tailored insights.

- Process optimization to identify bottlenecks in demand management, resource allocation, and workspace utilization.

- Revamped enterprise HR planning.

- Scenario planning for evaluating different future possibilities.

- Collaborative work management for enhanced teamwork.

Adoption of the Pro version of SPM grew by over 50% in the past year, which indicates strong customer engagement with new capabilities. ServiceNow is now positioned as a leader in both Strategic Portfolio Management and Value Stream Management in a number of industry reports.

Key customer trends and success stories for SPM:

- The market is shifting from project-centric to a product value stream approach. Customers are focusing on understanding the interconnectedness of products and their overall value delivery.

- Organizations are increasingly deploying SPM across the enterprise, not just within specific departments, to align leadership priorities with resource allocation and investment decisions.

- Generative AI (Gen AI) is generating significant interest among customers, with ServiceNow planning to share its roadmap and initial Gen AI release details in early May.

A number of key SPM customer success stories were highlighted:

- Western Governors University: Improved demand and value stream management.

- Juniper Networks: Enhanced demand management.

- Premise Health: Achieved over $1.7 million in savings through improved project management.

- MKS (semiconductor manufacturer): Increased project deliveries by 29% and project completion rates by 30% with SPM.

- Anonymous US government agency: Reduced project management costs by over 15% and saved 67% of time spent on program management using SPM on a $17 billion IT budget.

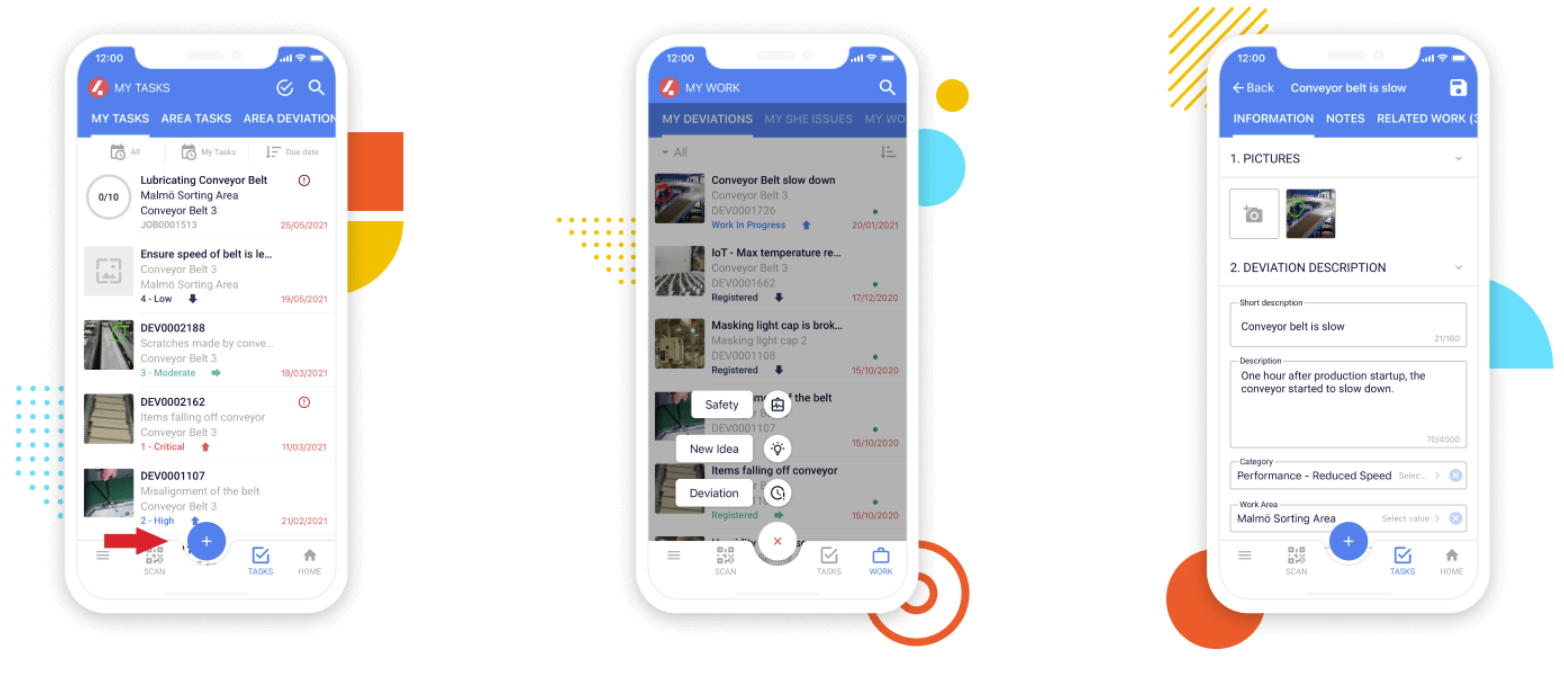

How ServiceNow Strategic Portfolio Management (SPM) Supports Enterprise Portfolios Including Scaled Agile

Carina Hatfield, Senior Director of Inbound Product Management, and James Ramsay, Product Management Director of Strategic Portfolio Management/Application Portfolio Management, then explored the roadmap and the expansive new functionalities in ServiceNow's SPM, highlighting its support for Scaled Agile's popular framework. Here are my key takeaways:

Lenses:

- New "Business Capability Lens" allows organizations to integrate business capability planning with strategic processes.

- Lenses provide flexibility for planning based on different structures like product, value stream, or digital transformation initiatives.

Requirements Management:

SPM can handle a wide variety of work types, including Scaled Agile work alongside traditional projects and demands.

Value Streams:

- Value stream view showcases the relationships between epics, products, and supporting technologies.

- Architectural runway concept helps visualize dependencies between customer value, technical feasibility, and enabling technologies.

Capacity Planning:

This new feature ensures planned work aligns with available team capacity to avoid resource overload.
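To make the capacity planning idea concrete, here is a minimal sketch of the underlying check: sum the planned hours per team and flag any team whose plan exceeds its available capacity. This is purely illustrative and is not ServiceNow's implementation or API; the function name `find_overloaded_teams`, the field names, and the sample figures are all assumptions of mine.

```python
from collections import defaultdict


def find_overloaded_teams(planned_work, capacity_hours):
    """Return {team: hours_over_capacity} for teams planned beyond capacity.

    planned_work: list of dicts with hypothetical keys "team" and "hours".
    capacity_hours: dict mapping team name to available hours in the period.
    """
    planned = defaultdict(float)
    for item in planned_work:
        planned[item["team"]] += item["hours"]

    # A team with no capacity entry is treated as having zero capacity.
    return {
        team: hours - capacity_hours.get(team, 0.0)
        for team, hours in planned.items()
        if hours > capacity_hours.get(team, 0.0)
    }


# Illustrative data only: a mix of agile epics and a traditional project.
planned_work = [
    {"team": "platform", "item": "Epic A", "hours": 120},
    {"team": "platform", "item": "Project B", "hours": 60},
    {"team": "mobile", "item": "Epic C", "hours": 80},
]
capacity_hours = {"platform": 160, "mobile": 100}

overloaded = find_overloaded_teams(planned_work, capacity_hours)
print(overloaded)  # {'platform': 20.0}
```

In this example the platform team is planned 20 hours over its capacity, which is the kind of signal a planner would use to defer or reassign work before the period starts.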

Enterprise Agile Planning:

- Supports various Scaled Agile frameworks like SAFe or Scrum of Scrums.

- Enables configuration of different work types and team structures.

Project Workspace Enhancements:

- Improved drag-and-drop functionality and template application.

- Consolidated view of project resources, financials, risks, and issues.

Benchmarking:

- Compares your SPM usage and KPIs against anonymized data from other users.

- Allows filtering by industry and size for a more relevant comparison.

Process Mining:

- Analyzes the flow of work within SPM processes.

- Identifies bottlenecks and opportunities for improvement, particularly in demand management.

- Enables creation of improvement initiatives to streamline processes.
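As a rough illustration of how process mining surfaces a bottleneck, the sketch below takes a small event log of demands moving through stages, computes the average time spent in each stage, and picks the slowest one. It is a simplified stand-in and not how ServiceNow's process mining works internally; the stage names, the event tuple layout, and the `stage_durations` helper are hypothetical.

```python
from collections import defaultdict
from datetime import datetime


def stage_durations(events):
    """Average hours spent in each stage before moving to the next one.

    events: iterable of (case_id, stage, entered_at_iso) tuples, where
    entered_at_iso is an ISO 8601 timestamp string (assumed format).
    """
    by_case = defaultdict(list)
    for case_id, stage, entered_at in events:
        by_case[case_id].append((datetime.fromisoformat(entered_at), stage))

    totals = defaultdict(float)
    counts = defaultdict(int)
    for steps in by_case.values():
        steps.sort()  # order each case's events by timestamp
        # Time in a stage = gap between entering it and entering the next.
        for (start, stage), (end, _next_stage) in zip(steps, steps[1:]):
            totals[stage] += (end - start).total_seconds() / 3600
            counts[stage] += 1

    return {stage: totals[stage] / counts[stage] for stage in totals}


# Illustrative demand-management log with made-up timestamps.
events = [
    ("D1", "submitted", "2024-03-01T09:00"),
    ("D1", "screening", "2024-03-01T17:00"),
    ("D1", "approved", "2024-03-06T17:00"),
    ("D2", "submitted", "2024-03-02T09:00"),
    ("D2", "screening", "2024-03-02T13:00"),
    ("D2", "approved", "2024-03-05T13:00"),
]

durations = stage_durations(events)
bottleneck = max(durations, key=durations.get)
print(durations)   # {'submitted': 6.0, 'screening': 96.0}
print(bottleneck)  # screening
```

Here demands wait an average of 96 hours in screening versus 6 hours after submission, so screening would be the natural target for an improvement initiative.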

Strategic Planning Workspace:

- Provides real-time visibility into program progress against objectives and key results (OKRs).

- Allows automated data collection from various sources across the platform.

Focus on Scaled Agile:

- Throughout the update, the emphasis is on SPM's ability to support Scaled Agile methodologies.

- Features like Enterprise Agile Planning and Scaled Agile work management within requirements demonstrate this focus.

Overall, these updates enhance ServiceNow SPM's capabilities for organizations adopting Scaled Agile frameworks while still catering to traditional project management needs.

Analysis and Key Takeaways from the SPM March 2024 Vision Update

Yoav Boaz repeatedly emphasized ServiceNow's commitment to SPM innovation and its positive impact on customer success. The focus on product value streams and enterprise-wide deployment reflects evolving market demands for more capable strategic portfolio management solutions. The upcoming Gen AI integration promises further advancements in automating tasks and generating insights. The customer success stories showcase SPM's ability to deliver significant cost savings, improved project delivery rates, and better resource allocation, even if the customer portfolio used did tend to be a bit tech-heavy (that's because non-tech companies tend to have less success with sophisticated PPM solutions like this).

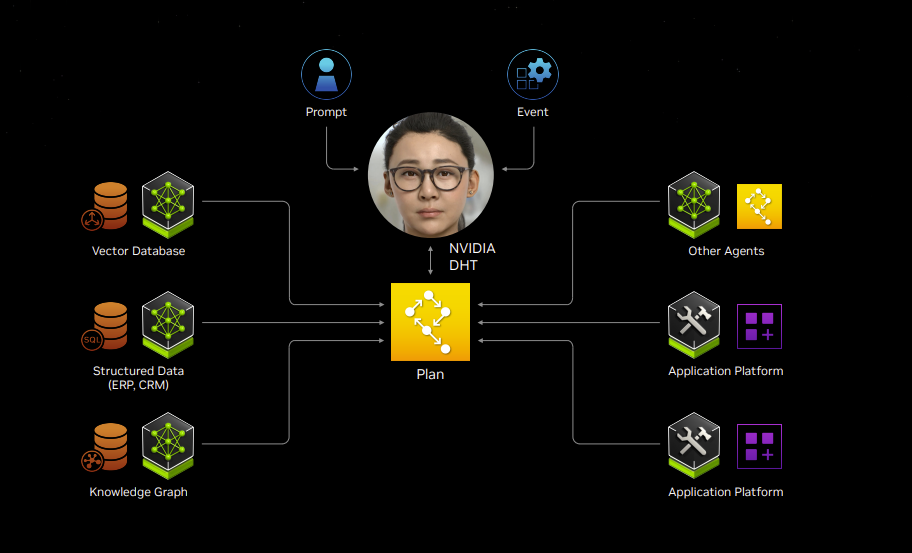

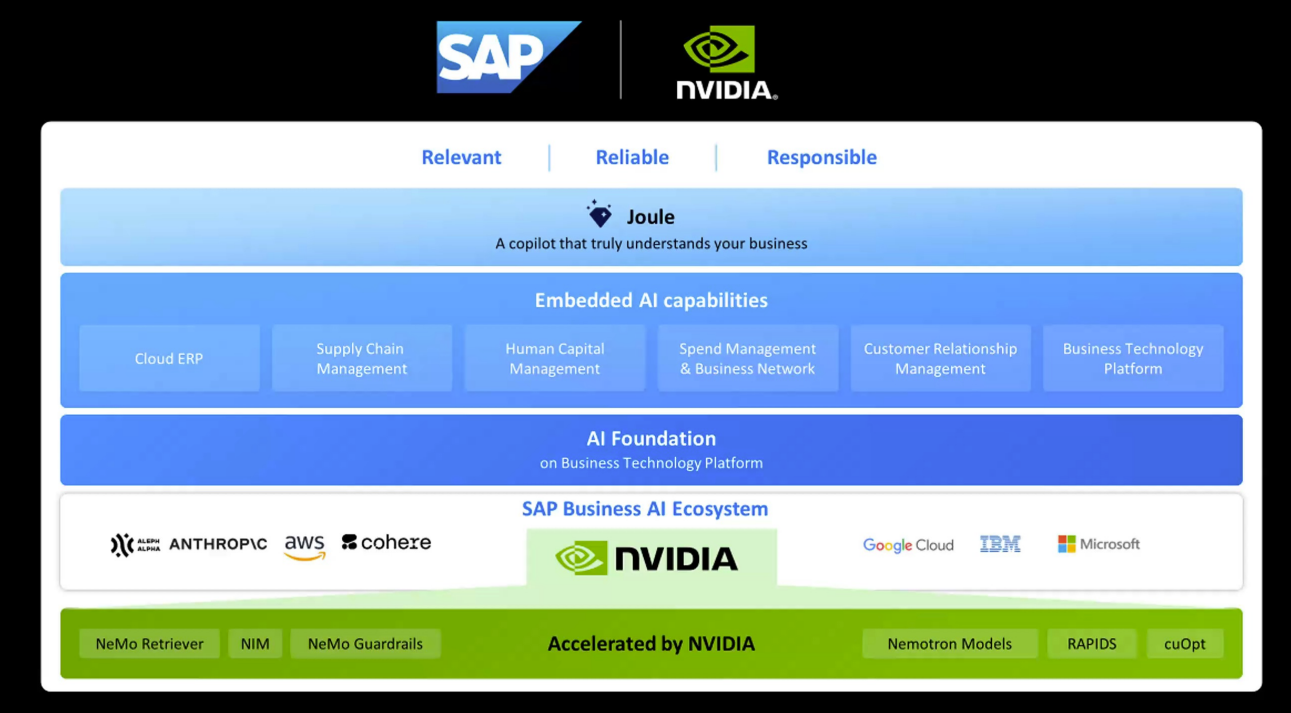

For obvious reasons, the most exciting and potentially transformative addition to SPM is the incorporation of generative AI. This technology holds the potential to automate repetitive tasks associated with portfolio management, such as data collection, feedback synthesis, and project analysis. Generative AI can also produce recommendations, allowing portfolio managers to focus on strategic initiatives and make data-driven decisions with greater efficiency. This not only streamlines the workflow but also helps portfolio managers achieve better investment outcomes.

ServiceNow Intends to Have Generative AI Capabilities for a Wide Variety of SPM Roles

Furthermore, SPM clearly strives to deliver a significantly improved user experience. The interface has been redesigned to be more intuitive and user-friendly. This enhanced usability allows users to navigate features and access critical data effortlessly. Improved user experience fosters broader adoption of SPM across an organization, ensuring a wider range of stakeholders can contribute to the strategic portfolio management process. This fosters a more collaborative and informed approach to investment decisions.

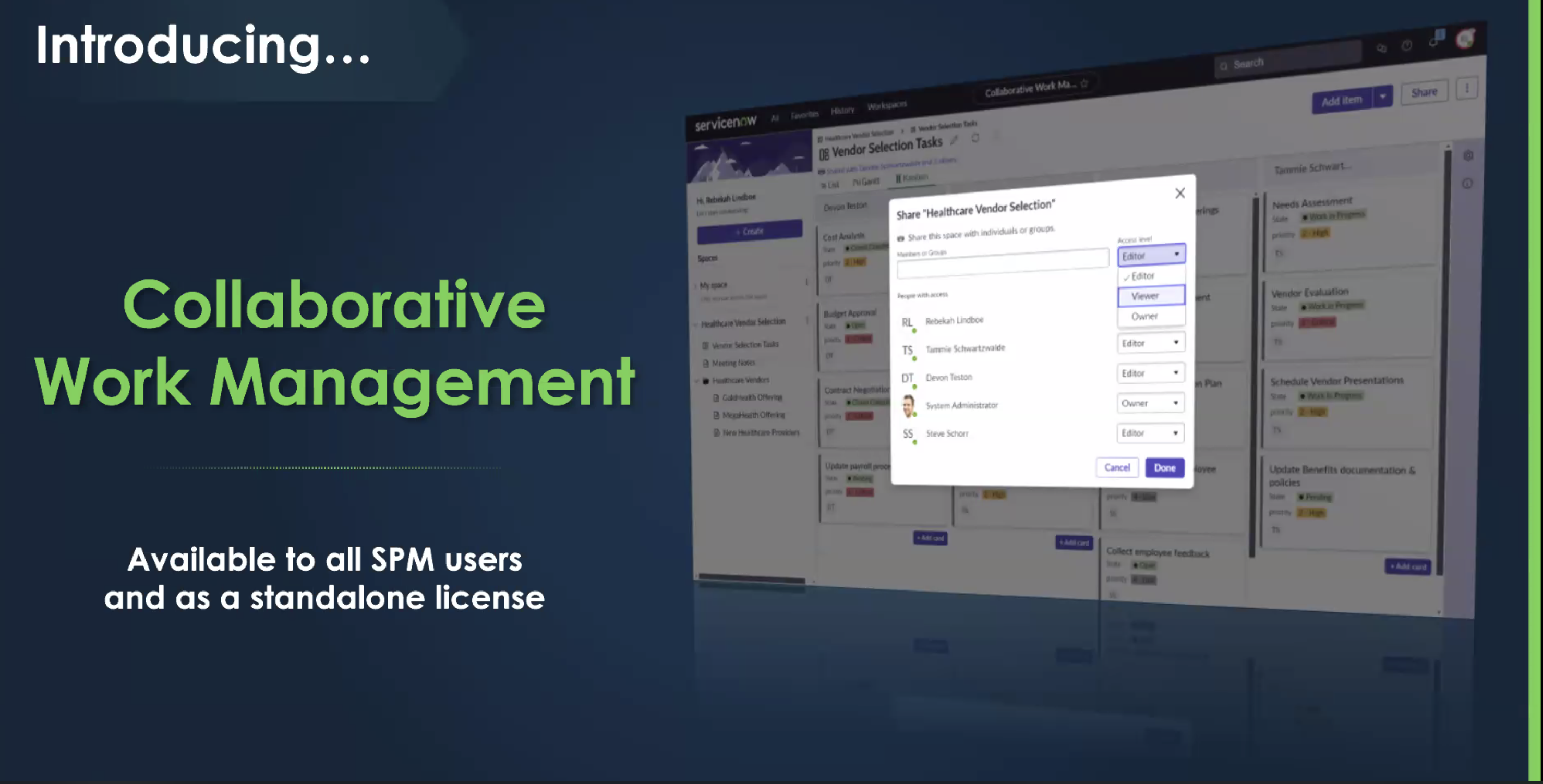

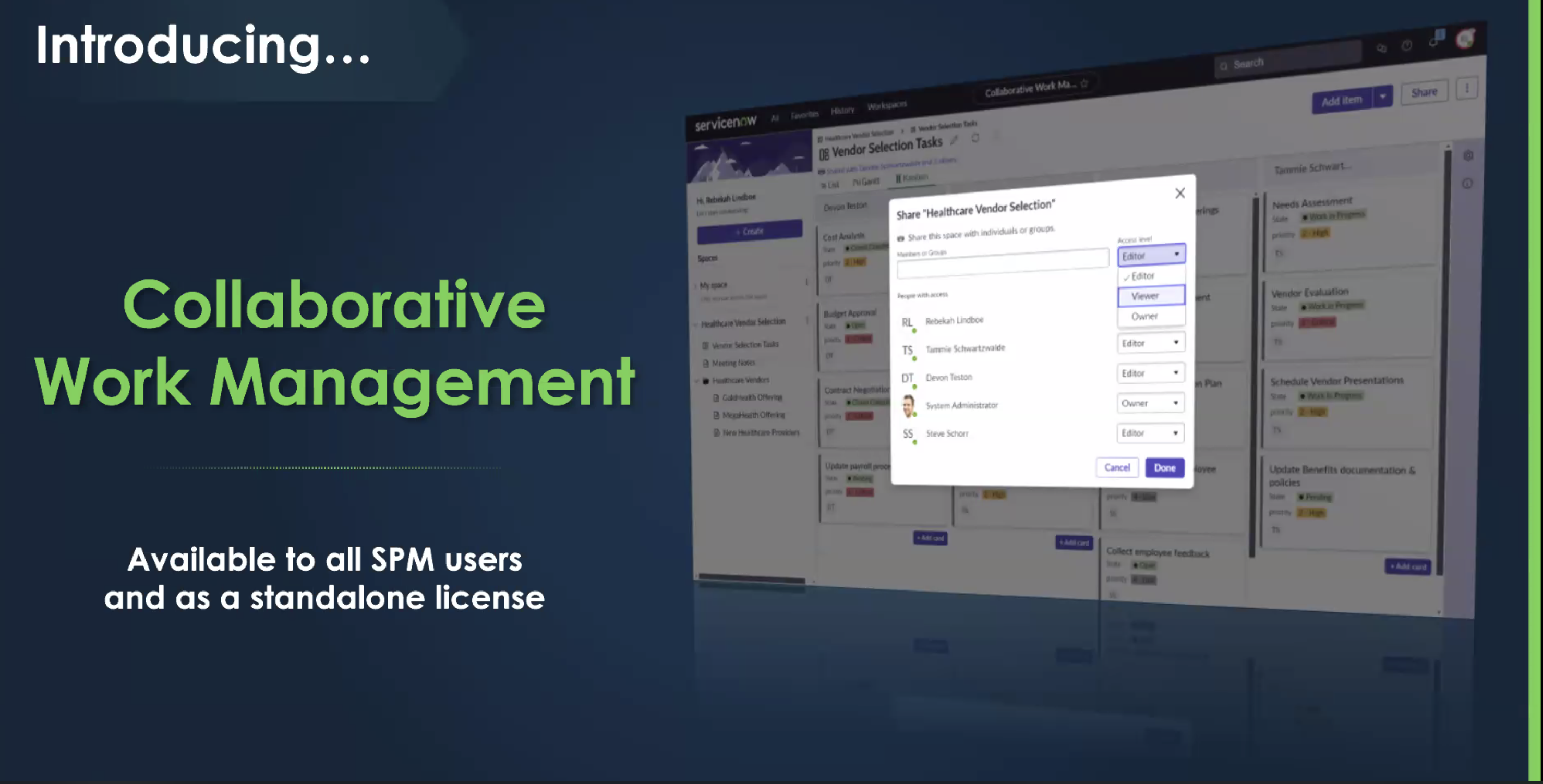

Perhaps the most significant update to SPM, however, is the introduction of new collaborative work management features, which I'll be evaluating soon for potential inclusion in my Work Coordination ShortList. This feature focuses on more detailed tasking while streamlining teamwork, and it facilitates automated information sharing and updates among portfolio managers and stakeholders on the progress of task execution. By enabling real-time collaboration, SPM ensures everyone involved in the decision-making process has access to the latest information and can contribute effectively. This fosters transparency and promotes a more cohesive approach to actually delivering on the details of portfolio management. In the customer stories, we heard about the struggle of integrating solutions like Smartsheet properly with SPM, so now ServiceNow has its own native solution right within the platform. It's also evident that ServiceNow would like to capitalize on the success of this burgeoning product category as a means of actually executing on the details of business and digital transformation.

To Help Deliver On Actually Executing Against Programs/Projects, ServiceNow SPM Adds Work Coordination/CWM

Finally, ServiceNow shared the roadmap for the product, emphasizing a) going well beyond IT in the types of business transformation projects that it can support, b) an "obsession" with SPM value creation, and c) a vital focus on improved ease of use through various innovations, including generative AI.

Key Takeaways As SPM Climbs Into the Apex Position in PPM and Digital Transformation

ServiceNow's Strategic Portfolio Management (SPM) is evolving at a blazing pace: It now offers a truly overarching suite of enterprise-grade capabilities for organizations wishing to master their entire estate of programs and projects, from business to IT. New features like Scaled Agile support, process mining, and real-time OKR tracking showcase its commitment to the details of empowering strategic decision-making. However, the platform's very complexity now presents a growing challenge. The sheer volume of features and capabilities can be overwhelming, potentially hindering user adoption and, ultimately, value creation.

To truly unlock SPM's potential, ServiceNow now needs to prioritize its digital adoption strategy. In my view, this will include fully leveraging its new "Data to Assist" functionality for proactive guidance and a relentless focus on user-friendliness. While advancements like generative AI are promising, ensuring a smooth and intuitive user experience is crucial, and I'm gratified to see this in their strategic roadmap for 2024. Now the challenge will be to achieve a high rate of new feature uptake that delivers on customer outcomes. As SPM seeks to provide a full range of the latest advancements, it must not leave users behind by neglecting adoption of some of its most impactful new capabilities. Striking a balance between cutting-edge features and user accessibility will be paramount to maximizing the value proposition of this powerful platform and ensuring its customers achieve the strategic value creation that the platform so evidently strives for.

