An ode to middle managers

Editor in Chief of Constellation Insights

Constellation Research

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...

It's open season for middle managers across technology and multiple industries, but it remains to be seen how short-sighted this trend ultimately becomes.

Yes folks, this is an ode to middle managers--the people who manage projects, serve as a buffer to executives, and lead teams in smaller batches. Today, we've gone from the COVID-19 era of overhiring to cutting out layers of management. In true American fashion, the pendulum swings all the way to the other side with little to no balance in the middle.

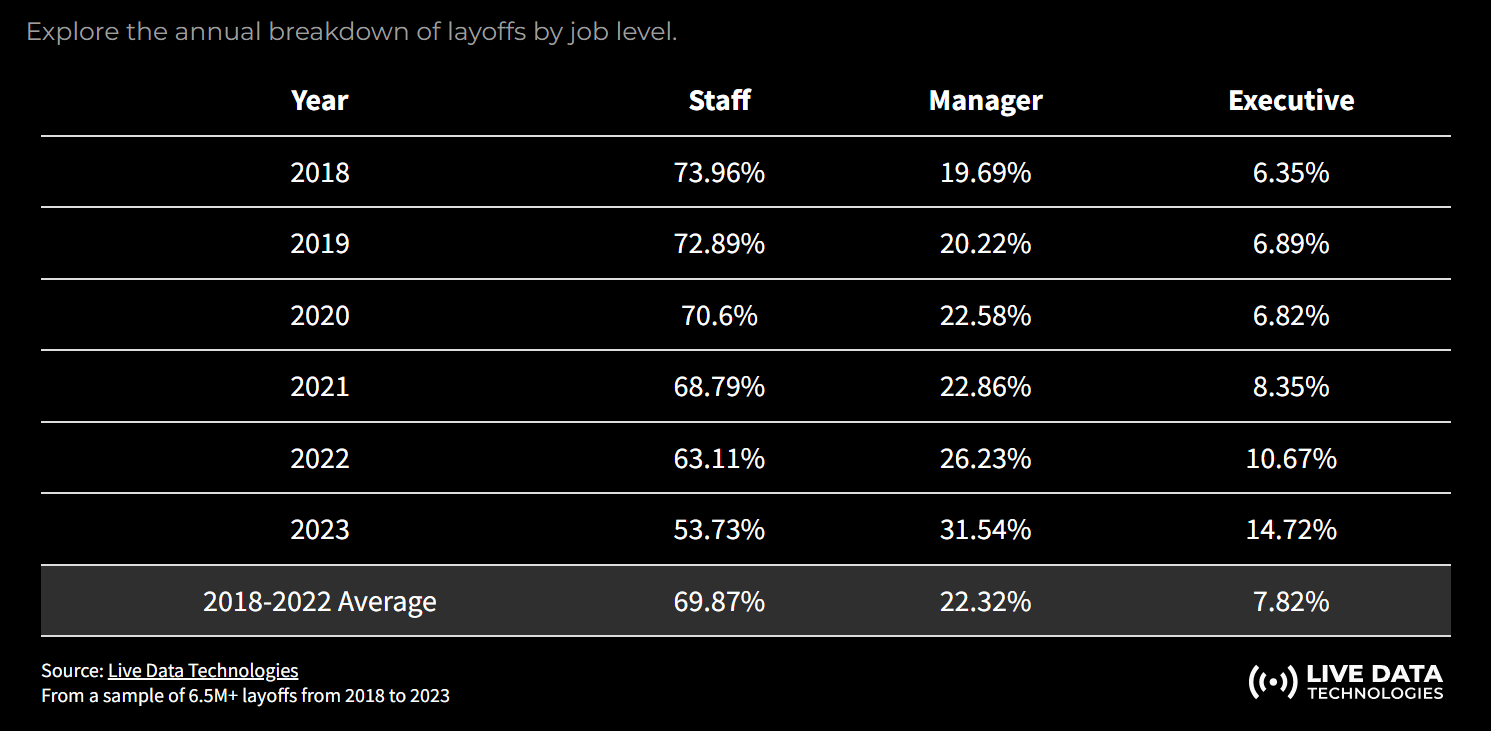

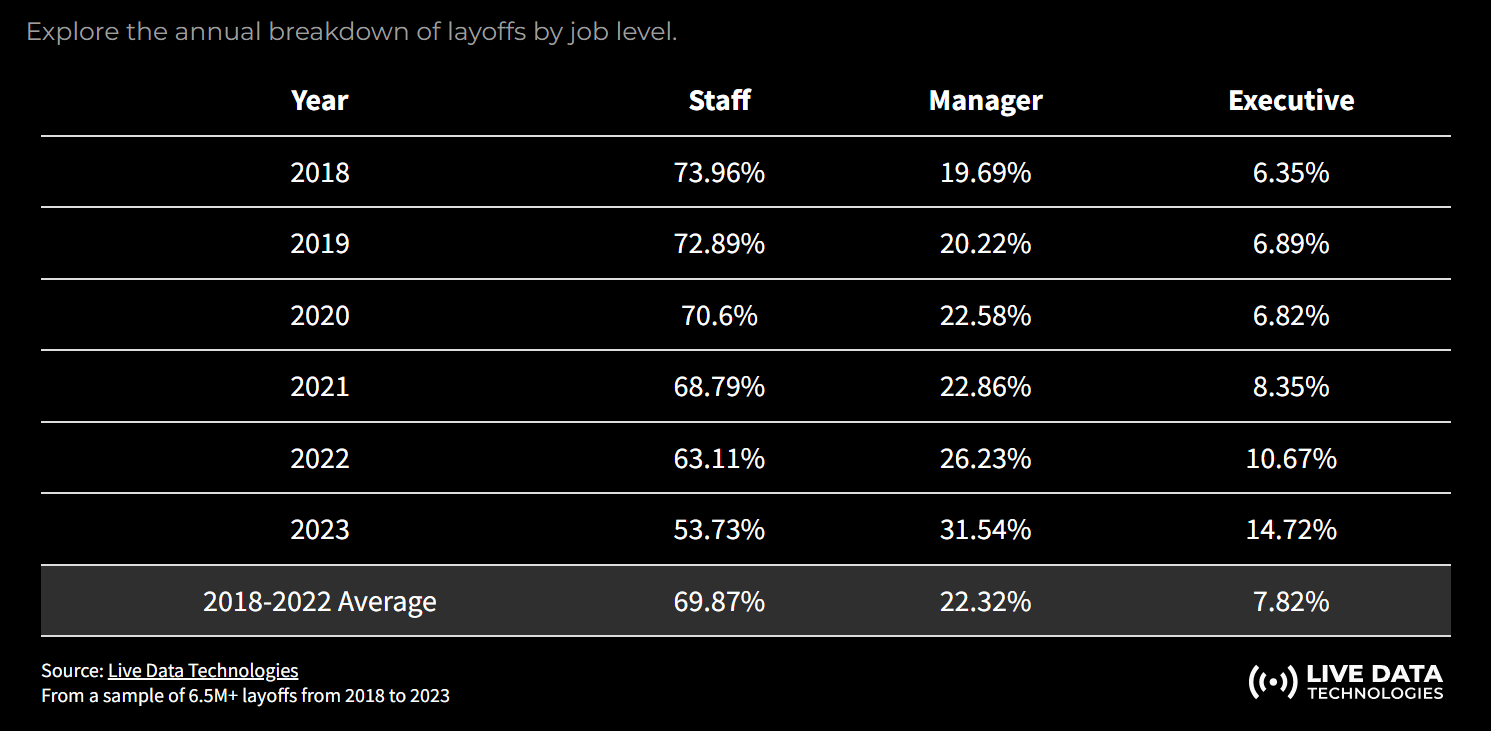

According to Live Data Technologies, manager-level or higher layoffs made up nearly half of all observed layoffs in 2023.

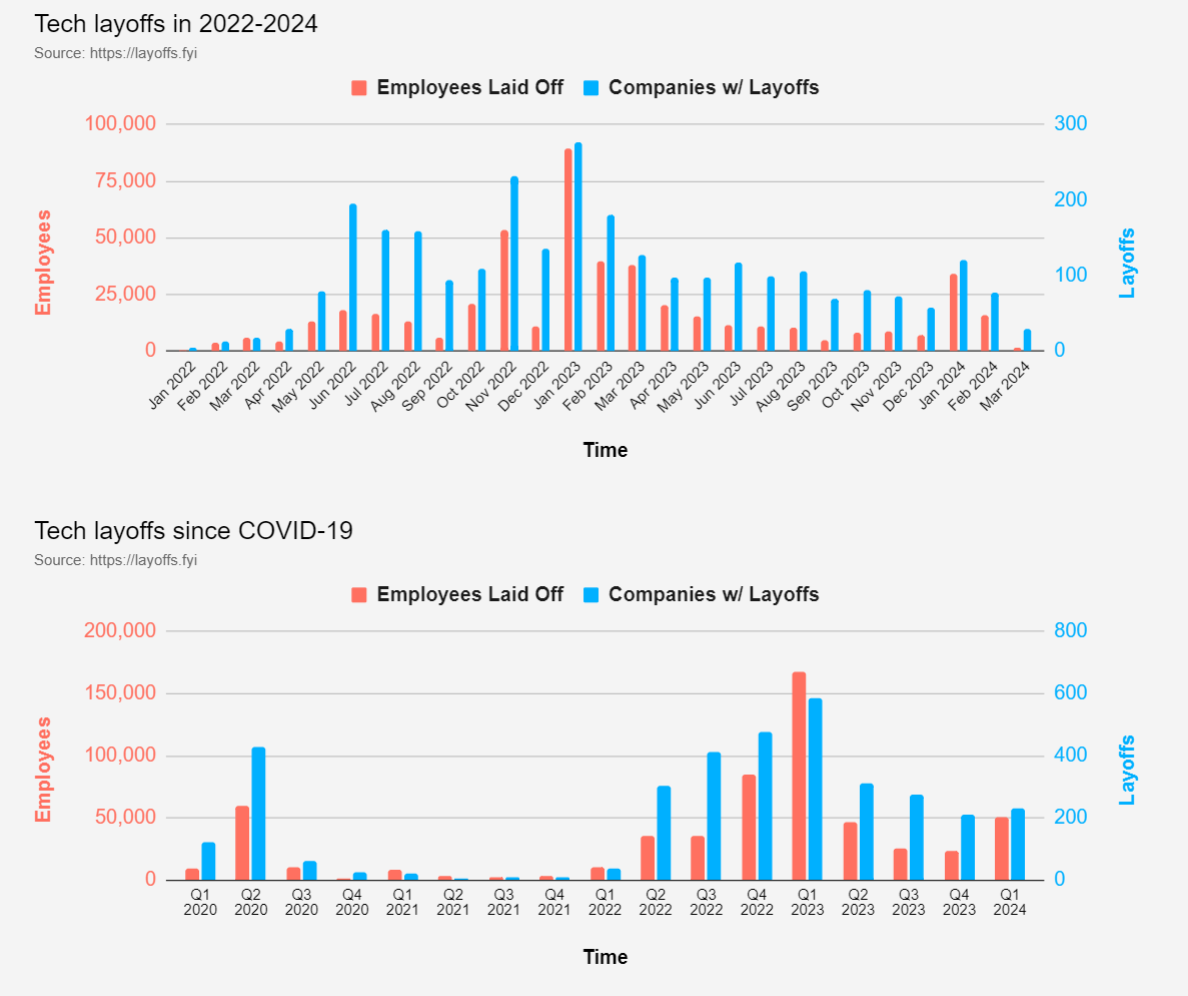

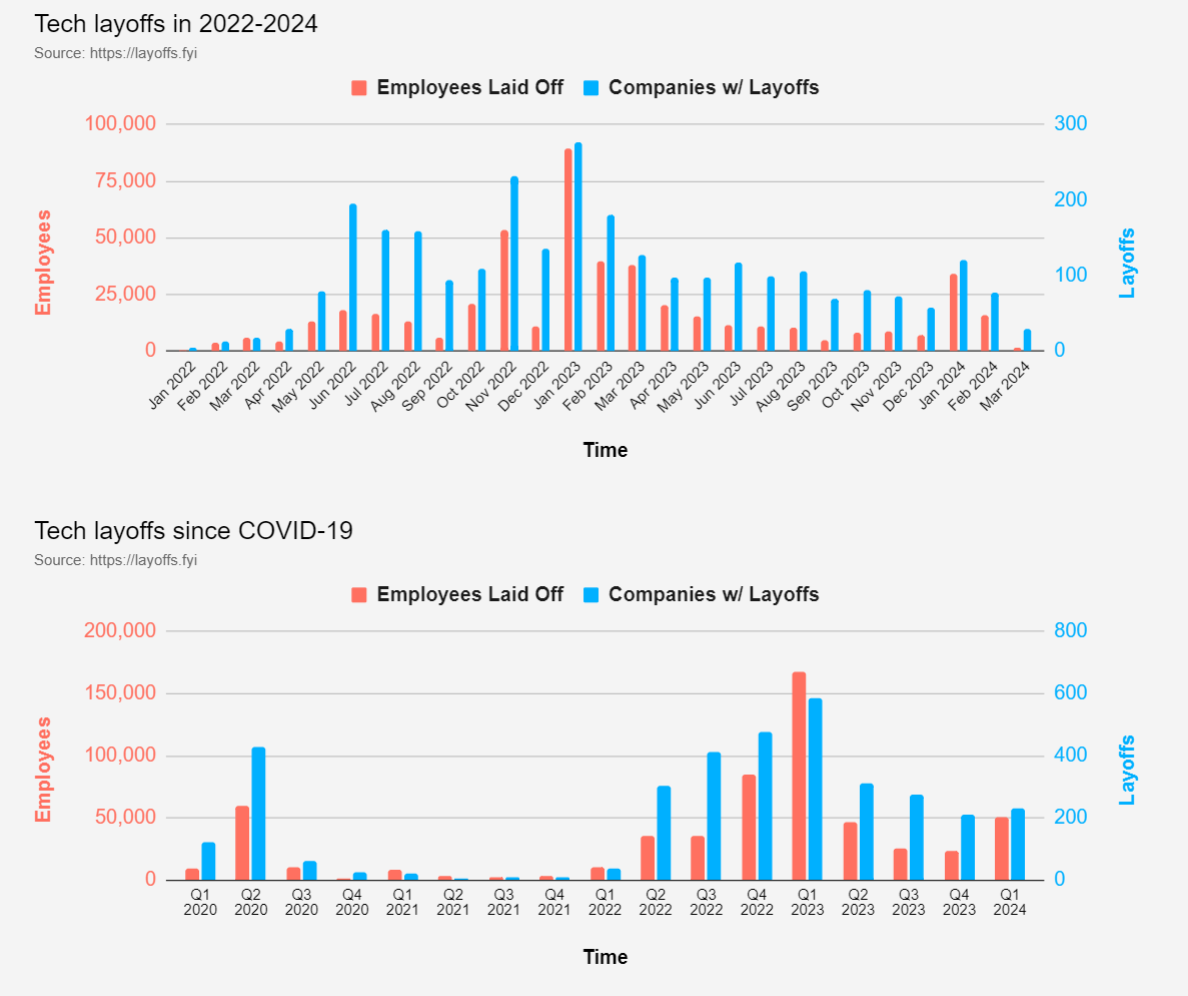

In 2024, the layoff story appears to be similar, according to Layoffs.fyi.

Consider:

- Mark Zuckerberg, CEO of Meta, said on the company's fourth quarter earnings call that he has "come around to thinking that we operate better as a leaner company." Zuckerberg just closed out 2023, dubbed a year of efficiency, and noted that "in 2024, we do have a big recruiting backlog because part of the layoffs that we did included teams basically swapping out certain talent profiles for others." Translation: Engineers and salespeople in. Everyone else out. Meta ended 2023 with 67,317 employees, down 22% from 2022. At the end of 2021, Meta had 71,970 employees, up 23% from 2020.

- Sundar Pichai, CEO of Google parent Alphabet, said "teams are working to focus on key priorities and execute fast, removing layers and simplifying their organizational structures."

- UPS CEO Carol Tomé said the company is going to fit its organization to focus on "what's wildly important." UPS is cutting 12,000 positions to save $1 billion, and most of those reductions are managers.

- Citi CEO Jane Fraser said the company will cut more managers to simplify the org structure and save more than $1 billion. Citi restructurings aren't exactly a new trend since its core competency appears to be adding management layers and penning restructuring announcements later.

I realize it's a bit contrarian to defend middle managers--especially when one of my recent reads was Bullshit Jobs: A Theory--but there is going to be some fallout to this middle management purge.

This post first appeared in the Constellation Insight newsletter, which features bespoke content weekly and is brought to you by Hitachi Vantara.

Here are some of the ramifications from the middle manager cuts.

Morale. The UKG Workforce Institute found that 86% of managers report feeling burnout. That burnout rate is higher than that of employees and CXOs. However, 75% of employees feel that their manager cares for and has empathy for them.

You can't tell me that a super flat organization where 200 people report to one executive who doesn't have time for them is going to boost morale.

Decline of project management. Many of these middle manager types getting cut happen to be project managers. Simply put, project managers have a tough gig. They're caught in the middle between the technology and business sides of an enterprise. These project managers are more human and happen to be bilingual in tech and business. Over time, enterprises are going to realize that you need a human in the middle of the Venn diagram to get things done.

Customer success teams at your friendly neighborhood vendor will suffer. Anecdotally, enterprise vendors have been cutting customer success managers who aren't technical or salespeople. These customer success structures have always been a bit wonky. One setup could have a technical lead, a sales-ish type person focused on success (and a never-ending upsell cycle) and a manager who is more neutral and may want the customer to actually succeed. Guess what happened? The relationship person was cut (no credit for revenue and/or code). Enterprise customers have been asking where their core contacts went over the last 18 months. It's quite possible that customers are stuck with a tech-oriented contact with few people skills and another who is focused on relationship building with a heavy dose of upsell.

SaaS companies are going to lose seats. Live Data Technologies also had a post on the shrinking total addressable market for SaaS companies. Guess who SaaS users were? Yep, those managers who were cut over the last year and change.

A few companies will realize that the talent that has been purged can be very useful in the right cases. AuthID CFO Ed Sellitto said on the company's recent earnings call that it is building out its customer success team. "This team is laser focused on deploying and refining proven customer implementation methodology to help customers meet projected go live dates, establish full-service rollouts across contracted use cases and meet and ultimately exceed their minimum contracted commitments," said Sellitto.

Enterprises could quantify middle managers. If project managers and folks who run teams can be credited for some slice of the overall revenue pie, the economics may look dramatically different. To date, the sales department runs off with the quantification (as if that person sold everything with no support whatsoever) and middle managers look like overhead. The person who keeps the product launch running on time is taken for granted. Maybe a slice of revenue attribution for the supporting cast is warranted.

The problem for managers is the same as it was in the 1980s: It's hard to quantify middle managers. In the age of AI, soft metrics aren't going to fly.

Executives are going to feel all the burnout middle managers faced. Middle managers were facing extreme burnout. Well, now that the middle tier is gone, all those gripes, employee morale issues and the balancing act between KPIs and reality will hit higher-level executives. Once those execs in flattened orgs feel the pain, look for enterprises to start adding a layer or two back. The harsh reality is someone must absorb the blows, and executives are going to find it difficult to yell at the algorithms that replaced the human managers who were just cut.

What the large language models think of middle managers

Given I was going to take the unlikely position of supporting middle management, it made sense to ask generative AI--the technology that'll replace these people--what it thought. Here's a look at what Gemini and ChatGPT gave me when I asked for an ode to the middle manager.

Gemini:

Oh, middle manager, you unsung hero,

You keep the wheels of industry turning.

You bridge the gap between upper management,

And the front-line workers who do the earning.

You're responsible for implementing strategies,

And making sure that work plans are followed.

You're also responsible for the execution,

Of your own and your team’s work.

You're often beholden to others' expectations,

Deadlines, and schedules.

But you always try your best,

To make sure that the job gets done.

You're the glue that holds the organization together,

And you deserve to be recognized for your hard work.

So, here's to you, middle manager,

We appreciate all that you do.

And the ever-wordy ChatGPT:

Ode to the Middle Manager, steadfast and true,

Keeper of balance, in skies often not blue.

In the realm of the cubicles, amidst fluorescent light,

You stand as a beacon, a guide through the night.

With a coffee in one hand, reports in the other,

You navigate the waters, a skilled helmsman and brother.

Between the titans above and the sea below,

You chart the course, steady and slow.

In meetings, you're the diplomat, the voice of reason,

Bridging gaps, through every season.

Your inbox, a testament to battles fought,

Each email is a volley, each decision a thought.

You translate the visions, from lofty to real,

Into actionable plans, with zeal you seal.

The dreams of the high, to the hands of the low,

Through you, they take shape, and begin to grow.

You're the unsung hero, in the corporate fray,

Juggling demands, come what may.

The mentor, the coach, on the office floor,

Guiding your team, to strive for more.

When the storm clouds gather, and morale is low,

You're the first to arrive, and the last to go.

With a joke, a smile, or a listening ear,

You dispel the shadows and bring back the cheer.

For the middle manager, no song is sung,

No praises shouted; no bells are rung.

But know this truth, as clear as day,

Without you, the ship would lose its way.

So, here's to you, in your unassuming might,

The linchpin that holds, the day and night.

In the heart of the maze, you find the path,

Middle manager, you deserve our gratitude and laughter.
