Workday layers generative AI features throughout HCM, Adaptive Planning

Workday launched a series of HCM and Adaptive Planning updates along with generative AI tools for enterprises, managers and developers.

Workday's generative AI rollout and updates across its finance and human resources applications come as ERP vendors are racing to add features that streamline work, bolster productivity and deliver real-time insights. Rivals SAP and Oracle recently outlined generative AI updates across applications.

The company outlined the updates, strategy and approach to generative AI at its Workday Rising annual customer conference.

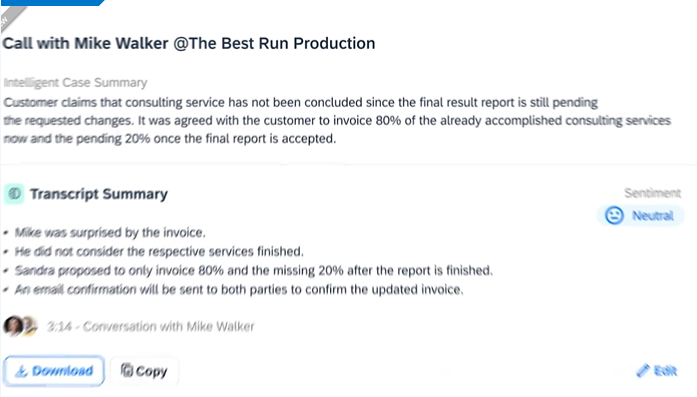

Executives reiterated that Workday's approach to generative AI is to leverage its own models, which are based on 625 billion transactions processed on its platform. Workday said it will use generative AI to create job descriptions on the fly, analyze and correct contracts to bolster revenue recognition, create knowledge management articles and streamline collections. It will also embed text-to-code models in Workday App Builder. Additional generative AI capabilities will be deployed throughout the platform. Workday has said it plans to "offer generous usage based entitlements" for customers that opt in to generative AI functionality. That approach could resonate with enterprise buyers, who are about to get hit with a bevy of generative AI upsells and add-ons.

Aneel Bhusri, Co-Founder and Co-CEO of Workday, said during a keynote that the company has more than 10,000 customers. He outlined Workday's AI strategy. "AI is treated like any other feature in Workday. Turn it on and it's ready to go," he said.

Workday is also focused on clean data and trust. "Transparency and understanding how the models are used mitigates risk," said Bhusri.

Here's a look at the Workday Rising news:

- Customers with Workday Human Capital Management (HCM) and Adaptive Planning will have a user interface that delivers workforce planning across finance and HR. The interface within Workday HCM will enable workforce planners to create and update positions and have those changes reflected in financial and headcount planning in Adaptive Planning. Workday Adaptive Planning will also have an automated headcount reconciliation process down to the position level. All changes can be viewed through a cost impact lens.

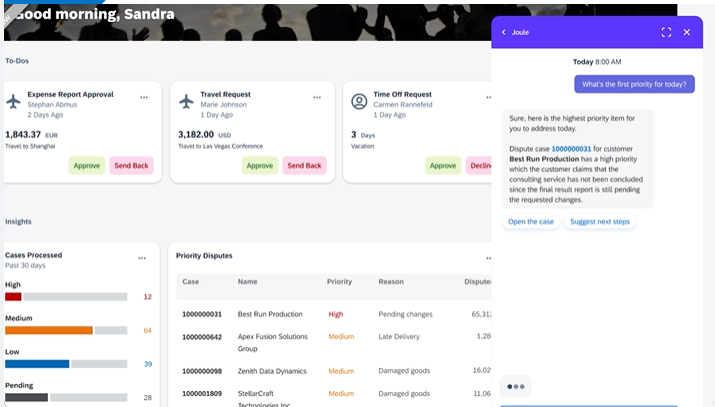

- Workday said Manager Insights Hub is available within Workday HCM. Manager Insights Hub uses AI and machine learning to give managers personalized recommendations for employee opportunities based on skills and interests. Workday HCM will also get Flex Teams, which enables managers to identify talent and assemble teams using Workday Skills Cloud.

- In addition to generative AI features to hone financial and work processes, Workday launched Workday AI Gateway within its Workday Extend program to provide developers with tools to build apps on Workday AI. Workday Extend was also updated with a no-code and low-code toolset and with machine learning and AI services for skills analysis, sentiment, document intelligence and forecasts. Developers will also be able to access multiple AWS AI services within Workday Extend. The company said Workday Extend Essentials and Workday Extend Professional will be available in late 2023, with AWS AI services available to Workday Extend Professional in the first half of 2024.

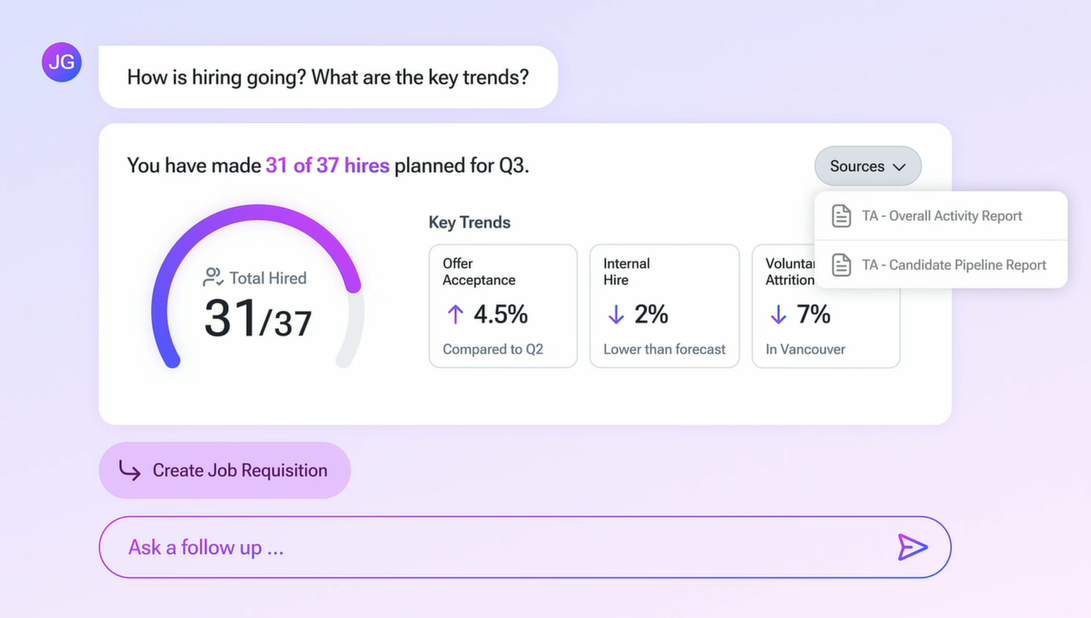

- Workday Adaptive Planning will have a new user experience based on generative AI and natural language queries. Officials said Workday Adaptive Planning users will be able to surface data, find contextually relevant insights and get recommended actions using conversational text. Workday Adaptive Planning will also get new tools for what-if scenarios, automated communication and report scheduling, performance upgrades for dashboards and machine learning-enabled forecasts.

- Workday also unveiled the Workday AI Marketplace, a curated set of partners with AI models and applications, launching in the second quarter of 2024. Accenture, Amazon, Vertex and others are early partners.

Most of these features will begin rolling out to customers within the next 6 to 12 months.

Workday also previewed some interface concepts based on generative AI queries with a focus on "simple and smart experiences."