Lead times for generative AI systems extending into 2024

Lead times for systems that run generative AI and other AI workloads are now so long that infrastructure ordered today may not be installed until spring 2024.

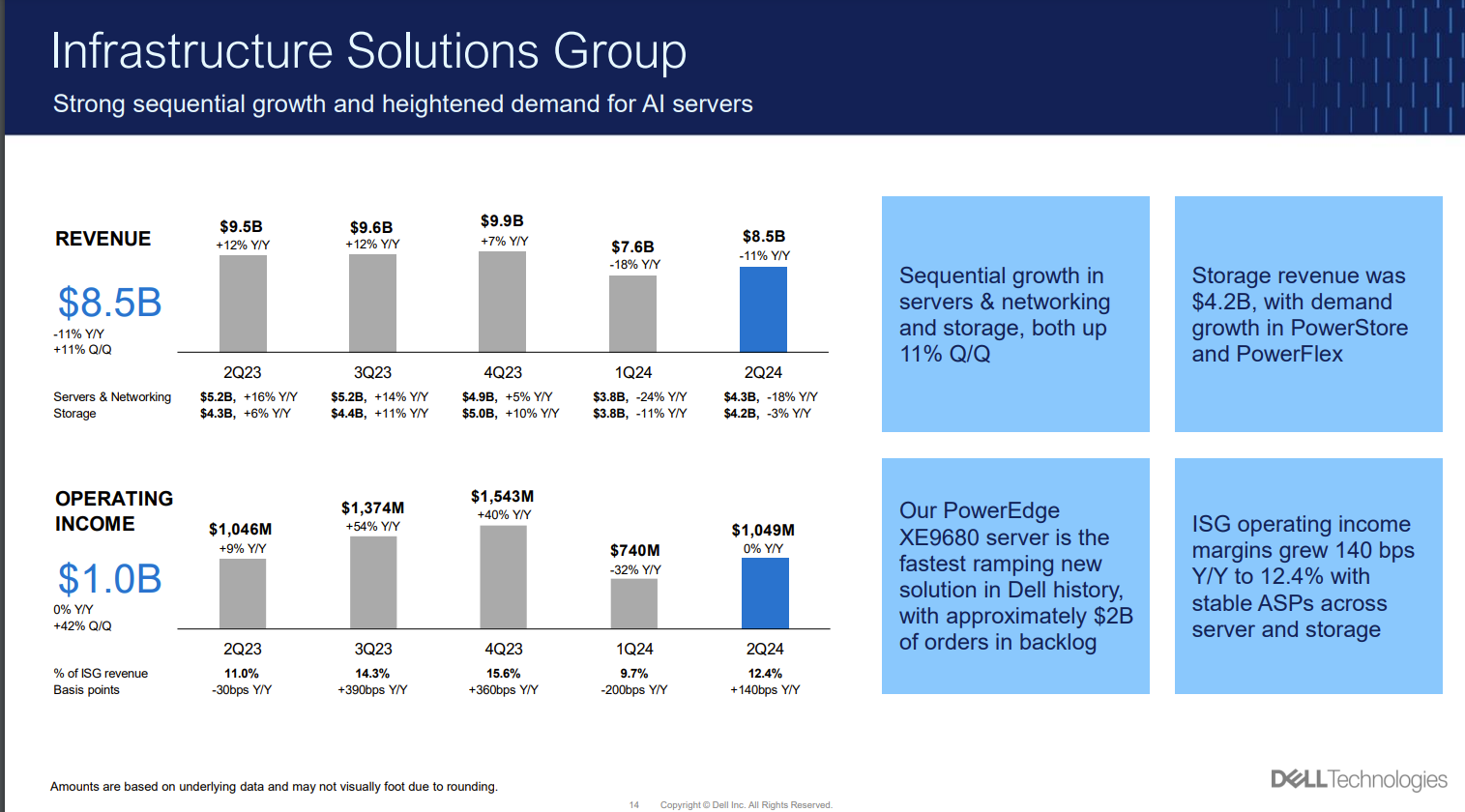

That's the takeaway from a bevy of earnings conference calls from infrastructure providers. Dell Technologies reported better-than-expected second-quarter results and noted that its PowerEdge XE9680 GPU-enabled server is the fastest-ramping product it has launched.

Dell's problem: Orders are off the charts, but parts--namely GPUs--are hard to come by even though the company has a strong partnership with Nvidia. Dell Chief Operating Officer Jeff Clarke said the company has about $2 billion of XE9680 orders in backlog and a sales pipeline that is larger still.

Yes, generative AI investment is taking off. But the revolution--and the on-premises enterprise use cases that come with it--will be delayed by the imbalance between supply and demand. Clarke later put a number on the backlog issue:

"Maybe the easiest measure to determine where we are with supply is demand is way ahead of supply. If you order a product today, it's a 39-week lead time, which would be delivered the last week of May of next year. So, we are certainly asking for more parts, working to get more parts. It's what we do. I'm not the allocator, I'm the allocatee. We’re advocating our position on our demand. Again, we are winning business signaled by the $2 billion in backlog today with a pipeline that's significantly bigger. I was in the discussion yesterday with two different customers about AI, the day before about AI. It is constantly something that's coming into our business that we're fielding the opportunities. From different cloud providers to folks building AI as a service to enterprises now beginning to do proof of concept and trying to figure out how they do exactly what I just said earlier, use their data on-premises to actually drive AI to improve their business."
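
Clarke's arithmetic is easy to check. The sketch below simply adds a 39-week lead time to an order date. The order date of August 31, 2023 is an assumption chosen for illustration (the article does not state one), and `estimated_delivery` is a hypothetical helper, not anything Dell publishes.

```python
from datetime import date, timedelta

# Assumption: a hypothetical order date; the article does not give one.
ORDER_DATE = date(2023, 8, 31)
# Lead time quoted by Clarke on the earnings call.
LEAD_TIME_WEEKS = 39


def estimated_delivery(order_date: date, lead_time_weeks: int) -> date:
    """Shift the order date forward by the lead time, in whole weeks."""
    return order_date + timedelta(weeks=lead_time_weeks)


delivery = estimated_delivery(ORDER_DATE, LEAD_TIME_WEEKS)
print(delivery.isoformat())  # 2024-05-30
```

Under that assumed order date, the result lands in the last week of May 2024, which lines up with the timing Clarke described.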

A few notable takeaways from Clarke's comments:

- Nvidia has big investments in its supply chain and is moving GPUs at a rapid clip. Hyperscale cloud providers are gobbling up systems.

- Enterprises are looking to train models on-premises too. That migration rhymes with how enterprises are re-evaluating public cloud workloads.

- Supply will improve as Nvidia rivals come online with GPUs and accelerators.

- AI workloads will reshape data center demand.

To that end, Clarke added that supply will catch up.

"We're tracking at least 30 different accelerator chips that are in the pipeline in development that are coming. So, there are many people that see the opportunity. Some of these new technologies are fairly exciting from neuromorphic type of processors to types of accelerators there's a series of new technologies and quite frankly, new algorithms that we think open up the marketplace and we'll obviously be watching that and driving that across our businesses and helping customers."

AMD is a likely beneficiary with its fourth-quarter ramp of next-generation GPUs.

This GPU supply issue is also affecting other infrastructure players.

HPE CEO Antonio Neri said on the company's latest earnings call:

"We start now shipping some of those orders, those wins, but it's a long way to go. And remember that there's two components related to that. Number one is availability of supply, which obviously in the AI space is constrained. Number two is the fact that when you deploy these deals, you have to install it and then drive acceptances, which means elongated times for revenue recognition. And then maybe in a specific win or two, there are other conditions related to the contractual agreements."

Neri added that he's pleased with the quality of AI deals the company is making. HPE, like Dell, is building a portfolio of gear that spans AI training, tuning and inferencing for enterprises looking at domain-specific models.

Broadcom CEO Hock Tan said AI systems take time and supply issues are going to impact a bevy of infrastructure players. "These products for generative AI take long lead times," said Tan. "We're trying to supply, like everybody else wants to have, within lead times. You have constraints. And we're trying to work through the constraints, but it's a lot of constraints. And you'll never change as long as demand, orders flow in shorter than the lead time needed for production."

According to Intel CEO Pat Gelsinger, who spoke at an investor conference last week, competitors will start grabbing some of that GPU demand. Intel's entry in the GPU and AI accelerator race is Gaudi, a line of AI accelerators built on its 2019 acquisition of Habana.

Gelsinger said Nvidia "has a great leadership position," but "there's a bit of false economy there." He said there's huge demand, high prices and supply chain constraints for Nvidia GPUs but that won't last forever. "Lots of people are showing up, including us, to compete," said Gelsinger. "We've seen a rapid expansion of our Gaudi pipeline. We're building our supply chains to get much larger for our footprint there as we start competing as well as others will."

Intel's argument is that both GPUs and CPUs will be used for AI models and that enterprises will weigh pricing against returns. "There is a bit of euphoria so overall I expect to see more moderation," Gelsinger said. "We're going to be competing more for the GPU and accelerator, but we also see workloads driving energy that will create opportunities for our CPU offerings as well."
