CoreWeave's great AI infrastructure race

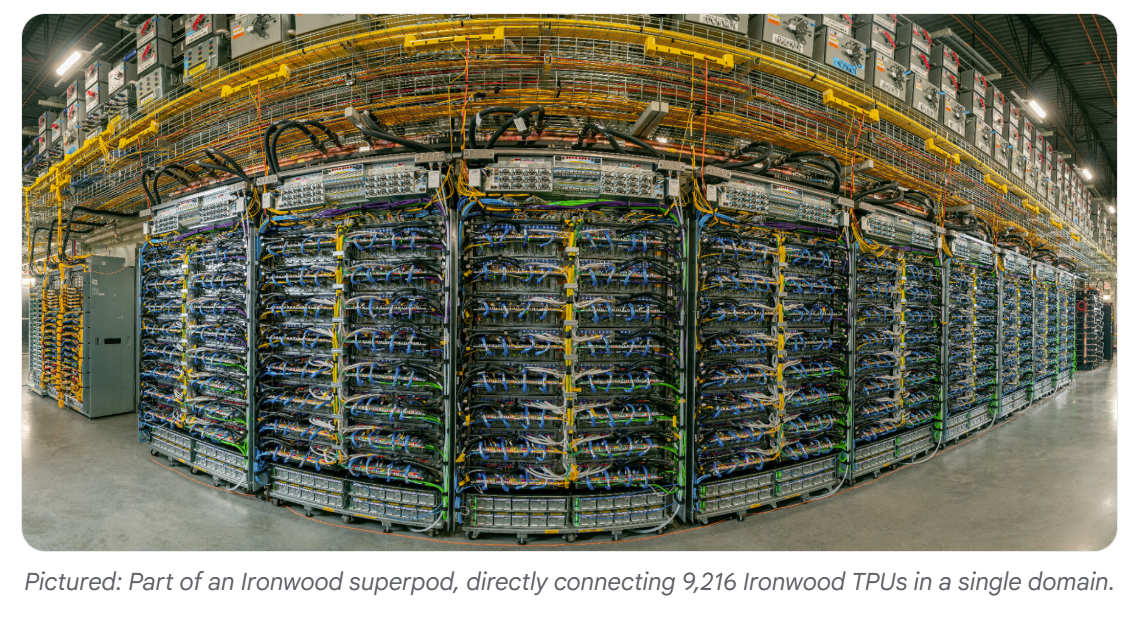

CoreWeave said it is dealing with ongoing supply chain issues as demand far exceeds capacity, and that revenue expected in the fourth quarter will slip into the first quarter. Nevertheless, CoreWeave's bet is that self-building its AI infrastructure will be a winning strategy in the future.

Michael Intrator, CEO of CoreWeave, said on the company's third quarter earnings call that there are multiple delays but the biggest issue is at the powered-shell level. Powered shell refers to a facility where the power and exterior are completed, but the interior isn't finished.

"There's plenty of power right now, and we believe that there will be ample power for the next couple of years. But really the challenge is the powered shell," said Intrator.

CoreWeave's third quarter had a bevy of moving parts to consider and also reflected emerging skepticism about capital expenditures for AI infrastructure. Although AWS, Alphabet and Microsoft all said capital spending would continue to surge for AI infrastructure, Wall Street openly questioned Meta's plans. In Meta's case, it could simply be a case of metaverse traumatic stress disorder, but the focus of AI spending is turning to returns.

Consider the following CoreWeave milestones amid a frenetic pace of scaling:

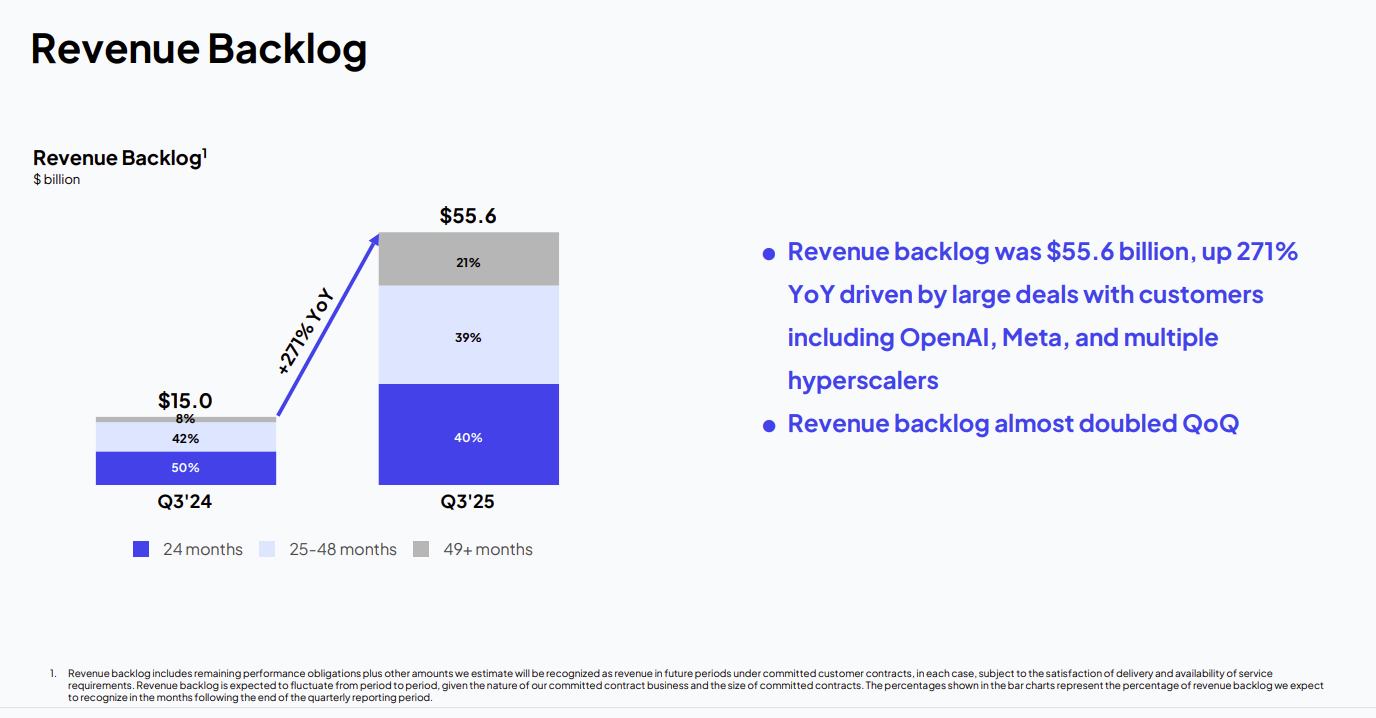

- In the third quarter, CoreWeave had revenue of $1.4 billion, up 134%.

- Revenue backlog at the end of the third quarter was $55 billion.

- CoreWeave will deliver more than 1 gigawatt of contracted capacity to customers within the next 12 to 24 months.

- The company landed third quarter compute contracts with Meta and OpenAI.

- A planned merger with Core Scientific is officially off and Intrator said the price was simply too high.

- CoreWeave is diversifying its stack with the acquisition of OpenPipe, which is a platform for training AI agents, and Marimo, a developer workflow company. CoreWeave also acquired Monolith for an industrial AI play.

- The company launched a unit to land US government customers and added Jon Jones, an AWS alum, as its first chief revenue officer.

- CoreWeave also launched AI Object Storage, which optimizes the storage layer for AI workloads. CoreWeave's storage platform has topped $100 million in annual recurring revenue.

However, CoreWeave's buildout comes at a price. In the third quarter, CoreWeave delivered a net loss of $110.1 million, an improvement on the $360 million net loss a year ago. Net interest expense in the third quarter was $310.55 million, up from $104.4 million a year ago. Operating income margin was 4% compared to 20% a year ago.

CoreWeave is obviously betting that if it builds the infrastructure customers will come. "AI adoption is progressing beyond the frontier AI labs and hyperscalers. Broader global demand and our recent large wins are driving diversification of our revenue base," said Intrator, who noted customer wins including CrowdStrike, Rakuten and NASA.

Jones, who was the head of startups and venture capital at AWS, will look to add AI natives that will grow with CoreWeave.

No CoreWeave customer in the third quarter represented more than 35% of the company's revenue backlog. The customer base is still concentrated, but well below the 85% level at the start of 2025. Sixty percent of CoreWeave's revenue backlog is with investment grade customers.

The race

In many ways, CoreWeave symbolizes much of the AI infrastructure market in that there's a race between investor patience and scaling amid fears that overcapacity may loom.

Intrator said supply chain issues may be a risk. "While we are experiencing relentless demand for our platform, data center developers across the industry are also enduring unprecedented pressure across supply chains. In our case, we are affected by temporary delays related to a third-party data center developer who is behind schedule. This impacts fourth quarter expectations," he said.

The customer affected by the current delays agreed to adjust the delivery schedule and extend the expiration date.

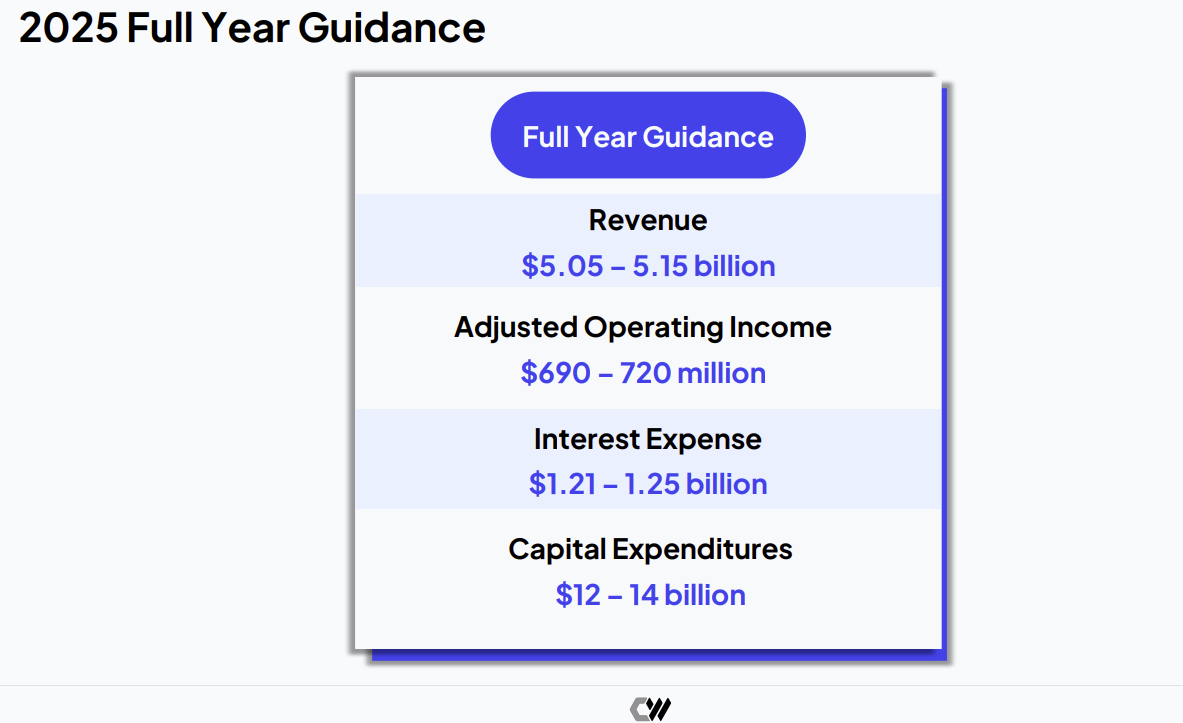

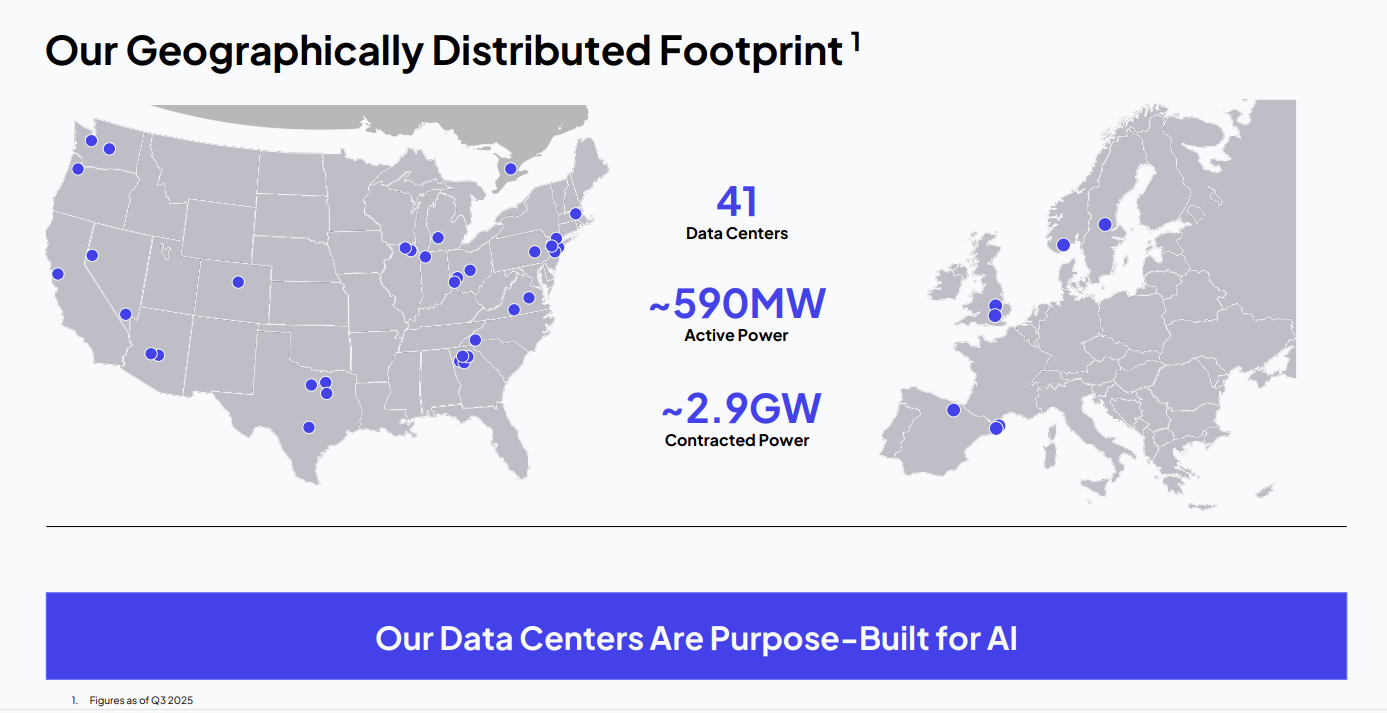

CoreWeave said 2025 revenue will be between $5.05 billion and $5.15 billion, with adjusted operating income of $690 million to $720 million and more than 850 megawatts of active power.

Nitin Agrawal, CoreWeave CFO, said 2025 capital expenditures will be between $12 billion and $14 billion and the 2026 figure will more than double. Interest expense in 2025 will range from $1.21 billion to $1.25 billion.

Agrawal said:

"In Q4, we will be bringing online some of the largest scale deployment in our company's history. This will have a near-term impact on adjusted operating margin due to the timing difference between when data center costs are first incurred and when we start recognizing revenue.

We expect 2025 interest expense in the range of $1.21 billion to $1.25 billion, driven by increased debt to support our demand-led CapEx growth, partly offset by an increasingly lower cost of capital."
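To put the 2025 guidance in proportion, here is a minimal sketch that compares capital expenditures and interest expense with revenue at the midpoints of the ranges quoted above. The midpoint comparison and the names `GUIDANCE_2025`, `midpoint` and `ratio_to_revenue` are illustrative assumptions, not figures or methods from CoreWeave.

```python
# Guidance ranges in billions of US dollars, as quoted in the article.
# Comparing midpoints is an illustrative assumption, not a company metric.
GUIDANCE_2025 = {
    "revenue": (5.05, 5.15),
    "capex": (12.0, 14.0),
    "interest_expense": (1.21, 1.25),
}


def midpoint(low, high):
    """Return the midpoint of a guidance range."""
    return (low + high) / 2


def ratio_to_revenue(metric, guidance=GUIDANCE_2025):
    """Return the midpoint of `metric` divided by the midpoint of revenue."""
    return midpoint(*guidance[metric]) / midpoint(*guidance["revenue"])


if __name__ == "__main__":
    for metric in ("capex", "interest_expense"):
        ratio = ratio_to_revenue(metric)
        print(f"{metric}: {ratio:.2f}x of revenue at guidance midpoints")
```

At the midpoints, capital expenditures come to roughly 2.5 times revenue, and interest expense to about 24% of revenue.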

Intrator was asked about CoreWeave's strategy to self-build infrastructure. He said CoreWeave has diversified its providers and that the ability to self-build data centers makes it a larger player in the supply chain. Intrator added that CoreWeave does work with third-party data center providers, but self-building is "about derisking delivery across the broader portfolio."

"We just look at self-build as an additional piece of the puzzle. It puts us closer to the physical infrastructure. It embeds us deeper into the supply chain around the world so that we have firsthand information," said Intrator. "We just think that you need to be on both sides of this fence in order to be as effective as you can be derisking what is a complicated supply chain environment."

Add it up and CoreWeave is going to be a fascinating business school case study. Is CoreWeave's balance sheet just a pile of debt or growth capital? Can CoreWeave remain differentiated in three to four years? Will CoreWeave build out its AI software stack to play a larger revenue role?

The CoreWeave saga will be a fascinating two- to three-year race. Why? CoreWeave has no debt maturing until 2028.

Constellation Research analyst Holger Mueller said:

"CoreWeave showed outstanding growth with revenue growing 150%+ YoY. It is also showing the skeptics that it is not a money-losing business - as EPS improved year over year. Another quarter like this and CoreWeave should be in the black for Q4 on an adjusted basis. With that demonstrated - the focus needs to shift on CoreWeave managing to keep the growth going with supply chain challenges as it secures capital, delivers data center capacity and runs customer workloads well. At the moment, the first concern with CoreWeave is delivering data centers. We will see if all of this issue is addressed in Q4."