Celonis makes case for process, context in agentic AI, forges Databricks partnership

Editor in Chief of Constellation Insights

Constellation Research

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...


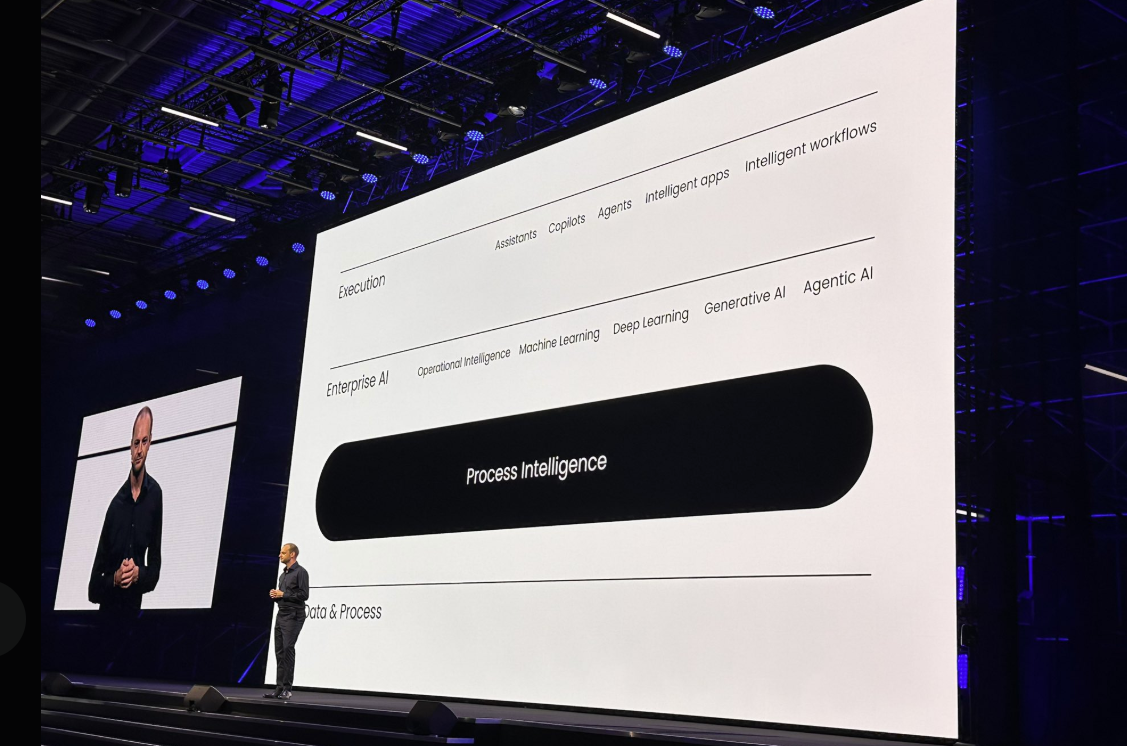

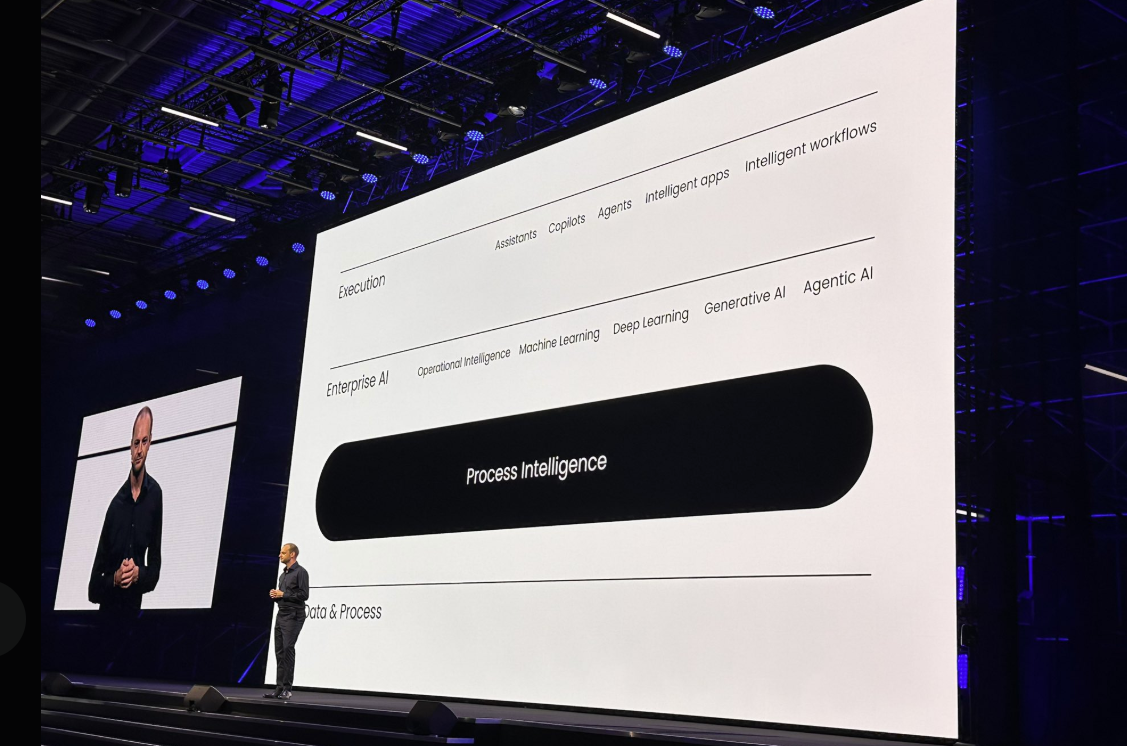

Celonis wants to embed its process intelligence platform into agentic AI workloads, and it is arguing that AI agents without process know-how won't deliver enterprise value.

At Celosphere 2025 in Munich, Celonis outlined the following:

- Celonis Process Intelligence additions that integrate data lakes without data duplication, tools to build more comprehensive digital twins of enterprises and blueprints for enterprise architecture.

- A partnership with Databricks that integrates Delta Sharing to directly connect the Celonis Process Intelligence Platform with the Databricks Data Intelligence Platform.

- Enterprise use cases from the likes of Mercedes-Benz, Uniper, Vinfast and 120 other "value champions" that have each realized more than $10 million in value.

Alex Rinke, co-CEO of Celonis, said the company is looking to help enterprises get value from their AI investments. "We give their AI the context it needs. We guide them to deploy it in the right places. And we enable them to make it work with everything else they’re doing," said Rinke.

Constellation Research analyst Mike Ni covered the Celosphere 2025 keynote live from Munich. He noted that Celonis was making the case that process intelligence should be the brain of enterprise AI. Ni added that Celonis touted an ecosystem that includes Wipro, Microsoft and now Databricks.

Ni said that, in many ways, Celonis is positioning itself as a "multi-dimensional Digital Twin of Operations" and the "architecture for contextual AI."

"While the hot term is not always understood think of it as a living business graph that feeds AI reasoners solving the very hard problem of context structuring," said Ni.

He added:

"The underlying message: stop treating AI as a science project. Make AI operational. AI suffers without context. Celonis wants to give it memory. Celosphere 2025 isn’t about process mining anymore, it’s about decision mining: finding, understanding executing where AI should act."

Here's a deeper dive on the Celonis news.

Celonis added to its Process Intelligence Platform and built out its operational digital twin features. The company said Celonis Data Core is generally available with the ability to integrate with Databricks and Microsoft data lakes.

The company said that the Process Intelligence Graph includes the ability to connect desktop actions to business processes with new task mining capabilities and AI-driven task discovery. Unstructured data types are also integrated.

Celonis also added enterprise architecture blueprints that give a live view of operations, along with more than 60 pre-built objects and events, including assistants for data extraction and data modeling.

Other additions include:

- New object-centric process mining (OCPM) capabilities to identify issues at process intersection points.

- An orchestration engine that now coordinates AI agents for end-to-end processes.

- Process Intelligence MCP Server.
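To make the object-centric idea in the list above concrete, here is a minimal sketch, assuming a simplified event log in which each event can reference several business objects. The `Event` class, the helper functions and the sample data are hypothetical illustrations, not Celonis APIs or data models. Events that touch more than one object type are treated here as the "intersection points" where processes meet.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One recorded activity, linked to every business object it touches."""
    activity: str
    timestamp: int   # simplified: an integer tick rather than a real datetime
    objects: tuple   # (object_type, object_id) pairs


def intersection_events(events):
    """Return the events that touch more than one object type."""
    return [e for e in events if len({otype for otype, _ in e.objects}) > 1]


def timeline_per_object(events):
    """Group activities by object, ordered by timestamp."""
    timelines = defaultdict(list)
    for event in sorted(events, key=lambda e: e.timestamp):
        for obj in event.objects:
            timelines[obj].append(event.activity)
    return dict(timelines)


# Hypothetical sample data, invented for illustration only.
EVENTS = [
    Event("Create order", 1, (("order", "O1"),)),
    Event("Create invoice", 2, (("order", "O1"), ("invoice", "I1"))),
    Event("Ship goods", 3, (("order", "O1"), ("delivery", "D1"))),
    Event("Pay invoice", 4, (("invoice", "I1"),)),
]

crossings = [e.activity for e in intersection_events(EVENTS)]
order_timeline = timeline_per_object(EVENTS)[("order", "O1")]
```

In this toy log, invoicing and shipping are the steps where the order process meets the invoice and delivery processes, which is roughly the kind of handoff that object-centric analysis is meant to surface.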

As for the Databricks partnership, Celonis will use Databricks Delta Sharing for bi-directional integration, which means joint customers won't need to move or copy data between platforms.

With the Databricks integration, Celonis can read live Databricks data and enrich it with business context and its Celonis Process Intelligence Graph to create digital twins. Process Intelligence from Celonis can then be fed back to Agent Bricks.
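For readers unfamiliar with Delta Sharing, the sketch below shows how a recipient might read a shared table in place using the open-source `delta-sharing` Python connector. It illustrates the general protocol only and does not describe how Celonis implements its integration. The profile file path and the share, schema and table names are hypothetical placeholders.

```python
def shared_table_url(profile_path, share, schema, table):
    """Build the '<profile>#<share>.<schema>.<table>' address the connector expects."""
    return f"{profile_path}#{share}.{schema}.{table}"


def load_shared_table(profile_path, share, schema, table, limit=None):
    """Read a shared table into a pandas DataFrame without copying it into another platform."""
    # Imported lazily so the URL helper works even without the package installed.
    import delta_sharing  # pip install delta-sharing

    url = shared_table_url(profile_path, share, schema, table)
    return delta_sharing.load_as_pandas(url, limit=limit)


# Hypothetical names, for illustration only.
example_url = shared_table_url("config.share", "operations", "erp", "orders")
```

Calling `load_shared_table("config.share", "operations", "erp", "orders", limit=100)` would require a valid profile file issued by the data provider. The data stays in the provider's storage and is read through the sharing server.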

For Celonis to win over enterprises, it will have to prove its value in the data-to-decisions chain. Mercedes-Benz outlined how it uses Celonis for order-to-deliver, aftersales and quality management. Mercedes has integrated process intelligence with its AI and operations workflows.

Vinmar created a digital replica to automate order-to-cash, find distressed orders and remove friction from handoffs.
