From Boardroom to Operations: Building Human-Machine Partnerships That Balance Speed with Wisdom

Distinguished Chair of the Accelerator & Principal/CEO

Stimson Center & LeadDoAdapt Ventures

Dr. David A. Bray is a Distinguished Fellow and Chair of the Accelerator with the Alfred Lee Loomis Innovation Council at the non-partisan Henry L. Stimson Center. He is also a non-resident Distinguished Fellow with the Business Executives for National Security, and a CEO and transformation leader for different “under the radar” tech and data ventures seeking to get started in novel situations. He is Principal at LeadDoAdapt Ventures and has served in a variety of leadership roles in turbulent environments, including bioterrorism preparedness and response from 2000-2005. Dr. Bray previously was the Executive Director for a bipartisan National Commission on R&D, provided non-partisan leadership as a federal agency Senior Executive, worked with the U.S. Navy and Marines on improving…...


In Part 1 of this 2026 Boardroom Decision series, I highlighted why corporate Boards must expand their fiduciary duty to encompass gray zone threats, hardware-level vulnerabilities, and the strategic asymmetries created by fragmented AI policies. I argued that traditional risk management frameworks are insufficient when facing adaptive, intelligent adversaries in rapidly changing environments. The question I left you with was this: how do we build resilient organizations that can thrive in a contested world while preserving human agency and ethical judgment?

The answer lies in operationalizing what I call decision elasticity through AI-augmented defense and human-machine partnerships. This is not about choosing between human judgment and machine speed. It is about architecting systems where both work together, each amplifying the other's strengths while compensating for weaknesses.

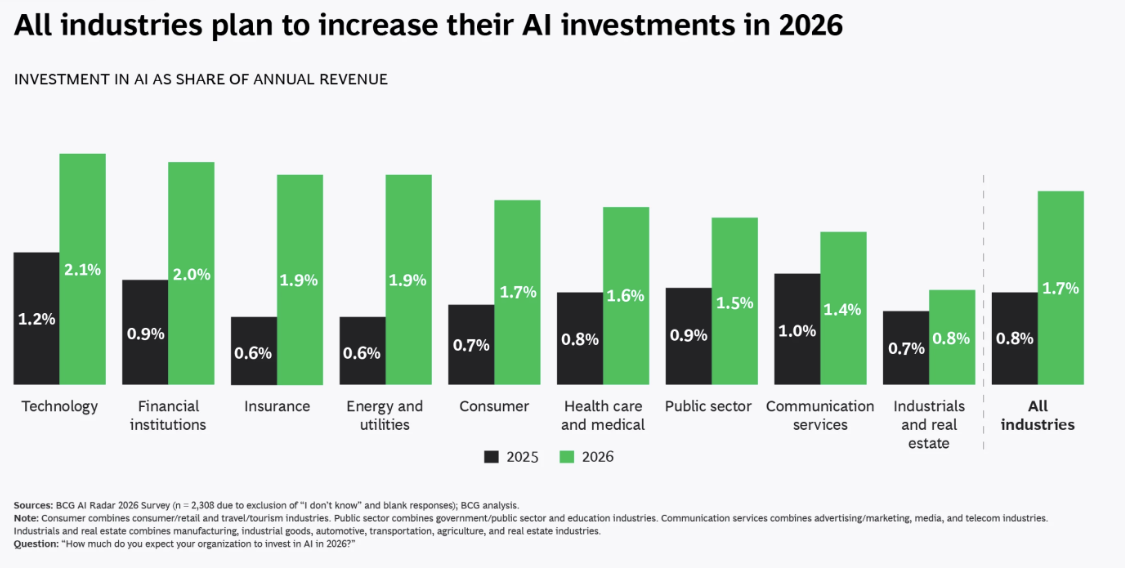

Why Cybersecurity and AI Budgets Must Rise Together

In a recent DisrupTV episode with friends Ray Wang and Vala Afshar, I joined Andre Pienaar, CEO of C5 Capital, to discuss leadership in the age of AI-driven cyber threats. Andre opened with a stark reality that every Board must internalize: cyberattacks are becoming more sophisticated, faster, and increasingly AI-driven. Threat actors no longer operate with manual tools. They are deploying automation, machine learning, and ever more autonomous systems to exploit vulnerabilities at scale.

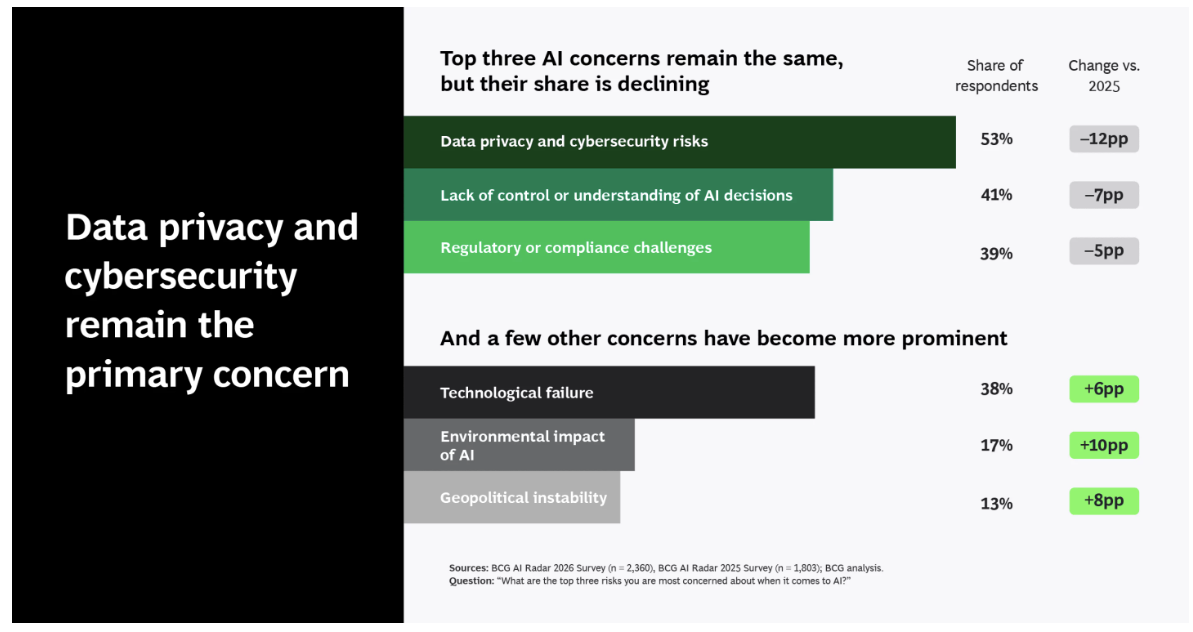

For Boards and executives, this means a fundamental shift in investment strategy. You cannot increase AI adoption without simultaneously increasing cybersecurity investment. Andre emphasized that AI expands the attack surface just as much as AI enhances productivity. Organizations deploying AI without upgrading security architectures are effectively widening the door for adversaries.

This connects directly to the gray zone threats I discussed in Part 1 of this series. Specifically, when nation-state actors spend 15 years systematically positioning themselves in your supply chain, they are not just waiting for you to deploy technological upgrades. They are counting on it.

Every new AI system, every cloud migration, every edge computing deployment creates new entry points if security is not architected from the ground up.

AI-Augmented Defense: Humans and Machines, Together

During the DisrupTV conversation, I reinforced a principle that has guided my work from the CDC to the U.S. Intelligence Community to the FCC to my current advisory roles: AI alone is not the solution, but neither are humans operating without it. Cybersecurity success depends on augmented intelligence, where AI detects patterns of life and anomalies at machine speed while humans provide the essential context, judgment, and ethical oversight.

I highlighted a sobering trend: ransom demands are increasing sharply, and AI-enabled attacks are lowering the cost and effort for bad actors. Defenders must respond with equal sophistication. The future of cybersecurity is not humans versus machines. The future is humans with machines.

This humans-with-machines partnership reflects what I call deployment empathy, the recognition that technological transformation is fundamentally about people. Leaders must create environments where teams feel psychologically safe to experiment, learn, and adapt alongside AI systems rather than feeling threatened by them.

Let me be concrete about what this looks like operationally. In my work advising organizations on AI adoption, I see three common failure modes that Boards must help their executive teams avoid.

Failure Mode 1: Treating AI as a Black Box. When security teams deploy AI-driven threat detection but cannot explain how the system reached its conclusions, they create two problems. First, they cannot improve the system because they do not understand its reasoning. Second, they cannot defend their decisions to regulators, customers, or juries when things go wrong. Boards must insist on explainable AI in security-critical applications.

Failure Mode 2: Over-Automating Decision-Making. Some organizations, in their enthusiasm for AI efficiency, automate responses to detected threats without human oversight. This creates catastrophic risks when AI systems misidentify legitimate activity as malicious or when adversaries learn to game the automated responses. Decision elasticity requires keeping humans in the loop for consequential decisions, even if AI provides the initial alert and analysis.

Failure Mode 3: Under-Investing in Human Capability. The most sophisticated AI security tools are useless if your team does not have the skills to interpret their outputs, tune their parameters, or integrate their insights into broader strategic context. Boards must ensure that AI investments are matched by investments in human capability development, not just technical training but also the critical thinking and ethical reasoning skills that machines cannot replicate.
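To make the first two failure modes concrete, here is a minimal Python sketch of a gate that refuses to act on an alert with no attached explanation and holds consequential actions until a person signs off. The `Alert` fields and the `decide` function are hypothetical names chosen for illustration, not part of any specific security product, and a real deployment would define "consequential" through its own governance process.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    """An AI-generated security alert (hypothetical structure)."""
    source: str                       # which detector raised the alert
    summary: str                      # short description of what was seen
    reasons: list[str] = field(default_factory=list)  # human-readable evidence
    consequential: bool = False       # would the response disrupt the business?


def decide(alert: Alert, human_approved: bool = False) -> str:
    """Return what should happen to the proposed response for this alert."""
    # Failure Mode 1: never act on a conclusion nobody can explain.
    if not alert.reasons:
        return "reject: no explanation attached"
    # Failure Mode 2: consequential responses wait for a human decision.
    if alert.consequential and not human_approved:
        return "hold: awaiting human review"
    return "execute"


if __name__ == "__main__":
    alert = Alert(
        source="anomaly-detector",
        summary="Unusual outbound traffic from finance server",
        reasons=["traffic volume far above baseline", "destination not seen before"],
        consequential=True,
    )
    print(decide(alert))                       # held for review
    print(decide(alert, human_approved=True))  # released after sign-off
```

The design choice worth noting is that the explanation check comes first: an unexplained alert is rejected even if a human has approved it, because approval without understanding is the black-box problem in another form.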

Quantum Computing, Geopolitics, and Shortening Supply Chains

The DisrupTV discussion also explored the geopolitical implications of AI and quantum computing. Andre and I both stressed that quantum breakthroughs will eventually render today's encryption obsolete, making post-quantum cryptography a near-term planning requirement, not a distant concern.

This is where the hardware vulnerabilities I discussed in Part 1 of this 2026 Boardroom Decision Series become even more critical. If your supply chain is already compromised at the chip level, the transition to post-quantum cryptography will not save you. Adversaries with hardware-level access can simply intercept data before it is encrypted or after it is decrypted, rendering even quantum-resistant algorithms useless.

My recommendation to Boards is clear: shorten your supply chains to improve cybersecurity. This is not just about reshoring manufacturing, though that may be part of the solution. It is about reducing the number of handoffs, intermediaries, and black-box components between your organization and the foundational hardware and software you depend on. Every link in the supply chain is a potential compromise point. The shorter and more transparent the chain, the easier it is to verify integrity.

At the same time, AI policy and regulations are fragmenting globally.

I posited during the DisrupTV conversation that leaders must understand which geopolitical "technology matrix" they are operating within. To maintain resilience, organizations must be able to pivot as that matrix shifts.

Key Strategic Takeaways for Operational Leadership

Building on the Board-level governance principles from Part 1, here are the operational imperatives that CEOs, CISOs, and General Counsel must execute:

Harmonize Governance Internally Before External Mandates Force Your Hand. Do not wait for federal AI policy to resolve the 50-state fragmentation I discussed in Part 1. Establish internal AI governance frameworks now that can adapt to multiple regulatory regimes without requiring complete system redesigns. This means building modularity and flexibility into your AI architecture from the start.

Compartmentalize Experimentation While Maintaining Oversight. Create sandboxes where teams can experiment with AI capabilities without putting production systems or sensitive data at risk. But ensure these sandboxes have clear governance, defined success criteria, and pathways to production that include security reviews and ethical assessments.

Prioritize Pivotability Over Optimization. In a world of rapid technological change and geopolitical uncertainty, the ability to change direction quickly is more valuable than squeezing the last percentage point of efficiency from current systems. This is what I call maximizing pivotability. Avoid decision anchoring, where you double down on a technology or vendor relationship even when market signals suggest a need for change.

Embrace Different AI Flavors with Different Governance. Understand that computer vision AI operates in a largely deterministic way, while Generative AI is creative but unpredictable. Each requires different governance approaches and risk frameworks. Boards and executives must resist the temptation to apply one-size-fits-all policies to fundamentally different technologies.

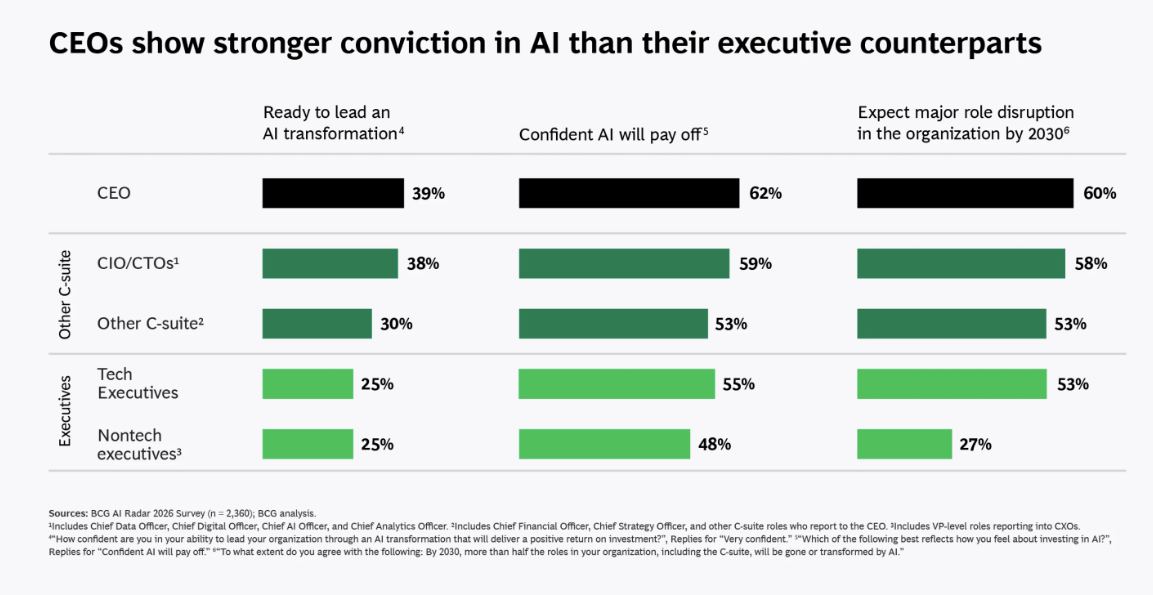

Lead with Empathy and Courage Through Uncertainty. We need leaders who are not just technically literate, but who also lead with empathy and courage through unprecedented uncertainty. This means being honest about what you do not know, creating space for your teams to voice concerns and propose alternatives, and making decisions even when perfect information is unavailable.

The Operational Reality of Decision Elasticity

Decision elasticity is not just a conceptual framework. It is an operational discipline that requires specific organizational capabilities.

First, you need real-time situational awareness. This means AI systems that continuously monitor your environment, detect anomalies, and surface potential threats or opportunities before they become crises. But situational awareness alone is not enough.

Second, you need rapid sense-making capabilities. When AI surfaces an anomaly, your team must be able to quickly assess whether it represents a genuine threat, a false positive, or an emerging opportunity. This requires cross-functional collaboration, access to diverse expertise, and the ability to synthesize information from multiple sources.

Third, you need pre-authorized response options. In a crisis, you cannot afford to wait for Board approval or executive consensus on every decision. But you also cannot give mid-level managers carte blanche to make consequential choices. Decision elasticity means defining in advance what types of responses can be executed immediately, what requires escalation, and what triggers a full crisis response.

Across all three capabilities, you need continuous learning and adaptation. After every incident, whether it is a security breach, a compliance failure, or a missed opportunity, your organization must conduct rigorous after-action reviews that feed insights back into your AI systems, your processes, and your training programs.
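The idea of pre-authorized response options can be sketched as a simple policy table that is agreed in advance and consulted during an incident. The following Python sketch is illustrative only: the action names, the `Tier` labels, and the `route` function are assumptions made for this example, and the actual tiers would be set by each organization's Board and executive team.

```python
from enum import Enum


class Tier(Enum):
    """Three response tiers, decided before any crisis occurs."""
    IMMEDIATE = "execute immediately"
    ESCALATE = "escalate to a named executive"
    CRISIS = "trigger full crisis response"


# Hypothetical policy table: which tier each response action falls into.
POLICY = {
    "isolate_endpoint": Tier.IMMEDIATE,
    "reset_credentials": Tier.IMMEDIATE,
    "shut_down_production_line": Tier.ESCALATE,
    "notify_regulators": Tier.CRISIS,
}


def route(action: str) -> Tier:
    """Look up the pre-agreed tier for a proposed response action.

    Actions that nobody planned for default to escalation, so an
    unanticipated choice is never executed without a human decision.
    """
    return POLICY.get(action, Tier.ESCALATE)


if __name__ == "__main__":
    for action in ("isolate_endpoint", "notify_regulators", "wipe_all_backups"):
        print(f"{action}: {route(action).value}")
```

Defaulting unknown actions to escalation rather than immediate execution reflects the balance described above: speed where the organization has already thought the decision through, and human judgment where it has not. After-action reviews would then be the natural point to revise the table.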

Scaling Resilience Through Ecosystem Partnerships

The governance principles from Part 1 of this essay series, and the operational capabilities I have outlined here, collectively create the foundation for organizational resilience. Yet no company can achieve resilience in isolation. In Part 3 of this series, I will explore how Boards can scale resilience through strategic ecosystem partnerships while maintaining the pivotability needed to adapt as the geopolitical and technological landscape shifts.

The key insight I will develop is this: scaling through partnerships is not just about growth. It is about building a resilient infrastructure that can pivot every three to six months as tech tectonics shift.

This requires moving away from top-down leadership and toward a decentralized, networked model that leverages collective intelligence while avoiding the decision anchoring that causes organizations to double down on failing strategies.

An Invitation to Operational Excellence

If your organization is struggling to balance AI adoption with cybersecurity, if your teams feel overwhelmed by the pace of change, or if you recognize that your current operational model is not built for the converged threats I have described, I invite you to engage in a deeper conversation about building human-machine partnerships that work.

My advisory work focuses on helping organizations develop the operational discipline and cultural foundations needed for decision elasticity. This is not about implementing a specific technology or following a compliance checklist. It is about building the organizational muscle memory to respond to ambiguous threats with speed and wisdom.

The stakes are clear. AI strategy is now inseparable from national security and economic competitiveness.

For operational leaders, the question is whether you will build the capabilities to compete in this environment or watch as more agile competitors and adversaries outmaneuver you. Given that fortune favors the brave, I strongly recommend a proactive leadership approach.

Dr. David Bray is both Chair of the Accelerator and a Distinguished Fellow at the non-partisan Stimson Center as well as Principal and CEO at LeadDoAdapt Ventures, Inc. He previously served as a non-partisan Senior National Intelligence Service Executive, as Chief Information Officer of the Federal Communications Commission, and IT Chief for the Bioterrorism Preparedness and Response Program. Business Insider named him one of the top “24 Americans Changing the World” and he has received both the Joint Civilian Service Commendation Award and the National Intelligence Exceptional Achievement Medal. The U.S. Congress invited him to serve as an expert witness on AI in September 2025. He also advises corporate Boards and CEOs on navigating the convergence of AI, cybersecurity, and geopolitical risk.
