HOT TAKE: SupportLogic New Features Help Leverage Support as Revenue Driver

SupportLogic has been in business since 2016 and has primarily been seen as a tool that helps support leaders deliver a better support experience (or “SX,” as the company brands it). This has mostly been achieved by using SupportLogic’s ML and sentiment analysis to extract “signals” from emails and other text-based data inside customer cases, in order to prevent escalations and provide better agent quality control.

But the company has long understood that the signals it extracts are valuable well beyond the core use cases of escalation avoidance and more intelligent case routing. CEO Krishna Raj Raja has always called the support center a “revenue center” rather than a cost center, positing that support organizations are the true front line when it comes to the actual voice of the customer.

In B2B relationships (especially in high tech, where SupportLogic has focused), this rings true. Think about it: CRM data from sales interactions holds some important deal data, but it typically ends when the deal is closed. Marketing data captures interest, but offers only a little insight into products purchased and used, and into the product usage and customer experience. Case data, by contrast, includes a treasure trove of insights around the actual products deployed, how they are used, and user satisfaction and dissatisfaction, as well as signals around what a customer might be missing (features, configurations, additional products, etc.) that can lead to a more successful (and profitable) relationship if acted upon at the right time and in the right manner.

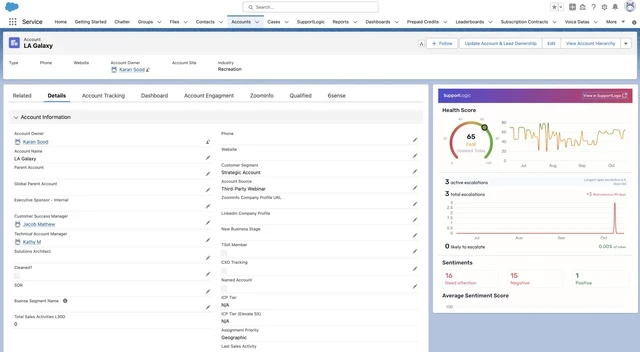

Enter “Expand,” a new feature set from SupportLogic that expands upon its signal extraction capabilities around Account Health Scores to provide customer success and other revenue teams with previously hidden cross-sell and upsell opportunities. As the company puts it, SupportLogic's Expand module brings real-time account health visibility to account management teams, helping them identify upsell and cross-sell opportunities, monitor customer satisfaction, and act on early warning signs that may signal potential issues or churn risks. This provides an interesting workflow in which true customer signals can flow to customer success teams, account executives, and others so they can better act on revenue opportunities. Today, these signals typically get lost in unstructured text or are not captured properly at all.

The new features of Expand are designed to provide a comprehensive view of account health, combining insights from multiple data sources, allowing teams to take proactive measures for growth. It includes the following core features:

- Account Health Score: A unified score that reflects the overall health of the customer relationship, combining sentiment data, support history, and product usage signals.

- Account Commercial Signals: New commercial signals that significantly enhance customer retention, drive revenue growth, and foster long-term customer loyalty.

  - Signals include: Churn risk, renewal likelihood, competitive consideration, expansion opportunity, price sensitivity, license upgrades and downgrades.

- Account Summarization: Generative AI-based automated summaries that capture the status of key accounts, making it easy for account managers to stay informed.

- Account Alerts: Real-time alerts for changes in account health, including early warnings on potential churn or upsell opportunities.

- Account CRM Widget: Seamless integration into popular CRM platforms, enabling account managers to view account health directly from their CRM dashboards.

- Integration with Gainsight CS: Built-in compatibility with leading Customer Success platforms like Gainsight CS, offering streamlined workflows for customer success and account management teams.

The new Expand feature set is indicative of an overall shift toward technology that better supports a full-journey approach to growth optimization. According to SupportLogic, organizations that understand, and act on, the types of signals that can be gleaned from support interactions can increase customer lifetime value and reduce churn in a more profitable and seamless manner.

In the age of agentic AI and plenty of “easy button” promises from leading CX vendors, buyers should look more closely at how well use cases such as account health and expansion revenue analysis are actually supported by generic generative AI and agent tools, and by the copilots and agents found in leading CRM platforms. Tools like SupportLogic are not “easy buttons,” but for businesses with long, complex support cycles that involve a lot of back-and-forth, from which many product and sentiment signals can be extracted, they are worth considering, since many broader AI tools are not as finely tuned for such specific B2B use cases.