ServiceNow posts strong Q3, launches Workflow Data Fabric, expands partnerships, hires Zavery as product chief

By Larry Dignan, Editor in Chief of Constellation Insights, Constellation Research

ServiceNow reported better-than-expected third quarter results, launched Workflow Data Fabric, outlined partnerships with Rimini Street and Cognizant and said it will step up agentic AI efforts with Nvidia. For good measure, ServiceNow also said Google Cloud executive Amit Zavery will be the company’s new president, chief product officer and chief operating officer.

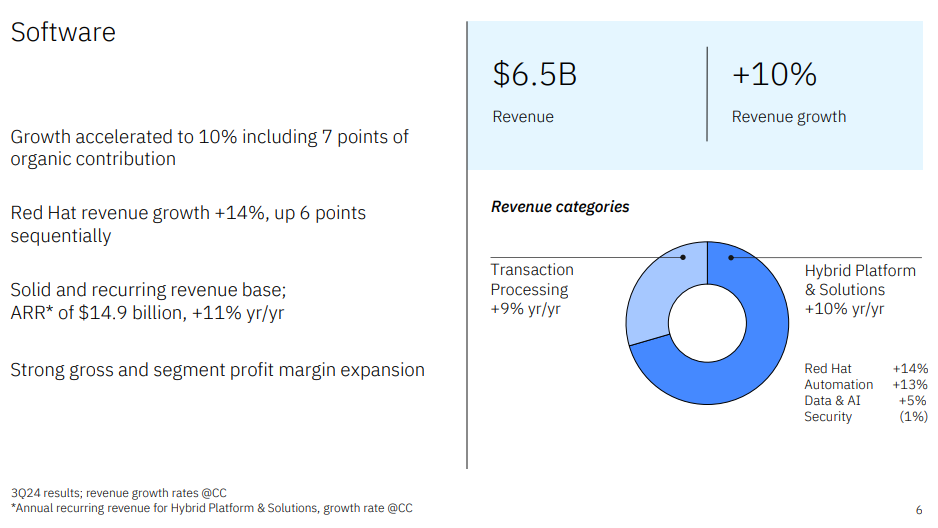

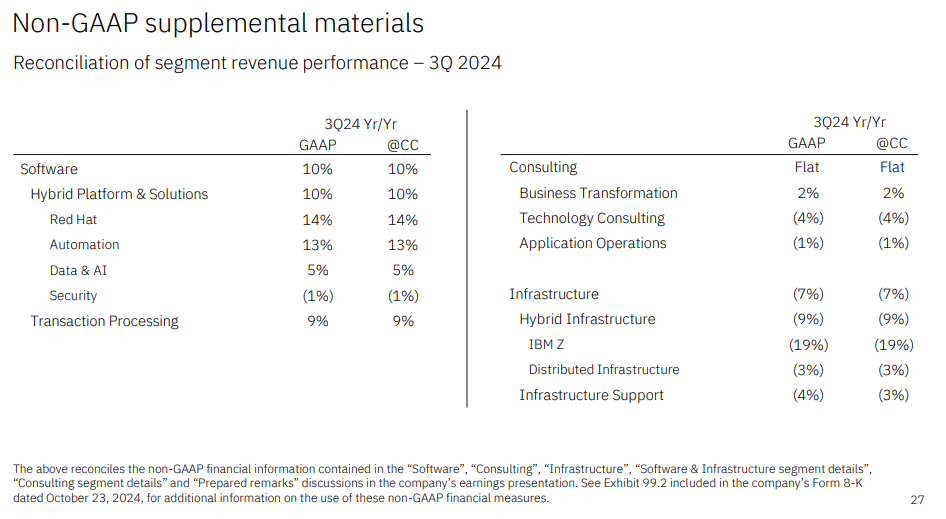

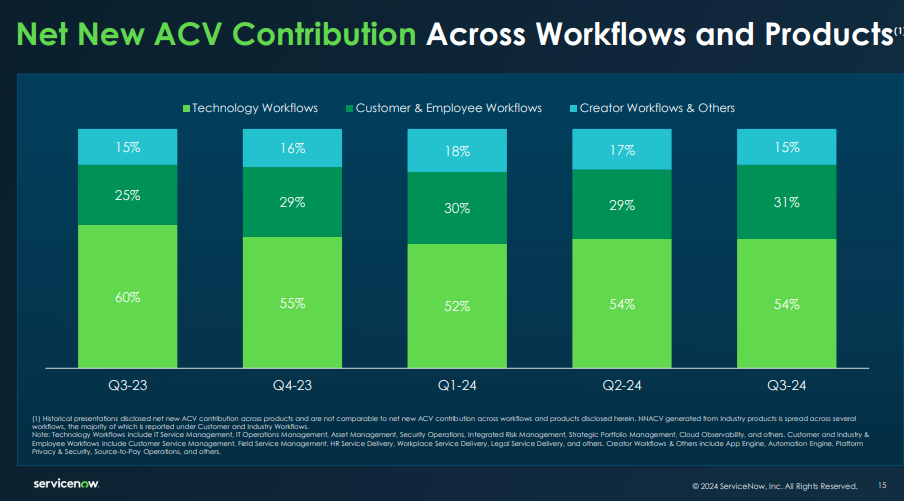

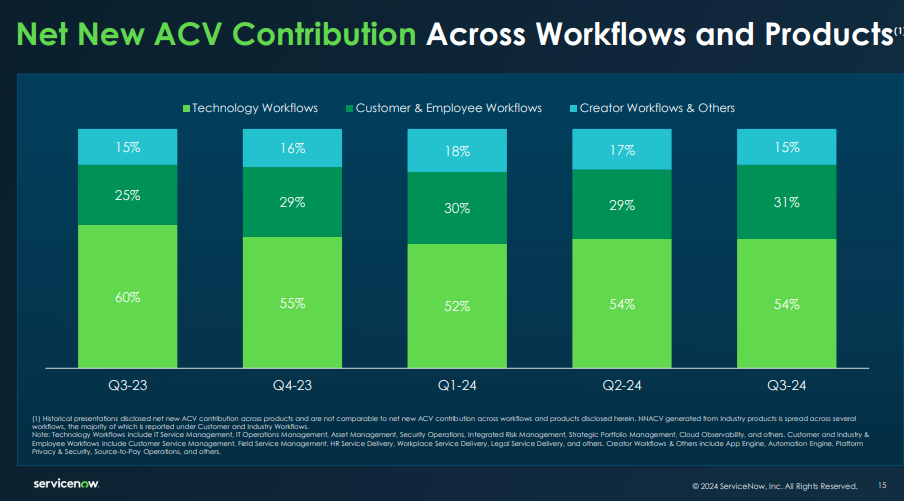

The moves come as ServiceNow delivered strong subscription revenue growth of 22.5% in the third quarter compared with a year ago. The company inked 15 deals worth more than $5 million in annual contract value (ACV) and has more than 2,020 customers with more than $1 million in ACV.

For the third quarter, ServiceNow reported net income of $432 million, or $2.07 a share, on revenue of $2.8 billion. Non-GAAP earnings for the quarter were $3.72 a share. Wall Street was expecting ServiceNow to report earnings of $3.45 a share on revenue of $2.75 billion.

As for the outlook, ServiceNow projected fourth quarter subscription revenue of $2.875 billion to $2.88 billion.

With Now Assist AI as its fastest-growing product, ServiceNow is looking to press its advantage as a platform for agentic AI that connects multiple enterprise systems along with their data, workflows and processes. ServiceNow CFO Gina Mastantuono said demand for the Now Platform was “robust” and Now Assist was “already delivering fantastic results.”


The hire of Zavery is also a big move. CEO Bill McDermott said the addition of Zavery gives ServiceNow a “visionary product and engineering leader with a proven history of building market defining products and scaling world class platforms.”

Zavery's most recent gig was VP, GM and Head of Platform at Google Cloud. Zavery previously was an executive at Oracle.

In an SEC filing, ServiceNow detailed Zavery's pay package. A few nuggets worth noting:

- The base salary is $900,000.

- Zavery's signing bonus was $3 million.

- To replace Zavery's outstanding equity grants from Alphabet (Google Cloud's parent), ServiceNow said Zavery was eligible to receive an equity grant of $29 million.

Zavery will step into his role as ServiceNow is already furiously building out its agentic AI plans:

- ServiceNow launched Workflow Data Fabric, a data layer that operates across enterprise systems, and a partnership with Cognizant.

- ServiceNow and Nvidia expanded a partnership to co-develop AI agents based on Nvidia NIM Agent Blueprints.

- ServiceNow and Rimini Street outlined a partnership to put the Now Platform on top of legacy ERP systems, support those systems and use the savings to fund AI innovation.

Speaking on ServiceNow's third quarter earnings call, McDermott said the company is more strategic with generative AI and agentic AI. "The C-suite is looking to us to prevent a mess with AI," said McDermott. "Leaders see the risk that every vendor's bots and agents will scatter like hornets fleeing the nest. Enterprises trust us to be the governance control tower."

McDermott said the company is now using its platform to deploy autonomous agents at scale. "We intend to be the control point that governs the deployment of agentic AI across the enterprise," he said.

Here's a look at what was announced:

Workflow Data Fabric, Cognizant pact

ServiceNow launched Workflow Data Fabric, which is based on the Now Platform and the RaptorDB Pro database. Workflow Data Fabric is available now.

The data layer features Zero Copy connections that integrate data from multiple sources for use by AI agents. Cognizant will be the first partner for Workflow Data Fabric.

With the move, ServiceNow is adding a data integration layer to connect systems and workflows. Enterprise software vendors are increasingly adding these data layers.

Workflow Data Fabric is designed to connect, understand and process structured and unstructured streaming data across ServiceNow and third-party platforms. ServiceNow's plan is to use Workflow Data Fabric to read data and act on it.

Key points include:

- Workflow Data Fabric is powered by ServiceNow's Automation Engine, which includes data streaming, robotic process automation and process mining.

- Raytion, which ServiceNow recently acquired, is integrated and brings more than 500 connectors.

- ServiceNow said the launch of Workflow Data Fabric includes Zero Copy partnerships with Databricks, Snowflake and other data platforms.

- Workflow Data Fabric streamlines notifications, assigns incidents and includes ServiceNow's Knowledge Graph. The idea is that this graph can add context so AI agents can carry out tasks.

Nvidia NIM Agent Blueprints

ServiceNow and Nvidia said the companies will co-develop native AI agents based on Nvidia's NIM Agent Blueprints.

The two companies said they will develop multiple AI agent use cases for the Now Platform. These AI agents would leverage Nvidia AI Enterprise, NeMo and NIM microservices.

ServiceNow and Nvidia have been partners for years. Nvidia is looking to broaden its AI agent frameworks to speed up generative AI deployments.

According to the companies, the plan is to expand out-of-the-box AI agents, starting with cybersecurity. ServiceNow and Nvidia said they will develop a Vulnerability Analysis for Container Security AI agent.

These turnkey AI agents will be activated through ServiceNow AI Agent Studio, which is expected to be available in 2025.

Rimini Street pact

ServiceNow said it will partner with Rimini Street to offer enterprise resource planning (ERP) support that extends the life of legacy systems without migrations or re-platforming, so enterprises can invest elsewhere.

Constellation Research CEO Ray Wang said the ServiceNow-Rimini Street partnership can give enterprises some breathing room since many are being prodded to upgrade to cloud-based foundations. "It is imperative that enterprises accelerate AI innovation, digital transformation and workflow automation without being slowed by the complexity and cost burden of upgrading or migrating existing enterprise software," said Wang.

The companies said they will offer a new enterprise software model that includes:

- ServiceNow's AI platform to provide a layer on top of existing ERP systems. Rimini Street will design, deploy and manage the Now Platform on top of ERP systems with a certified ServiceNow team. Savings will fund AI investments.

- Rimini Support will replace software vendor maintenance with no required upgrades or migrations for a minimum of 15 years.

- Rimini Manage, which will run the day-to-day software operating and support tasks of legacy ERP systems.

For Rimini Street, the ServiceNow pact will help with the company's own transformation as it winds down Oracle PeopleSoft support to focus on VMware maintenance and other initiatives.
