Scotts Miracle-Gro Tests CX to Optimize for Extraordinary Growth | CR CX Convos

Vice President & Principal Analyst

Constellation Research

About Liz Miller:

Liz Miller is Vice President and Principal Analyst at Constellation, focused on the org-wide team sport known as customer experience. While covering CX as an enterprise strategy, Miller spends time zeroing in on the functional demands of Marketing and Service and the evolving role of the Chief Marketing Officer, the rise of the Chief Experience Officer, the evolution of customer engagement, and the rising requirement for a new security posture that accounts for the threat to brand trust in this age of AI. With over 30 years of marketing experience, Miller offers strategic guidance on the leadership, business transformation, and technology requirements to deliver on today’s CX strategies. She has worked with global marketing organizations to transform everything from…...


Don't miss the latest CR #CX Convo with Constellation analyst Liz Miller!

Liz sits down with CX leaders Jessica Bailey and Hailey Schraer from The Scotts Miracle-Gro Company 🌱 to unpack how customer experience strategies and partnership with UserTesting drive omnichannel #revenue for the business. 📈

Topics include...

📌 UserTesting techniques (07:41)

📌 Championing the voice of the #customer (10:10)

📌 Balancing education and purchase focus (14:10)

📌 Importance of quick insights (18:45)

📌 Challenges with prioritization and balancing requests (25:22)

📌 Direct-to-consumer efforts and lessons learned (32:05)

For more info, read the full customer story by Larry Dignan: https://www.constellationr.com/research/scotts-miracle-gro-how-cx-and-usertesting-drive-omnichannel-revenue

__

Access the full video transcript: (Disclaimer: This transcript has not been edited and may contain errors)

Hello, everyone. I am here with two amazing, amazing researchers, digital experience architects, CX visionaries. But you know what? More importantly, I am here with two people who know how to put in the work, and so I'm super excited to be joined by Jess and Hailey from Scotts Miracle-Gro. Should we just go ahead and jump right into it? Should we just go have some fun? Let's do it. I love it. I love it. First, I want to get into it. Tell me about yourselves. What's your background? What's your role at Scotts Miracle-Gro? What got you from those first jobs to the job where you're at right now? And what do you do?

Yeah, I'll kick it off. I am coming up on my six-year anniversary at Scotts. I sort of started out pre-COVID, going into the office every day, but, yeah, it's been a really, really interesting journey. My background is a lot of user experience, more on the evaluative usability research side, but also information architecture design, and I had some really, really amazing experiences early in my career, working in the consulting space and working for big in-house organizations. And I think I really found a love for being able to be in-house and see the work all the way through, and to see some of these really long timelines all the way through. We have several projects that we've been working on that span multiple years, and it's such a blessing to be able to see that, as opposed to having to, you know, fall away after a little bit and hopefully come back. It's been really great, and I've been able to bring on amazing people like Hailey, who have made us just even better. I'll toss it to you. Love that.

Awesome. Yeah, I just hit my five-year anniversary at the company in May, so I kind of owe my entire UX career to Jess. I started in UX at Scotts, and I've kind of been here ever since, leading and building a team here so we can build empathy across the organization for how our consumers use our digital products, use our physical products, etc. So I'm excited to be here and chat a little bit more.

I love that you just said that you're building empathy across the organization for how people use your digital like, I love that statement. I kind of want to turn it into a t shirt. So I'm not gonna lie, if you somehow see that pop up someplace, just

make sure you send me one. Just send me Yeah, I'm just gonna send you one. There'll be, like, a whole like,

we'll Venmo each other, it's gonna be fine. Okay, so let's talk just a little bit about kind of what's going on and what are some of the big projects, what are some of the big initiatives when you start to think about experience, and especially when you start to think about digital experience, where are some of the directions, and where are some of the key areas that not only you want to see the organization start to go in, but what are those big projects you guys are working on right now that you can share? I don't want to give away the secret sauce, but some good stuff. Yeah, I

think a big thing for us is really honing in on education across all of our platforms. We need to educate our consumers about what they need to do in their lawns and what they need to do in their gardens, so that they feel confident and comfortable tackling these projects. The more confident and comfortable they feel, the higher the likelihood that they're going to purchase from us, and that they're going to revisit our sites, come back and learn more. So I think that's always been a big push for us, and we just continue to find ways to really push education, push all of the tips and tricks, so we can teach you how to be the best yarder and gardener that you can.

I love that. I love that. But that really brings up a very specific need: being able to identify fairly well ahead of time, I would think, earlier in the content and experience development process, what the burning needs of education are. What are those things that are top of mind? What are those things that people want to talk about? Like me, I want to talk about how to make the fat squirrel in my yard go on a diet. I don't want them to stop eating, but, right? Everyone has a different place where they want to start learning. So what are the tools and techniques that you guys are using to not only get an understanding of that, maybe a little ahead of time, but then also to map and understand whether that's really what your audience wanted to experience? Like, I might think it's cool, but I might also be navel-gazing.

Yeah, I think we're always looking at bringing a lot of cross functional input together. So I know folks on our team, even just last week, had this really amazing conversation with our sales our field sales team to understand like, hey, when you run into folks that when they're in the aisle of a garden center, you know, what are the questions that they're typically asking you, and how can we create a bridge for them? We have folks on the team that focus really deeply on weather and the impacts of weather on our category, and people shopping and trying to just participate in our category overall. And so how did they see weather trends impacting us? And what are some solutions that we can create there? Haley is highly involved, and runs all of our conversion rate optimization and AB testing. And so we're able to get some really clear answers on like, what kind of content is performing better and driving some of those key actions we're focusing on SEO, what are people searching for? The terms that we're looking at. So we really do try to bring a lot of different sources together to get at what are the burning questions that people have, and also, how can we help meet them in the middle? You know, we don't want to come in and be you know, we are the expert, and we know all overlords. Yeah, no, yeah, yeah. Like so your problem? Could your tomatoes be more could you have a bigger bounty or a bigger harvest? Yeah, maybe Squirrel. Squirrel is your big problem right now. So solve that one for you and make it more likely that you're going to put in another, you know, another seedling next year, and maybe two seedlings next year, because you really want to make it happen, then that's a success for us.

I love that. I love that, you know, listen, as a long term marketer, I feel like I should say recovering marketer. I think that's a recovering marketer. And, you know, been at agencies, you know, worked very closely with, you know, art departments, and I've been through that process of, right, like you, you're like, Oh, I gotta build a website, and I'm gonna go and do this, and it's gonna be amazing. And here, here's my, you know, here's my mood board, and here's all of my, like, you know, here's my site maps, and here's all of my research, all this. And then you go and build it, and you kind of forget that all of that research, all of that information that you poured your blood, sweat and tears in, even if you were looking at all the SEO research to make sure all of that foundational search was there, you kind of forget that the testing is to keep going. You know that you kind of have to constantly be working on that care and feeding. I know that you guys do a lot with user testing, so I'm curious. Can you share some of the examples of how you're using these testing tools, right, whether it is something like SEO, whether it is that research on the back end, whether it is AB testing, or whether it is these qualitative panels? Can you share a little bit about what you're doing, and then where that starts to slot in, when you start to think about kind of the life cycle of engagement for each of your brands.

Yeah, UserTesting has been a great partner for us, I think, for the past three or four years at this point. We use them in every way that we possibly can. We do a lot of post-launch and a lot of pre-launch usability testing. We'll do some moderated interviews, where we're getting really in-depth and nitty-gritty about how people are shopping that aisle and what their triggers are to go out in their yards and gardens. Most recently, we ran a study on a new kind of platform that we're looking at going out with, and we wanted to get some pre-launch research on it to understand: what are the pain points that they're having, what can we expect once we release this, and what are some hypotheses that we can pull from this? Then we can take those and do testing post-launch. So now we can say, hey, we're lacking education here. How can we tackle that post-launch and come up with some different testing ideas and different designs where we can really pull and show that quant data? We saw this in qual, this is what we heard from our consumers, and now we can test against it and say, okay, hey, these two variations smashed it. We really got the consumers what they needed from these lenses. So we use UserTesting a lot when it comes to triangulation: starting to find our big common themes amongst our participants, and then talking to our analytics team or doing A/B testing, to really marry that quant and that qual together so we can come up with the best insights we can.

I. I love that. I'm gonna ask like a okay, because you said something, and now my brain went someplace, and so that's where the question's gonna go. But I think part of the problem a lot of times, or at least, I mean, I can remember being at those tables and it sometimes it can feel like the, you know, those data kids came in and told me my baby was ugly. I don't want to listen to them anymore, right? Like it feels like that sometimes. What are some of the things that you guys do like, really, to champion the real voice of the customer by triangulating this quant and the qual. You guys really are sitting at a very interesting intersection of where that true customer, user reaction is coming in. It's not you saying, Well, I think people didn't like this button. It's literally someone being like, I hated that button. You're like, whoa, like, there's the end. But you also get the reason behind that. You get the richness behind that. How you know, how are you guys finding the insights and the information that you're sharing and triangulating move like upstream, so that it's not just I didn't like that button, but it becomes really rich insight for even senior executives and business strategy to say maybe we need to make a different decision, because if education is pushing us here, maybe our product has to shift there too. Or maybe this is a different opportunity. Are you seeing what you guys do take a different stream than kind of just sitting in that digital optimization space.

Yeah, I think there's a few ways that we're seeing that happen. We have a team that is really,

like respectful, I guess,

of data, and not a lot of defensiveness at all. And so I don't know, we're very lucky, I think, but we've not really had a situation where it's like, well, you're just saying that because you don't like it, or that's your personal like, purple, yeah, you know the power of a highlight reel, right? The power of video, the power of having a video of eight people saying, I'm confused. I'm not sure what, like the the cycle on that would be, or I'm confused. I don't know how I would use that. Can't you can't really rebut that at all. So we have teams that are really, really oriented to improving and optimizing and being willing to be wrong and work in a really agile way of if you know, if we can optimize, if we can improve. And it's also the beauty of working in the digital space is that we're working on products that are constantly evolving. Much of the the physical products that we sell in stores and that we sell online, you know, they're always evolving too that time scale is a little bit longer, but our innovation pipeline is super rich, and we're really trying to just sort of help folks that maybe are a little bit more used to that longer time frame. Say, Hey, you know, when it comes to the digital experiences that we have. We're doing that same, you know, product life cycle. We're just sort of squeezing it down a little bit, and the R and D that you're doing to make sure that that product is super, super effective, and that it really does deliver on all of the promises. That's essentially what we're doing to just apply to a different, yeah, I love that. Jess, I'll emphasize

one of your points there too. Of like, highlight reels, we have shown that that like, sticks with our stakeholders the most. Like, there's nothing more important. And I think this is really how we've helped to, like, build empathy. Here is like, let's put this in front of user. We watch videos all day, so let's put this in front of our stakeholders, in front of our cross functional teammates and truly show them what these consumers and these participants are doing, so that they really have a chance to, like, put their put their feet in their shoes, and understand how they're experiencing it, rather than us just saying, hey, the button's ugly, like you can actually truly hear it. And it really does drive us and take us really far with our stakeholders. So that's

amazing. That's amazing. You know, I think that it's so interesting in your business. I mean, Scotts Miracle-Gro, I think everyone thinks about it from a different entry point, right? Because the portfolio of brands is really expansive across the company. So you have a different mindset of buyer. You have a different skill level of buyer. You've got me, a self-professed urban farmer here in Los Angeles growing a tomato. I'm pretty proud of myself. But then you have, like, farmer farmers. You guys have this really broad group of customers, and I would imagine that also translates into a very broad group of stakeholders internally, some of whom are looking for that transaction, right? Like, I need someone to buy that bag of soil. I need someone to be referred to a channel partner. It's going to be very transactional. And Hailey, like you mentioned, you also have that stakeholder that's like, no, no, remember the education part? Remember how we lead people into the funnel? In most organizations, those can often be a pretty significant push and pull when you're trying to figure out how to prioritize, whether it's testing, whether it's research, or how quickly things get up. I think traditionally, in the world of research, you've got to make choices, because things take a while. Can you walk me through how you've developed best practices, whether it is how you prioritize or the speed and scale at which you're able to work? And then also, how have you really been able to, I guess, balance everything? Now that you have the knowledge, how are you able to make sure that, yeah, we're getting bags in carts, but we're also getting people to think about the next season?

Yeah, yeah. I think focusing on building confidence and motivation, and having content that can get people excited to maybe start caring about their lawn for the first time in a while, or to take that very first step, that kind of upper funnel, I guess. Getting people to just start to care about something that maybe they've lost interest in, or they started a garden during COVID and maybe had one or two seasons of, like, oh boy. If we can pull them back in, then the rest of the pieces are much easier for a lot of our other partners that are in media and marketing and sales. We've really primed them to say, you can hit a home run now, we've got folks in that right mindset. So I think having that focus on the upper funnel, we see that it's paying for things that come later down the road, and that is the right place for the channels that we're focused on most often. That's the right role for it to play. I think that it can sometimes vary by brand. In the portfolio that we have, there are certain brands that say, we are exclusively here for education. If you want to compare products, because you're between two or three that have a similar benefit, that's why we're showing up for you in the digital space, or if you want to get inspired to take on a new project, that's why we're there. There are other brands and other sub-brands where we see that purchase and that conversion being their top focus. And so we are having to shift a little bit to say, hey, you know, you're really focused on moving people through the purchase path, getting that cart to move into checkout, getting that checkout to move into a purchase. So we do have to balance a little bit. And sometimes certain voices are louder than others when it comes to making that balance. We're a small team, and so we have to be choiceful and pretty strategic about how we take those on.
But I think truly, in the last couple of years, where we've tried to create efficiency in our martech stack and create efficiencies with the platforms and tools that we're using, we're able to take an insight and apply it more broadly, a little bit more easily than we have in the past. And so that rising tide can then benefit all of the brands, in the ways where it's appropriate to benefit all of the brands. Then, for those that need a more specific focus or a more specific outcome, we can focus our attention really deeply there, while we've also brought everybody up to that same sort of baseline experience that we're able to identify.

Love that. How important is speed, when you start thinking about these tests, and when you start thinking about how quickly you're able to, like... look, let's be honest, I remember back in the day. This is going to prove how old I am as a marketer. I worked in, you know, skincare, and I also worked in professional sports. And I can remember good old focus groups, right, where you were like, let me get 20 people to sit over there in uncomfortable chairs with bad apple juice and maybe some of the butter cookies that came out of the tin. Like, Jess, come on. We remember this, where you're like, oh, yeah,

I remember

projects. Yeah, I was

right, yeah. Like, can you? Can you? No, no, find the button. No, no, fine, not the real but no, you're not your shirt. But like, we remember those days, right? Like, I still have pain from those days. So how important is it now in this digital space? Well, we are expected to move so quickly, right? Everyone's like, Well, what do you mean? You can't just change it. Well, what do you mean? How important is speed and scale? Just one come before. Like, is it more? How do you start to look at those things? Yeah,

I think, not to kind of call out UserTesting again here, but UserTesting very much helps us with that. Our ability to test early and often through the UserTesting platform, I think, has been super helpful for us. I know we have stats somewhere in our case study showing that we've been able to grow exponentially and pull a lot of those consumer insights in much easier and much quicker, because we can test much faster. And I think that also just speaks to our consumer: we are not that very, very tiny business that has a very niche consumer. We want to talk to homeowners. We want to talk to those who are in their lawns and gardens, and so it's a little bit easier for us to recruit those folks and bring them in. So our speed to insights has definitely increased. I know I can't speak to some of those long days in a focus group room, but fun, Hailey,

you don't want to. You don't ever want to. I

went to one, I went to one of my lifetime with Jess, and I will say, we work, we work much more efficiently and faster now. So

I did one on sunscreen, and it's like a day and a half I don't get back in my life just I'm just saying it was not good. Oh my gosh. Well, you know, there's two questions I want to leave us here and share with our audience, because I love these best practices, and I love the things that you guys are sharing, and I think that these are, I mean, you guys look at UX, you guys look at the digital experience, you look at this. But I think that so much of this can be actually applied, even in when we think about decision making for executives, and the velocity and the speed at which we need to make decisions about our businesses, we need to be able to bring in all the data, it bring in all the information, and to bring in that empathy, like you guys said, like, I love thinking about how you can apply that for a business leader and an executive, trying to move that concept of decision velocity forward. What are some of the things that you guys have noticed really make that difference? Like, are there any things that you would share with your colleagues and your peers across the CX ecosystem of if you could take this into your leaders three rungs up, you know, at the very top of the org, these are the types of things that we can and should be delivering specifically to accelerate decision making, anything that you guys would share?

Yeah, I think you talked about speed, and having that speed. We are obviously in a very seasonal business, right? Spring is our Christmas. What the holidays are for a lot of other CPG and online retail, that's our spring, and I love it. Having an answer in spring, for spring, to impact spring is really important. And so there's that contrast from the olden days to now, where Hailey can turn a study around in 24 hours, essentially. And I think that's where I can take a very specific question that an executive has, a very specific or nuanced hypothesis that someone in leadership has, and we can run it, we can answer it, and we can get that insight back to them to inform it pretty quickly. Some of the things that we're often doing in the middle of that process are helping them massage their hypothesis too. Like, well, you know, I think that people don't care about weather and how it impacts their seeding project, their grass seed project. And so we're saying, well, is it really about weather, or is it about temperature, or maybe about how long it's going to take to see results, or whether they have to water that much? So we're trying to help understand the boundaries of the question that we're trying to solve, or identify at least the rough shape of the hypothesis that somebody has. And then we can come at it really iteratively, and say, we asked that question, here's the response that we got. And we can ask two or three more questions too, to say, well, actually, hey, you know, they talked mostly about watering. Watering was their real concern. And so we can then spin it into another, and into another. That's a great way, I think, that we can help inform some of those leadership decisions that they're having to make, which are often so, so quick, in a way that we probably couldn't before.

I love that. I love that. But then this leads to my next, like, almost second-to-last question. I'm going to count this as part B to that last question I asked. Okay, just be honest, it's just between us girls. I mean, no one else is gonna see this. Has the ability to bring that research and that insight so quickly also kind of opened the floodgates, though, for folks across the organization to be like, I too would like you to test this? Where now all of a sudden everyone's like, I would like you to test blue versus dark blue, and you're like, that might not be a priority today.

Oh yeah.

It's ironic that you bring that up, because we just had an example of that this week. We are so dying to get our research in front of people, to share what we're finding and the insights that we have and build that empathy. But once you share it, the requests are not going to stop flooding in. So I think we're trying to figure out that balance right now, and that's where priority comes in, and seasonality, especially for us: how do we best balance these things within given seasons and within given priorities? Because we want to help everyone. We want to bring all the insights and build as much empathy as we possibly can. But it definitely comes with the flood coming in, and we get all the intake requests possible. Everybody wants a new question. Okay, so

most important question I'm going to ask you guys to share with our audience, like, most important question: what should we be growing? Tomatoes, cucumbers? Like, zucchini and I, we get along, but then zucchini goes crazy and now I've got, like, 9,000 bushels of zucchini and all the little thorns. Who knew zucchini and cucumbers were actually painful? I did not know this when they were just at Whole Foods. I did not know that I could get wounded by a vegetable. But there I am. Okay. So what are you guys growing? Come on.

I'm growing both tomatoes and cucumbers. There's this gorgeous, cute little variety from Bonnie Plants. It's called the Boston Pickling cucumber. They're just little babies. And oh my goodness, do a refrigerator pickle at home. They're adorable. You've always got to grow to the cuisine. You have to grow to what you like to cook, right? And so I love to cook Italian, and I love to cook, like, Mexican. And so

basil, you're like, three organo zone, like, you got a whole like, yeah, Herb era going on in the backyard,

yeah? But only plant what you're going to be excited to cook with. Otherwise, you're just that person that's shoving cucumbers on your neighbors and saying, Please, will you take this?

Yeah, looking alive. That was me.

I was like, I think I can grow bell peppers. I don't like bell peppers, yeah? So, like, I like them in one place, and that's a pizza, and that's it. Like, I don't and I only like one color. So it was just like, This is not Yeah, this is not good. I should not be doing this. Everyone got bell peppers. Like, I apologize now to everyone, pepper, but yeah, so. And then inside play, is it like, is there like, a favorite indoor like, do you guys have any indoor plant, like, preferences, or anything that you're telling us all go, like, Hey, y'all go out and get this, like, little fiddle thing.

I'm more of that indoor girly Jess. Jess takes care of the outdoors. She gets the basil. She makes me pesto. It's perfect. Love. I'm more of the indoor plant girly, where you unfortunately can't see any, but I'm a big window right in front of all the windows, but none behind me. Um, I love a good round tail snake plant. Let's see if I can drag her over real quick. I have one that is thriving. Yes, look at that guy. Yes. I'm a big, round tail snake or just normal snake. I don't discriminate. Love them all.

I'm the idea I got this guy because I read an article that sent me down a rat hole to a NASA study that showed how many allergen particulates this little guy can pull out of my air, and I'm like, every room will have one. Like they're everywhere in our house. It's ridiculous,

yeah, and they're also the easiest. So I'll

take it. I love it. I love it. Oh, my God. Thank you guys. Thank you guys so much. So many best practices, so many great stories. Congratulations, all the stuff you guys are doing.

Thank you. This has been great fun.

I'm gonna pause there, because that's where our editor is gonna go hack up the first 28 minutes of our conversation. I'm gonna ask you guys a couple more questions if you still have a little bit more time here. And I'm sorry, I think we may be going over. So I do apologize if you guys have to run. I totally understand, and I'm happy to send these over to you written. But if you don't mind, I'm going to ask just a couple more of these that Larry has sent over for the customer insights. Would that be okay? Yeah, yeah, not terribly far over, but a bit. Yeah. I'm just going to ask you a couple of these important ones, and then maybe if there's a couple that Larry's like, no, I really need this, we might just email them back to you. But I think that the other stuff is awesome. When it comes to metrics and how the team is being measured, how your success as a group is being measured, what are you finding that those business metrics for CX and for UX are, and how is that getting rolled up into other business-driving metrics?

Yeah, engagement is often what we're reporting on, and also brand perception and overall brand health. We've got partners and siblings and cousins in our departments and organizations that are a part of email marketing and social media, and so all of those channels are coming together to really drive a personal relationship and engagement with our consumers.

Awesome. Love that. Love that.

So this is what proves that Larry listens to all the calls, because here's the question. What's interesting about Scotts is how CX directly aligns with the broader corporate strategy. Your CEO, in the company's latest conference call, cited market share gains and unit point-of-sale growth. How do you see your digital properties in terms of that direct sale? But also, how do your properties aid that in-store buying process? Because, again, for a lot of organizations, they're run by two separate teams, but the insight that feeds the experience also comes from two separate teams. You guys seem very aligned across all of that.

Yeah, and I think when it comes to our websites, it kind of goes back to education and content. So if you are in that aisle and searching for something, like what grass seed should I use, or what fertilizer should I put down now, and we intercept you on our own site, we'll educate the hell out of you and give you all the resources you need. And I don't care if you purchase from us or if we push you over to Home Depot or Lowe's to go buy on their websites or to go to their retail stores. It doesn't matter. So I think that's really where we intercept and we grab them and build the confidence and educate as much as we can, and that's great. And if you go somewhere else, that's also fine as well. So

I like that confidence seems to be a major currency for the that experience strategy for you guys that it's, you know, how are we making that exchange of content for confidence? And everyone can kind of bring that back into that virtuous cycle. I really like that. That's really well thought out. Okay, so Larry would like to know if you can share, how are those efforts to drive direct to consumer how's that going, and what are some of the lessons maybe, that you guys have learned through, whether it's user testing, whether it is through some of the other ways that you are testing and driving metrics. From that UX perspective, how are things going and where are you guys pushing and heading, let's say in the next year.

Yeah, direct to consumer, I think is so, so interesting. There are certain products for which direct to consumer, either through our own website or through our retailers websites. It feels so natural and such a wonderful fit. There are others where we have to do a little bit of educating on like, Yeah, this is something you can totally get it shipped to your house. And in fact, you want to subscribe to it, and we'll just send it to you every three months so you don't have to worry about it. So when you need to repot that plant and keep it from, you know, growing too far, yeah, that we can help make that really seamless and easier for you. But I think that also we're, we're helping drive to retailers and really smart ways helping, like Haley mentioned, intercepting folks and pushing them to retailers that are a good fit for them, and even if they've come to our site saying, Hey, you have a great store that's just down the road that can deliver this for You to do it today curbside, I think that. And you know, some of those buy online, pick up in store, kinds of solutions that are available are a really, really great match for our category, and with certain retailers being able to just add on those gardening supplies when you get your grocery order or when you're there to pick up the diapers and everything else that you need at the store. That just taking care of the weekends projects and getting all in one stop is a really great way for us to sort of show up for them.

So making it really convenient, making it easy, and giving that perception of ease when taking on those projects, I think, is good. But yeah, growth in direct to consumer, it's going well. I think that we're leaning in. We have really amazing omnichannel and shopper marketing teams that are doing amazing things with some of these retailers and pushing the boundaries on a lot of stuff.

On

CR Conversations

<iframe width="560" height="315" src="https://www.youtube.com/embed/BClBs-OE7bc?si=UkqKUSLi44pDgfOg" title="YouTube video player" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share" referrerpolicy="strict-origin-when-cross-origin" allowfullscreen></iframe>

In a statement, T-Mobile CEO Mike Sievert said:

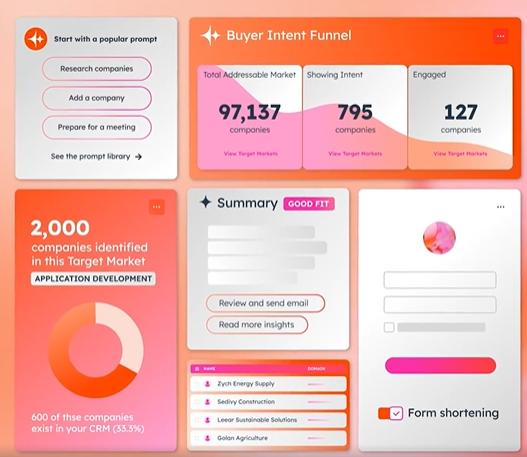

In a statement, T-Mobile CEO Mike Sievert said: The tandem of Breeze and Breeze Intelligence, outlined in

The tandem of Breeze and Breeze Intelligence, outlined in