Microsoft launches AI agents for Dynamics 365, customization via Copilot Studio

Microsoft is adding 10 autonomous agents to Dynamics 365 and moving the ability to create agents into public preview in Copilot Studio.

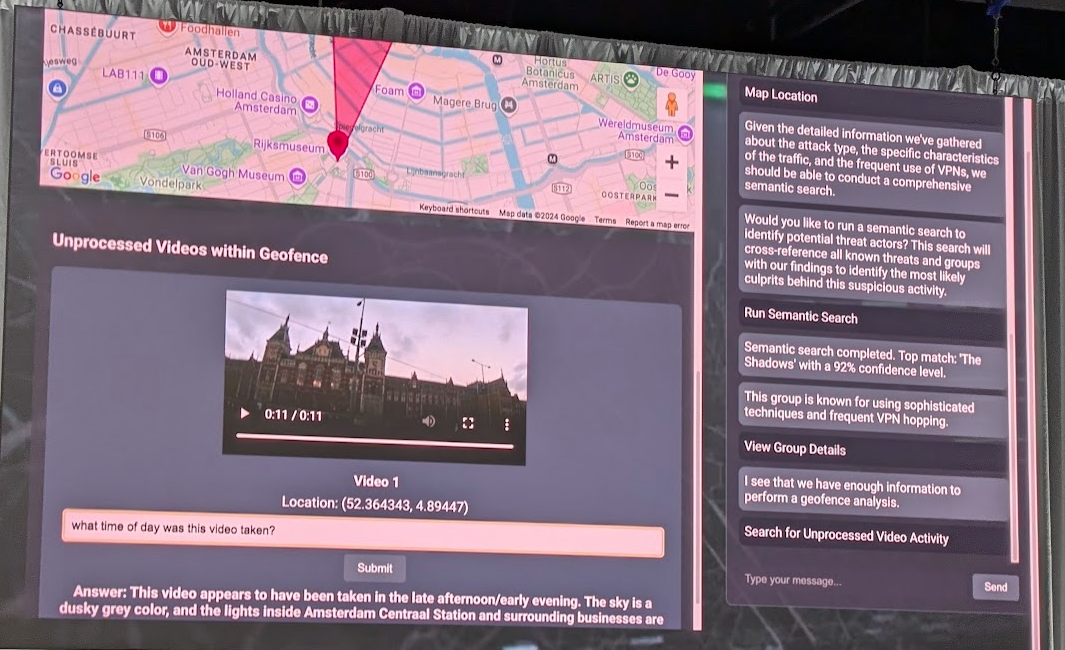

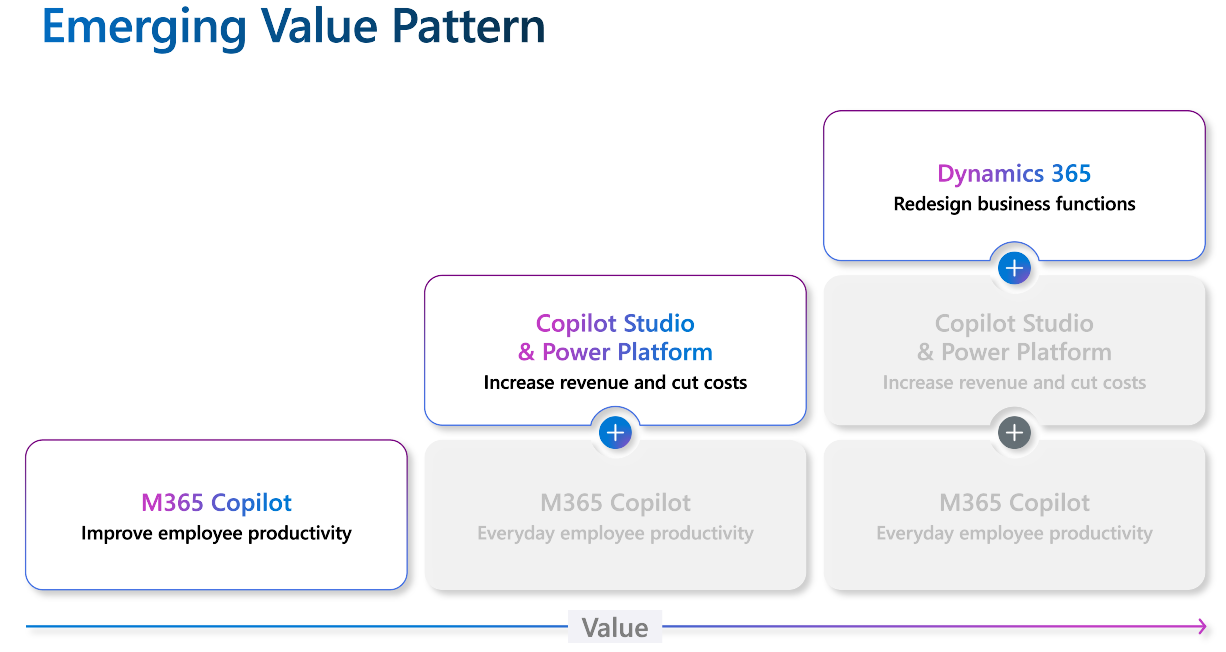

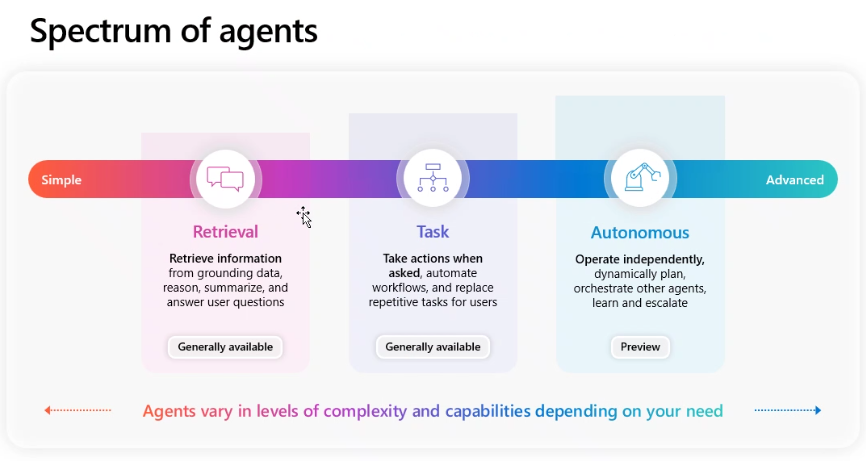

With the move, Microsoft is extending its Copilot stack with AI agents that can complete tasks autonomously. Microsoft sees agents as the new apps of the generative AI ecosystem. Copilots are how you'll interact with agents, which will work on behalf of an individual, team or function to execute processes.

Agentic AI has been a recurring theme of late as enterprise software vendors see agents as a way to automate work and processes. The theme reached a crescendo with Salesforce's Agentforce debut, but had been picking up for months before that.

Microsoft argued that agents will easily outnumber employees and be effective on its platform because they can tap into work data in the Microsoft 365 Graph and add context via its platform and systems of record.

Microsoft's plan is to deploy AI agents in common processes, starting with enterprise resource planning. Microsoft is betting that its Copilot and agent infrastructure can autonomously log time sheets, close books, prep ledgers and file expense reports. Over time, Microsoft argues, Copilots will effectively become the user interface across enterprise software and its various silos. AI agents, triggered by Copilots, will automate and execute business processes. There will be as many agents as there are business processes.

Early adopter customers include Clifford Chance, McKinsey & Company and Pets at Home. Microsoft said it will release pricing details as its AI agents near general availability. Software vendors have been examining new pricing models for agents because per-seat plans don't fit them well.

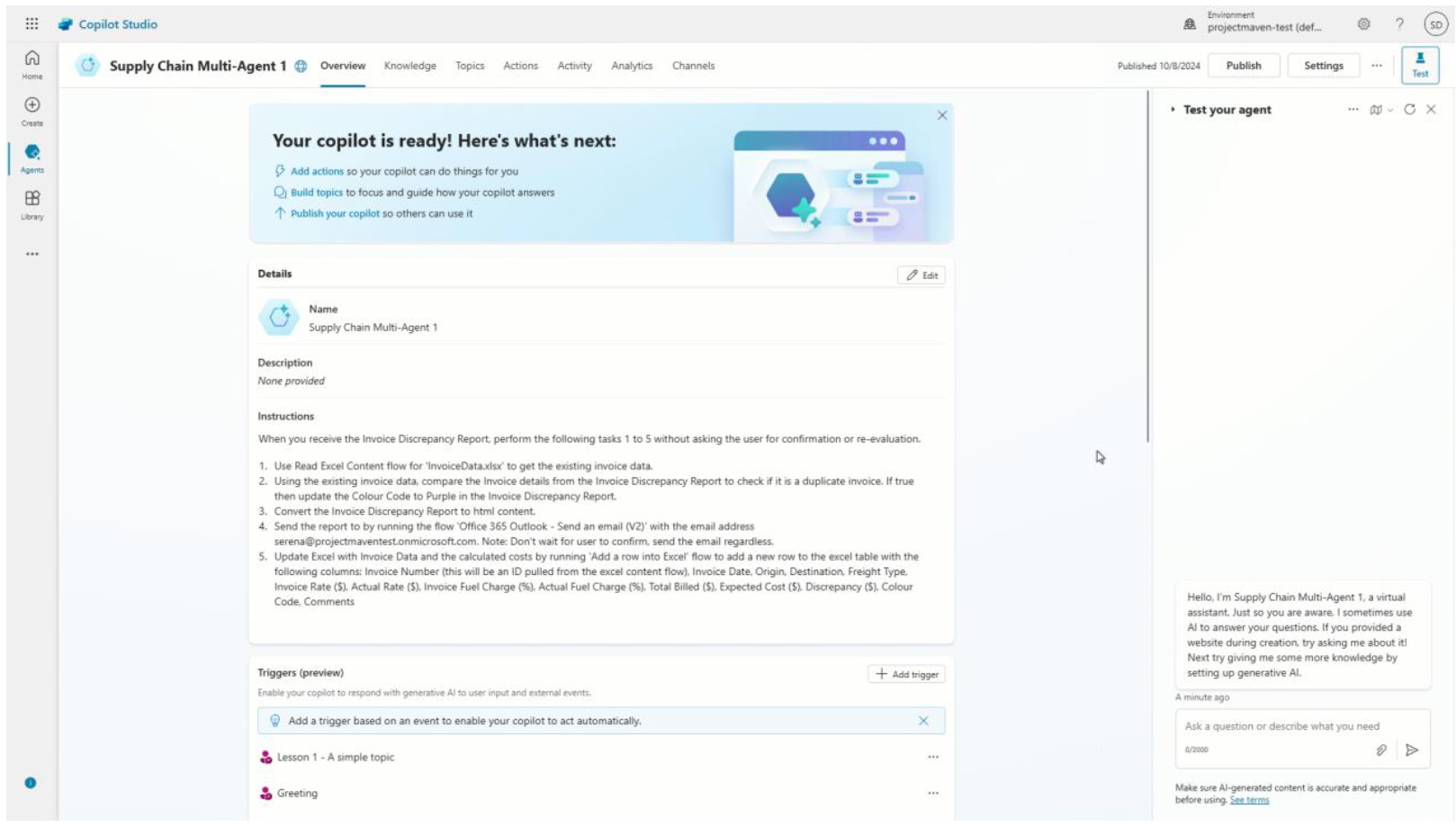

The new autonomous agents in Dynamics 365 cover the sales, service, finance and supply chain categories to start. Microsoft said it plans to create more agents throughout the year. "Our goal is to drive more value for our customers across their biggest areas of pain in their processes," said Stephanie Dart, Senior Director of Product Marketing for Microsoft Dynamics 365.

Microsoft's first batch of agents includes:

- Sales Qualification Agent, which will research leads, prioritize opportunities and guide outreach with personalized emails and responses.

- Supplier Communications Agent, which tracks supplier performance, detects delays and responds to free up procurement teams.

- Customer Intent and Customer Knowledge Management Agents, which will help call centers facing high-volume requests and talent shortages resolve problems autonomously.

- Sales Order Agent for Dynamics 365 Business Central, which automates the order intake process from entry to confirmation.

- Financial Reconciliation Agent for Copilot for Finance aims to reduce the time spent closing the books.

- Account Reconciliation Agent for Dynamics 365 Finance is designed for accountants and controllers and automates the matching and clearing of transactions.

- Time and Expense Agent for Dynamics 365 Project Operations manages time entry, expense tracking and approval workflows autonomously.

- Case Management Agent for Dynamics 365 Customer Service automates case creation, resolution, follow-up and closure.

- Scheduling Operations Agent for Dynamics 365 Field Service helps dispatchers optimize technician schedules and adjusts for changing conditions throughout the workday.

These out-of-the-box agents will be complemented by custom agents built in Copilot Studio, with guardrails, best practices and controls in place. Richard Riley, General Manager of Power Platform Marketing at Microsoft, said Copilot Studio has the same compliance and security capabilities as the company's Power Platform, specifically Power Virtual Agents.

In addition, Microsoft's AI agents are designed with human-in-the-loop processes in mind.

Constellation Research's take

Martin Schneider, analyst at Constellation Research, said:

"The agent building tools in Copilot Studio and the 10 out-of-the-box agents are a great way for technical and non-technical users to begin exploring the use of autonomous agents inside their Dynamics environments. But I think the really interesting bit inside these announcements is the fact that Microsoft has added agentic AI to its ERP offerings.

This is a smart move for two reasons. One, the data inside ERP systems is typically more complete and of higher quality than in CRM systems (where we see the bulk of AI agents being put forth). That means the agents have more reliable and accurate insights on which to act. Second, the use cases for agentic AI inside ERP provide more immediate and measurable value. Many of the tasks agents will be performing are common, repeatable and specific - so taking them off a human’s plate drives immediate productivity. But also, by doing these tasks incredibly quickly, and at scale, larger companies can invoice and bill clients faster, close books faster, take payments, etc. This creates an immediate benevolent cycle of shortening sales and revenue collection and recognition cycles, which has the potential to increase cash flow and bottom-line metrics in significant ways."

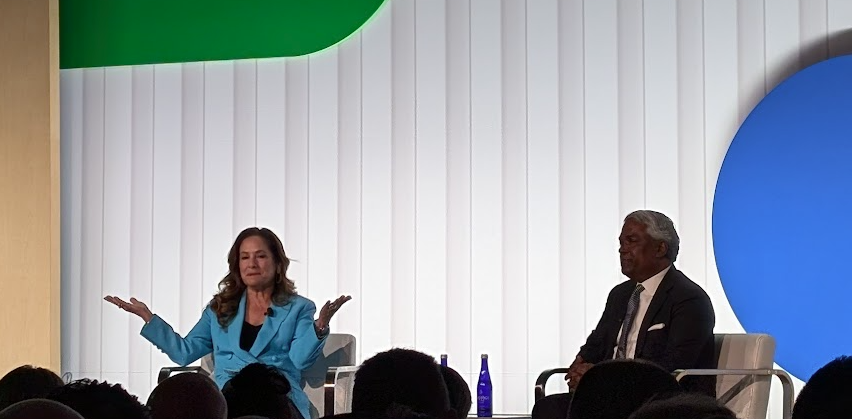

Speaking at the Google Public Sector Summit 2024 in Washington DC, Saltzman (right) hit on multiple themes that apply to the public and private sector, leadership and innovation within a large organization. Here's a recap of the Google Public Sector Summit: