Google Public Sector lands new clearances for Gemini, authorizations for Air Force Cloud One

Google Public Sector said Gemini in Google Distributed Cloud for secret and top-secret workloads will be available in early 2025. The two-year-old unit also announced key customer wins, including NIH STRIDES and CalHEERS, as well as a Federal AI Solution Factory with Accenture. Google Cloud also won new authorizations for Air Force Cloud One to provide cloud services to the Department of the Air Force.

At its Google Public Sector Summit in Washington, DC, Google Cloud laid more groundwork for government AI workloads. The summit featured a bevy of AI leaders across federal agencies as well as Google Cloud CEO Thomas Kurian. The event comes as each federal agency is required to fill a Chief Artificial Intelligence Officer role by the end of the year under the White House Executive Order on AI.

With government agencies going multicloud, Google Public Sector is positioning itself to be an AI provider that can adapt across hybrid cloud deployments and multiple models with Google Cloud services such as BigQuery and Vertex AI. A demo showcase will highlight how Google generative AI tools can be used for everything from disability claims processing to research grants to geospatial applications and edge computing.

- Google Public Sector Summit: 9 takeaways you need to know

- Google Public Sector: A look at the strategy

In a keynote, Google Public Sector CEO Karen Dahut said more than 1,000 public sector leaders attended the summit. "The public sector is ready to adopt the latest generative AI technology with the proper guardrails built in," she said. "We are demonstrating what's possible now."

Dahut cited multiple Google Public Sector customers including the US Air Force for preventive maintenance, local governments such as Dearborn, Michigan for citizen services, and the Department of Defense, which has built an AI-driven microscope to be deployed at military treatment centers.

Here's the rundown of what was announced.

- Gemini in Google Distributed Cloud Hosted and its clearance for secret and top-secret workloads mean public sector agencies can build out AI agents across departments for workflows, code development and cybersecurity.

- Google Public Sector has achieved Impact Level (IL) 4 and IL5 Authorization to Operate (ATO) for Air Force Cloud One. Air Force Cloud One is a contract vehicle for providing cloud services to the Air Force. The authorization, which builds on top of Google Public Sector's FedRAMP authorizations, means the US Air Force will be able to access Google Cloud for infrastructure and applications.

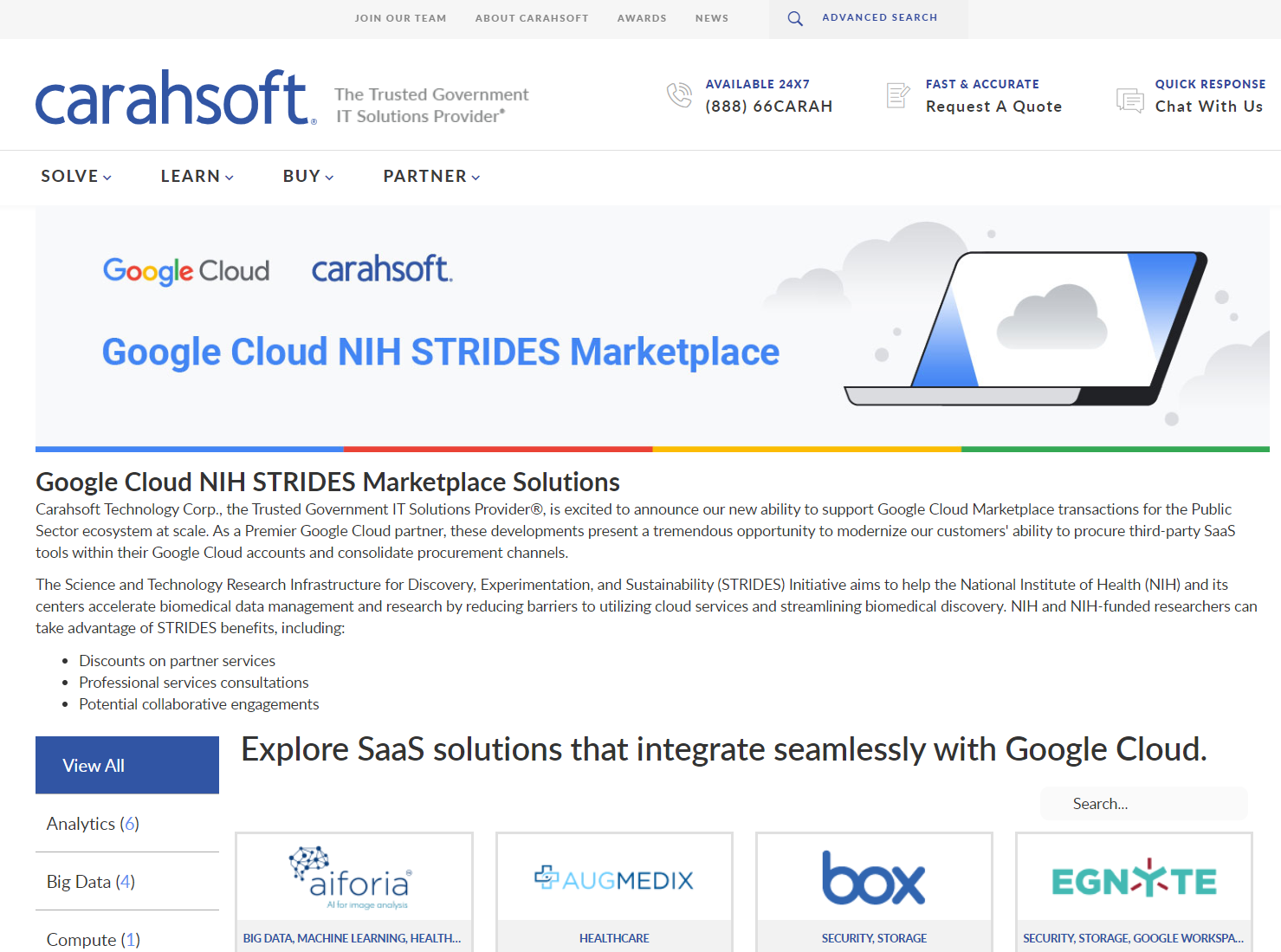

- National Institutes of Health STRIDES Marketplace. Google Cloud and 11 independent software vendors launched the Google Cloud NIH STRIDES Marketplace, which will give NIH scientists the ability to find, purchase and deploy services such as compute, storage, analytics, AI and machine learning for biomedical research. The marketplace's inaugural partners include Redis, Box for Life Sciences, Augmedix (a Commure company), Sorcero, Egnyte, MongoDB, Weka.io, Form Bio, Red Hat, Rhino Health and Aiforia.

- Deloitte and Google Public Sector will deploy Google Security Operations to secure CalHEERS, the State of California’s health benefits exchange system. CalHEERS runs Covered California, the state's health insurance marketplace. Google Cloud will provide its suite of cybersecurity tools for threat detection, incident response and security analytics with built-in Gemini AI. Covered California said in April it would use Google Cloud's Document AI.

- Google Public Sector expanded a partnership with Accenture to launch a Federal AI Solution Factory to prototype and pilot AI use cases for agencies. In addition, Slalom Solution Factory and Google Public Sector expanded a partnership aimed at US state and local government and education use cases.

- The Accenture and Slalom Solution Factory partnerships landed as Google Public Sector enhanced its Partner Program, which includes improved incentives, more training and co-marketing support, accelerated go-to-market and clear delivery frameworks.

- Google said it will dedicate $15 million in new Google.org funding to the Partnership for Public Service and InnovateUS to upskill government workers.