Oracle AI World 2025: Autonomous AI Lakehouse, AI Data Platform launched

Oracle launched Oracle Autonomous AI Lakehouse and Oracle AI Data Platform as it looks to extend its database dominance into the broader AI platform market.

The news, announced at Oracle AI World in Las Vegas, highlights Oracle's long game, according to Constellation Research analyst Michael Ni. With Oracle Autonomous AI Lakehouse, Oracle aims to open up multiple clouds and data formats to existing customers rather than force enterprises to migrate.

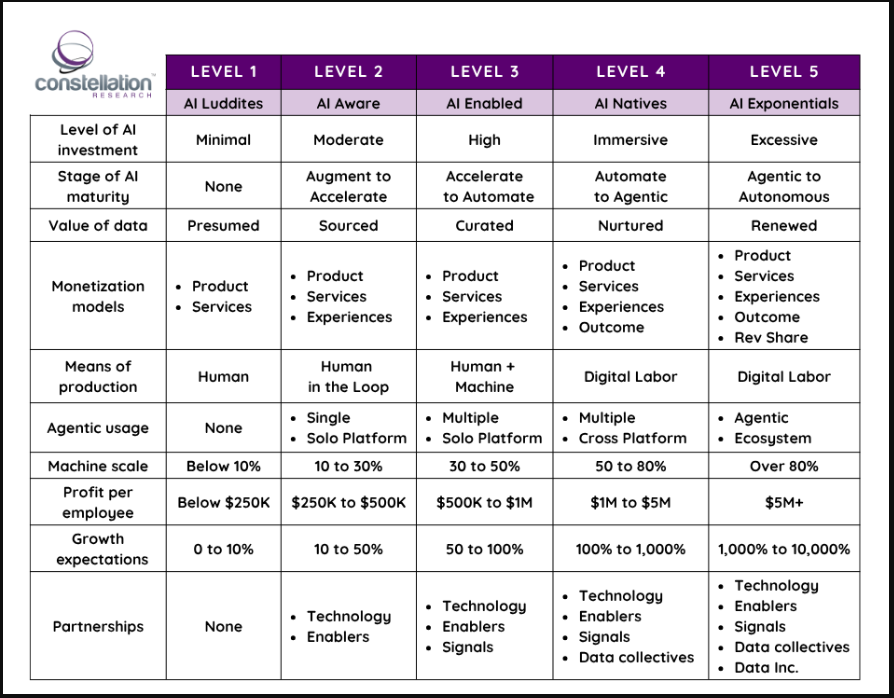

"This is Oracle’s long game in motion: turn every database into an AI engine and every AI engine into an autonomous decision platform. For customers, it means less stitching, less tuning, and faster time from insight to action," said Ni.

For the Oracle AI Data Platform, Ni said Oracle is positioning itself for the time when enterprises grow weary of stitching together platforms. "Oracle’s timing is classic: let competitors burn cash in the early AI arms race, then crash the party when customers demand ROI and compliance. As the market pivots from experimentation to operational AI, buyers are tired of stitching tools and policing governance," said Ni. "Oracle's unified data and agentic platform is positioned for that consolidation wave where control finally matters as much as compute."

- Holger Mueller: Oracle Makes It 4 for 4: Oracle Database@AWS Is Generally Available | Oracle Solves Decades-Old DBMS Challenges and Future-Proofs Oracle Database

- Stargate ramps as OpenAI, Oracle, Softbank outline 5 new data centers

- Oracle names Magouyrk and Sicilia co-CEOs as Catz moves to Vice Chair

- Oracle Q1 misses, but sees OCI revenue surging over next 4 years

Here's a look at what Oracle announced at Oracle AI World.

Oracle Autonomous AI Lakehouse

Oracle announced Oracle Autonomous AI Lakehouse in a move that combines Oracle's Autonomous AI Database with Apache Iceberg so data can flow seamlessly for analytics and AI.

In addition, Oracle launched Autonomous AI Database Catalog, which aims to be a "catalog of catalogs" that can combine enterprise data and metadata across other catalogs and platforms. Oracle added that its Data Lake Accelerator can speed up large-scale queries across Iceberg tables by scaling the networking and compute capacity available to Autonomous AI Database.
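To make the "catalog of catalogs" idea concrete, here is a minimal Python sketch of a federated index that collects table listings from several source catalogs and resolves a table name across all of them. It is a toy model under stated assumptions, not Oracle's implementation or API: the class names, fields, and sample tables (`TableEntry`, `FederatedCatalog`, `build_demo_catalog`) are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TableEntry:
    """One table as reported by a source catalog (illustrative fields only)."""
    catalog: str       # source catalog name, e.g. "glue" (hypothetical label)
    namespace: str     # schema or database inside that catalog
    table: str         # table name
    table_format: str  # open table format, e.g. "iceberg"


@dataclass
class FederatedCatalog:
    """Toy 'catalog of catalogs': one index over entries from many sources."""
    entries: list = field(default_factory=list)

    def register(self, catalog, tables):
        """Add (namespace, table, table_format) tuples from one source catalog."""
        for namespace, table, table_format in tables:
            self.entries.append(TableEntry(catalog, namespace, table, table_format))

    def find(self, table):
        """Return sorted fully qualified names of every table with this name."""
        return sorted(
            f"{e.catalog}.{e.namespace}.{e.table}"
            for e in self.entries
            if e.table == table
        )


def build_demo_catalog():
    """Populate the index with made-up tables from three made-up sources."""
    fc = FederatedCatalog()
    fc.register("unity", [("sales", "orders", "iceberg")])
    fc.register("glue", [("raw", "orders", "iceberg"), ("raw", "clicks", "iceberg")])
    fc.register("polaris", [("finance", "invoices", "iceberg")])
    return fc


if __name__ == "__main__":
    # Looks up "orders" across every registered catalog.
    print(build_demo_catalog().find("orders"))
```

The point of the sketch is only that a single lookup spans every registered source; a production catalog would also have to handle access control, schema metadata, and synchronization, none of which is modeled here.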

Key points:

- Oracle Autonomous AI Lakehouse will have native support for Apache Iceberg and will integrate catalogs from Databricks Unity Catalog, AWS Glue and Snowflake Polaris.

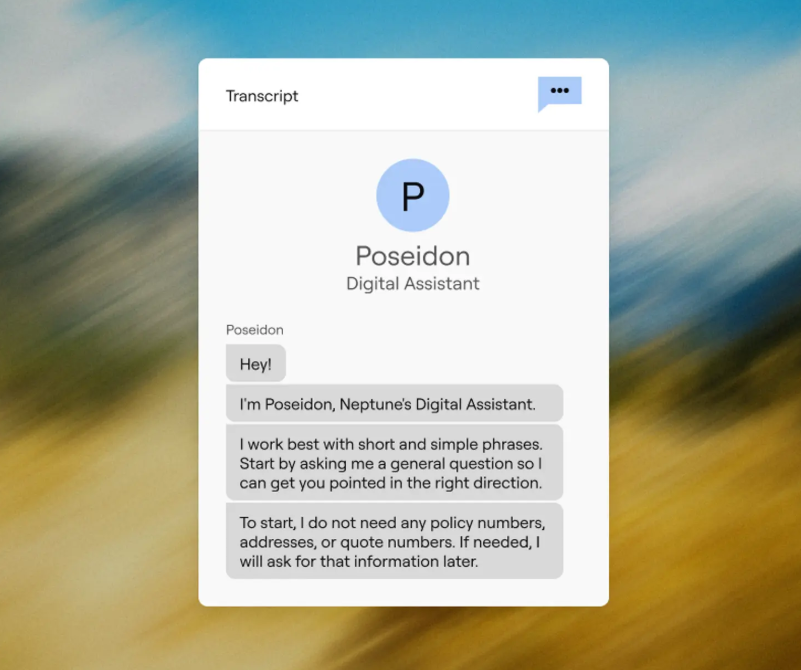

- The offering includes Select AI, which provides natural language-to-SQL and AI agent frameworks.

- Oracle's AI Vector Search is available to process Iceberg tables.

- Oracle Autonomous AI Lakehouse is available on Oracle Cloud Infrastructure, AWS, Microsoft Azure, Google Cloud and Exadata Cloud@Customer.

- Autonomous AI Database Catalog provides a unified view of data assets across multiple systems and clouds.

- Oracle Autonomous AI Lakehouse includes Select AI Agent, which gives customers a framework to build, deploy and manage AI agents in Oracle Autonomous AI Database, as well as a Data Science Agent and plug-and-play SQL access.
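For readers unfamiliar with vector search, which the key points mention in connection with Iceberg tables, the underlying idea can be sketched in a few lines of Python: rows carry embedding vectors, and a query returns the rows whose vectors are most similar to the query vector. This is a generic brute-force illustration using cosine similarity, not Oracle's AI Vector Search; the function names and the sample data are assumptions made for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, rows, k=2):
    """Return the ids of the k rows most similar to the query vector.

    `rows` maps a row id to its embedding. Ties are broken by row id so the
    result is deterministic.
    """
    scored = [(cosine_similarity(query, vec), row_id) for row_id, vec in rows.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [row_id for _, row_id in scored[:k]]


if __name__ == "__main__":
    # Made-up two-dimensional embeddings standing in for table rows.
    rows = {
        "doc_a": [1.0, 0.0],
        "doc_b": [0.0, 1.0],
        "doc_c": [0.9, 0.1],
    }
    print(top_k([1.0, 0.0], rows, k=2))
```

Scanning every row like this only works for small data; vector search products generally rely on approximate nearest-neighbor indexes to avoid a full scan.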

Ni said:

"Oracle’s Lakehouse didn’t appear overnight. It’s the convergence of its autonomous database, Iceberg support, and AI Select stack over two years. What's new is the parts operate as a single, self-managing AI fabric.

Under the hood, Oracle’s Lakehouse still runs on its Autonomous Data Warehouse (ADW). What is new is that the operating model has evolved. By fusing Iceberg, AI agents, and self-managing performance into ADW, Oracle has turned its warehouse into a full lakehouse runtime.

Databricks built speed. Snowflake built scale. Oracle’s building staying power fast-following the market, weaving lakehouse behavior into its existing autonomous database, and native AI capabilities. Showing up late doesn’t matter if you outlast everyone with an autonomous architecture that runs itself."

- Databricks Summit 2025: The Lakehouse Becomes a Decision Platform

- Databricks valued at $100 billion

- SAP launches Business Data Cloud, partnership with Databricks: Here's what it means

- Snowflake, Salesforce, BlackRock lead effort to standardize semantic data

- Snowflake Q2 earnings strong with revenue growth of 32%

Oracle AI Data Platform

According to Oracle, AI Data Platform is designed to connect models with enterprise data, applications and workflows. The goal is to combine data ingestion, vector indexing, semantic enrichment and AI tools to help enterprises scale.

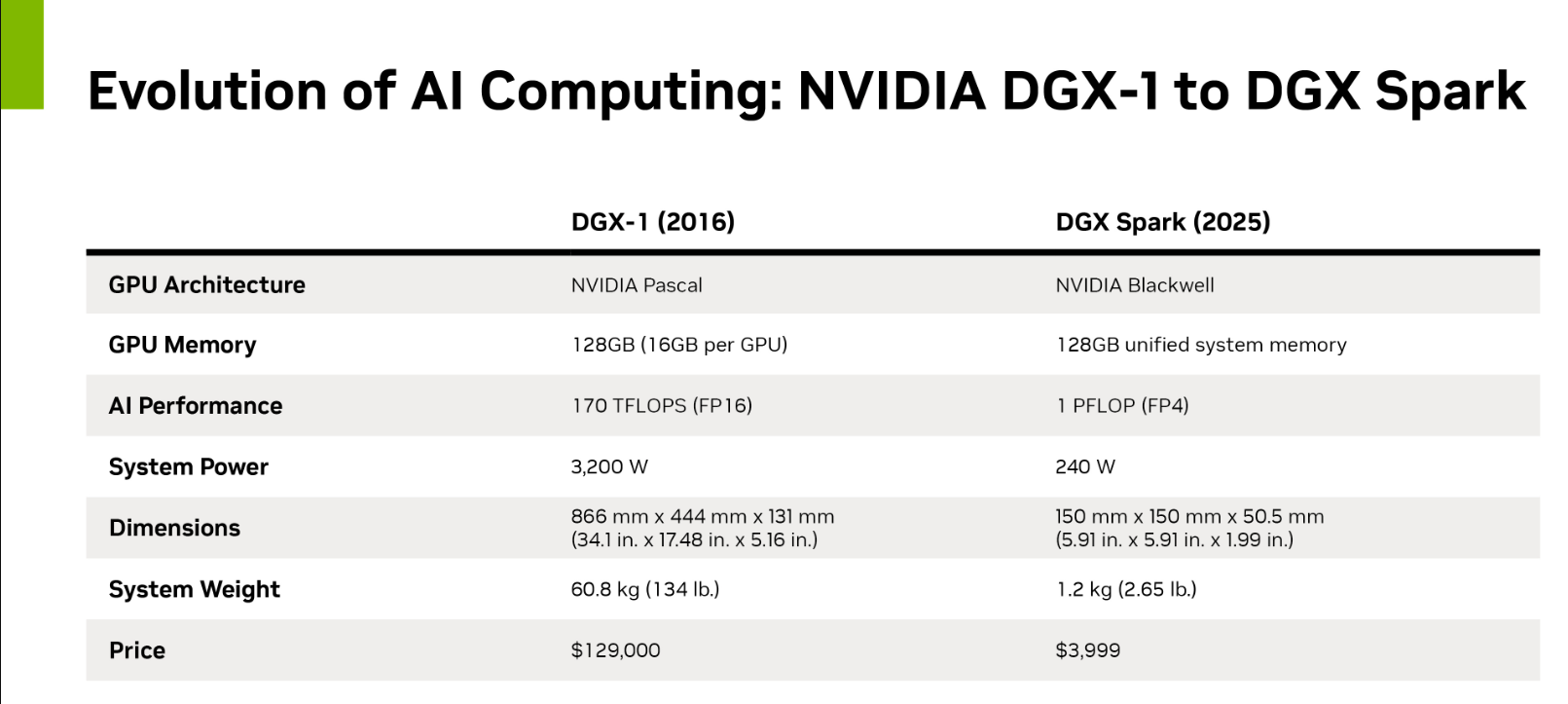

The AI Data Platform combines Oracle Cloud Infrastructure, Autonomous AI Database and Oracle's generative AI services, and it runs on Nvidia GPUs.

Key points:

- Oracle AI Data Platform can be used to create data lakehouses with open formats such as Delta Lake and Iceberg.

- The company said Oracle AI Data Platform has a unified view and governance across all data and assets.

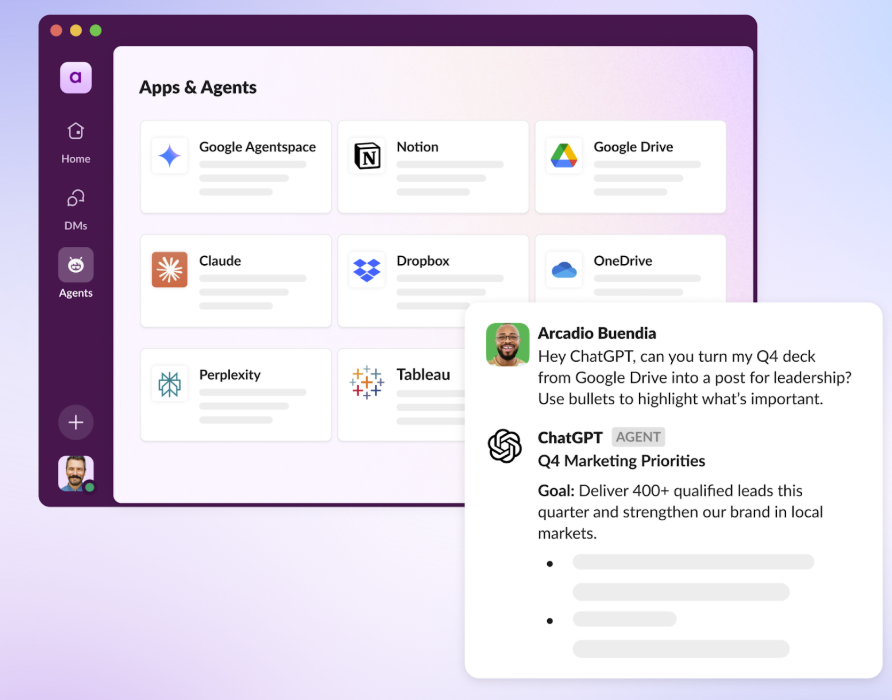

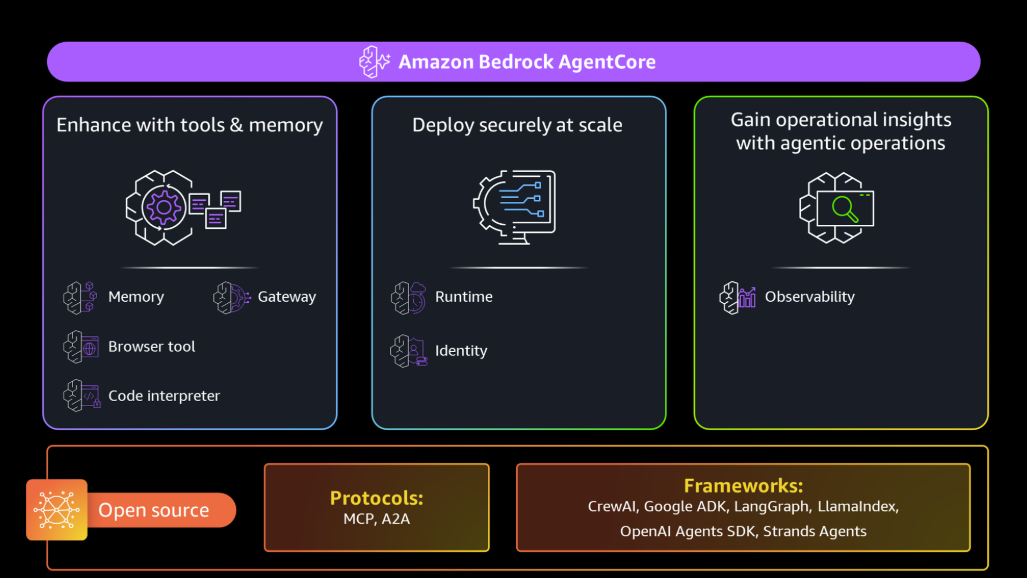

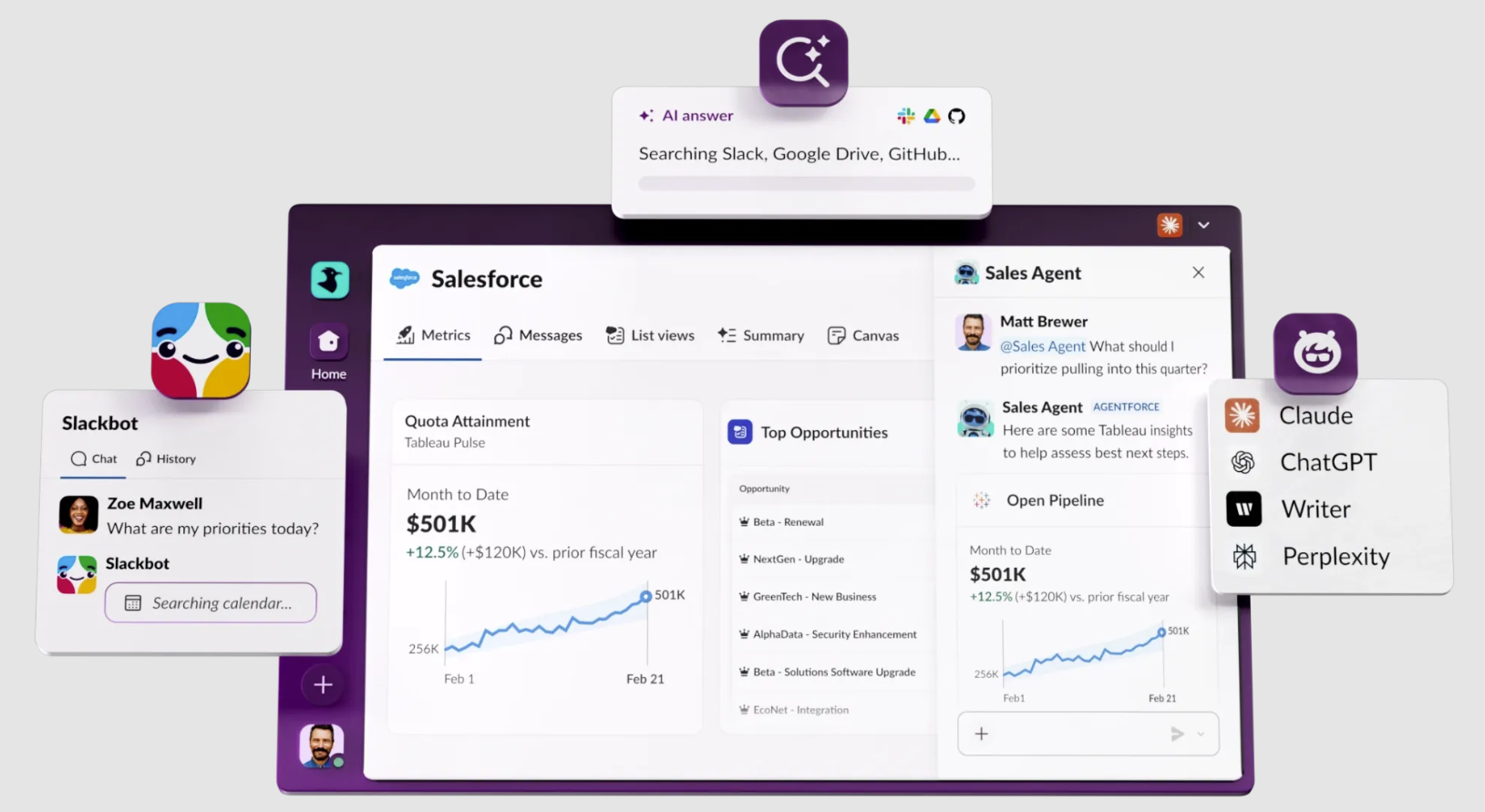

- Oracle AI Data Platform supports Agent2Agent and Model Context Protocol.

- Oracle AI Data Platform includes Agent Hub, which is an abstraction layer for managing agents.

- Oracle AI Data Platform is multicloud and includes Zero-ETL and Zero Copy features.

- Oracle AI Data Platform will be integrated into all major Oracle application suites via pre-built integrations.

- Global systems integrators including Accenture, KPMG, PwC and Cognizant said they will invest in resources to support Oracle AI Data Platform.
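As background on the Model Context Protocol support mentioned in the key points: MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The Python sketch below builds such a request. It shows the general message shape only and says nothing about how Oracle AI Data Platform exposes MCP; the tool name `run_sql`, its arguments, and the helper `build_tool_call` are hypothetical.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP-style JSON-RPC 2.0 request that invokes one tool.

    `tool_name` and `arguments` depend entirely on what a given server
    advertises; the values used below are placeholders.
    """
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # Hypothetical tool and arguments, for illustration only.
    request = build_tool_call(1, "run_sql", {"query": "SELECT 1"})
    print(json.dumps(request, indent=2))
```

In practice a client would first discover the available tools from the server and then send requests like this one over the transport the server supports.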

Oracle Cloud Infrastructure

Oracle Cloud Infrastructure (OCI) outlined an expanded partnership with AMD, new networking capabilities, multicloud universal credits and a Zettascale10 Cluster.

Here's a look:

- Oracle said it will be a launch partner for the first publicly available supercluster powered by AMD Instinct MI450 Series GPUs. The initial deployment will have 50,000 GPUs starting in the third quarter of 2026 and expanding in future years. OCI launched instances powered by AMD Instinct MI300X GPUs in 2024 and is adding AMD Instinct MI355X GPUs in its zettascale OCI Supercluster. OCI is using AMD's Helios rack design that includes AMD's integrated AI stack.

- The company said Oracle Acceleron, OCI's suite of network software and architecture, will get a dedicated fabric network architecture, multi-planar networking, converged NIC and zero-trust packet routing.

- OCI will get multicloud universal credits, a cross-cloud consumption model that lets customers buy Oracle AI Database and OCI services on any cloud. For customers, the move should simplify procurement and provide flexible terms and consistent contracts across AWS, Google Cloud, Microsoft Azure and OCI.