Oracle CTO Larry Ellison on AI use cases for healthcare, agriculture, climate change

Editor in Chief of Constellation Insights

Constellation Research

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...


Oracle CTO Larry Ellison is never short of words. In his annual keynote at Oracle's AI World conference (previously Cloud World), Ellison held court for nearly two hours and riffed on a bevy of big AI ideas.

A good chunk of Ellison's talk focused on Oracle Cloud Infrastructure's plans for a 1.2 billion-watt AI Brain, which would be Oracle's largest data center, with 500,000 Nvidia GPUs. Ellison also touted Oracle AI Data Platform, which will reason on public and private data.

Ellison said Oracle's two biggest opportunities are AI training and AI reasoning. Private data will enable more valuable models, and that data will stay private. He added that Oracle databases already contain most of the world's high-value private data.

"The big opportunity in AI training is upon us, and Oracle is a major participant in building data centers to do AI training. But the much, much larger opportunity, the one that will truly change the world, isn't the creation of the models themselves, the training of the models," said Ellison. "What will change the world is when we start using these remarkable electronic brains, and that's what they are. These are remarkable electronic brains to solve humanity's most difficult and enduring problems."

Ellison noted that AI will also build out its perception game in addition to reasoning. "It's called artificial intelligence as opposed to artificial perception, but it does perceive. It hears, it smells. Think about smelling. I mean the idea that you can pick up chemicals that are just drifting around in the atmosphere and figure out what those chemicals are. Dogs can smell cancer in patients," said Ellison. "We should be able to do that with AI, we should be able to. In fact, there's a project I know of called the dog's nose that I'm actually a part of, and we're building sensors. We're building sensors that can smell cancer or other illnesses."

According to Ellison, AI players are focusing on two flavors of models. The most familiar are reasoning models, but real-time models will be just as important. He noted that Google Cloud has Gemini as well as physical AI models. Elon Musk's xAI has Grok, which is a reasoning model, while Tesla represents a real-time model.

Here's a roundup of those problems that AI can solve. Some of these problems are aligned with the Ellison Institute of Technology at Oxford, which is expanding following another £890 million investment from the CTO.

Healthcare

Ellison, whose company counts Cerner as part of Oracle, is passionate about healthcare. Cerner has leveraged AI to rewrite its code base, but Ellison noted the rebuild also includes retooling accounting and HR systems designed for hospitals. "Hospitals are very unusual," he said.

AI-driven use cases for healthcare include:

AI-powered precision surgery. Ellison described AI robots that surpass human surgical capabilities through superior vision and coordination. For something like cancer removal, AI robots could eliminate some key steps where surgeons remove tissue layers and examine them under a microscope. "AI robots just don't play fair. Their vision is the AI, the vision on the robot and it is microscopic. They don't need a microscope to see individual cells and they can cut between a layer of healthy cells and a layer of cancer cells."

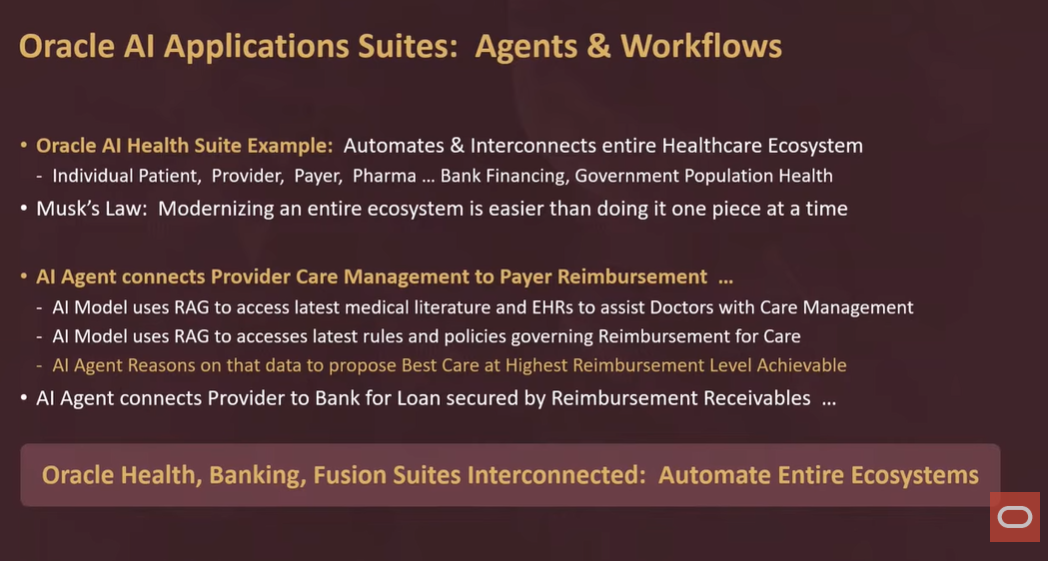

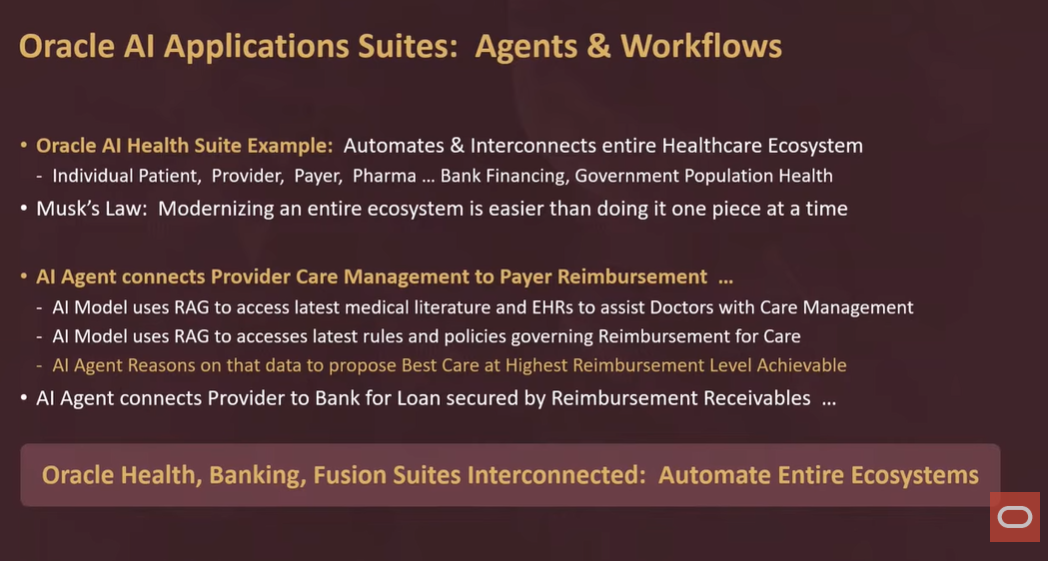

Integrated healthcare workflows. Ellison said there's a big opportunity to automate healthcare workflows. With retrieval-augmented generation (RAG), models can access the latest medical literature, patient test results, and clinical trial information. The AI can identify optimal care while ensuring full insurance reimbursement. That final point is key, since AI can be used to predict whether insurance will pay.

Smoothing out hospital cash flow. With better predictions for insurance reimbursement, hospitals will be able to get loans against receivables that have a 95% to 99% chance of being reimbursed. Ellison said there could be specialized banks focused on hospitals. "An AI agent can bank all of the information about a particular collection of reimbursements, assuring the bank that those reimbursements will adhere to all of the rules," said Ellison. "The clinic and the hospital will be reimbursed. You can discount a little bit, and the bank will then loan on those receivables."
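The arithmetic behind lending against receivables can be sketched in a few lines. This is only an illustration, not anything Oracle or Ellison described: the function name `loan_against_receivables`, the $1 million pool of claims, and the 2% discount are hypothetical, and only the 95% to 99% reimbursement range comes from the keynote.

```python
def loan_against_receivables(face_value, p_reimbursed, discount_rate):
    """Return (expected_collection, advance) for a pool of insurance claims.

    face_value    -- total billed amount of the claims (hypothetical input)
    p_reimbursed  -- predicted probability the claims are paid (0.95-0.99 per the talk)
    discount_rate -- haircut the lender applies (hypothetical, e.g. 0.02)
    """
    expected_collection = face_value * p_reimbursed
    advance = expected_collection * (1.0 - discount_rate)
    return expected_collection, advance


# Compare the low and high ends of the reimbursement range Ellison cited.
for p in (0.95, 0.99):
    expected, advance = loan_against_receivables(1_000_000, p, 0.02)
    print(f"p={p:.2f}: expected ${expected:,.0f}, advance ${advance:,.0f}")
```

The point of the sketch is that the more confident the reimbursement prediction, the closer the advance gets to the face value of the claims, which is why better predictions would make receivables easier to finance.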

Automating healthcare ecosystems. "If we really want to be successful in health care, we can't just automate hospitals and clinics. We have to automate the entire ecosystem," said Ellison. "You have to automate the patient, the provider, the payer, the regulator, the pharma companies, banks who finance the hospitals, and governments who regulate the hospitals and collect information from the hospitals. You have to automate the entire ecosystem. You do that and then you will get a truly modern, efficient healthcare system. And that's what we were after when we bought Cerner as a first step."

Ellison said one of the most interesting AI agents built by Oracle connects providers to payers. The agent comes up with the best possible quality of care that is fully reimbursable if the patient can't pay. "The AI model will provide information to doctors as the doctor tries to figure out the best possible care for the patient, then the AI model is also trained on the latest rules and policies. What's the best care and what's fully reimbursable? I have to train the model on all of the insurance rules," said Ellison.

The subtext here is that Ellison could use healthcare automation as a way to talk about layering AI agents throughout Oracle's Fusion apps. At Oracle AI World, Oracle outlined the agentic AI efforts in its applications, including a marketplace and AI agent studio.

Agriculture, carbon capture and sustainable farming

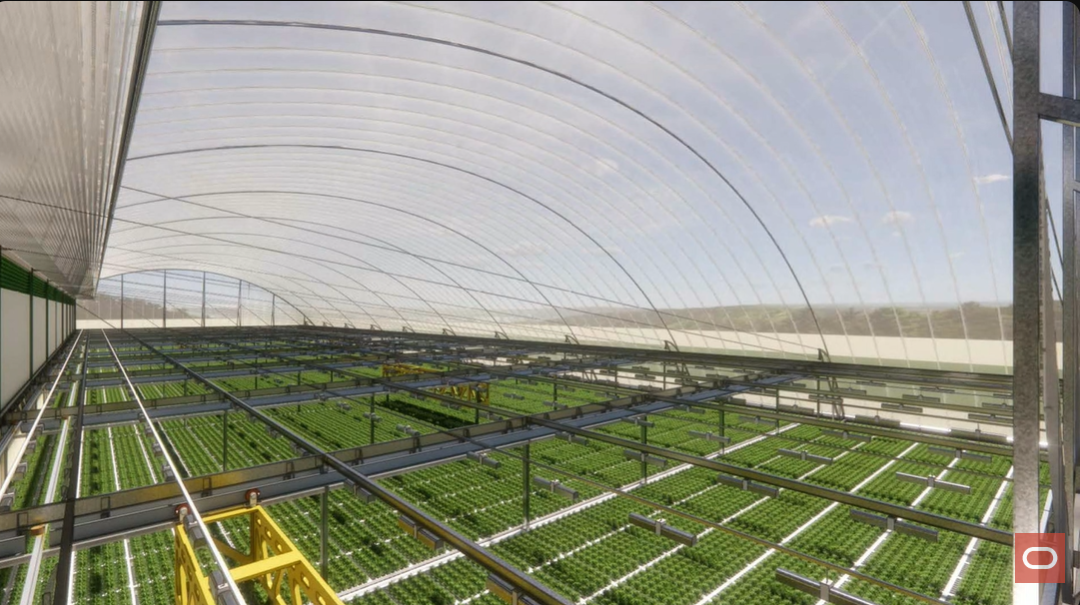

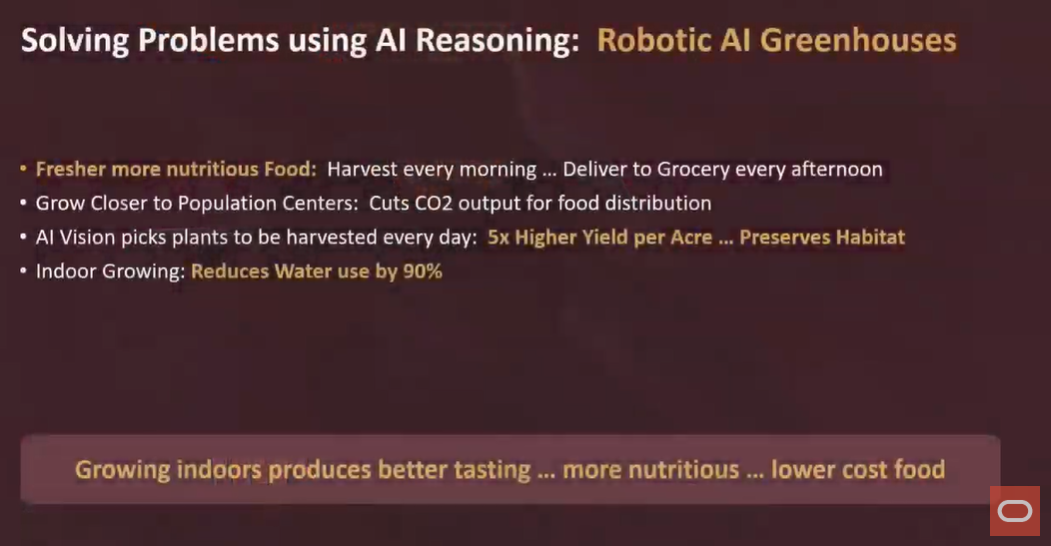

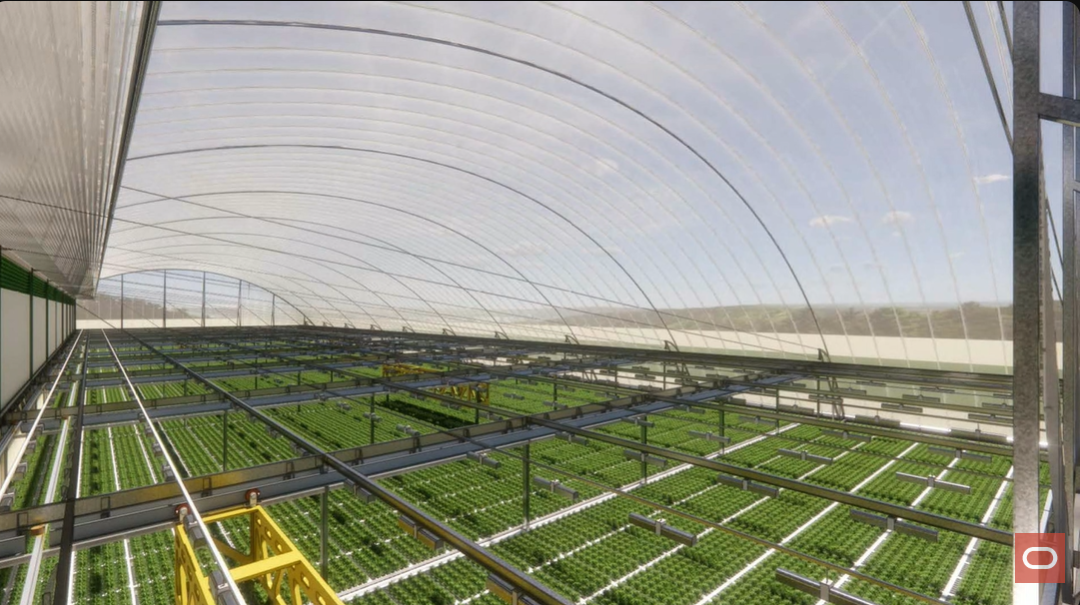

Ellison said there's a need for "robotic greenhouse systems" and AI-driven farming. "We need to produce much more fruit and food than we currently do. We're going to run out of water. We're going to run out of arable land. We can't keep taking habitat and converting it to farmland. We have to be much more efficient," said Ellison. "By growing in greenhouses and moving plants around, plants only need a lot of room for the few weeks before they're harvested."

Robotic greenhouses will use less water and space. The CO2 footprint would be lower. Ellison said two robotic greenhouses are being built in California and Texas. The building includes a rail system that moves plants from one location to another. No humans are allowed since people contaminate the growing area. The structure is an air pressure building.

"You snap the steel cables onto the footing, and then you turn the fan on, and you inflate the building. You fold the building up. The building is fabric with steel cables. You fold it up and nice, nice, nice packages, and you transport it to where you're building it, or you transport it to Mars on one of those big rockets," said Ellison.

The company behind the robotic greenhouses is Wild Bio, which is part of Ellison's institute at Oxford.

AI-assisted engineering of crops. Ellison said crops can be engineered to remove CO2 from the atmosphere while increasing food production. "We could use our food crops, we could actually increase the food yield while lowering CO2 through a natural process called bio-mineralization," said Ellison. The general idea is also to engineer crops to extract nitrogen from the atmosphere via an enzyme called nitrogenase, which occurs naturally in the bacteria that live in soybean root nodules.

"Rather than using fertilizer to nourish the plant, the atmosphere has got a huge amount of nitrogen in it. Why don't you simply engineer the plant to take the nitrogen directly out of the atmosphere?" said Ellison.

Engineered crops would face multiple regulatory and technology challenges, as well as questions about their impact on biological systems. Farmers would need new practices and techniques, along with training.

Drones

Forest fire detection and response. Ellison demonstrated autonomous drones equipped with infrared cameras that can "detect forest fires immediately" and even determine if fires were deliberately set.

Medical sample transport. Drones transport blood samples from clinics to testing laboratories, solving logistics challenges while maintaining security. The system includes RFID-tagged specimen vaults that maintain chain of custody while protecting patient privacy. "No one knows this is Larry Ellison's blood. They just know there's an RFID tag on it," said Ellison.
