Qlik Plots Course to Big Data, Cloud and 'AI' Innovation

Qlik highlights upgrades and the roadmap to high-scale, hybrid cloud and ‘augmented intelligence.’ Here's my take on the long-range plans.

Big data scalability, hybrid cloud flexibility and smart “augmented” intelligence. These are the three plans that business intelligence and analytics vendor Qlik officially put on its roadmap at the May 15-18 Qonnections conference in Orlando, Florida.

Qlik also highlighted six important upgrades coming in the Qlik Sense June 2017 release – one of five annual updates now planned for the company’s flagship product (reflecting cloud-first pacing, though on-premises customers can choose whether and when to make the move). The June upgrade highlights include:

- Self-service data-prep capabilities

- New data visualizations and color-selection flexibility

- Qlik GeoAnalytics geospatial analyses added through the vendor’s January acquisition of Idevio

- An improved Qlik Sense Mobile app that supports offline analysis

- Support for advanced analytics capabilities based on R and Python

- Easier conversion of QlikView apps to Qlik Sense.

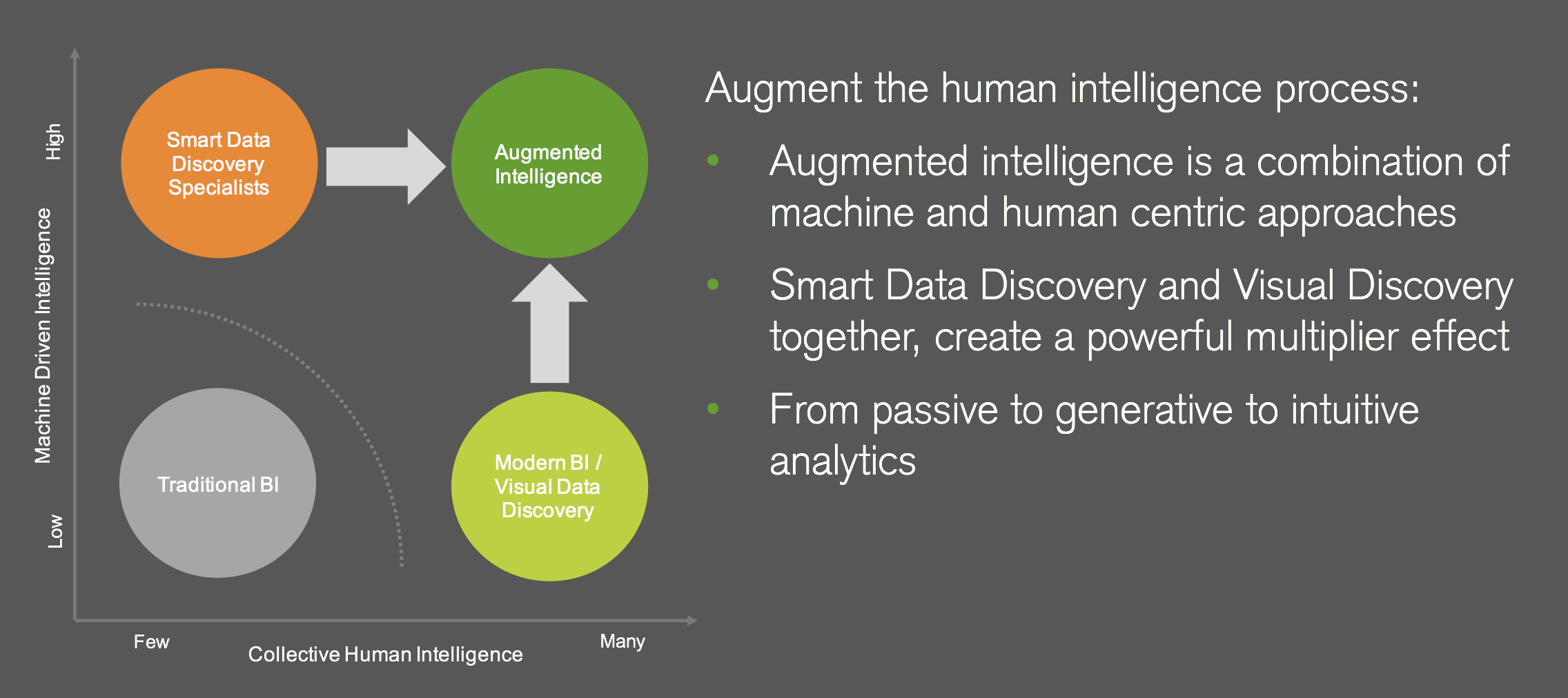

Qlik is promising "augmented intelligence" said to combine the best of machine intelligence with human interaction and decisions.

Most of these upgrades earned hearty applause from the more than 3,200 attendees at the Qonnections opening general session, but the sexiest and most visionary announcements were the ones on the roadmap. Here’s a rundown of what to expect, along with my take on what’s coming.

Building Toward Big Data Analysis

Qlik’s key differentiator is its associative QIX data-analysis engine, which is at the heart of the company’s platform and is shared by its Qlik Sense and QlikView applications. QIX keeps the entire data set and rich detail visible even as you focus in on selected dimensions of data. If you select customers who are buying X product, for example, you’ll also see which customers are not buying that product. It’s an advantage over drill-down analysis, where you filter out information as you explore.
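To make the customer example concrete, here is a minimal sketch of the idea in Python. It is a toy model only, not Qlik's QIX engine or any Qlik API: the `purchases` records and the `associate` function are hypothetical names invented for illustration. The point is simply that a selection returns both the associated values and the excluded ones, rather than filtering the latter away.

```python
# Illustrative only: a toy model of an associative selection.
# This is NOT Qlik's QIX engine; all names and data are hypothetical.
purchases = [
    ("Acme", "X"),
    ("Acme", "Y"),
    ("Birch", "Y"),
    ("Cedar", "X"),
    ("Dune", "Z"),
]


def associate(records, product):
    """Split customers into those who bought the product and those who did not."""
    all_customers = {customer for customer, _ in records}
    associated = {customer for customer, bought in records if bought == product}
    excluded = all_customers - associated  # kept visible, not filtered out
    return sorted(associated), sorted(excluded)


buying, not_buying = associate(purchases, "X")
print("Buying X:", buying)
print("Not buying X:", not_buying)
```

A conventional drill-down would return only the first list; keeping the second list in view is what the associative approach adds.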

There have been limits, however, in how much data you can analyze within the 64-bit, in-memory QIX engine. Qlik has a workaround whereby you start with aggregated views of large data sets. Using an On-Demand App Generation capability you can then drill down to the detailed data in areas of interest. But the drawback of this approach is that you lose the powerful associative view of non-selected data.

The Associative Big Data Index approach announced at Qonnections will create index summaries of large data sets, drawn from sources such as Hadoop or high-scale distributed databases. A distributed version of the QIX engine will then enable users to explore the fine-grained detail within slices of the data without losing sight of the summary-level index of the entire data set.

MyPOV on Qlik big data capabilities: What I like about the Associative Big Data Index is that it will leave data in place, whether that’s in the cloud or in an on-premises big data source. It brings the query power to the data, eliminating time-consuming and costly data movement. The distributed architecture also promises performance. In a demo, Qlik showed nearly instantaneous querying of a 4.5-terabyte data set. Granted, it was a controlled, prototype test, so we’ll have to wait and see about real-world performance.

Speaking of waiting, on big data, as on the hybrid cloud and augmented intelligence fronts, Qlik senior vice president and CTO Anthony Deighton set conservative expectations, telling customers they would see progress by next year’s Qonnections event. He didn’t rule out the possibility of an earlier release, but nor did he promise that any of the new capabilities would be generally available by next year’s event. As has been Qlik’s habit in recent years, it’s responding slowly to demands in emerging areas like big data and cloud.

Preparing for Hybrid Cloud

The business intelligence market has forced a binary, either-or choice between on-premises and cloud-based deployment, said Deighton. He vowed that Qlik will change it to an and/or choice by fostering hybrid flexibility with the aid of microservices, APIs and containerized deployment. The approach will also require sophisticated, federated identity management, which the vendor has developed to support European GDPR data security and privacy compliance requirements set to go into effect next year.

In a prototype preview at Qonnections, Qlik demonstrated workloads being spawned and assigned automatically across Qlik nodes running on Amazon, in the Qlik Cloud and on-premises. The idea is to flexibly send workloads to the most appropriate resources. That could mean spawning public cloud instances on the fly when scale is required. Or it could mean keeping analyses on-premises when regulated data is involved. Qlik is working with big banks and hospitals, among other customers, to master microservices orchestration across on-premises, private-cloud and public-cloud instances.
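The placement logic described here can be sketched as a simple rule-based policy. This is a hedged illustration in Python, not Qlik's orchestration code: the `Workload` fields, the target names and the default target are all assumptions made up for the example, since Qlik did not describe how its prototype actually decides.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    regulated: bool    # hypothetical flag: the analysis touches regulated data
    needs_scale: bool  # hypothetical flag: demand exceeds on-premises capacity


def place(workload: Workload) -> str:
    """Return a hypothetical deployment target for a workload."""
    if workload.regulated:
        # Regulated data stays on-premises, even when scale is needed.
        return "on-premises"
    if workload.needs_scale:
        # Spin up public cloud capacity on the fly.
        return "public-cloud"
    # Assumed default for everything else.
    return "qlik-cloud"


for job in (
    Workload("patient-records", regulated=True, needs_scale=True),
    Workload("quarter-end-sales", regulated=False, needs_scale=True),
    Workload("team-dashboard", regulated=False, needs_scale=False),
):
    print(job.name, "->", place(job))
```

Checking the regulatory rule first reflects the article's point that compliance constraints override the desire for elastic scale.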

MyPOV on Qlik’s cloud plans: As noted above, Qlik made no promises as to when it will deliver on this flexible, cloud-friendly microservices vision, other than to say that we’ll hear more at Qonnections 2018. Qlik’s cloud offerings need these workload-management features, particularly where Qlik Sense Enterprise in the cloud is concerned. Customers want better performance as well as the granular services and APIs they’re used to from leading SaaS vendors. I believe it’s more important for Qlik to deliver quickly on this front than on any other, so let’s hope it’s something introduced before Qonnections 2018.

Augmenting Intelligence

There have been many announcements about “smart” capabilities this year. A few of the capabilities have actually launched (like those detailed in my reports on Salesforce Einstein and Oracle Adaptive Intelligent Apps), but most are works in progress. Some are conservatively described as automated predictive analytics or machine learning while others are billed as “artificial intelligence.”

Over the past year, Deighton and other Qlik executives have charged that competitive AI and cognitive offerings tend to remove humans from decision making. In keeping with this theme, the company announced that it’s working on “augmented intelligence” that will “combine the best” of what machines can do with human input and interaction. The approach will eschew automation in favor of machine-human interaction that will bring context to data and promote better-informed machine learning, said Deighton.

The general idea is for humans to interact with concise lists of computer-generated suggestions. This will happen through computer-augmented interfaces at various stages in the data-analysis lifecycle. When users bring data together, for example, data-analysis algorithms will be applied to suggest how the data might be correlated. In the analysis stage, algorithms will suggest the best analytical approaches. And once results are generated, data-visualization algorithms will be applied to suggest best-fit visualizations. Humans will interact with the suggestions and make the final selections at every stage. Deighton promised something that will neither dump too many possibilities on users, at one extreme, nor create “trust gaps” by automating and removing human input from decisioning.

MyPOV on Qlik Augmented Intelligence: Based on conversations with Qlik executives, I’d say we’re in the early stages of Qlik’s augmented intelligence initiative. It all sounds good, but the details were sketchy. I heard a bit about analytic libraries and potential partnerships on the machine learning and neural net front. But executives weren't ready to name partners or predict availability. In short, we may see the beginnings of Qlik’s augmented intelligence capabilities at Qonnections 2018, but Qlik execs were up front in describing the initiative as something that may take a few years to mature.

Qlik’s most direct competitors, including Tableau, Microsoft, SAP and IBM, are all working on smart data exploration, basic prediction and “smart” recommendation features of one stripe or another. IBM is actually on the second generation of its cloud-based IBM Watson Analytics service. Yet we’re still in the very earliest phases of bringing advanced analytics, machine learning and artificial intelligence to the broad business intelligence market. I think 2017 may mark the end of the beginning. By 2018 and beyond, we’ll start to see vendor selections based on smart features rather than the maturing trend toward self-service capabilities.
