In the Digital Economy, You Should Fail Fast, But You Must Also Recover Fast

In the digital economy, you must move fast to survive, and six-month release cycles will not get you there. But fast release cycles, continuous releases, and a mature CI/CD pipeline are only part of the solution. If you break your systems at a faster rate but cannot fix them just as fast, you are setting yourself up for unplanned disasters that will hurt your business sooner rather than later.

I recently did a podcast with my buddy Mike Kavis, Chief Cloud Architect at Deloitte, discussing some of these areas of concern.

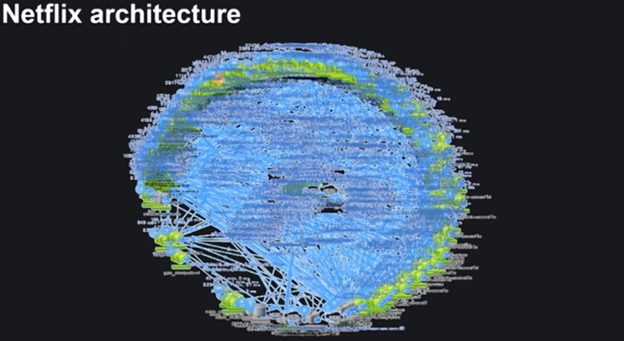

To move this fast, DevOps culture, agile development, CI/CD pipelines, and microservices are in full swing at most digital powerhouses. I recently wrote about how many changes the digital powerhouses make per day in their release cycles. The classic Netflix microservice architecture from a few years ago was said to include roughly 800 microservices at that time. Netflix, Uber, and most other digital giants claim to make thousands of "daily production changes," sometimes multiple changes to the same service. That is the power of agile development. "Fix fast and fail fast" is the mantra of successful digital houses, which lets them stay nimble and deliver the changes customers want. Canary, blue-green, and rolling deployments give developers and DevOps teams the power to get functions and features to consumers fast.

However, this can be a nightmare for centralized Ops teams. As I discussed in my previous article, Gene Kim's The Visible Ops Handbook states that 80% of unplanned outages are due to ill-planned changes. Distributed systems produce a ton of noise. If any one critical microservice or underlying infrastructure component goes down, it is not uncommon for IT monitoring and observability systems to produce tens of thousands of alerts. In many digital-native companies, Ops teams commonly drown in alerts and become "alert fatigued." The noise-to-signal ratio is very high in these environments.
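One common way to cut this kind of noise is to collapse repeated alerts into groups before anyone is paged. The Python sketch below is only an illustration under stated assumptions: the `Alert` fields, the `group_alerts` function, and the five-minute window are all hypothetical and do not come from any particular monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    # All fields are hypothetical; real alert payloads vary by tool.
    service: str      # service that emitted the alert
    check: str        # name of the check that fired
    timestamp: float  # seconds since some reference point


def group_alerts(alerts, window_seconds=300.0):
    """Group alerts that share (service, check) and arrive within
    window_seconds of the first alert in their group."""
    groups = []       # list of lists of Alert, in order of first arrival
    open_groups = {}  # (service, check) -> index of the latest group
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        key = (alert.service, alert.check)
        idx = open_groups.get(key)
        if idx is not None and alert.timestamp - groups[idx][0].timestamp <= window_seconds:
            # Same fingerprint, still inside the window: treat as a repeat.
            groups[idx].append(alert)
        else:
            # New fingerprint, or the window expired: start a new group.
            open_groups[key] = len(groups)
            groups.append([alert])
    return groups
```

With this kind of grouping, an on-call engineer would see one entry per group rather than one per alert. Real AIOps tools typically go further and correlate across services and topology, which this sketch does not attempt.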

After looking at the processes in multiple large customer situations, I have to say that the "fail fast" side, the development cycle, has matured enough, but the "find fast and fix fast" culture of Ops teams has not. Using yesteryear's tools, processes, and skills to fight a modern war is the most dangerous thing any organization can do, and yet it is very commonplace in today's IT teams. Most of them seem to band-aid things until something major breaks.

War/Incident collaboration rooms are broken

Given the virtual nature of how we are operating during the pandemic, most enterprises have successfully adopted virtual incident collaboration rooms instead of physical war rooms. A good thing about physical rooms is that you can see how many people are in the room, who belongs there, and whether someone important is missing. That helps get the incident collaboration room down to the right size quickly, before the team deep dives into the issue.

With virtual incident collaboration rooms, everyone tagged with even a remote association to any critical service is automatically invited to a virtual incident room (e.g., a Slack channel), and everyone starts to guess what went wrong. Not only are there too many cooks, but most of those engineers, developers, and system admins could be doing something else productive instead. Sadly, as one high-powered executive told me in confidence, they established a "Mean Time to Innocence" to reduce the crowd in those situations. In other words, invite everyone you can think of, and anyone who can prove that their work is not the offender can leave the room.

Find what is broken and fix it fast

I have written numerous articles on this topic. You first need to figure out whether what happened is really an incident. Not all notifications, alerts, and anomalies are incidents. An incident is defined as "Something that affects the quality of service delivered." If it is an unplanned incident, you also need to figure out whether it is causing problems for any user. You should also have a mechanism to figure out how many high-profile customers are affected versus random users. All of this needs to happen before the customer knows the incident happened, so you can fix it.
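The triage questions above can be sketched in a few lines of Python. This is a simplified illustration, not a description of any real incident management system: it assumes that "affects the quality of service" can be approximated by an error rate exceeding a target, and the `Customer` class, the `triage` function, and every field name are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Customer:
    # Hypothetical customer record for illustration only.
    name: str
    high_profile: bool
    services: set = field(default_factory=set)  # services this customer uses


def triage(service, error_rate, slo_error_rate, customers):
    """Summarize one signal: is it an incident, and who is affected?

    Assumption: a signal counts as an incident only when the observed
    error rate exceeds the service's target (slo_error_rate).
    """
    is_incident = error_rate > slo_error_rate
    # Only look for affected customers when quality of service is degraded.
    affected = [c for c in customers if service in c.services] if is_incident else []
    return {
        "is_incident": is_incident,
        "affected_total": len(affected),
        "affected_high_profile": sorted(c.name for c in affected if c.high_profile),
    }
```

The point of the design is ordering: decide whether the signal is an incident first, then size the customer impact, so that responders start with a short summary instead of a raw alert stream.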

AI/ML systems, Observability systems, AIOps, and proper Incident Management systems, if set up properly, can help a lot in this area. Those are some of my coverage areas that I am researching here at Constellation. If you have an offering in this space, please reach out to us to brief us. I would love to find out what you do and include you in my research report.

Keep in mind that in the digital economy, you need to fail fast and recover fast as well. If you just keep failing without finding and fixing things faster, your business will fail permanently soon enough. What matters most is not how hard you fall, but how fast you get back up.

I am currently a research VP and Principal Analyst at Constellation Research, a Silicon Valley research firm. My coverage areas include AI, ML, AIOps, DataOps, ModelOps, MLOps, CloudOps, Observability, etc. Contact me if you would like to brief me about your company or if you need content/research work.