Wayfair starts to reap rewards from optimization, tech replatforming efforts

Wayfair has optimized its technology and operations to the point where it can grow both its top and bottom lines.

The home retailer delivered net income of $15 million, or 11 cents a share, on revenue of $3.3 billion, up 5% from a year ago. Non-GAAP earnings for the company were 87 cents a share.

For Wayfair, the results were the best since 2021. The company has suffered through a Covid pandemic boom-and-bust cycle. Niraj Shah, CEO of Wayfair, said "we can and will grow profitably while taking significant share in the market."

A big part of that profitability push has been a technology overhaul centered on a move to Google Cloud. When we last checked in with Wayfair, it was in the middle innings of a replatforming. Now that work is largely complete.

- How Wayfair's tech transformation aims to drive revenue while saving money

- BT150 interview: Wayfair CTO Fiona Tan on transformation, business alignment and paying down tech debt

Shah added that Wayfair aims to invest in the future, grow current profitability and maximize free cash flow in the long run. Those goals will require continuous optimization, efficiency, AI and new features.

"Our model allows us to service the products with the best value for our customers, enabling us and our suppliers to gain share and grow revenue," said Shah.

Shah described the furniture and home goods market as "stable-ish." The higher-end market is stronger than the mass market, but overall demand is "bumping along the bottom after a few years of declines."

Here's a look at Wayfair's big initiatives and how they are flowing through to the bottom line.

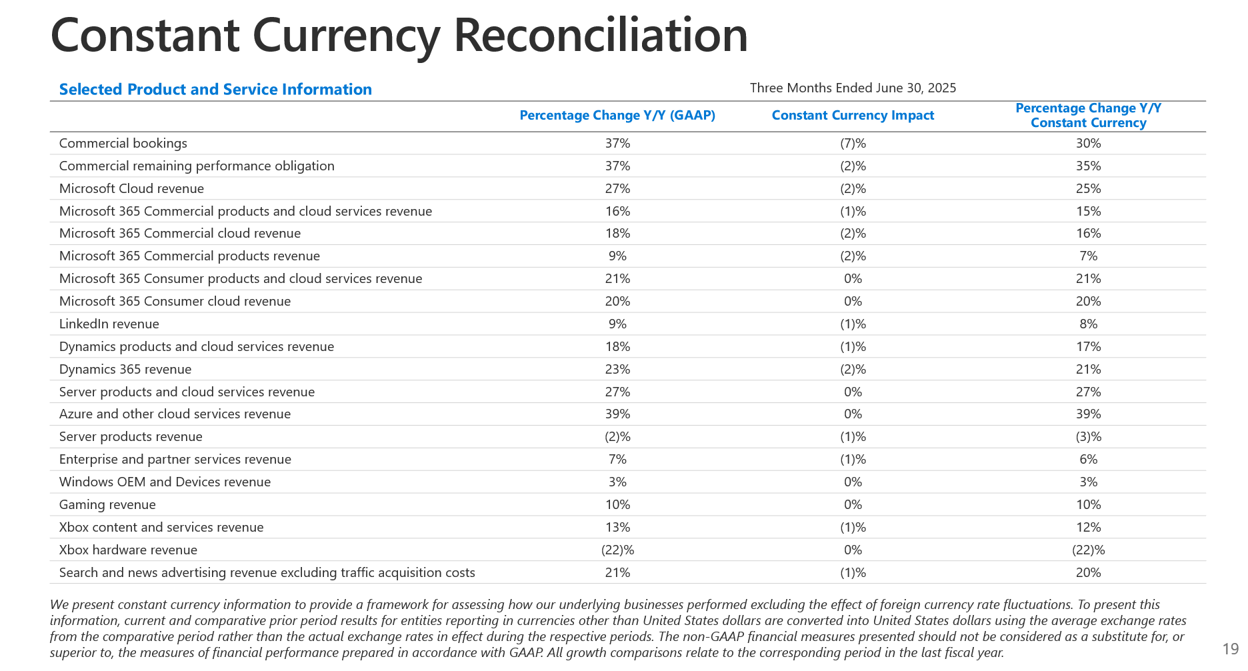

Supply chain and logistics. Wayfair takes an inventory-light approach to its supply chain, and CastleGate, the company's proprietary logistics network, is performing well. The network covers inbound logistics, storage and outbound fulfillment.

CastleGate Forwarding is an inbound logistics and ocean freight forwarding operation that gives suppliers volume rates with carriers. Wayfair consolidates goods for shipment, and smaller suppliers have been increasing their CastleGate usage.

According to Wayfair, CastleGate Forwarding saw a 40% year-over-year increase in total volume in the second quarter, and long-term inbound commitments are up 30% from a year ago. Wayfair is growing revenue by offering a third-party logistics service tailored to the home category.

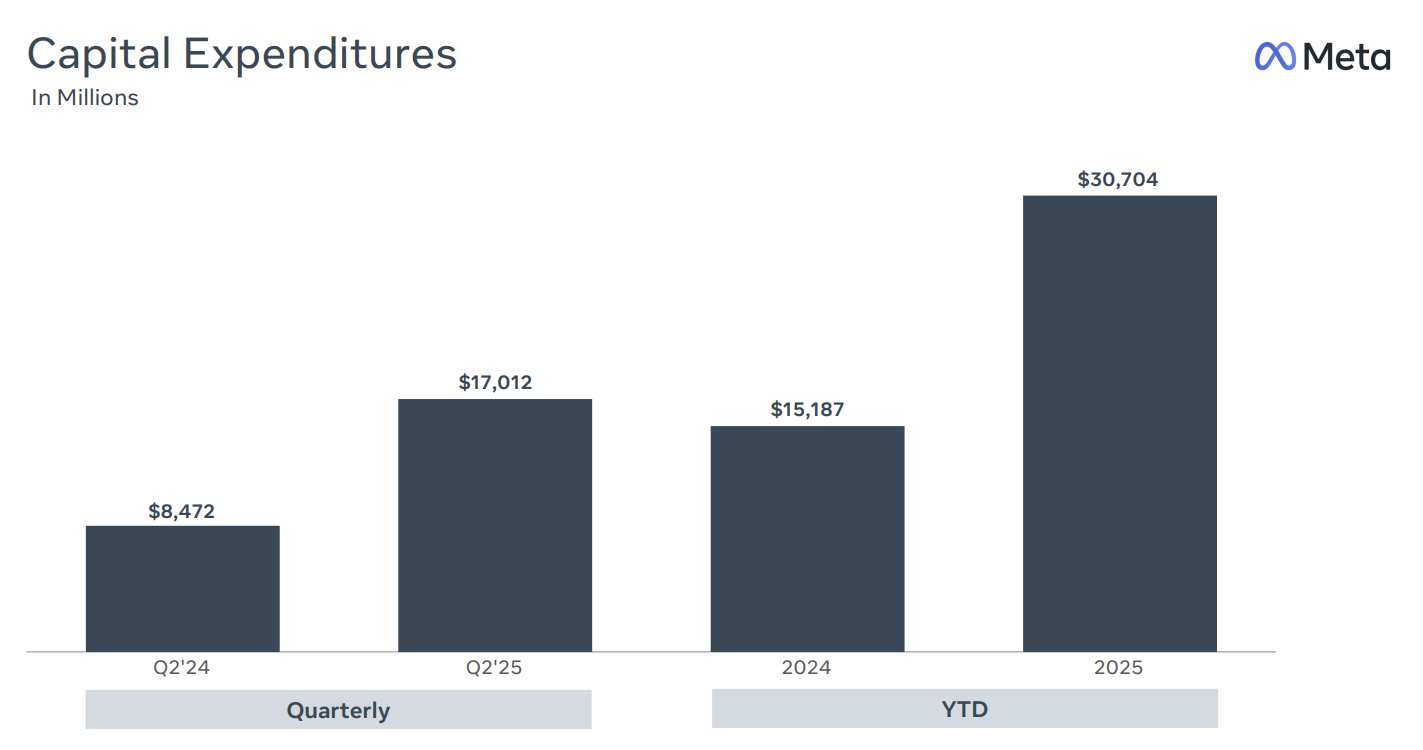

Replatforming to Google Cloud is now mostly complete. CFO Kate Gulliver said that Wayfair's second quarter free cash flow of $230 million was its strongest since the third quarter of 2020. Capital expenditures were lower due to a technology restructuring after the replatforming.

Customer and supplier experience improvements. Shah said that Wayfair has a 2,500-person technology organization that has been focused on replatforming core systems to Google Cloud. Now that the migration is largely complete, that team is focused on increasing product velocity and innovation.

"Now that we're very far into that replatforming effort, a lot of the cycles of the team are now back building features and functions to improve the customer experience and supplier experience," said Shah. "And you see that affect things, whether it's conversion rates, enabling suppliers to do more, launching new genAI-powered features and delivering efficiency gains in our operations."

Surgical ad spending with a focus on ROI. Wayfair in the fourth quarter began investing in influencers on Instagram and TikTok. Shah said that influencer investment has performed well, but the spend is modest.

More importantly, Shah said Wayfair has lowered ad costs through "a lot of testing and enhancements to some of our measurement models."

"We've also been able to identify pockets of our spend, which we do not believe were contributing at the economic payback we wanted," said Shah. "Even though they were creating some revenue for us, it was not at a cost level that would make sense to us."

AI: Generative and agentic. Shah said there are multiple consumer-facing areas where generative AI is improving the experience. Search results, product descriptions, imagery and accuracy are just a few.

Shah said Wayfair in the long run is looking at using AI and agents to guide customers because "there's a lot more product discovery and content around trends." Wayfair is also developing features like Decorify and Muse to give shoppers personalization based on price and style.

The company is also looking at partnerships and ways to work with LLM companies such as OpenAI, Google Gemini and Perplexity, said Shah.

Wayfair has spent recent years rightsizing its organization and teams. That restructuring has been a distraction, but now Wayfair teams can focus on new programs, including Wayfair Rewards, a loyalty program; Wayfair Verified, a set of goods that has been hand-selected and inspected by Wayfair; and logistics efforts.

"The recipe keeps getting better, the technology cycles are available to drive the business forward, and we've been launching and growing new programs," said Shah.
