Google Cloud tops $50 billion annual revenue run rate in Q2

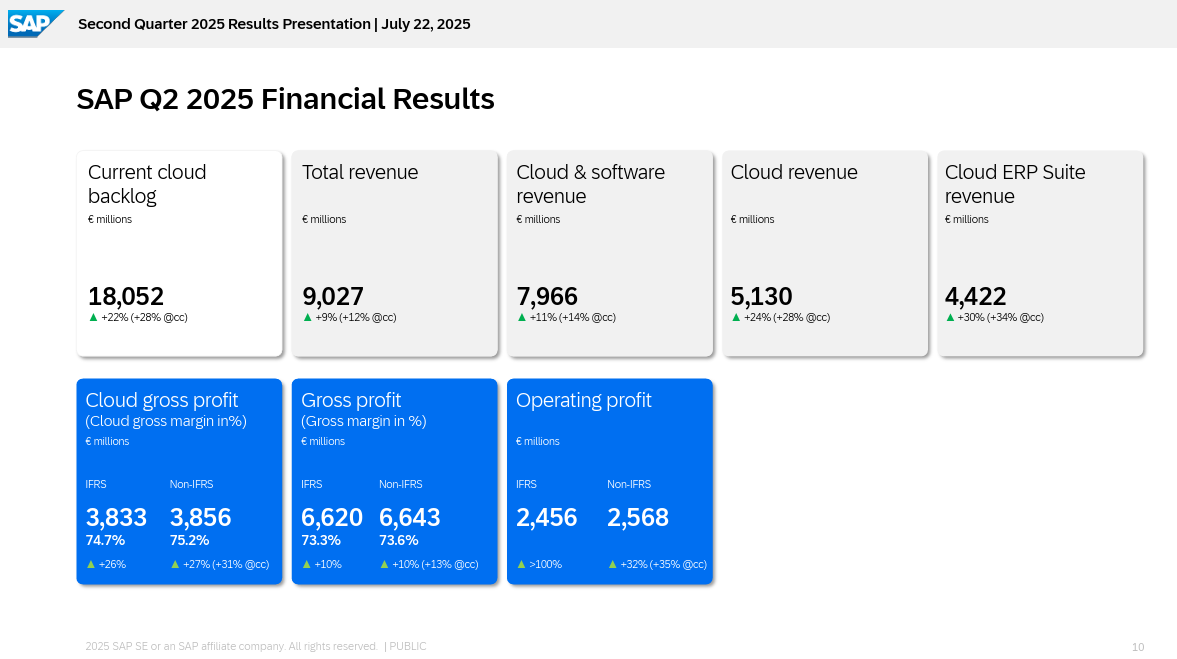

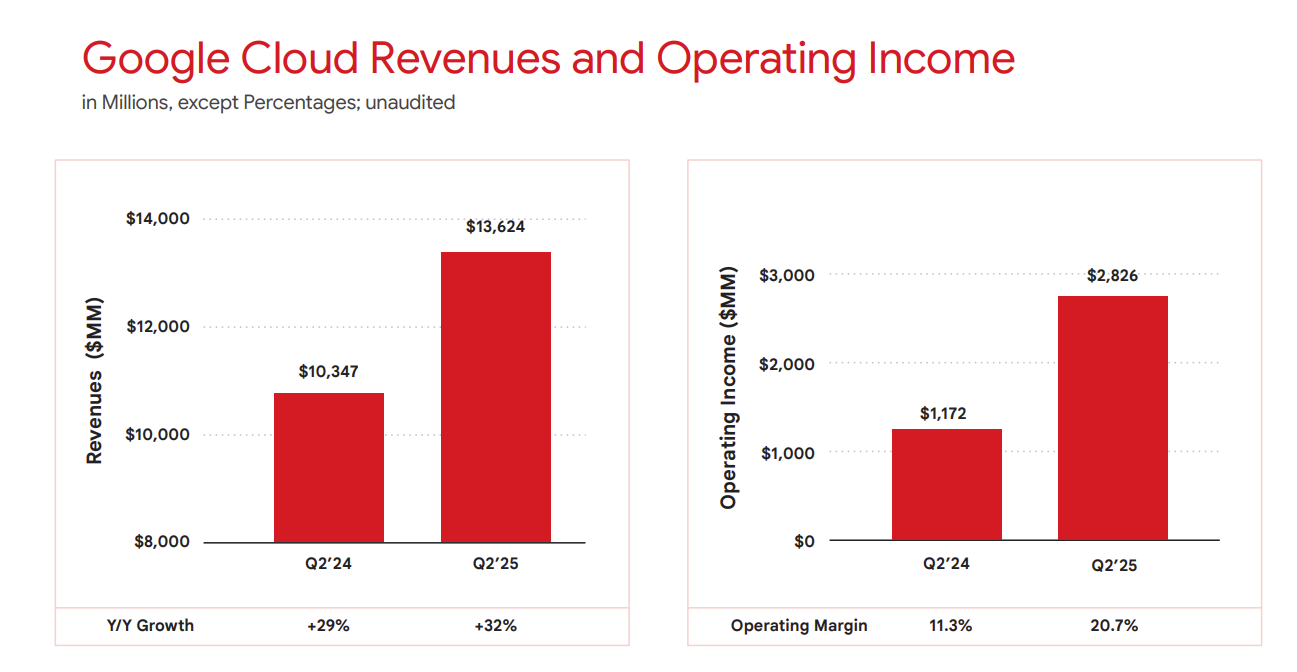

Google Cloud revenue in the second quarter surged 32% to $13.6 billion as Alphabet, parent of Google, delivered better-than-expected results.

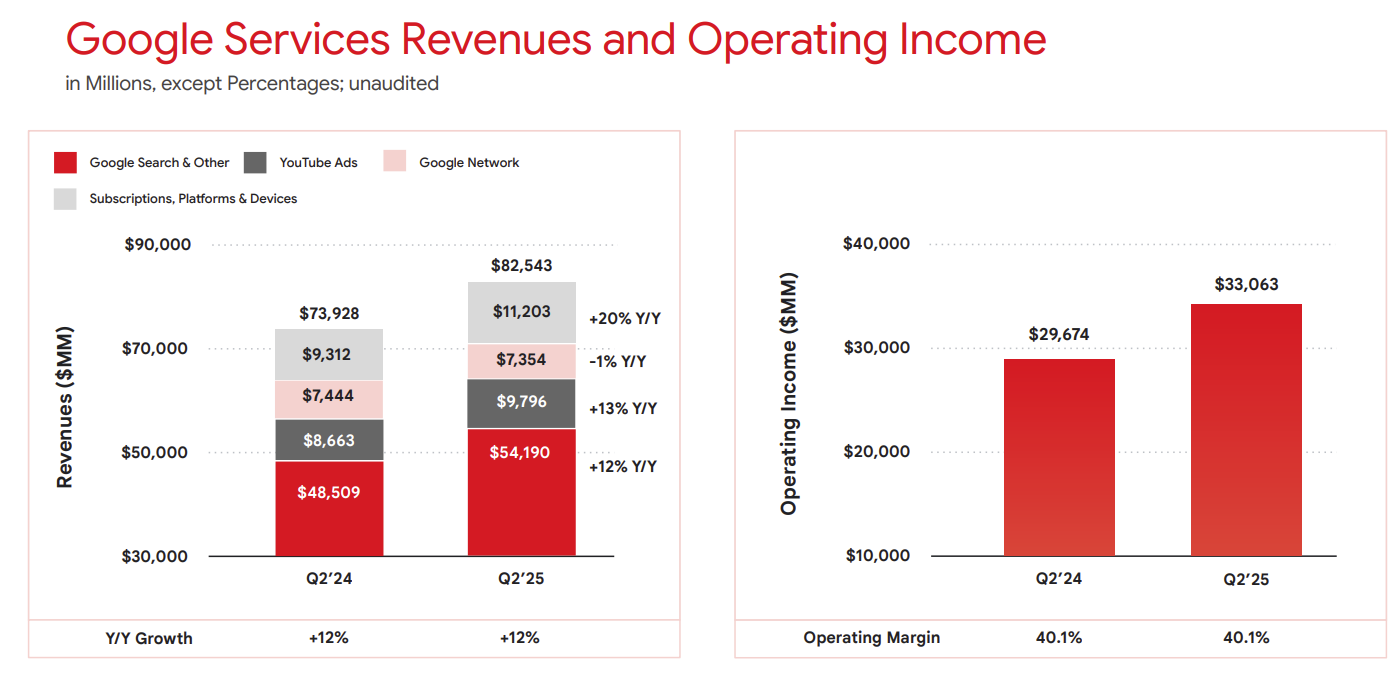

Alphabet reported second-quarter earnings of $2.31 a share on revenue of $96.4 billion, up 14% from a year ago. The company said it saw strong growth across YouTube, Google Cloud, search and subscriptions.

Wall Street was looking for Alphabet to report non-GAAP earnings of $2.19 a share on revenue of $93.96 billion.

CEO Sundar Pichai said the second quarter was a "standout," with growth across the company's units.

Referring to Google Cloud specifically, Pichai said the company had "strong growth in revenues, backlog and profitability. Its annual revenue run-rate is now more than $50 billion."

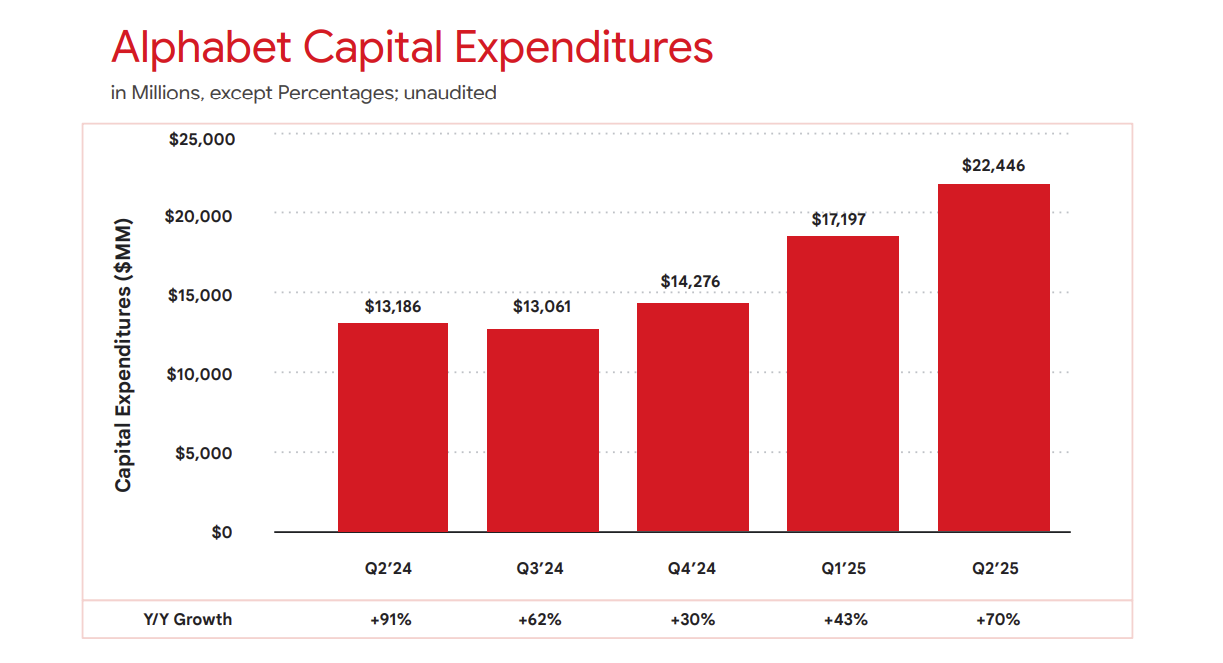

Pichai added that Alphabet will spend more on building out its Google Cloud infrastructure, with capital expenditures of $85 billion in 2025.

As expected, Google Services delivered most of Alphabet's operating income with $33.06 billion on revenue of $82.5 billion. Google Cloud operating income was $2.83 billion.

Pichai said on the earnings conference call:

- "Nearly all Gen AI unicorns use Google Cloud, and it's why a growing number including leading AI research labs use TPU specifically."

- "Our AI infrastructure investments are crucial to meeting the growth and demand from Google Cloud customers."

- "Our 2.5 models have been a catalyst for growth, and 9 million developers have now built with Gemini."

- "The number of Google Cloud deals worth more than $250 million doubled year over year."

- "We also saw strong growth in the use of multimodal search, particularly the combination of Lens or Circle to Search together with AI Overviews. This growth was most pronounced among younger users. Our new end-to-end AI search experience, AI Mode, continues to receive very positive feedback, particularly for longer and more complex questions. It's still rolling out, but already has over 100 million monthly active users in the US and India."

Constellation Research analyst Holger Mueller said:

"Google Cloud is doing well as it looks like enterprises are finally understanding that Google has a 3- to 4-year lead when it comes to putting custom algorithms (TensorFlow) on custom hardware (TPUs). It now also has a 1+ year lead for operating multimodal models. All that results in better and cheaper AI, a powerful formula that seems to be turbocharging Google Cloud."