Five9 acquires Aceyus, aims to expand analytics, enterprise reach

Five9 said it will acquire Aceyus, which ingests data from multiple customer experience systems and provides insights and analytics. Separately, Five9 reported second quarter earnings and projected $908 million to $910 million in 2023 revenue.

Aceyus provides call center analytics, customer journey data, omni-channel reporting and multiple integrations. Terms of the deal weren't disclosed.

According to Five9, Aceyus will provide its CX platform with contextual data across disparate systems. Aceyus' integration catalog will also boost Five9's data lake. Five9 said Aceyus will also bolster its AI and automation portfolio.

Mike Burkland, Five9 CEO, said Aceyus and Five9 have multiple joint accounts. "The addition of Aceyus will extend our platform to further facilitate the migration of large enterprise customers to the cloud and to leverage contextual data to deliver personalized experiences," said Burkland in a statement.

Separately, Five9 reported second quarter revenue of $222.9 million, up 18% from a year ago. Five9 reported a second quarter net loss of $21.7 million. Non-GAAP earnings for the second quarter were $37.4 million, or 52 cents a share.

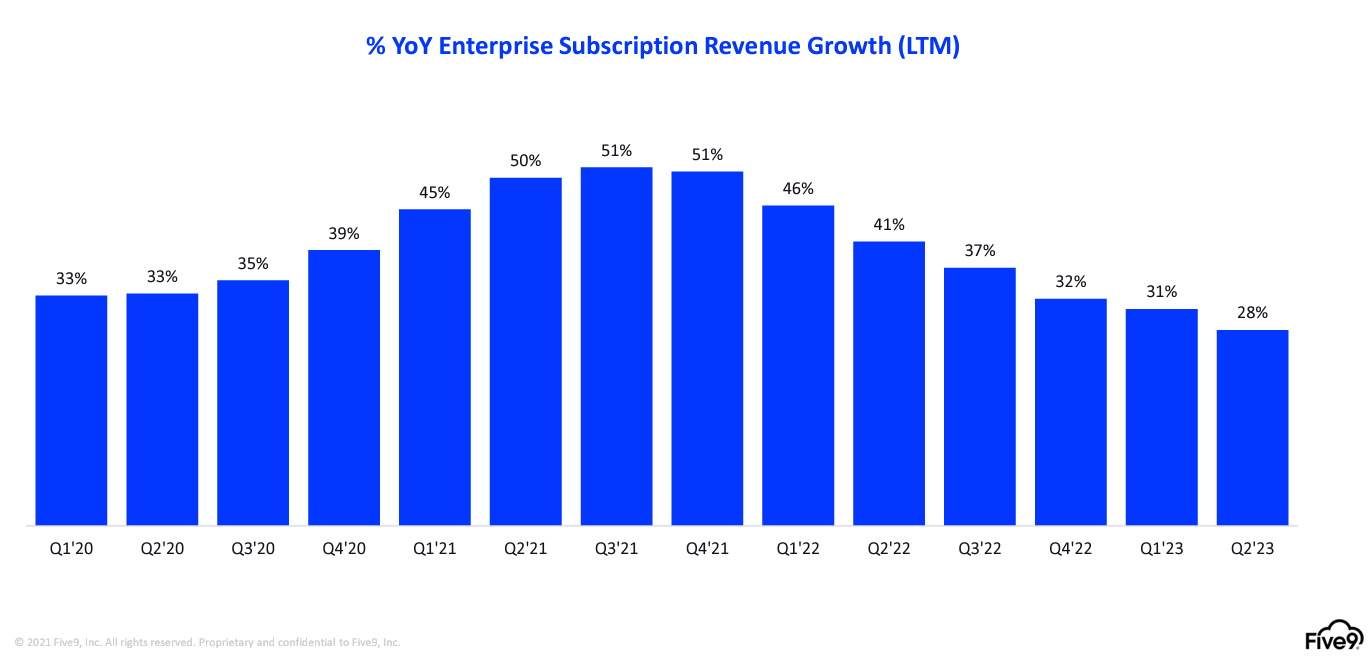

In the second quarter, Five9 reported enterprise subscription revenue growth of 28%. About 87% of Five9's revenue is enterprise.

As for the outlook, Five9 projected third quarter revenue of $223.5 million to $224.5 million with non-GAAP earnings of 42 cents a share to 44 cents a share. For 2023, Five9 projected non-GAAP earnings of $1.79 a share to $1.83 a share on revenue of $908 million to $910 million.

Constellation Research's take

Constellation Research analyst Liz Miller handicapped Five9's recent developments. Here's Miller's take:

Five9 Buys Aceyus: The opportunity here is to accelerate the shift from on-prem to cloud, especially when it comes to all that messy, complex and crazy customer data that delivers that robust, personalized and highly contextual experience that Five9 looks to deliver in an agile, cloud-first experience. Not only is the contact center a treasure trove of critical (and often untapped) customer data, it also sits at the front line of CX delivery. By harmonizing that gold found in the true voice of the customer with the stores of customer data that can be brought in from any number of CX outposts, we get a new, even more potent fuel for CX-centric AI applications. But the danger here is that this runs the risk of creating another silo of customer data that behaves just like a single-use appliance for a single department or function. With Aceyus, the vision is to ingest and harmonize complex data…which many out there will recognize as the siren’s song of the enterprise Customer Data Platform (CDP). So the question here is: will this vision assume that all of the teams at the front lines of real CX (e.g., sales, service AND marketing) will each have their own CDP? Will this Five9 Aceyus offering sit next to, above or below an Amperity, Salesforce Data Cloud (e.g., Genie), Adobe Real-Time CDP or Segment implementation?

Five9 Earnings: These results are really a testament to a movement started almost two years ago to march up-market. The item to focus on here is the partner-driven growth that is behind this continued acceleration. Over 60% of Five9’s international implementations are being done by partners and there is phenomenal demand from partners to continue advancing, especially with the new innovations around AI. One note from the earnings indicates that 15 partners achieved over $1M in bookings for the quarter. Five9 had not always been touted as the “best” partner in the contact center market…but that has changed, and the company is actively investing in making sure that partners are happy and that channel growth and bookings are healthy.
