Education tech in turmoil amid genAI: Why consolidation is next

The education technology market has been rattled by generative AI, cautious enterprise and institutional spending, and a crowded field of overlapping companies with similar annual revenue profiles.

What's next? A merger and acquisition wave that's just getting started. The jockeying for position is already underway, and either private equity will roll these companies together or they'll merge on their own.

- In March, Accenture said it will acquire Udacity and absorb its employees to create Accenture LearnVantage. Accenture's plan is to use LearnVantage to reskill and upskill people in technology, data and AI.

- In May, Bain Capital said it will acquire PowerSchool for $5.6 billion. Meanwhile, Coursera and Chegg have been crushed by investors and now may look like buyout targets.

- Instructure Holdings, the company behind Canvas, is reportedly in play to go private again. Thoma Bravo, which already owns 83% of Instructure, acquired the company for about $2 billion in 2020 and then took it public a year later at $20 a share. Now Reuters reports that Thoma Bravo is gauging private equity interest in Instructure. Instructure's market cap today is north of $3 billion.

Here's a look at the education technology players and what they're planning for the reskilling boom that has yet to arrive.

This post first appeared in the Constellation Insight newsletter, which features bespoke content weekly and is brought to you by Hitachi Vantara.

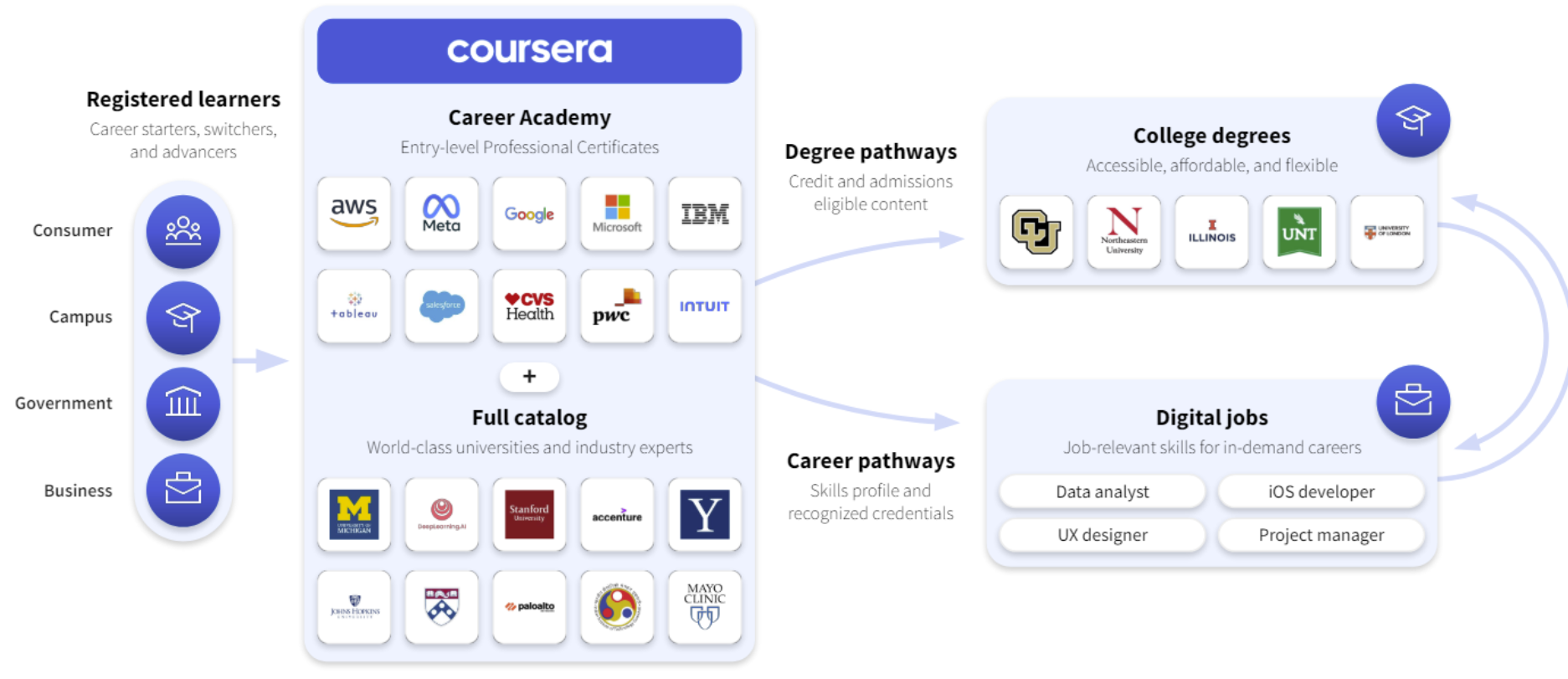

Coursera: Reskilling in genAI era tricky

Coursera has three operating units: consumer, enterprise and degrees. Consumer revenue was up 18% in the first quarter compared to a year ago, and enterprise and degrees revenue was up 10%. AI courses were driving demand in consumer, and that demand was building in enterprise and degrees.

What's the problem? Coursera's first quarter results were mixed and the second quarter outlook disappointed.

Simply put, Coursera has been in the penalty box since April. Coursera CEO Jeff Maggioncalda said, "we remain in the early stages of understanding how generative AI will reshape the way we live, learn and work."

Nevertheless, Coursera's plans and platform look solid. The company did see softness in North American subscribers, but has plays in higher-ed as well as corporate reskilling. The company is also using genAI to bolster its content.

Coursera has more than $725 million in cash and short-term equivalents and a market cap of $1 billion.

Chegg in turmoil

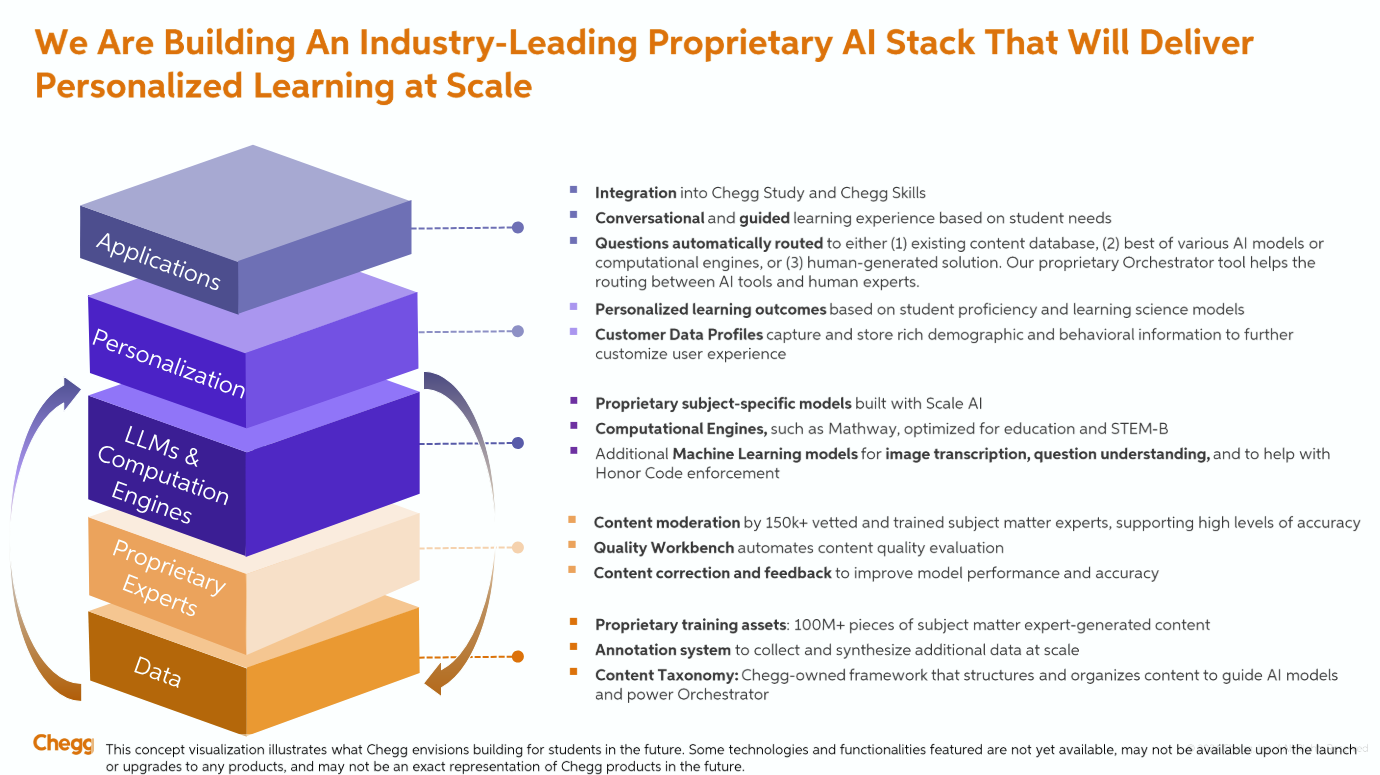

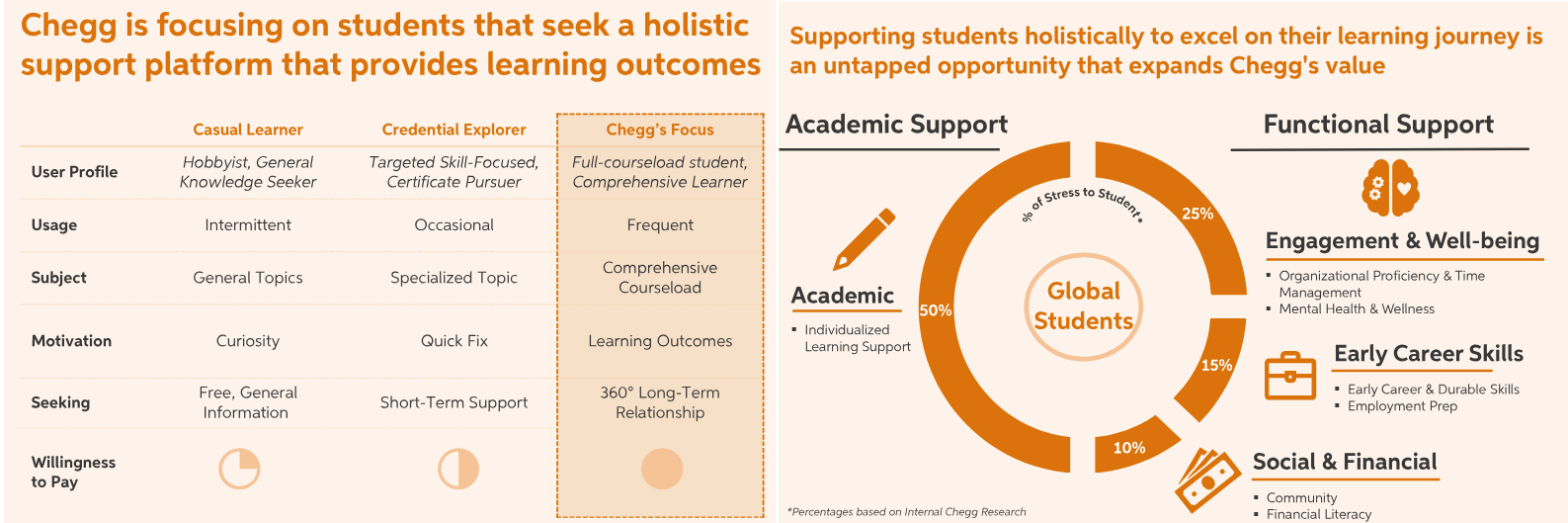

Chegg showed strong growth during the Covid-19 pandemic and has since struggled with declining subscriptions and fears that generative AI will hamper future business.

The company has swapped CEOs and cut headcount by 23%, or about 441 employees. In April, Chegg said second quarter revenue will be between $159 million and $161 million, a range that was well below Wall Street estimates.

In a shareholder letter that accompanied its restructuring, new CEO Nathan Schultz said the company is going all-in on generative AI to offer better learning experiences. Schultz added that Chegg will provide 360-degree individualized support for students that couples proactive guidance with a combination of AI, content and human experts.

Schultz said the plan is to become more efficient, consolidate platforms and expand internationally. Chegg will also ink commercial agreements with educational institutions, a shift for a company that has typically marketed directly to students. "We plan to roll out an expanding marketing and branding program, build more AI-driven functionality and tools, localize our services internationally and layer in new non-academic offerings that address the whole student," said Schultz.

For now, Chegg needs to return to subscriber growth and remains in the penalty box. Chegg could also look enticing to private equity due to its brand with students and the reality that transformations are best done without the grind of quarterly results.

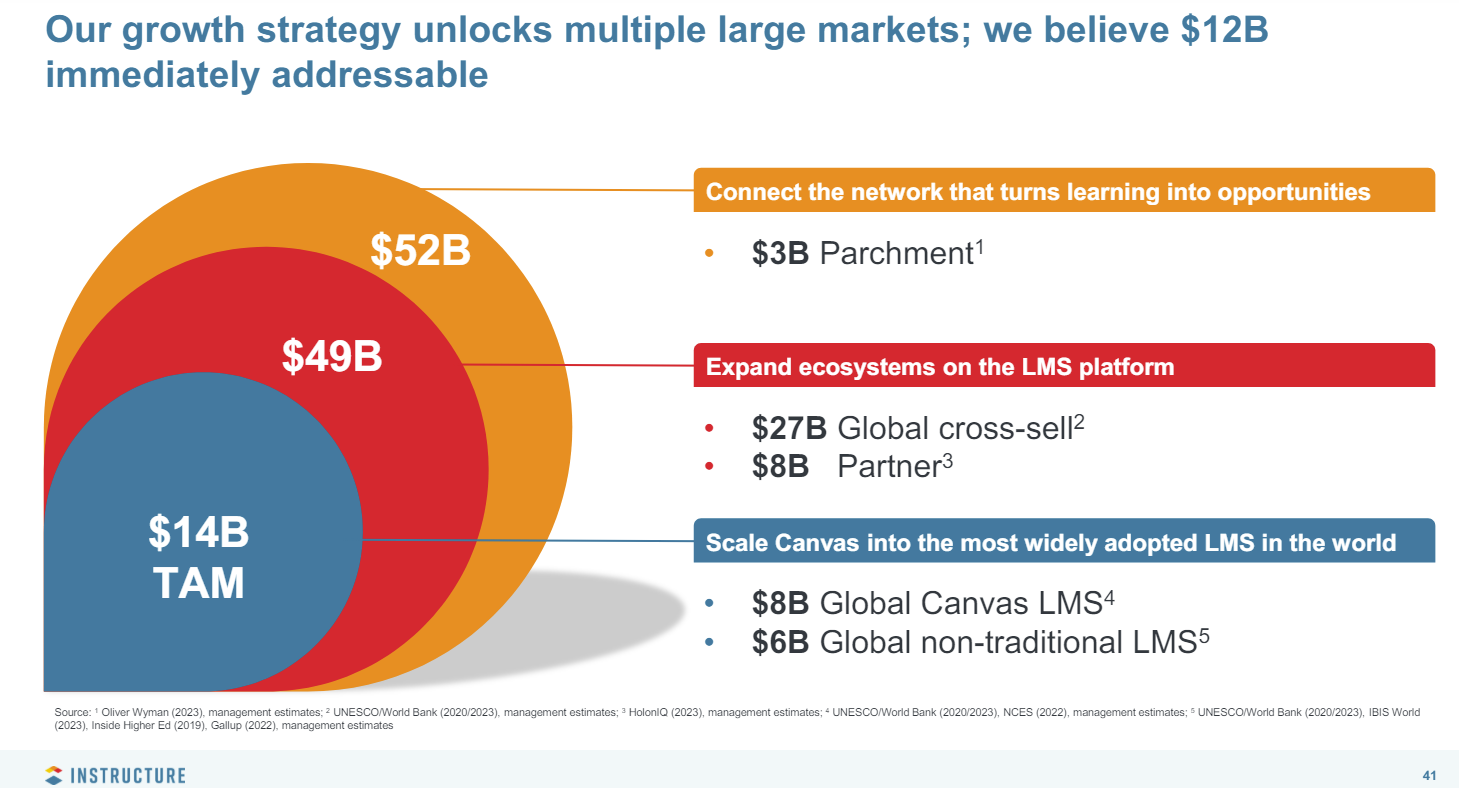

Instructure's powerful position with Canvas

It's unclear where Instructure shares would be without the report that Thoma Bravo is shopping the company. It's quite possible that Instructure would fall along with the rest of the education technology space.

Nevertheless, Instructure's position with Canvas, the education operating system, is valuable. In its first quarter, Instructure reported revenue of $155.5 million, up 20.7% from a year ago. The company reported a net loss of $21.1 million.

The company guided to second quarter revenue of $166.5 million to $167.5 million with non-GAAP operating income of $66 million to $67 million. For the fiscal year, Instructure is projecting revenue of $656.5 million to $666.5 million with non-GAAP net income of $123 million to $127 million.

Here's the rub: Instructure has total debt of $1.17 billion as of March 31.

CEO Steve Daly said Instructure will continue to innovate with the Canvas Learning Management System (LMS) and be a cog in education digital transformation projects. Daly added that the education market is uncertain, but demand remains strong.

Daly said:

"The consolidation and optimization of technology resources continues to be a high priority, and the LMS is the natural place for consolidations to happen. Customers are pulling us into more broad-based digital transformation discussions and see Instructure as a platform for consolidating their tech stack. This is a long-term trend, and we believe our expanding portfolio of products, especially our EdTech effectiveness solutions, as well as the extensive reach of our partner ecosystem position us as the partner of choice for consolidation."

Instructure could be a consolidator in the education technology market. In February, Instructure completed the purchase of Parchment, which operates an academic credential market platform.

PowerSchool going private

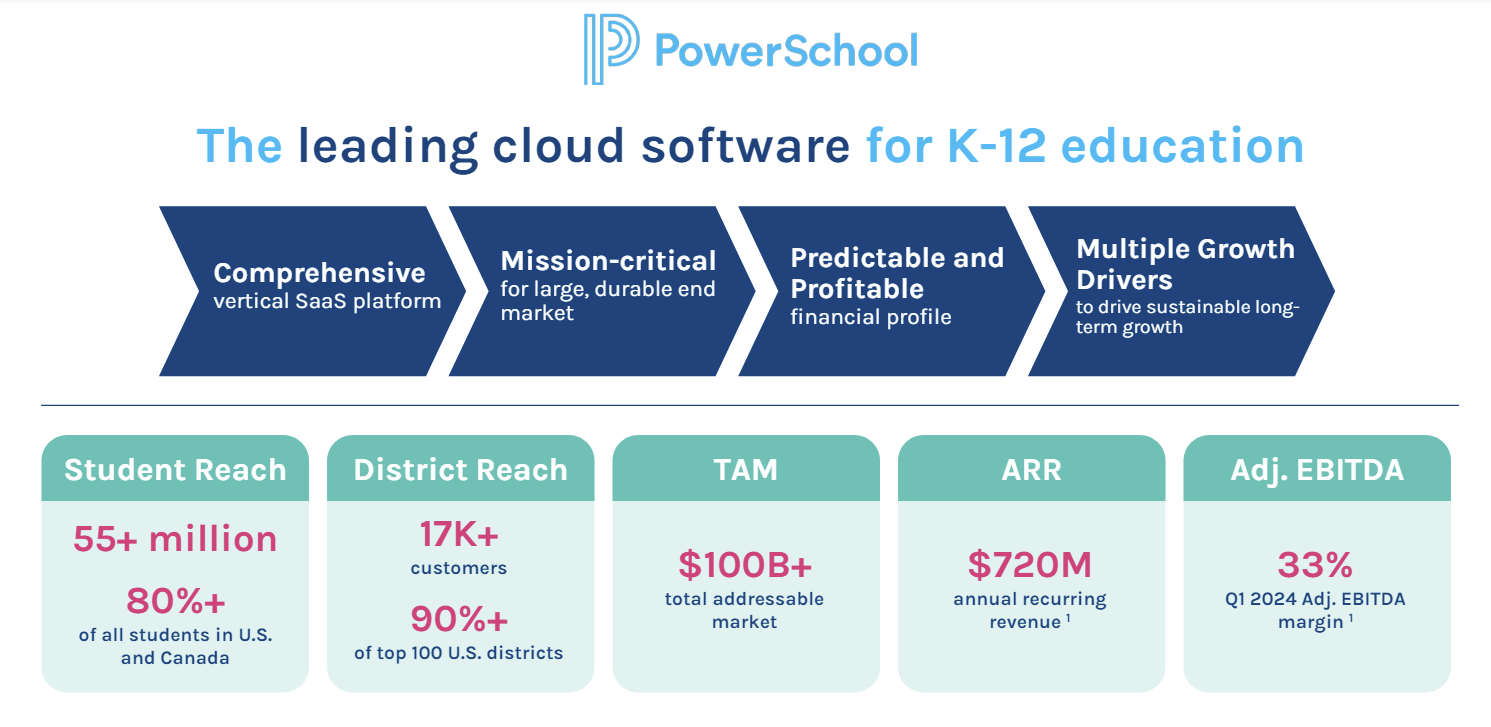

PowerSchool is being acquired for $5.6 billion, or $22.80 per share, by Bain Capital. PowerSchool, which makes software for the K-12 education market, supports more than 55 million students and 17,000 customers.

The company announced the general availability of PowerSchool PowerBuddy, a generative AI assistant for teachers and students that personalizes learning plans to improve student engagement.

PowerSchool went public at $18 a share in 2021 and was trading in the $16 range before the Bain purchase.

In the first quarter PowerSchool reported revenue of $185 million, up 16% from a year ago. The first quarter net loss was $22.8 million. Annual recurring revenue (ARR) was $720.3 million.

Udemy: Riding the AI training wave

Udemy reported first quarter revenue of $196.8 million, up 12% from a year ago, with a net loss of $18.3 million. Non-GAAP net income was $5.3 million.

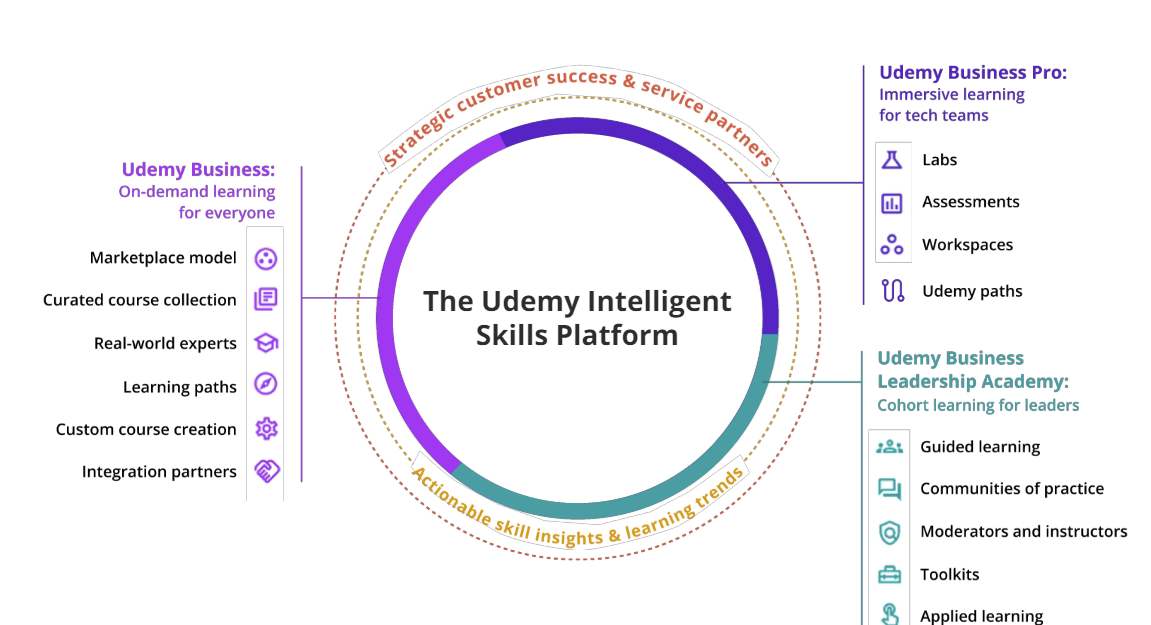

The company is focused on online skill development and has more than 16,000 enterprise customers.

Udemy looks more like Coursera in that it has consumer and enterprise businesses. The company projected second-quarter revenue of $192 million to $195 million and annual revenue of $795 million to $805 million.

Gregory Brown, CEO of Udemy, said on the company's first quarter earnings call:

"Udemy is differentiated by the high quality, immersive and localized learning experiences we provide. Our comprehensive solution is purpose-built to provide three essential learning modalities: on-demand, immersive and cohort based. This combination allows us to offer an end-to-end solution where professional learners and organizations around the world can easily identify and quickly develop the skills they need to deliver better business outcomes today and in the future."

In addition, Udemy has been riding the AI training and IT certification wave. "In just over a year since ChatGPT launched, we've seen more than 4 million enrollments in the 2,000 plus AI courses in the Udemy catalog," said Brown. "This massive surge in interest from individuals and organizations demonstrates the rapidly growing need to upskill talent on generative AI. Based on what we've seen in the past year, we believe generative AI will have a significant lasting impact across nearly every industry, region and professional role."
