Equifax bets on Google Cloud Vertex AI to speed up models, scores, data ingestion

Equifax said it is deploying Google Cloud's Vertex AI platform across its systems as it accelerates data ingestion as well as new product launches.

Speaking on Equifax's first quarter earnings call, CEO Mark Begor said driving AI innovation is key to the company's growth goals via products such as Ignite and Interconnect. Begor said:

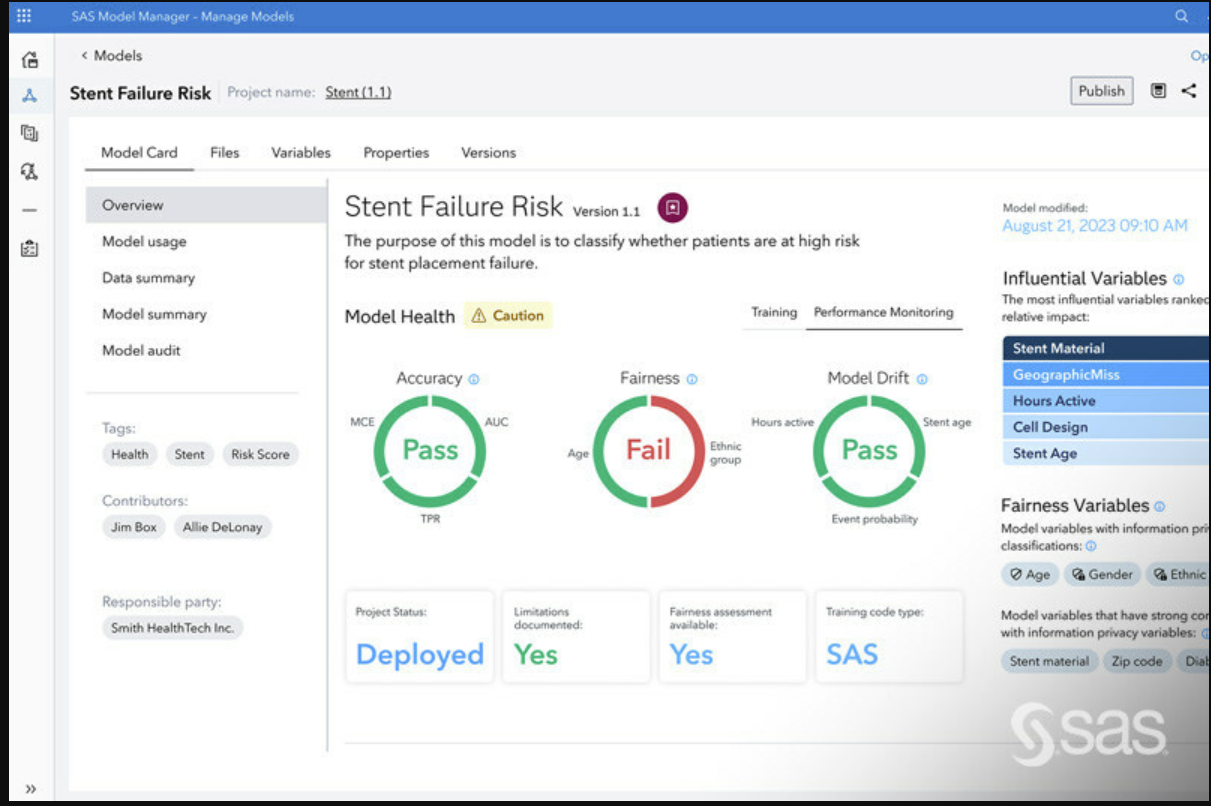

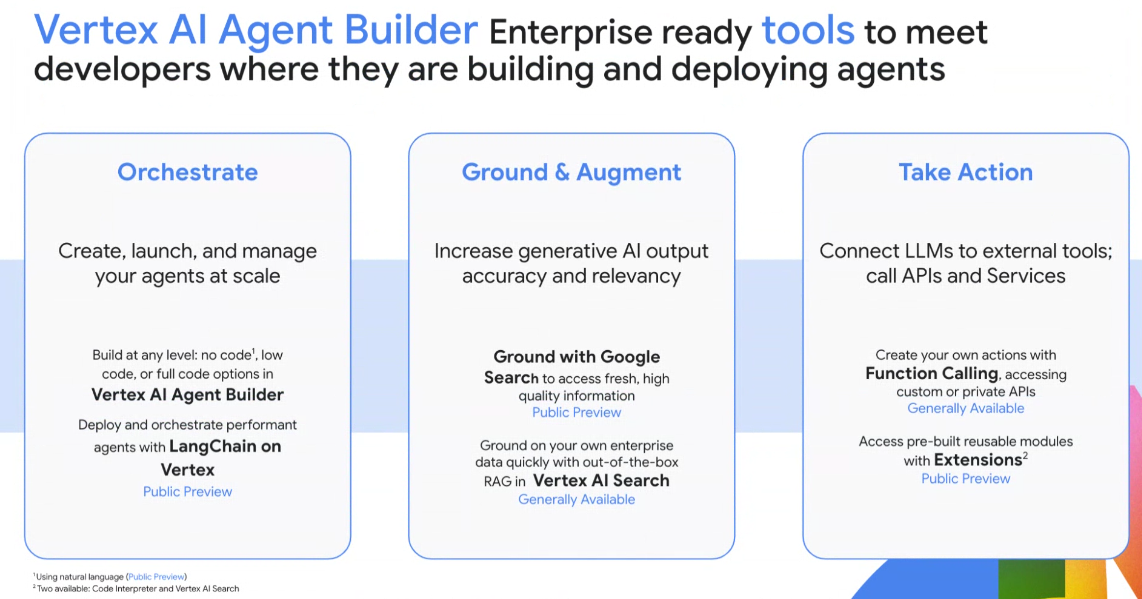

"During 2024, we're deploying both Equifax proprietary explainable AI along with Google Vertex AI across Ignite, Interconnect and our global transaction systems.

"For Equifax, Vertex AI enables faster and more predictive model development on our Ignite platform. And for our clients, Ignite, which combines data analytics and technology into one cloud-based ecosystem, customers can connect their data with our unique data through our identity resolution process to gain a single holistic view of consumers."

Constellation Research previously covered Equifax's cloud transformation in a customer story. Equifax was also a reference account at Google Cloud Next.

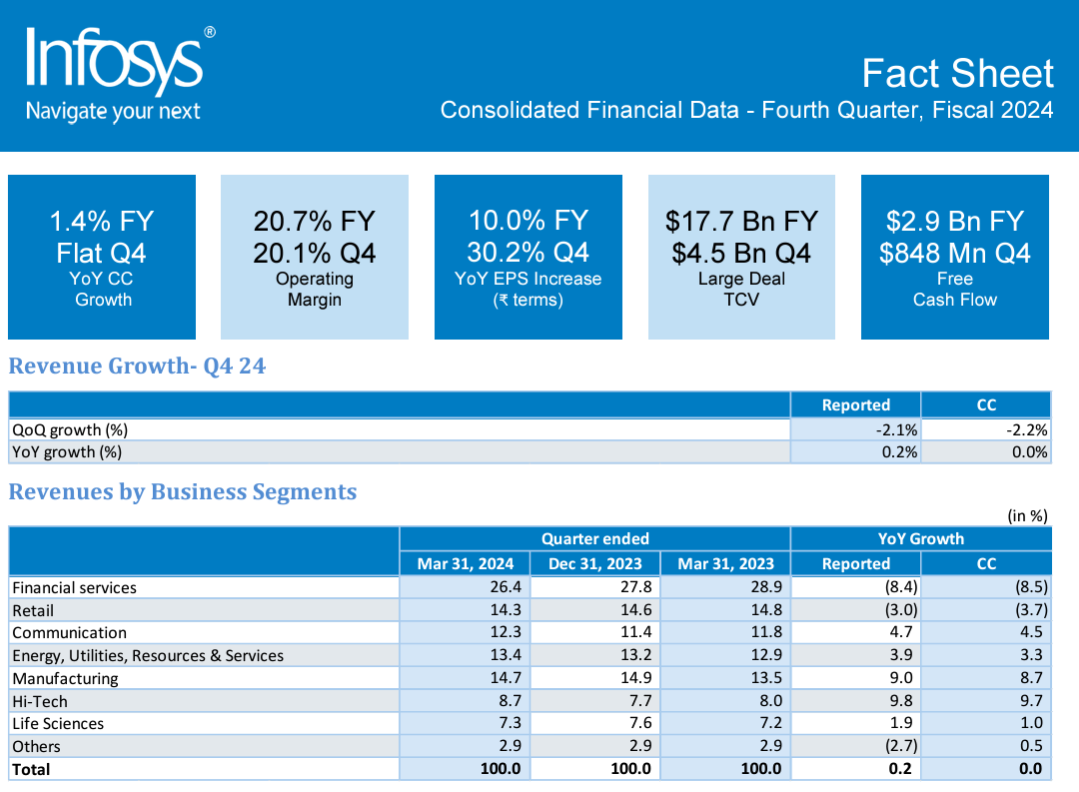

Equifax reported first quarter revenue of $1.39 billion, up 7% from a year ago, with net income of $124.9 million.

Combined with Equifax's broad reach into datasets, those platforms mean faster data ingestion and analytics as well as new products and services.

Begor said Equifax has access to 100% of the US population through its datasets in a single data fabric. He added that Equifax's cloud infrastructure processes data 5x faster than its legacy applications could.

Equifax also plans to complete its cloud transformation, close data centers and save $300 million a year in 2024. From there, Equifax will focus on growing its EFX.AI offerings with higher-performing models, scores and data products.

"Completing the cloud transformation also frees up our team to fully focus on growth and expanding innovation, new products and new markets. Our progress towards completing the cloud is gaining momentum with over 70% of our total revenue in the new Equifax Cloud at the end of the quarter," said Begor. "And we're focused on executing the remaining steps to reach 90% with Equifax revenue in the cloud by year-end."
