RSA Conference 2024 in a nutshell: What you need to know

Google Cloud launched Google Threat Intelligence, Cisco and Splunk outlined integrations and new security offerings, and Palo Alto Networks outlined its AI and cybersecurity future. CrowdStrike, Fastly and a bevy of others also had announcements, and Akamai agreed to acquire Noname Security.

While Chirag Mehta is at RSA looking at trends, developments and themes for CxOs, I'll round up the news nuggets you need to know. Here's a look.

- Akamai Technologies said it will acquire Noname Security, an API security vendor, to bolster its existing API Security offering. Akamai said the purchase price was $450 million.

- Google Cloud launched Google Threat Intelligence, which combines Google's security offerings into one package. Google Threat Intelligence includes Mandiant intelligence, VirusTotal and visibility tools built on the company's scale in mobile and email. The headliner for Google Threat Intelligence is Gemini in Threat Intelligence, a generative AI agent built on Gemini 1.5 Pro that provides threat insights. Google Cloud also announced the latest release of Google Security Operations.

- Splunk Asset and Risk Intelligence. Splunk launched Splunk Asset and Risk Intelligence to help enterprises take a more proactive approach to security and risk mitigation. The system correlates and aggregates data from various sources to keep a rolling inventory of assets. This inventory can then be used to map assets and their relationships, offering context for incident response. Out-of-the-box dashboards and metrics help with compliance.

- Cisco and Splunk integrations. Splunk's announcement landed as Cisco and the newly acquired Splunk outlined integrations to bridge their security platforms. Cisco plans to converge its security portfolio with Splunk. For now, the two companies are rolling out new features and integrations. Cisco said Extended Detection & Response (XDR) is now integrated with Splunk Enterprise Security to provide a single detection-to-remediation workflow. Cisco also said its unified AI Assistant for Security is available in Cisco XDR. The company also said its Panoptica application protection platform includes AI and machine learning for real-time alerts. In addition, Cisco said Hypershield, launched last month, will get tools to isolate attacks from unknown vulnerabilities within the runtime. See: Splunk’s Acquisition by Cisco Accelerates Convergence of Network, Security, and Observability, Fueled by AI | 11 Top Cybersecurity Trends for 2024 and Beyond

- Palo Alto Networks announced three copilots across its cybersecurity platforms: Strata Copilot, Prisma Cloud Copilot and Cortex Copilot. The assistants are powered by Precision AI, which combines generative AI, machine learning and deep learning trained on data from Palo Alto Networks platforms. The Palo Alto Networks copilots are now in private preview. Palo Alto Networks is promising a launch that will highlight the intersection of AI and cybersecurity. The company also announced new Cortex XSIAM capabilities that will give customers the ability to integrate third-party EDR data and bring their own models to the platform. Cortex XSIAM will also get a unified interface, security agents and integration with Prisma Cloud. See: CrowdStrike, Palo Alto Networks duel over platforms vs. bundles

- CrowdStrike outlined new additions to CrowdStrike Falcon Next-Gen SIEM. All Falcon Insight customers will get 10GB of third-party data ingest per day at no additional cost. In addition, Charlotte AI, CrowdStrike's genAI security analyst, will be available for all Falcon data in Next-Gen SIEM. Charlotte AI will also get new promptbooks, incident summarization and automated investigations. Falcon Next-Gen SIEM also gets more cloud connectors, data normalization and automated data onboarding. CrowdStrike also expanded its ecosystem. The company announced the general availability of CrowdStrike Falcon Application Security Posture Management (ASPM) as an integrated part of CrowdStrike Falcon Cloud Security. The effort aims to create a CloudSecOps platform that moves as fast as DevOps and includes business threat context, deep runtime visibility and protection across multiple assets. Finally, CrowdStrike added cross-domain threat hunting tools for Microsoft Azure environments to its Cloud Detection and Response platform.

- Fastly said its Managed Security Service, with coverage of Fastly Bot Management, will now include a 30-minute time-to-notify service level agreement (SLA). With the SLA, Fastly said it is guaranteeing that its experts will notify customers and begin mitigating any web application security incident within 30 minutes of discovery.

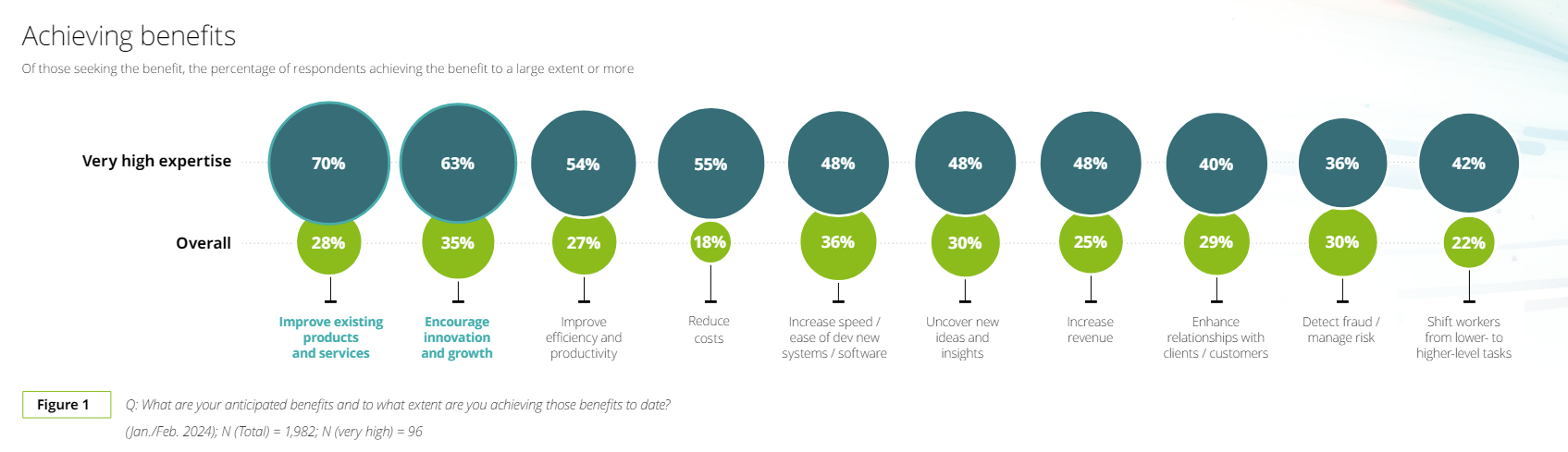

- Ernst & Young outlined some initial findings from its 2024 Human Risk in Cybersecurity Survey. EY said 85% of workers believe AI has made cyberattacks more sophisticated, 78% are concerned about the use of AI in cyberattacks and 39% aren't confident they know how to use AI responsibly.