Rubrik's IPO: Everything enterprises need to know

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...

Rubrik, an enterprise backup and recovery company, has filed for an initial public offering in a move that indicates a new batch of security vendors is likely to hit the market as companies prep their post-breach strategies. Rubrik, which trades under the ticker RBRK, priced its IPO at $32 a share, raising $752 million at a $5.6 billion valuation. The price was above its expected range.

The nine-year-old company, which is on the Constellation ShortList™ for Enterprise Backup and Recovery, competes with Dell, Veeam, Cohesity (which is combining with Veritas' data recovery unit) and others. The IPO market has bounced back in 2024 from a two-year drought.

Chirag Mehta, an analyst at Constellation Research, said Rubrik is hitting the market when post-breach planning is a hot topic. Mehta said:

"As AI-led attacks become more sophisticated and breaches become inevitable, security and technology leaders are actively planning for their post-breach strategy. Organizations are bolstering their post-breach resilience to effectively mitigate the impact of cyber incidents and expedite recovery processes. Organizations recognize the intrinsic value of data as a strategic asset and are prioritizing measures to safeguard its integrity, availability, and confidentiality. Rubrik has an important role to play in helping CxOs as data resilience emerges as a linchpin of cyber resilience."

Holger Mueller, Constellation Research analyst, said Rubrik's IPO will give the company a mindshare lift, but then the real work begins. "For Rubrik it will be key to manage expectations and have an eye on managing investor expectations. For instance Rubrik has done good progress improving profitability, it now has to deliver towards that and other shareholder needs quarter by quarter," he said.

Here's what you need to know about Rubrik:

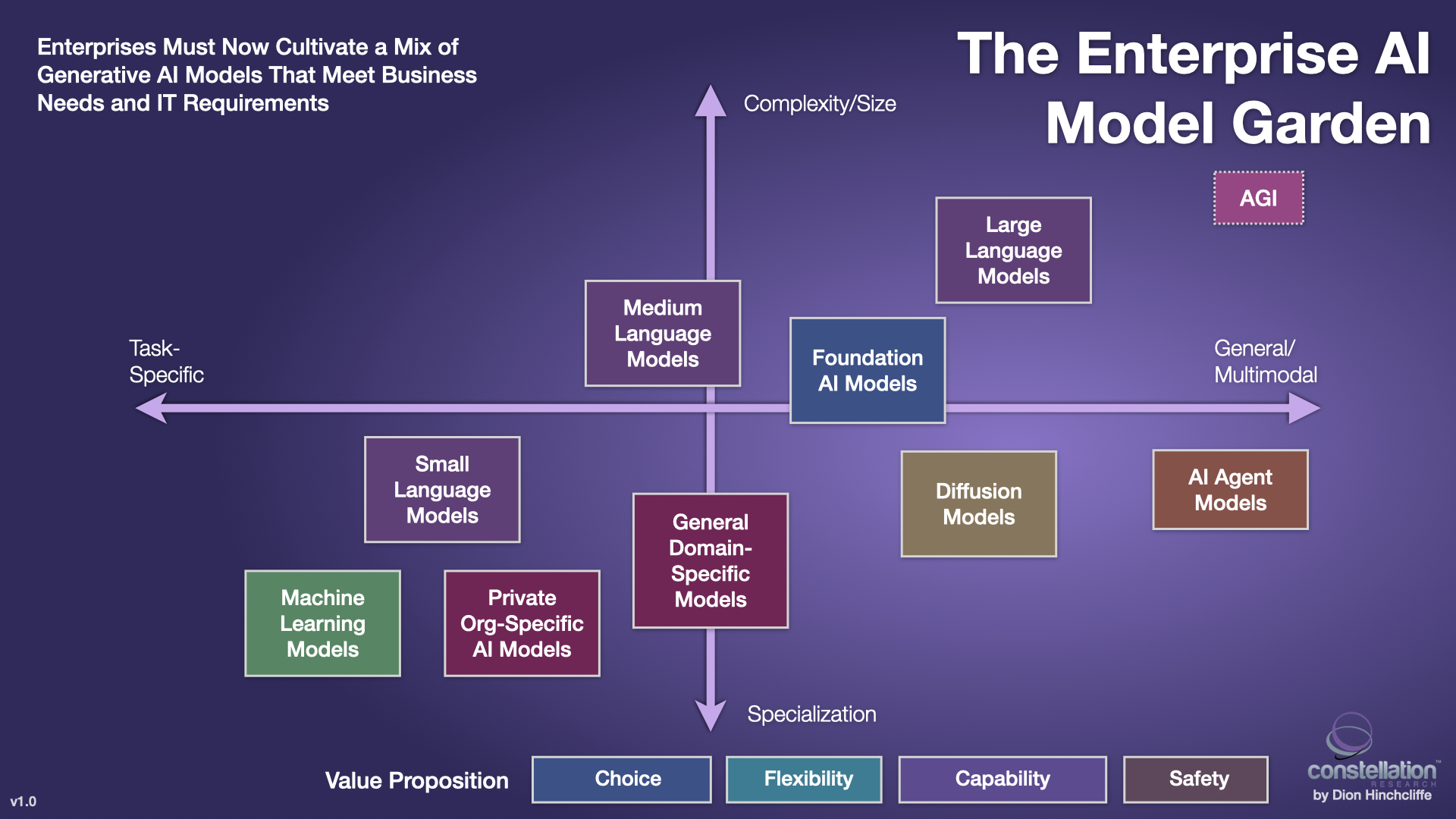

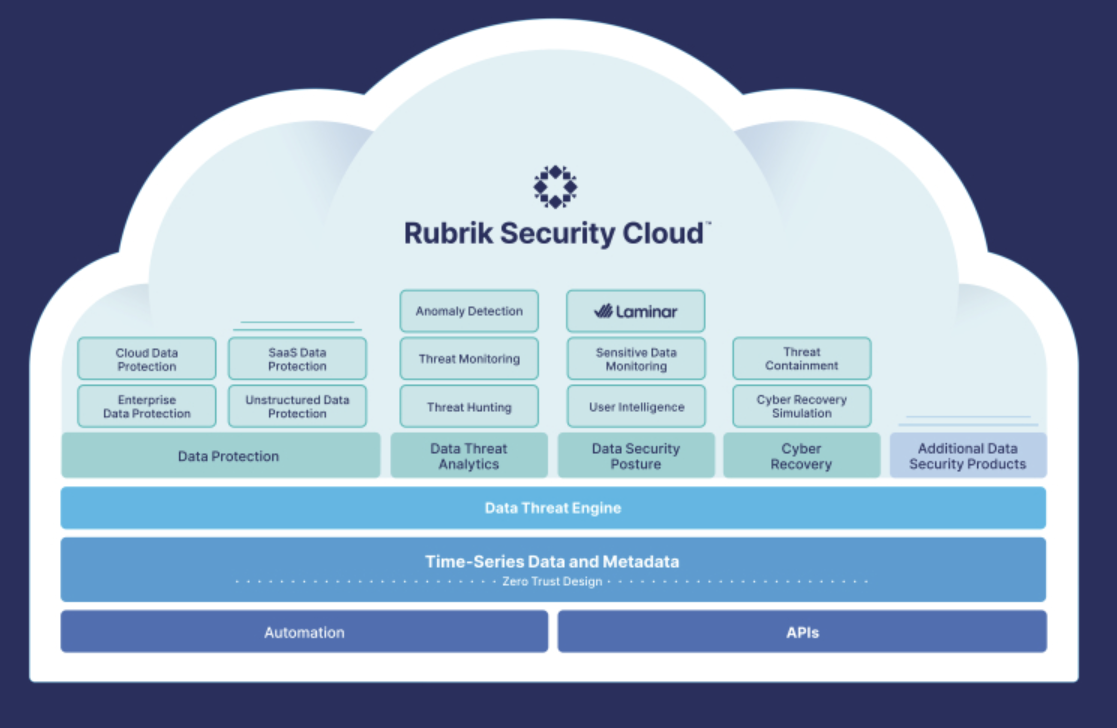

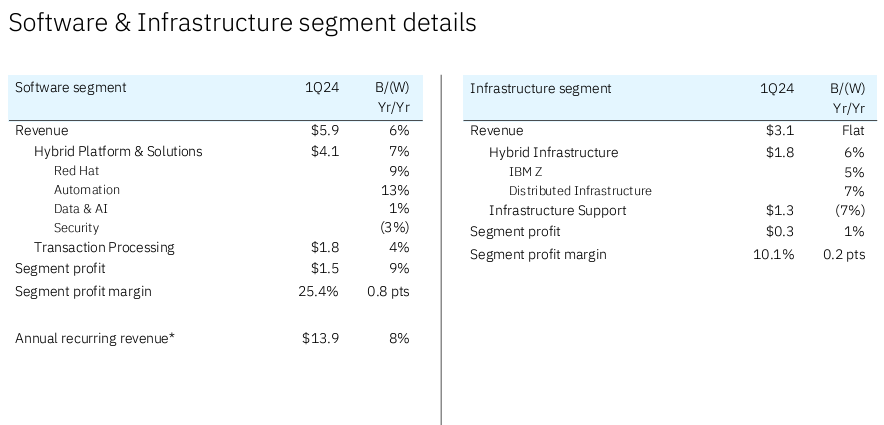

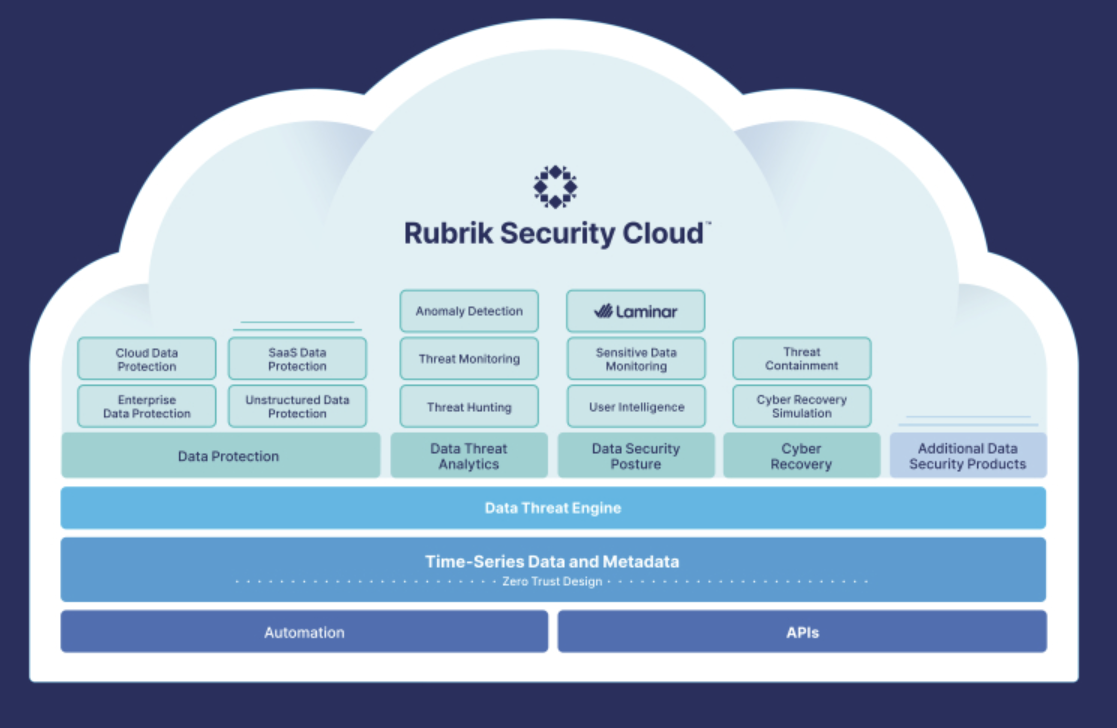

The platform. Rubrik Security Cloud (RSC) has a series of layers designed to address AI security and protect data from threats. The platform also assesses data protection, security posture, analytics and recovery. Here's the stack:

Simply put, Rubrik's platform is built on Zero Trust design principles to address the fact that cyberattacks are inevitable, so recovery matters more than ever. "Our Zero Trust Data Security platform assumes that information technology infrastructure will be breached, and nothing can be trusted without authentication," said Rubrik in its regulatory filing.

Indeed, Rubrik disclosed that in March 2023 a malicious third party accessed a limited amount of information in one of its non-production IT testing environments. The incident didn't include access to customer or sensitive data.

Customer base. Rubrik had more than 6,100 customers as of Jan. 31, and 1,742 of them had subscription annual recurring revenue (ARR) of more than $100,000. For fiscal 2023, Rubrik had 5,000 customers. Rubrik said its cloud ARR for fiscal 2024 was $525 million.

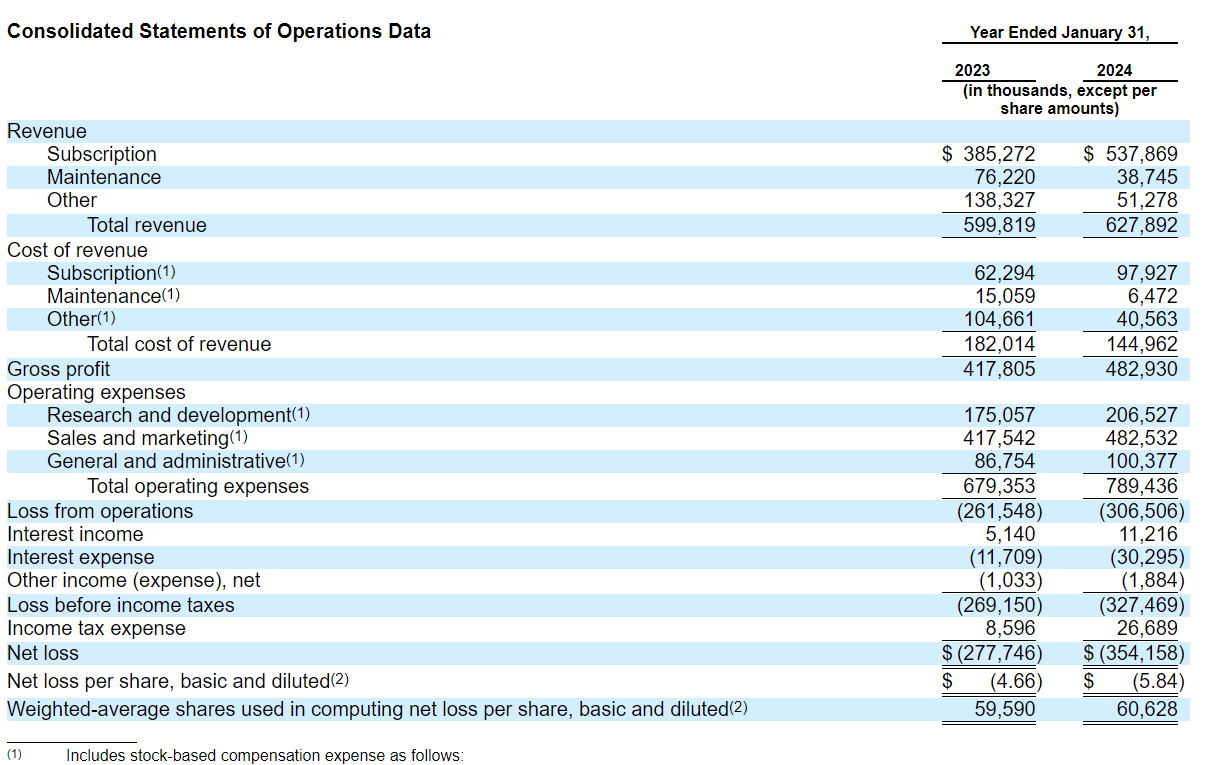

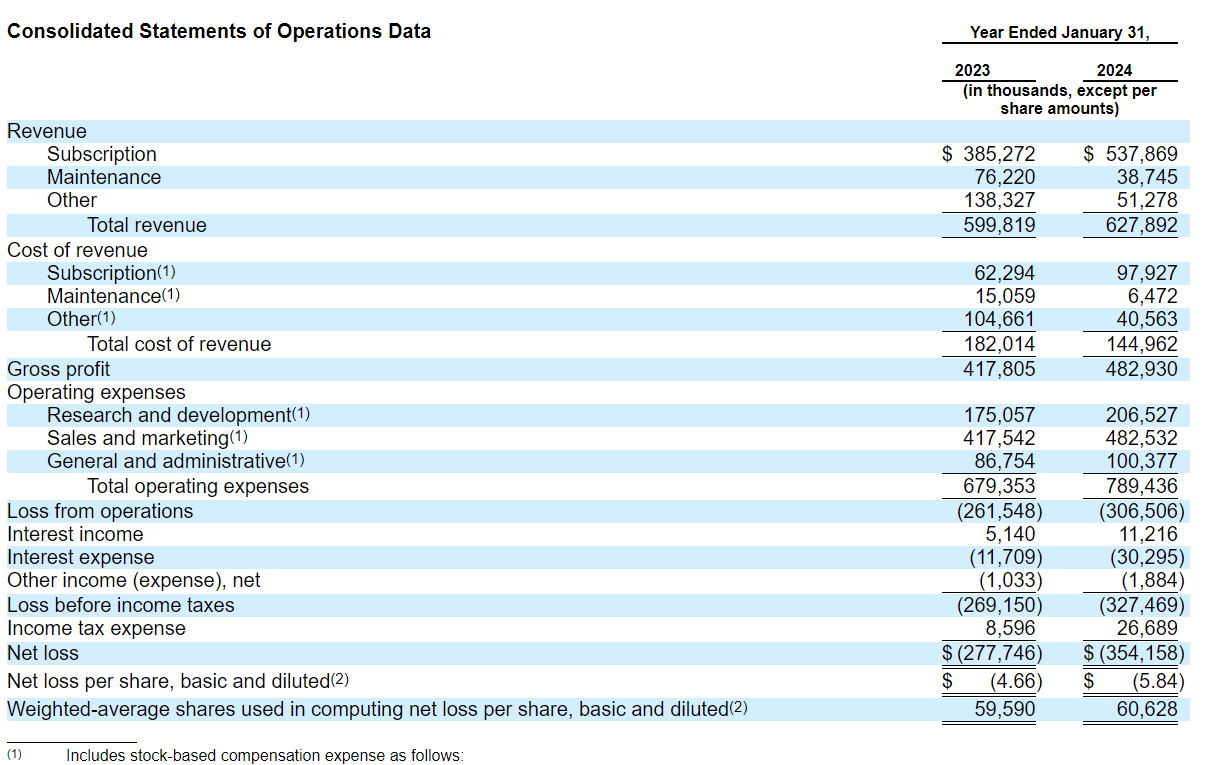

Rubrik is spending heavily on customer acquisition and its go-to-market operations. For fiscal 2024, Rubrik delivered a net loss of $354.2 million on revenue of $627.9 million, up from $599.82 million in fiscal 2023. Rubrik spent $482.53 million on sales and marketing in fiscal 2024. In fiscal 2023 and fiscal 2024, operating cash flow was $19.3 million and $(4.5) million, respectively, and free cash flow was $(15.0) million and $(24.5) million, respectively.
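For context, those figures can be turned into a few simple ratios. The short Python sketch below uses only the numbers cited in this article; the variable names and the choice of ratios are illustrative and are not metrics Rubrik reports in this form.

```python
# Figures cited in this article, in millions of US dollars.
revenue_fy2024 = 627.9
revenue_fy2023 = 599.82
net_loss_fy2024 = 354.2
sales_marketing_fy2024 = 482.53


def pct(part, whole):
    """Return part as a percentage of whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Year-over-year revenue growth.
revenue_growth = pct(revenue_fy2024 - revenue_fy2023, revenue_fy2023)
# Sales and marketing spend as a share of revenue.
sm_share_of_revenue = pct(sales_marketing_fy2024, revenue_fy2024)
# Net loss as a share of revenue.
net_loss_margin = pct(net_loss_fy2024, revenue_fy2024)

print(f"Revenue growth: {revenue_growth}%")                  # 4.7%
print(f"Sales and marketing / revenue: {sm_share_of_revenue}%")  # 76.8%
print(f"Net loss / revenue: {net_loss_margin}%")             # 56.4%
```

By these figures, sales and marketing consumed roughly 77% of fiscal 2024 revenue while revenue grew just under 5%.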

Much of that investment in sales and marketing is to transition customers from subscription term-licenses to SaaS. Rubrik cited this transition as a risk factor:

"We are implementing certain initiatives to accelerate our existing customers’ migration to RSC as part of our business transition to SaaS, which include enforcement of migration deadlines. These initiatives may be perceived negatively by our customers. For example, these initiatives may require customers to prioritize preparation for their migration over other organizational needs, potentially resulting in diversion of resources. For certain existing customers, the perceived benefits from undertaking the migration may be outweighed by the anticipated time and effort required to prepare for and execute the migration, resulting in potential delays in customers’ transition to RSC."

Rubrik was founded in December 2013 with products and services launched in 2016. RSC, however, has only been offered as a subscription-based cloud platform since fiscal 2023. Rubrik noted that RSC now accounts for the majority of revenue as sales of Rubrik-branded appliances, licenses and support diminish.

Leadership. Bipul Sinha, CEO, Chairman and Co-Founder, has been a software engineer, venture capitalist and a CEO. In a shareholder letter, Sinha said Rubrik is trying to leverage market disruption to create a durable business. "We built a distinct architecture to combine data and metadata from business applications across the cloud to ensure data security and availability irrespective of incidence," he said. "This allowed us to transform backup data into a strategic asset that sits at the epicenter of security and artificial intelligence."

The generative AI play. No IPO can go forward without generative AI as a hook. Rubrik said generative AI breakthroughs will require more guardrails for security, privacy and compliance. Generative AI will also mean more sophisticated attacks. In its IPO filing, Rubrik argues that its approach accounts for backup and recovery after a cyberattack.

Rubrik also offers Ruby for AI data defense and recovery. In its filing, Rubrik said:

"Ruby is designed to augment human efforts with its generative AI capabilities, helping customers scale their data security operations with automation, boosting productivity, and bridging the users’ skills gap. Ruby uses Microsoft Azure OpenAI Service in combination with our own proprietary, internally developed software. Our proprietary software augments user queries to generate prompts that are submitted to the Azure OpenAI model and also enhances the model output to generate responses presented back to the user."

The company added that it chose Microsoft Azure OpenAI Service based on its security features that keep data within Rubrik's control. Microsoft is a core Rubrik partner.
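The flow Rubrik describes for Ruby (augment the user's query into a prompt, submit it to a hosted model, then enhance the output before showing it to the user) is a common wrapper pattern. The Python sketch below is a generic illustration of that pattern only. Every name in it (`augment_query`, `enhance_output`, `answer`, `stub_model`) and the prompt format are hypothetical, the model is a local stub rather than a real Azure OpenAI call, and none of it reflects Rubrik's actual software.

```python
from typing import Callable


def augment_query(user_query: str, context: dict[str, str]) -> str:
    """Wrap the raw user query with context to form a prompt (hypothetical format)."""
    context_lines = "\n".join(
        f"{key}: {value}" for key, value in sorted(context.items())
    )
    return f"Context:\n{context_lines}\n\nQuestion: {user_query}"


def enhance_output(model_output: str) -> str:
    """Post-process raw model output before display (placeholder: trims whitespace)."""
    return model_output.strip()


def answer(user_query: str, context: dict[str, str],
           model: Callable[[str], str]) -> str:
    """Run the three steps in order: augment, call the model, enhance."""
    prompt = augment_query(user_query, context)
    raw_output = model(prompt)
    return enhance_output(raw_output)


def stub_model(prompt: str) -> str:
    """Stand-in for a hosted model; no real API is called here."""
    return f"  [stub reply to a prompt of {len(prompt)} characters]  "


if __name__ == "__main__":
    print(answer("Which backups are at risk?", {"tenant": "example"}, stub_model))
```

Passing the model in as a plain callable keeps the wrapper logic separate from whichever hosted service sits behind it, which is one way such a design can keep proprietary pre- and post-processing outside the model provider.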

Generative AI is also cited in Rubrik's lengthy risk factor section for everything from code to intellectual property, global regulation, liability and customer adoption.

What does Rubrik secure? Rubrik works across the main enterprise applications and platforms, including VMware, Microsoft Hyper-V, Microsoft SQL Server, Oracle, Microsoft Windows, Nutanix, Kubernetes, Cassandra, MongoDB, Linux, UNIX, AIX, NAS, Epic, SAP HANA, Google Cloud, Azure, AWS, M365 (Microsoft Teams, SharePoint, Exchange Online, and OneDrive), and Atlassian Jira Cloud.

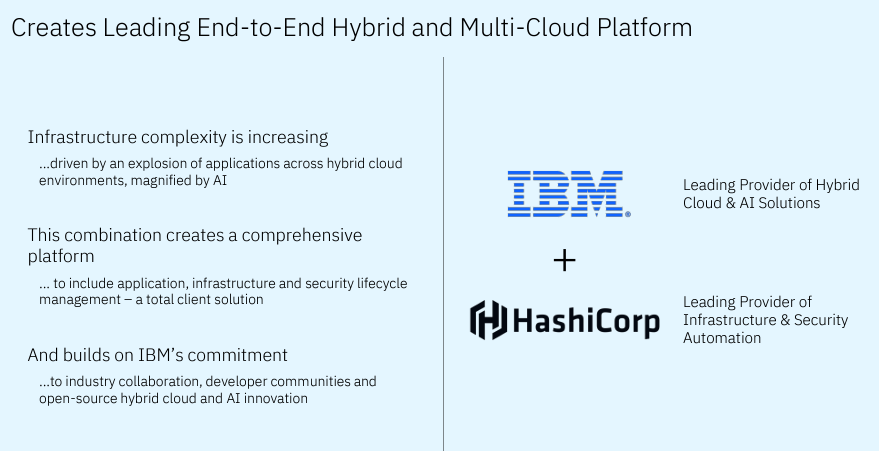

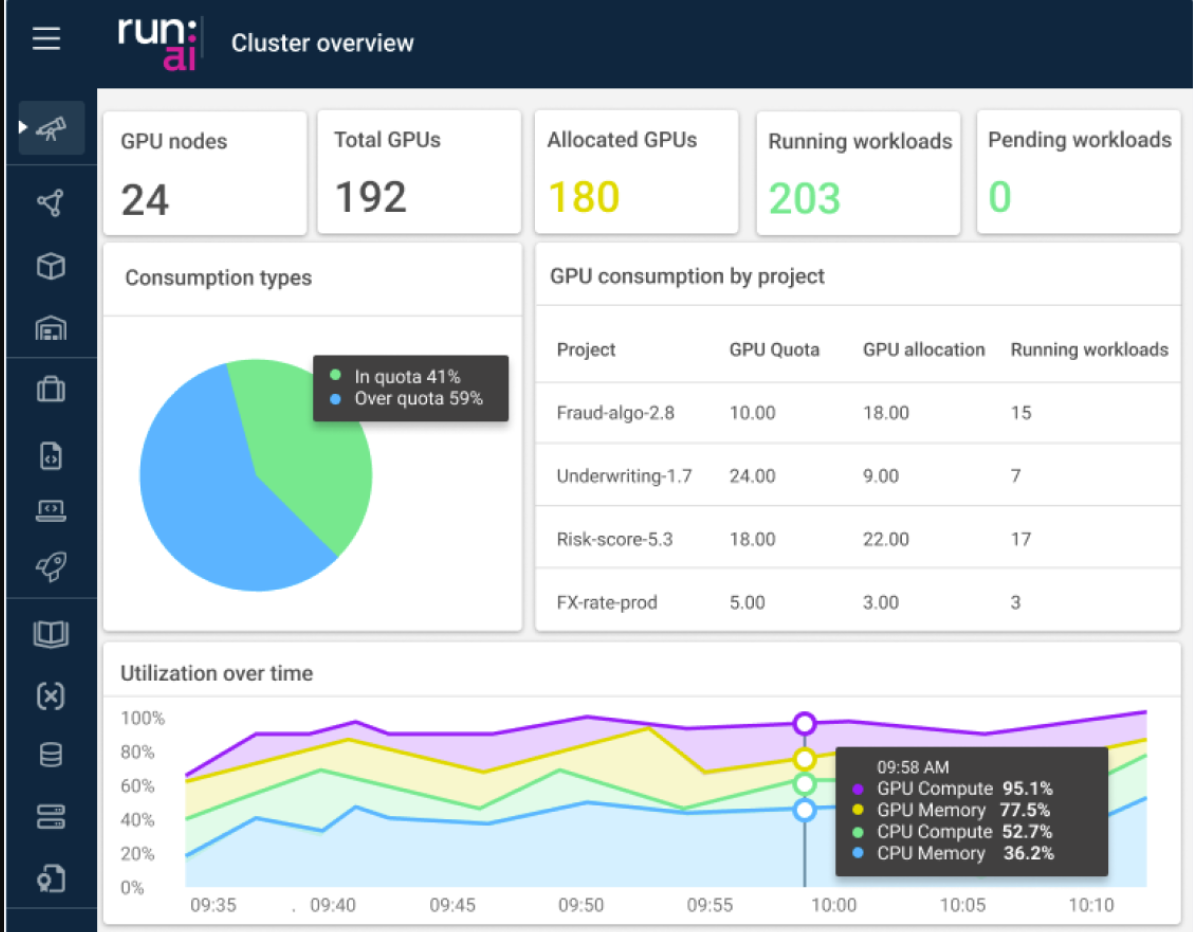

Competition. Multiple competitors were cited including Dell Technologies, IBM, Commvault, Veeam and Cohesity as well as cloud data management vendors in some areas and broader cybersecurity platforms.

Supermicro makes Rubrik-branded appliances. Rubrik said: "A large majority of the customer enterprise data we secure relies upon Rubrik-branded Appliances, which are currently built on servers supplied and designed by Super Micro Computer, Inc., or Supermicro."

Rubrik offers a Ransomware Recovery Warranty. Rubrik said one risk is liability. The company said:

"As part of our ransomware recovery warranty, or the Ransomware Recovery Warranty, we also provide certain customers with up to $10,000,000 for recovery expenses related to data recovery and restoration in the event that data backed up using our solutions cannot be recovered following a ransomware attack. As part of the Ransomware Recovery Warranty, if an eligible customer’s data that has been backed-up onto a Rubrik-branded Appliance, Rubrik-certified compatible third-party commodity server, or a Rubrik-hosted cloud platform, is not successfully recovered by way of one of our data security products due to a failure of such solution, we will reimburse the customer for its reasonable and necessary fees and expenses to restore, recover, or recreate its data up to $10,000,000. As of January 31, 2024, there had been no claims made under the Ransomware Recovery Warranty."
