Workday's Q3 highlights push, pull, patience of its AI strategy

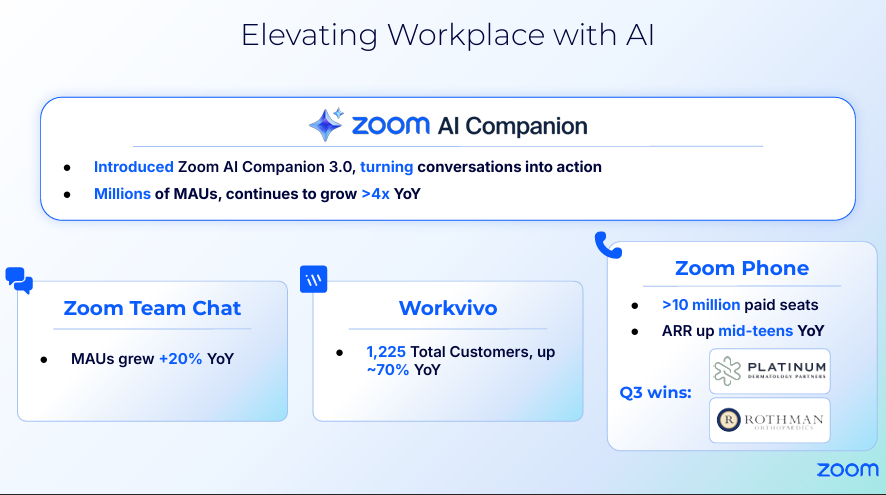

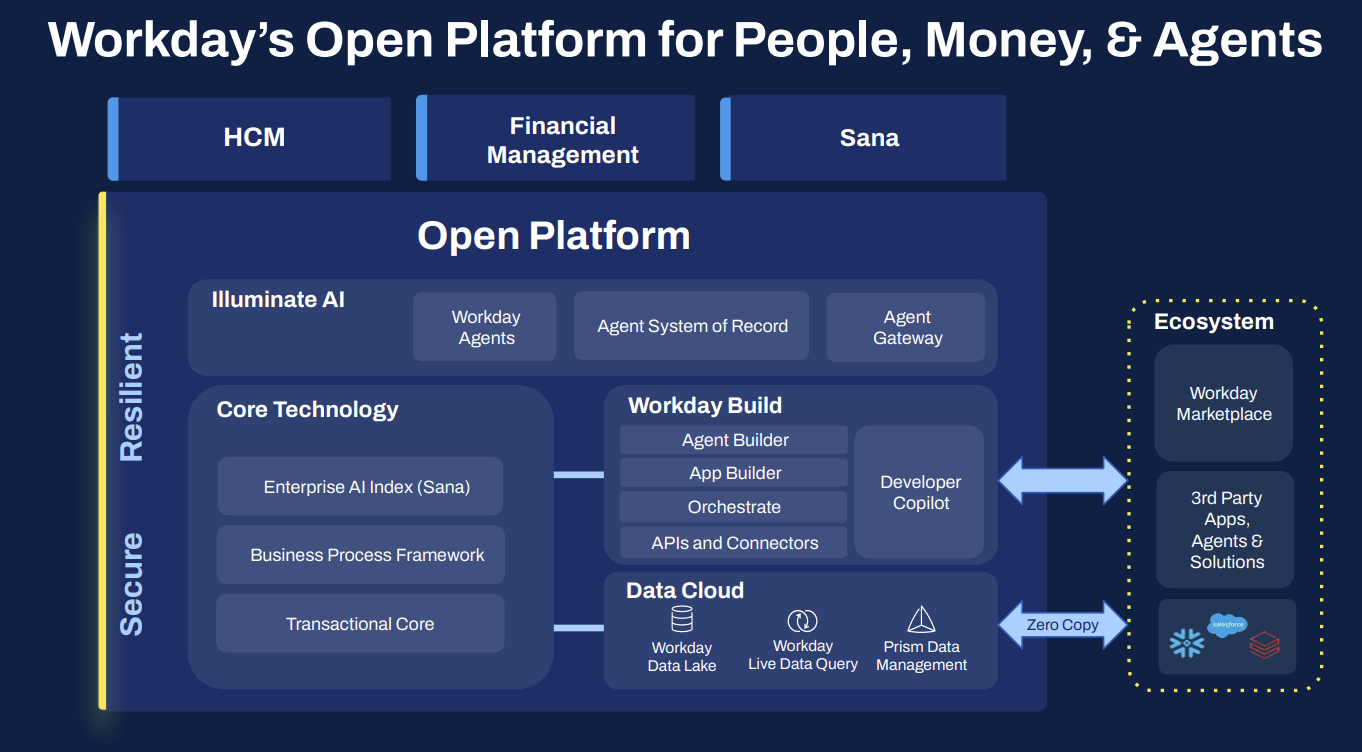

Workday is rounding out its AI strategy, building out its platform with multiple tuck-in acquisitions and positioning itself as an AI agent player on the strength of its unified HR and finance data.

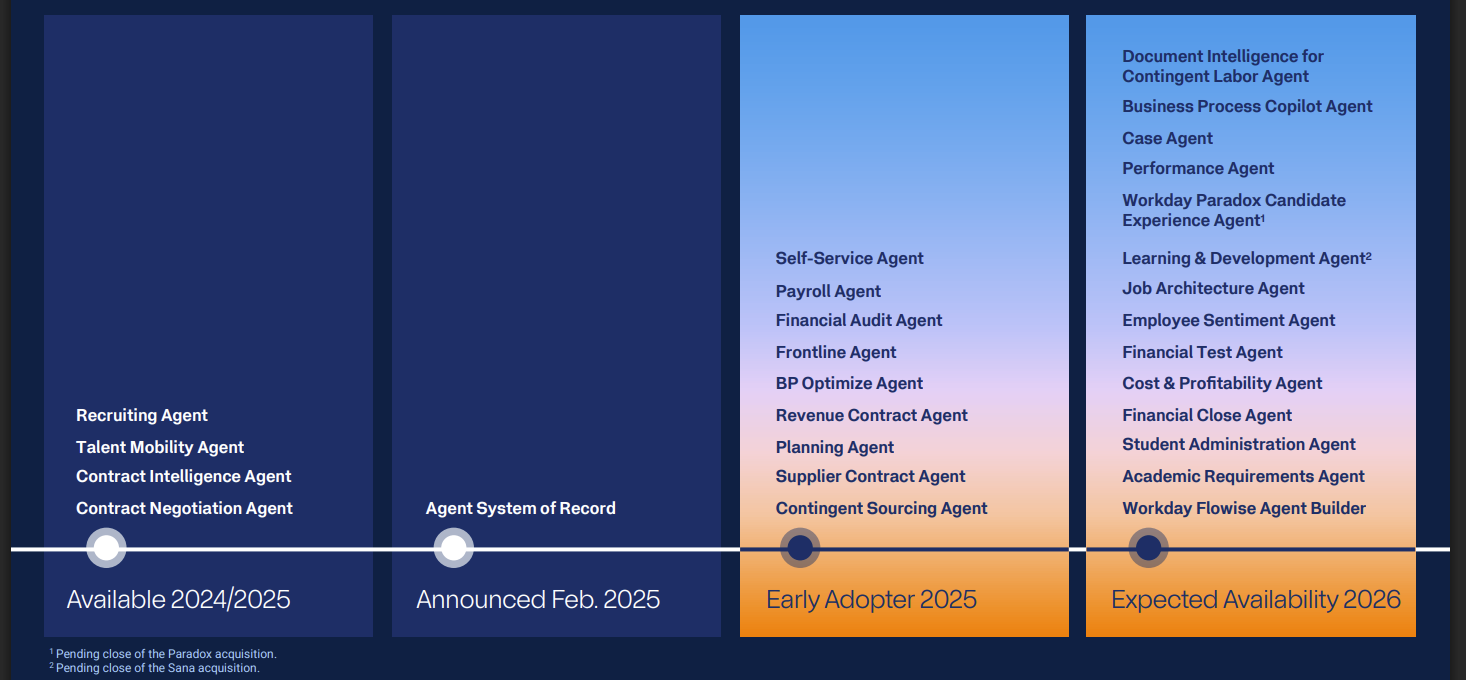

The company's third-quarter earnings were better than expected, but they also show that the grand AI strategy outlined at Workday Rising is just getting started.

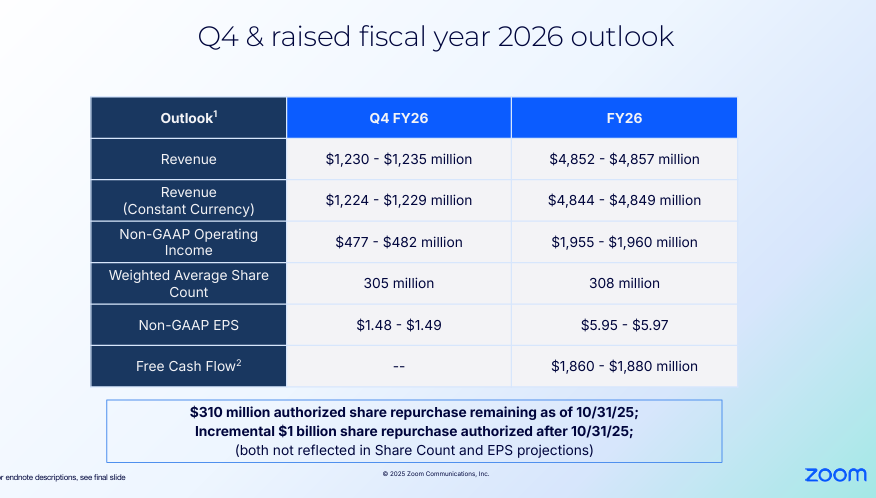

Workday reported third quarter net income of $252 million, or 94 cents a share, on revenue of $2.43 billion, up 12.6% from a year ago. Non-GAAP earnings were $2.32 a share, 15 cents ahead of Wall Street estimates. As for the outlook, Workday projected fourth quarter subscription revenue growth of 15.5%. For fiscal 2026, Workday projected subscription revenue growth of 14.4%. Fourth quarter operating margin guidance of 28.5% was slightly lower than expected.

Workday has had a busy few months.

- Workday acquires Pipedream, launches midmarket focused Workday GO

- Workday launches Workday Data Cloud, Workday Build, more AI agents

- Workday spends $1.1 billion on Sana, aims to be front door to work

- Workday buys Paradox to build out its recruiting suite, delivers strong Q2
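For readers who want to sanity-check the headline numbers, the reported quarterly figures can be unpacked with simple arithmetic. The sketch below is a back-of-envelope illustration only: the inputs are the figures reported in this article, and the variable names and derived values are assumptions for illustration, not numbers Workday disclosed.

```python
# Inputs are the figures reported in the article; everything derived from
# them is a rough, illustrative estimate rather than a disclosed number.
revenue_q3 = 2.43e9       # total third-quarter revenue, USD
revenue_growth = 0.126    # year-over-year revenue growth (12.6%)
non_gaap_eps = 2.32       # non-GAAP earnings per share, USD
eps_beat = 0.15           # amount ahead of Wall Street estimates, USD

# Year-ago revenue implied by 12.6% growth: current / (1 + growth).
implied_prior_revenue = revenue_q3 / (1 + revenue_growth)

# Consensus estimate implied by a 15-cent beat: reported minus beat.
implied_consensus_eps = non_gaap_eps - eps_beat

print(f"Implied year-ago revenue: ${implied_prior_revenue / 1e9:.2f}B")
print(f"Implied consensus non-GAAP EPS: ${implied_consensus_eps:.2f}")
```

Under these inputs, the implied year-ago revenue is roughly $2.16 billion and the implied consensus estimate is about $2.17 a share. Both are subject to rounding in the reported figures.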

"I've been on the road a lot lately, meeting with our customers and prospects, and they're all saying the same thing. They see the potential of AI but they're stuck with disconnected systems, bad data and closed platforms. That's where Workday gives them the ultimate advantage by unifying HR and finance on one intelligent platform," said Workday CEO Carl Eschenbach.

The catch is that Workday has just closed key acquisitions to build out its intelligent platform and announced a few more designed to connect AI agents broadly across the enterprise.

"While other vendors confuse the market with thousands of overlapping general purpose agents, we're focused on what we do best, and that is building powerful agents for HR and finance that deliver real ROI and measurable business value," said Eschenbach, who said data quality is hindering enterprise AI.

If this theme sounds familiar, it's because you've heard something similar from multiple enterprise software vendors. You've also heard the same argument from the AI-driven startups looking to become the new SaaS leaders.

Related research:

- Constellation Research's CXO Confidence Survey

- Constellation Research's 2025 AI Survey

- Decision Velocity in the Agentic Era: Architecting for Decision Automation

- Augmenting and Accelerating Frontline Productivity

- Workday Extend Writes Its Next Chapter: AI

- Case Study - Workday Australia Payroll

Making sense of Workday's acquisitions

Workday has been on a run of tuck-in acquisitions since its February 2024 purchase of HiredScore. The company just announced the acquisition of Pipedream and closed Sana, which is billed as the future front door to Workday. Paradox, Flowise and Evisort are all deals that are designed to expand Workday's AI agent ambitions.

- Workday acquires Flowise to speed up building AI agents, automated workflows

- Workday aims to be system of record for AI agents, digital labor

These tuck-in deals have largely created Workday's AI agent flywheel. They also give Workday attach products to sell alongside its core platforms. For instance, Eschenbach said on Workday's third quarter earnings call that the company is selling Paradox, which focuses on frontline workers, attached to its recruiting software. HiredScore also rides along with recruiting.

Eschenbach said:

"We have the industry-leading AI recruiting platform out there today. At the same time, this is now a new product that is a land-only product for our sales force who can now go and sell Paradox not only on top of Workday or back into our installed base, but also into our competitors' environment. In fact, a significant portion of their existing customers aren't Workday today. And we're going to continue to leverage that go-to-market model, so it gives us another land product without someone having to decide completely on Workday, HR or finance, they can go just with Paradox. And I've seen that come up multiple times just in the first 60 days of us having this great asset."

As for the returns, Eschenbach noted that "for every dollar of recruiting we sell, we sell about $2.50 of HiredScore on top of it." Evisort, a document intelligence company focused on contract management that Workday acquired in Sept. 2024, is growing at a triple-digit clip. And Paradox opens the frontline worker recruiting market to Workday.

Zane Rowe, Workday CFO, said both Sana and Paradox are contributing 1.5 points to the company's fourth quarter subscription revenue outlook.

Sana will follow a similar playbook and be sold on top of Workday Learning.

"And then obviously, we're going to refresh our UI/UX, leveraging the Sana platform going forward," said Eschenbach.

Gerrit Kazmaier, President of Product and Technology, said on the third quarter earnings call that Sana will be the "leading UI experience for Workday" and a "complete conversational experience."

He said:

"Imagine every employee having access to HR and finance AI at scale. What that means in cost reduction. On the other side, you can see what drives that interest. And thirdly, Sana goes much more beyond that. And I would recommend you look at the big picture with also Pipedream, adding 3,000 connectors to the Sana platform, which now allows our customers to take Sana knowledge management actions in Workday and the actions that Pipedream adds to really drive enterprise-wide AI transformation with that model."

What Workday is doing is acquiring add-on features and front ends for the company's HR and financial data.

Using a multi-cloud approach built on AWS and Google Cloud, Workday is mandating that customers move from its own data centers to public cloud. The thinking is that Workday will be able to leverage best-of-breed tooling and spin out innovation faster.

Workday customers will have to update Workday tenant URLs and reconfigure integrations.

The big picture

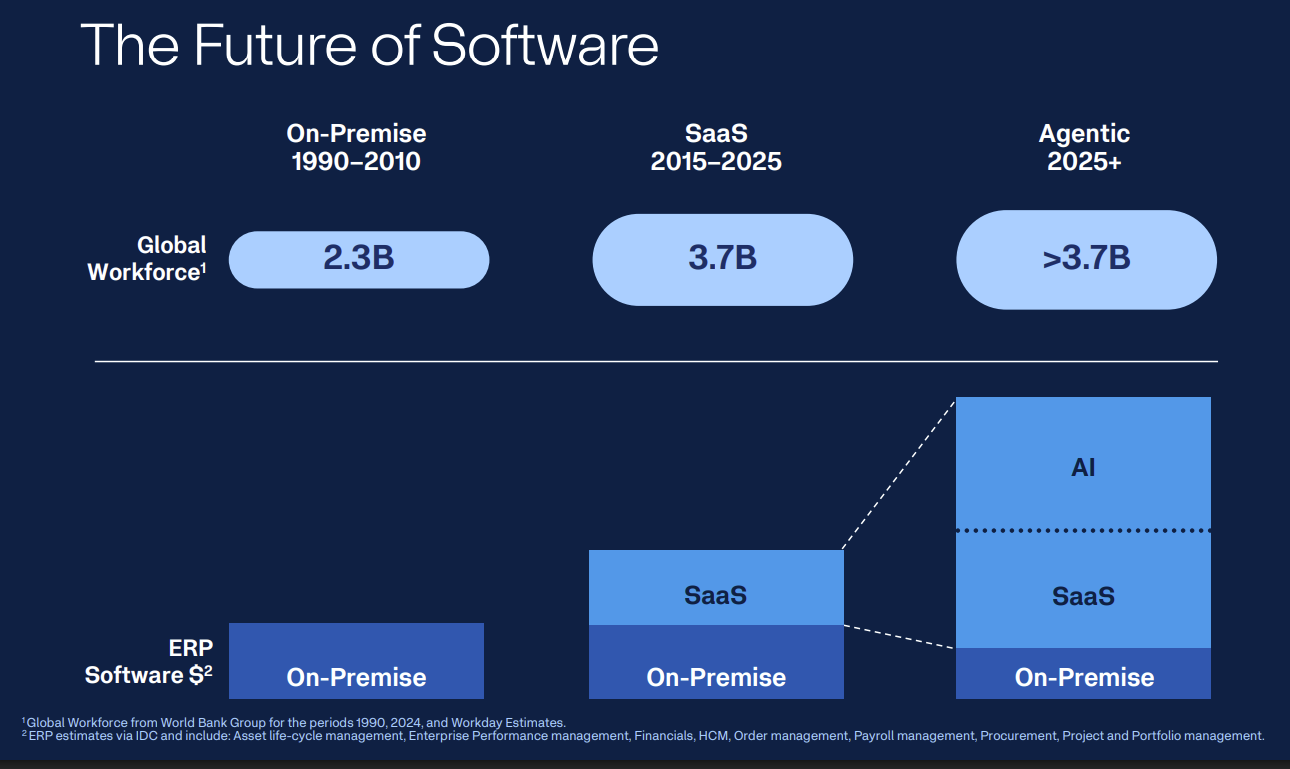

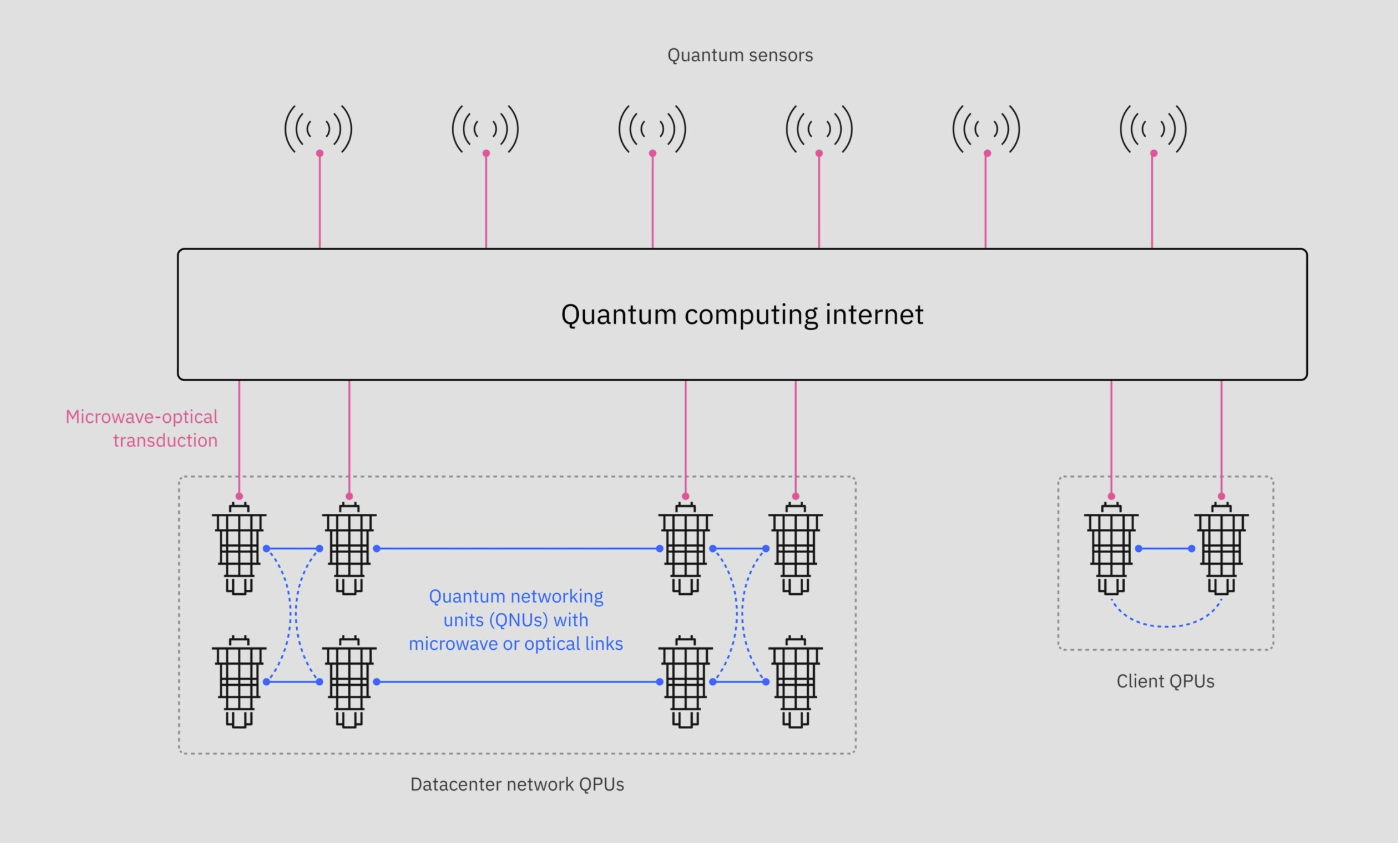

What Workday is really working toward is an army of controlled AI agents focused on driving enterprise productivity and processes. But to do that you need the data and process intelligence.

Eschenbach said during Workday's Analyst/Investor Day at Workday Rising: "It's not about the quantity of agents you're bringing to market. It's the quality of agents and they have to drive real business value. They have to drive real outcomes."

Kazmaier said AI will be the new UI and enterprise vendors will have to provide leading experiences or lose share.

Speaking on Workday's earnings call, Kazmaier outlined the key ingredients for deploying agentic AI and delivering on Workday's ambition:

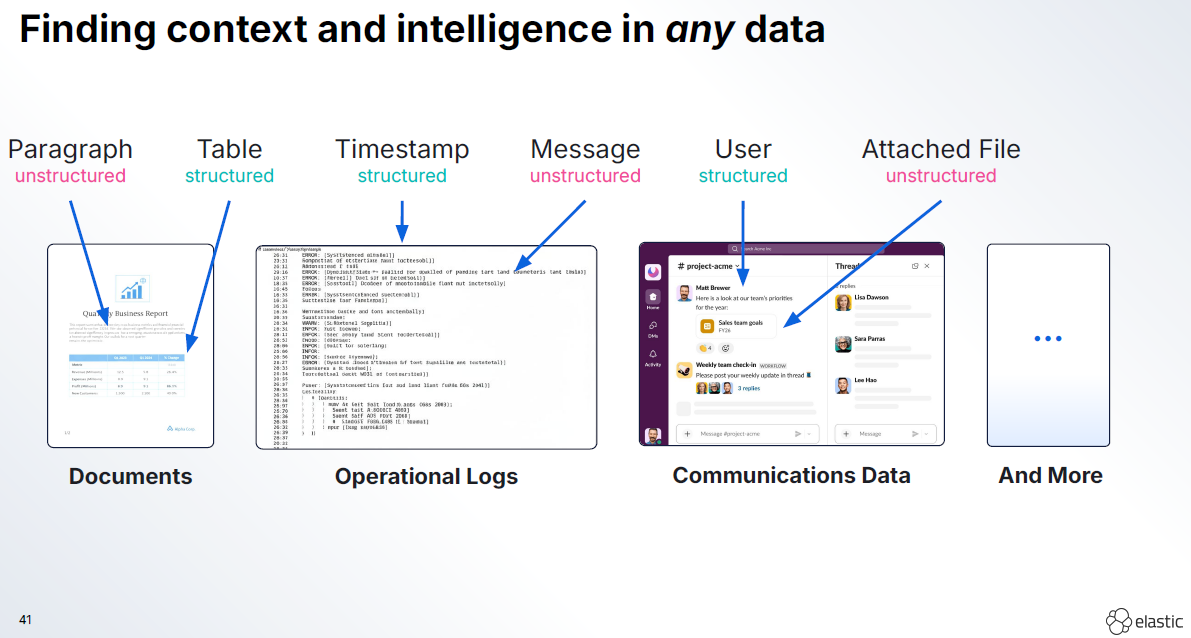

- "The first thing that you need is a vast set of data, which basically describes the domain, the domain of finance, the domain of HR. And you need a vast set of data that basically codifies how their data is being used."

- "You need to have strong semantics and clarity about what data we present. You need to have a data model that defines what data element represents what entity in the business? What do they relate on? And what are the rules for these business entities? They have integrity, they have meaning, they have purpose."

- "You need to have a business process system, which now basically tells you how to activate this data in a way that can drive towards a business outcome. Even more so, you need to have clarity on what that business outcome is."
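To make those three ingredients concrete, here is a minimal sketch of how domain data, a semantic data model with rules, and a process step with a defined outcome might fit together. Everything in it is hypothetical: the `Worker` and `CostCenter` entities, the `validate` rules and the `headcount_cost` step are illustrative assumptions, not Workday's data model or API.

```python
from dataclasses import dataclass

# Hypothetical entities for illustration; not Workday's actual schema.
@dataclass(frozen=True)
class CostCenter:
    code: str
    name: str

@dataclass(frozen=True)
class Worker:
    worker_id: str
    name: str
    cost_center: CostCenter   # relationship linking an HR entity to a finance entity
    annual_salary: float

def validate(worker: Worker) -> list[str]:
    """Return integrity-rule violations; an empty list means the record is valid."""
    problems = []
    if worker.annual_salary <= 0:
        problems.append("salary must be positive")
    if not worker.cost_center.code:
        problems.append("worker must belong to a cost center")
    return problems

def headcount_cost(workers: list[Worker]) -> dict[str, float]:
    """Process step with an explicit outcome: total salary cost per cost center."""
    totals: dict[str, float] = {}
    for w in workers:
        if validate(w):
            continue  # skip records that break the data model's rules
        code = w.cost_center.code
        totals[code] = totals.get(code, 0.0) + w.annual_salary
    return totals

# Made-up sample data: two valid workers and one with a missing cost center.
engineering = CostCenter("CC-100", "Engineering")
workers = [
    Worker("w1", "Ana", engineering, 120000.0),
    Worker("w2", "Ben", engineering, 95000.0),
    Worker("w3", "Cy", CostCenter("", "Unknown"), 80000.0),
]
print(headcount_cost(workers))
```

The point of the sketch is that an agent working against typed, related and validated records has less guessing to do than one working against disconnected data, which is the gist of Kazmaier's argument.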

Workday's argument is that its consolidated data set and process data are the differentiator. The bet is that data and process drive AI, not the other way around.