How ResMed’s data prowess sets it up for AI, sleep health market expansion

Editor in Chief of Constellation Insights

Constellation Research

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...


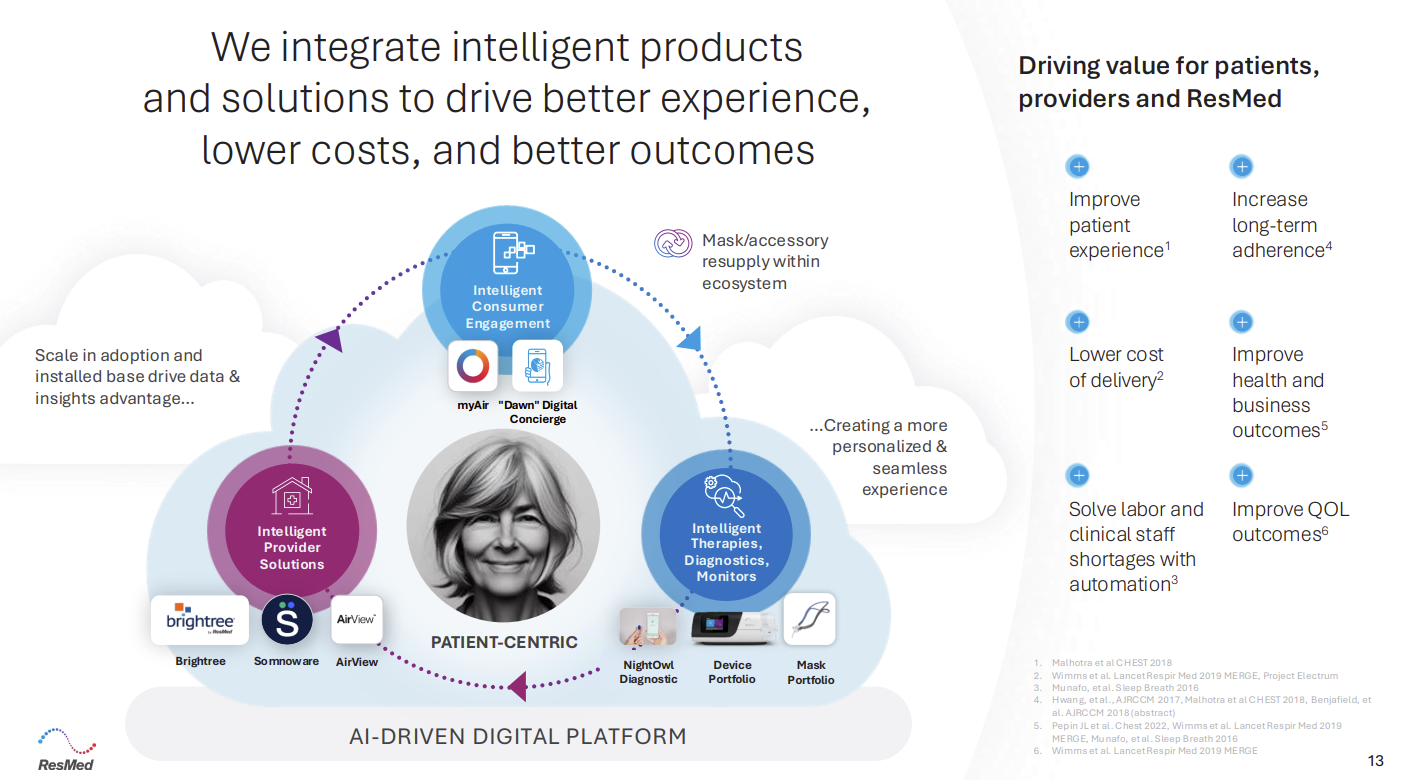

ResMed is best known for its CPAP devices, medical devices and masks, but it's also a software company with a treasure trove of sleep and breathing data. The plan for ResMed: Leverage artificial intelligence, generative AI and machine learning to grow its market.

The company’s digital health data set includes:

- 28 million patients in ResMed's AirView software ecosystem.

- 26 million medical devices with 100% cloud connectivity.

- 20 billion nights of sleep health and breathing health data in the cloud across 140 countries.

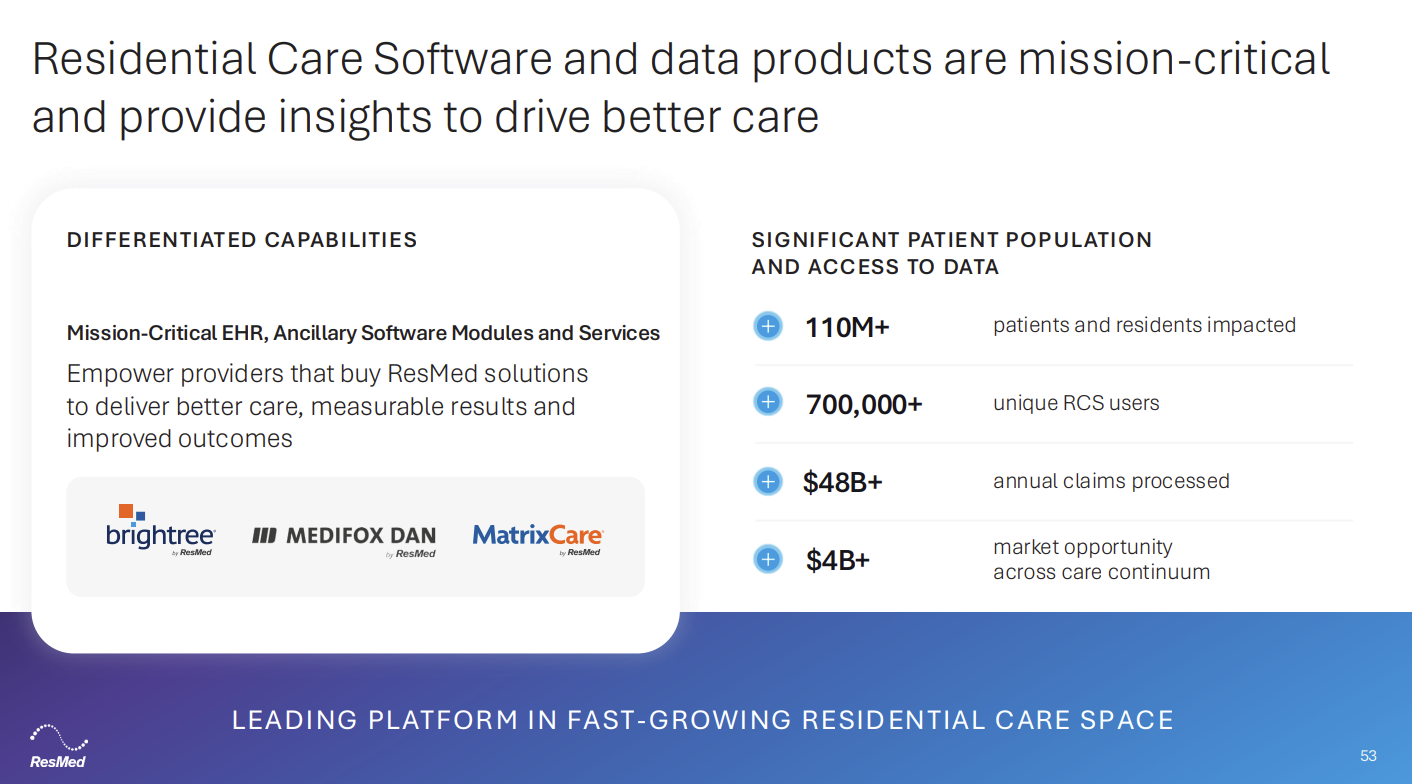

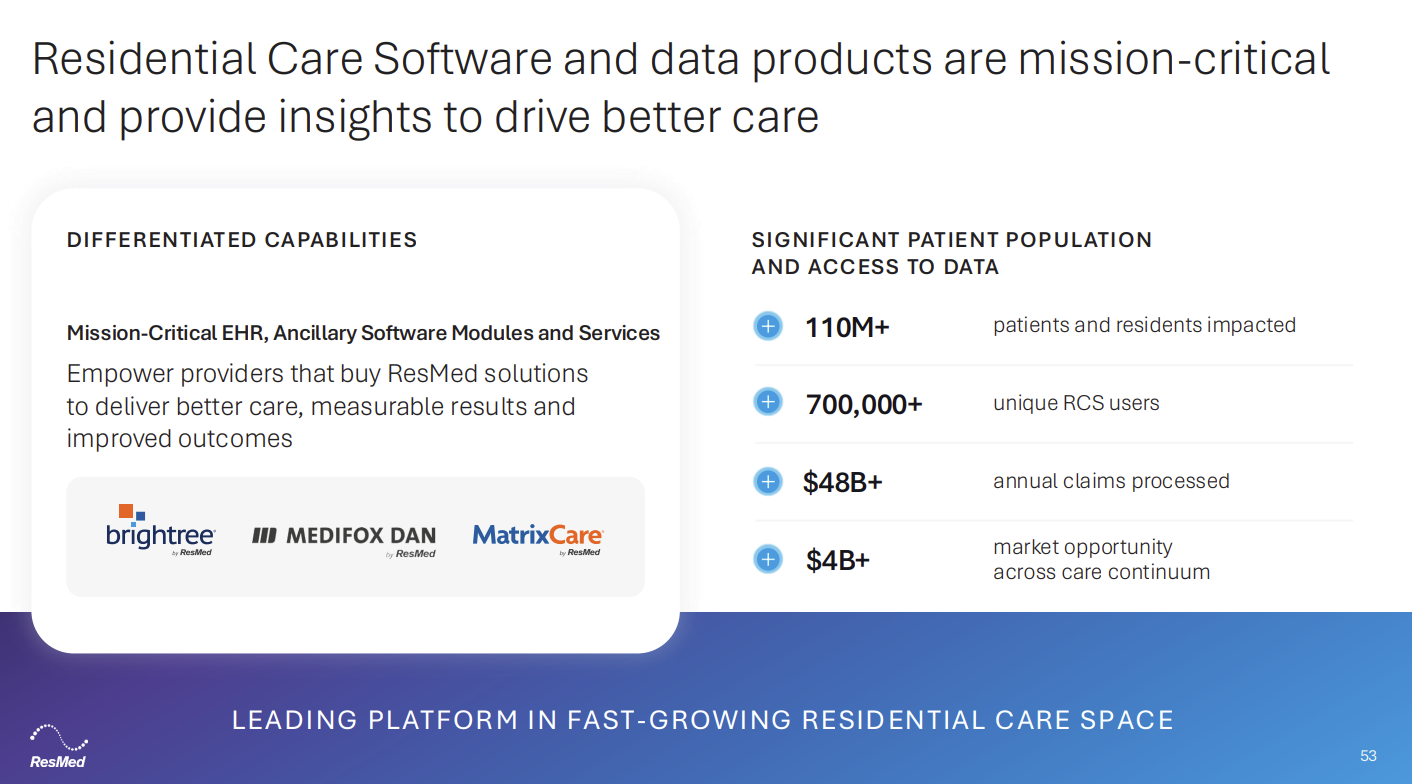

- 150 million accounts in ResMed's residential care software ecosystem.

- 8.3 million patients with ResMed's myAir patient app.

And a newly launched generative AI digital concierge called Dawn will add more interactions. ResMed is an example of how enterprises are increasingly using proprietary data to create unique AI applications and new products and services.

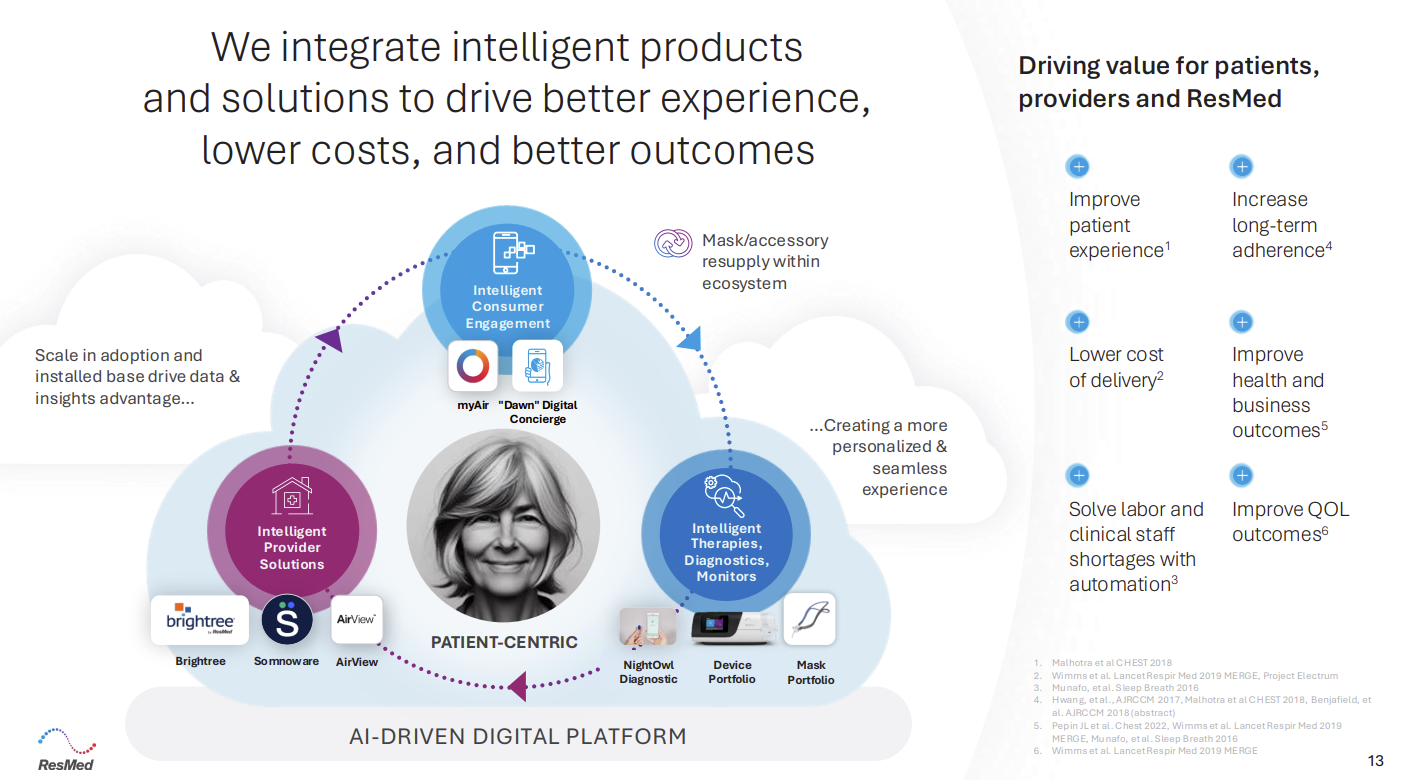

Here is the flywheel that ResMed is trying to leverage.

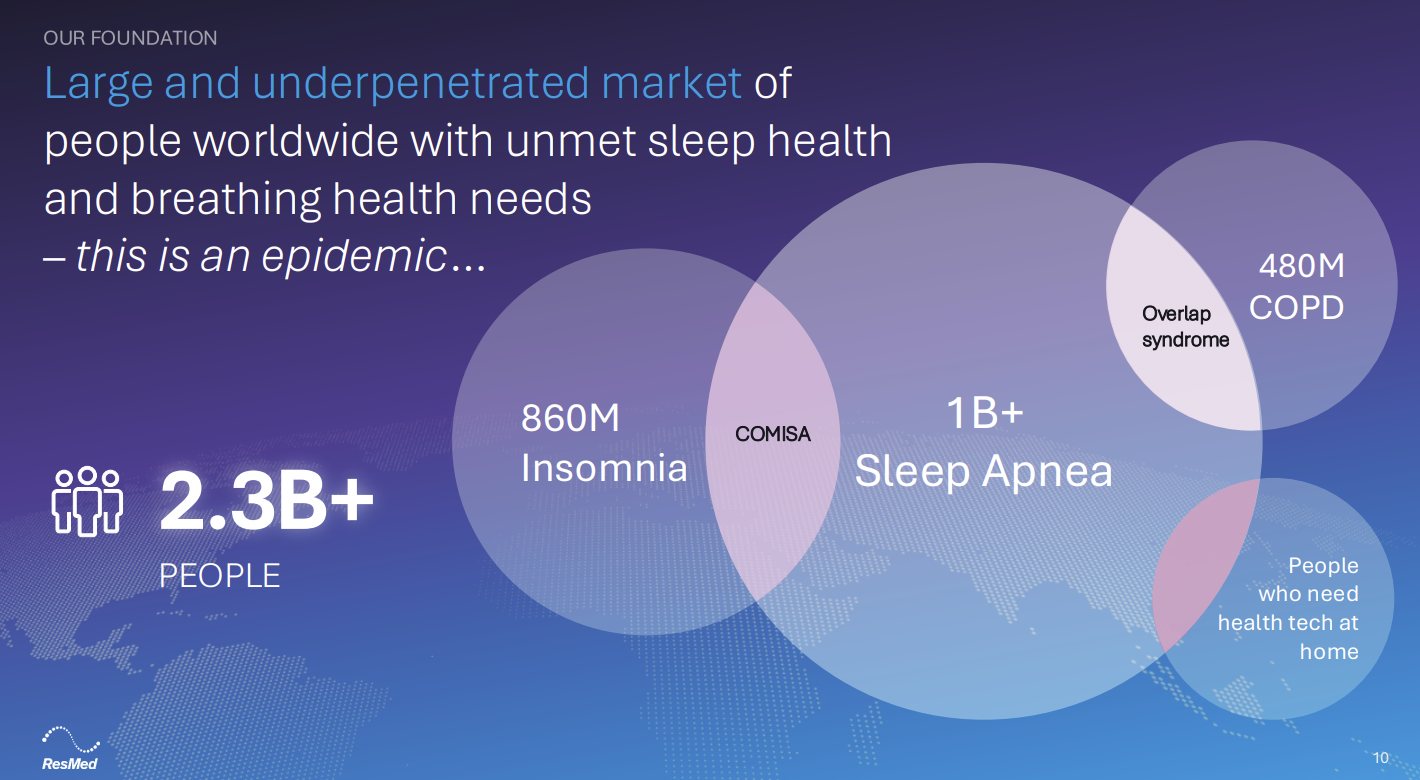

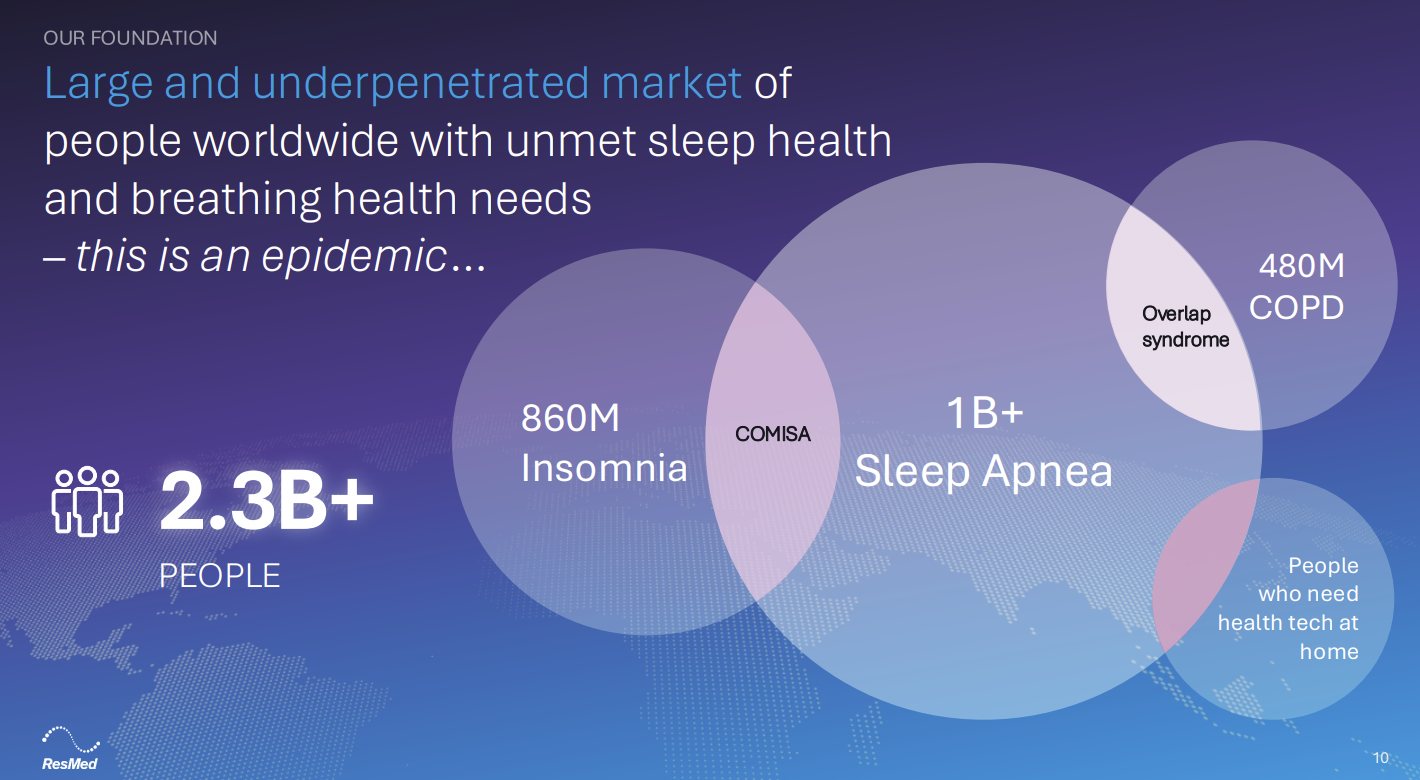

The bet is that ResMed has a massive untapped market that includes 2.3 billion people with sleep and breathing disorders.

On its investor day, ResMed CEO Michael Farrell said:

"The overlap between a person who suffocates every night with sleep apnea and who also has a psychological reason that they cannot sleep. It is incredibly difficult to treat insomnia. If you suffocate as well, it becomes a double wheeled problem. We don't know how many of our patients are not adherent to CPAP because they have insomnia. But that overlap is significant. And ResMed is investing in digital health on both sides of that.

The other overlap there is what's called overlap syndrome, which is chronic obstructive pulmonary disease and sleep apnea. You have difficulty breathing because of the geometry of the upper airway. But then in addition to that, you have lung disease. These are some of the most difficult patients to treat, and ResMed has the technologies, the bilevels, the ventilators, but also the digital health technology that can help physicians take care of these patients."

ResMed plans to expand into adjacent markets including insomnia, chronic obstructive pulmonary disease (COPD), neuromuscular disease and other chronic conditions. ResMed is also working to make its core medical devices smaller and more comfortable.

Data driven

The idea that ResMed could expand its market is quite a turnabout considering that, until recently, many Wall Street analysts thought the company's total addressable market would shrink.

ResMed's approach to data has helped it navigate a volatile 18 months, as investors worried about how GLP-1 drugs used to treat obesity would affect sales of its medical equipment. The thinking behind the stock volatility was that lower obesity rates would hamper sales.

ResMed's approach to the GLP-1 threat was to analyze the data and continually test whether investor fears were warranted. Farrell said on the company's first quarter earnings call that the data so far shows patients on GLP-1 drugs are more likely to start sleep apnea therapy and wear their CPAP (continuous positive airway pressure) devices.

"We've designed a real-world data analysis that now equals 989,000 subjects, who received both a prescription for a GLP-1 medication and a prescription for positive airway pressure therapy," said Farrell. "The results from this analysis are clear. People prescribed a GLP-1 and PAP therapy have 10.8 percentage points more likelihood or propensity to commence positive airway pressure therapy."

Patients with both a GLP-1 prescription and a PAP prescription are also more likely to adhere to long-term therapy, based on ResMed's analysis of ReSupply data.

Indeed, ResMed's first quarter revenue was up 11%, and the company saw strong demand for its medical devices, masks, accessories and residential care software. A program called ReSupply keeps patient supply sales flowing.

The data flywheel

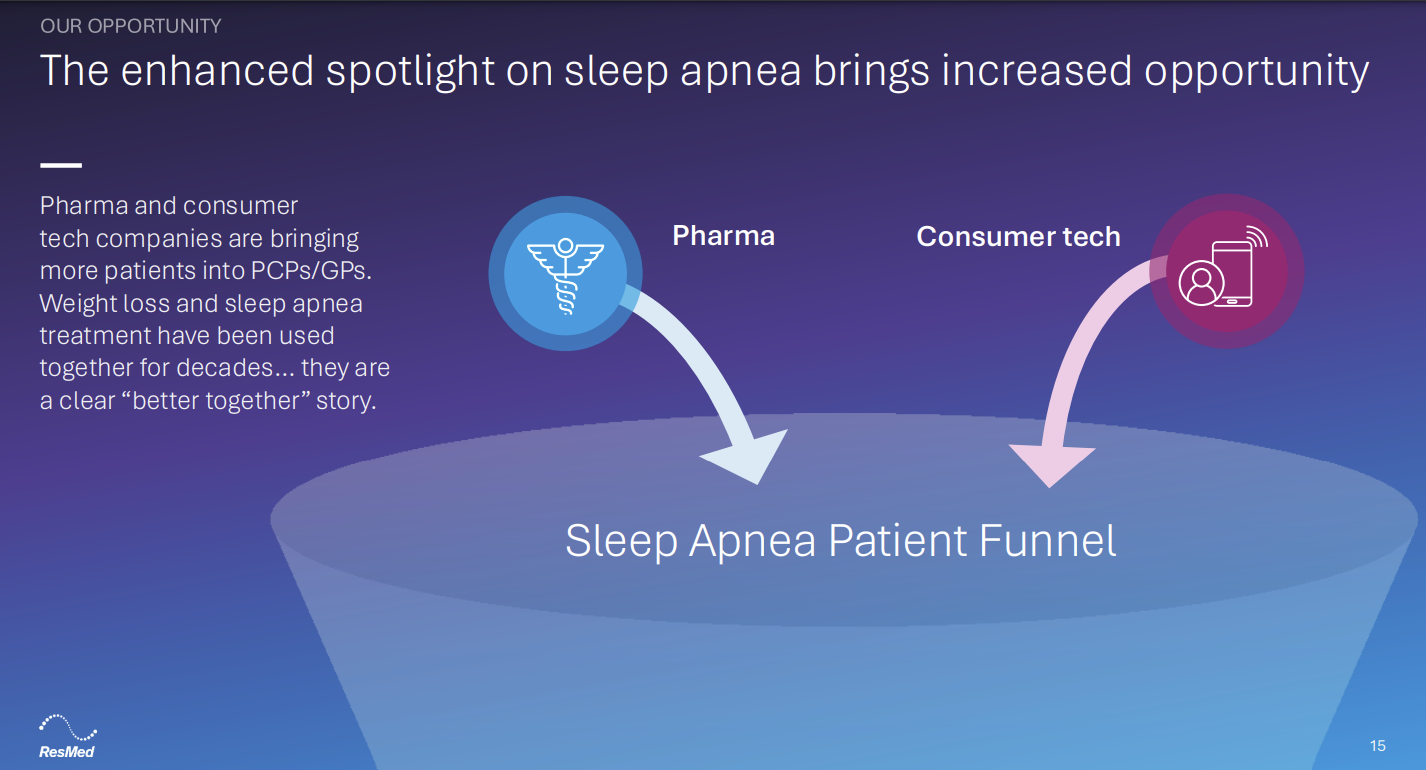

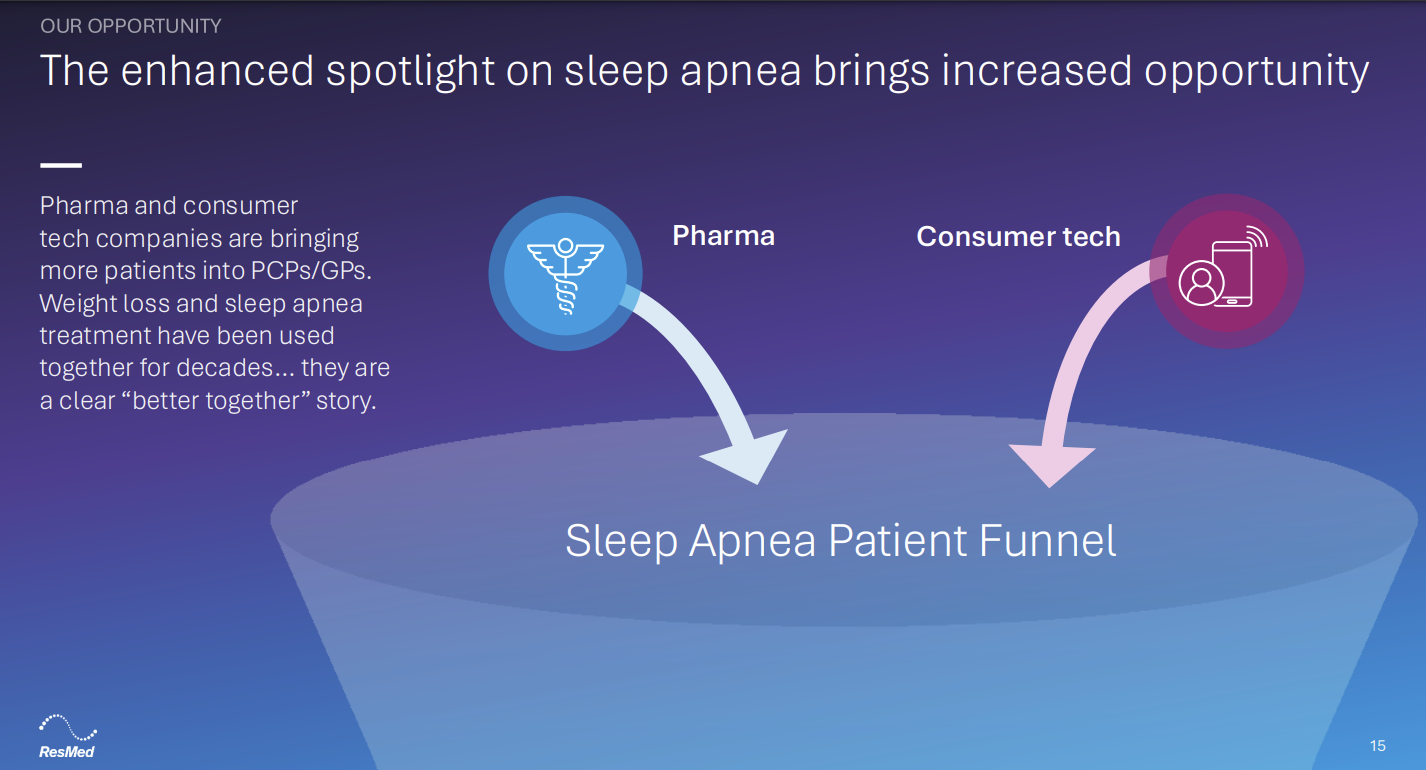

ResMed has a vast amount of sleep and breathing data on patients, but the company is also getting an assist from consumer wearable devices, which are increasingly flagging sleep apnea issues.

Farrell said Samsung's latest Galaxy Watch and Apple's new Apple Watches are detecting sleep issues. Google's Fitbit and Garmin are also tracking sleep health. "We believe that these technologies will help drive more patients to seek out information regarding their sleep health and breathing health," said Farrell. "ResMed's obligation is to help these sleep health and breathing health consumers find their own pathway to appropriate diagnosis and treatment for sleep apnea."

The consumer wearable market is likely to feed ResMed's patient funnel in the future. ResMed executives said the company plans to integrate with Apple HealthKit and other platforms and pursue strategic partnerships.

ResMed's data lake is one of the "deepest and most profound location of medical data on the planet," according to Farrell. That common data platform will remain an asset, unlocking value from de-identified data.

"What have we done with that? We've lowered costs. We lowered the cost of setting a patient up on positive airway pressure by 50% through the digital pathways. We've increased adherence, up to 87% from patients who are using myAir app on top of the doctor using AirView and the full connectivity," said Farrell. "What real-world data is going to come forward over the next five years, what are we going to do with the exponential technology that is generative AI and how are we going to take it to the next level?"

Farrell said patients will also create their own personalized data sets as they combine sleep health data with cardiovascular, diabetes and other data, and then engage more effectively with health systems. "I think the outcomes will be there," said Farrell. "We see the person in the center. This is patient centric."

AI plans

ResMed is already seeing early returns from its Dawn generative AI assistant. A few months after launch, about 25% of visitors have initiated a session with Dawn, Farrell said.

These sessions have reduced the volume of queries going directly to live human agents in the contact center by 40%.

That example highlights one half of ResMed's two-pronged AI strategy: using AI to drive productivity in the company. Internally, ResMed is using AI to automate operations and processes involving health providers, insurers and the supply chain, said Bobby Ghoshal, Chief Commercial Officer, SaaS at ResMed.

"Our plan is to further infuse automation and AI across this entire process and specifically, to reduce friction and the patient intake side around documentation, authorization and billing," said Ghoshal.

ResMed is also betting that AI will generate revenue through new products and services as well as personalized experiences.

Hemanth Reddy, ResMed's Chief Strategy Officer, said the company's 2040 strategy is to expand its market and use its data assets to harness the latest advancements in AI.

"We're going to connect our solutions much more deeply as one single integrated health technology ecosystem across an individual's patient journey. In doing so, we're going to drive much more personalized and digital-enabled pathways," said Reddy.

ResMed's plan is to benchmark itself against successful technology companies in terms of product management and speed.

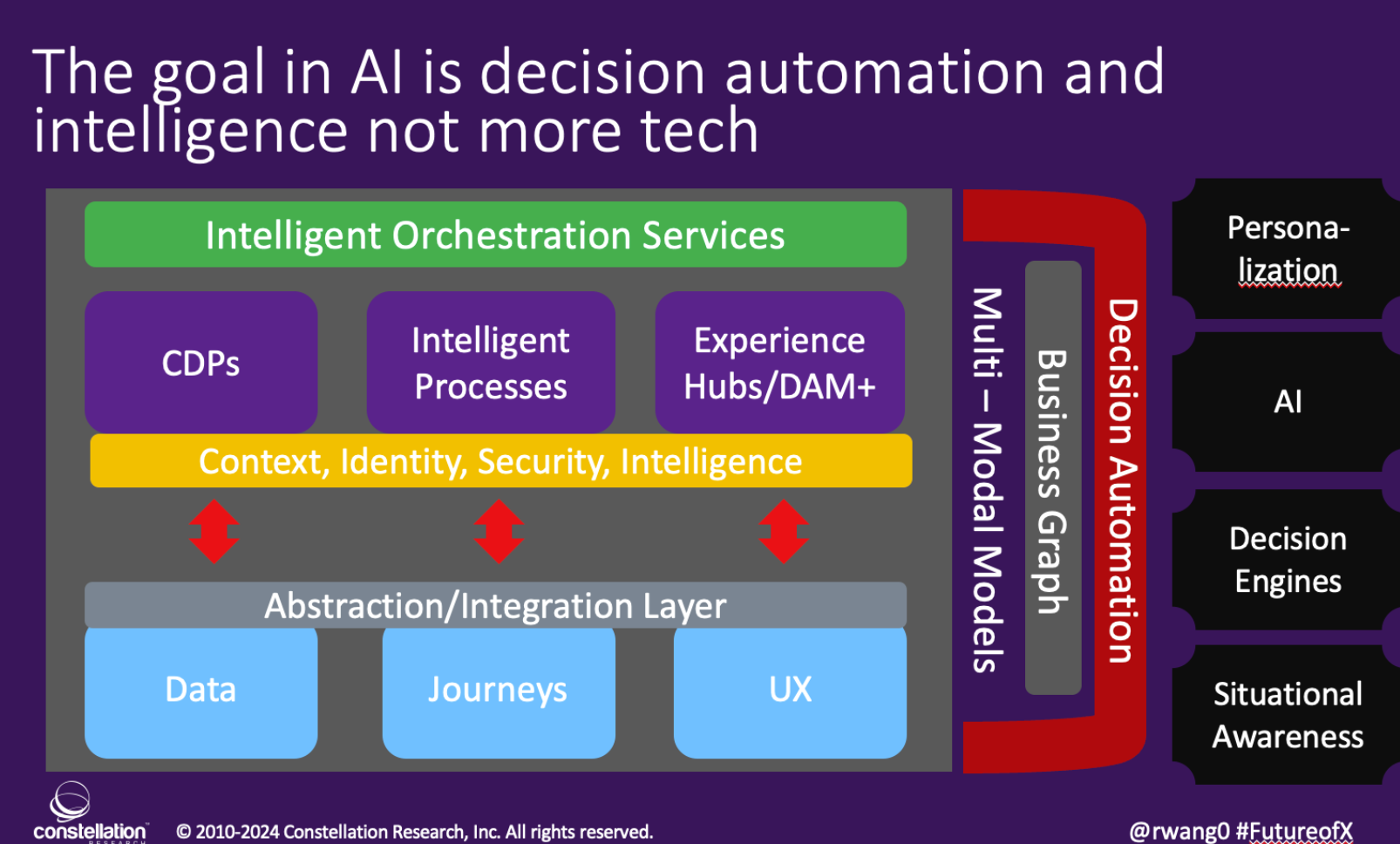

However, ResMed knows its core strengths and where AI fits in. "ResMed is not going to be the world's best at AI. That's going to be Amazon and Microsoft and Google. But we are going to be the world's best at applying generative AI to the world's biggest data lake. I actually call it a data well of sleep health and breathing health information on the planet," said Farrell.

The ResMed stack

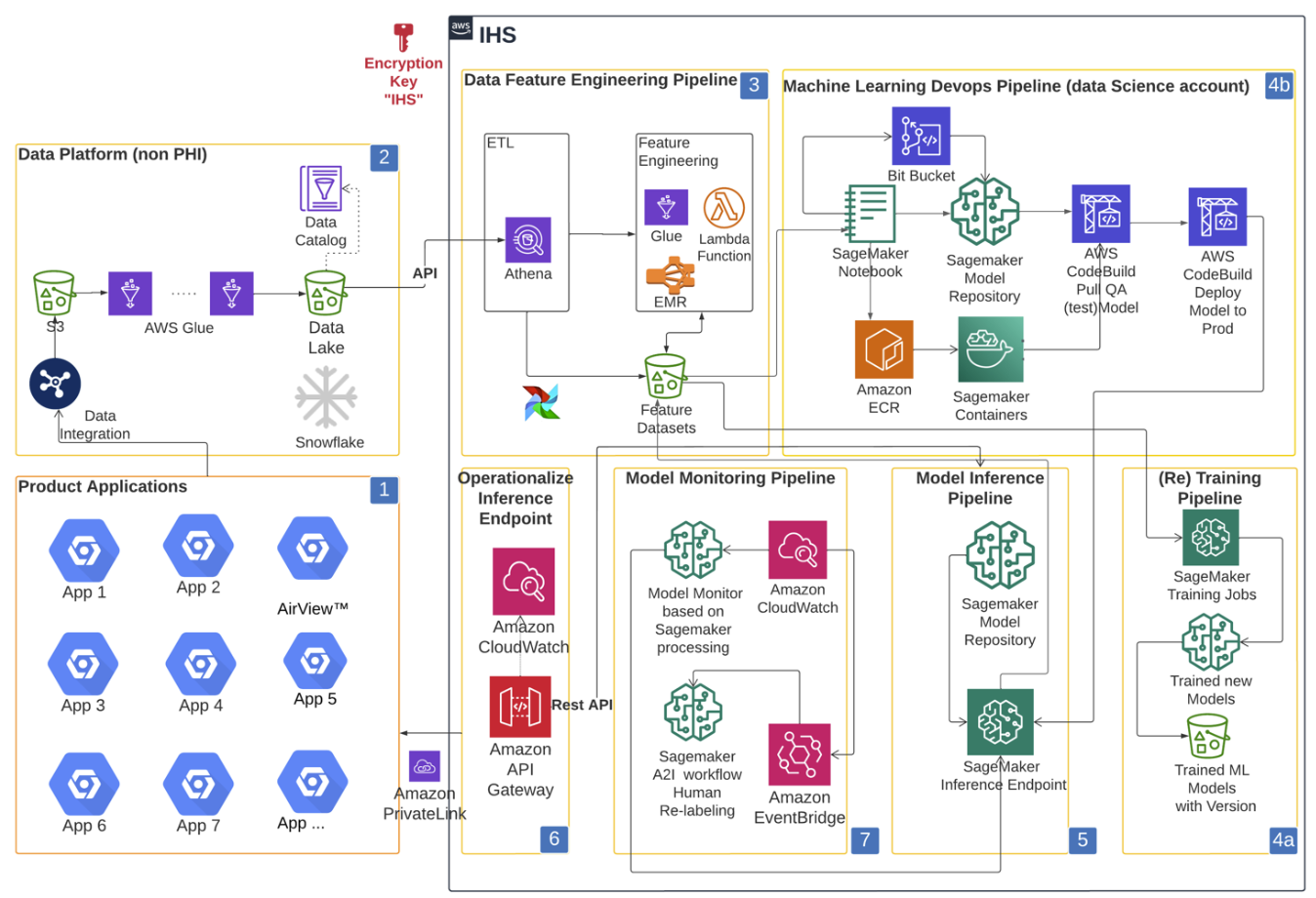

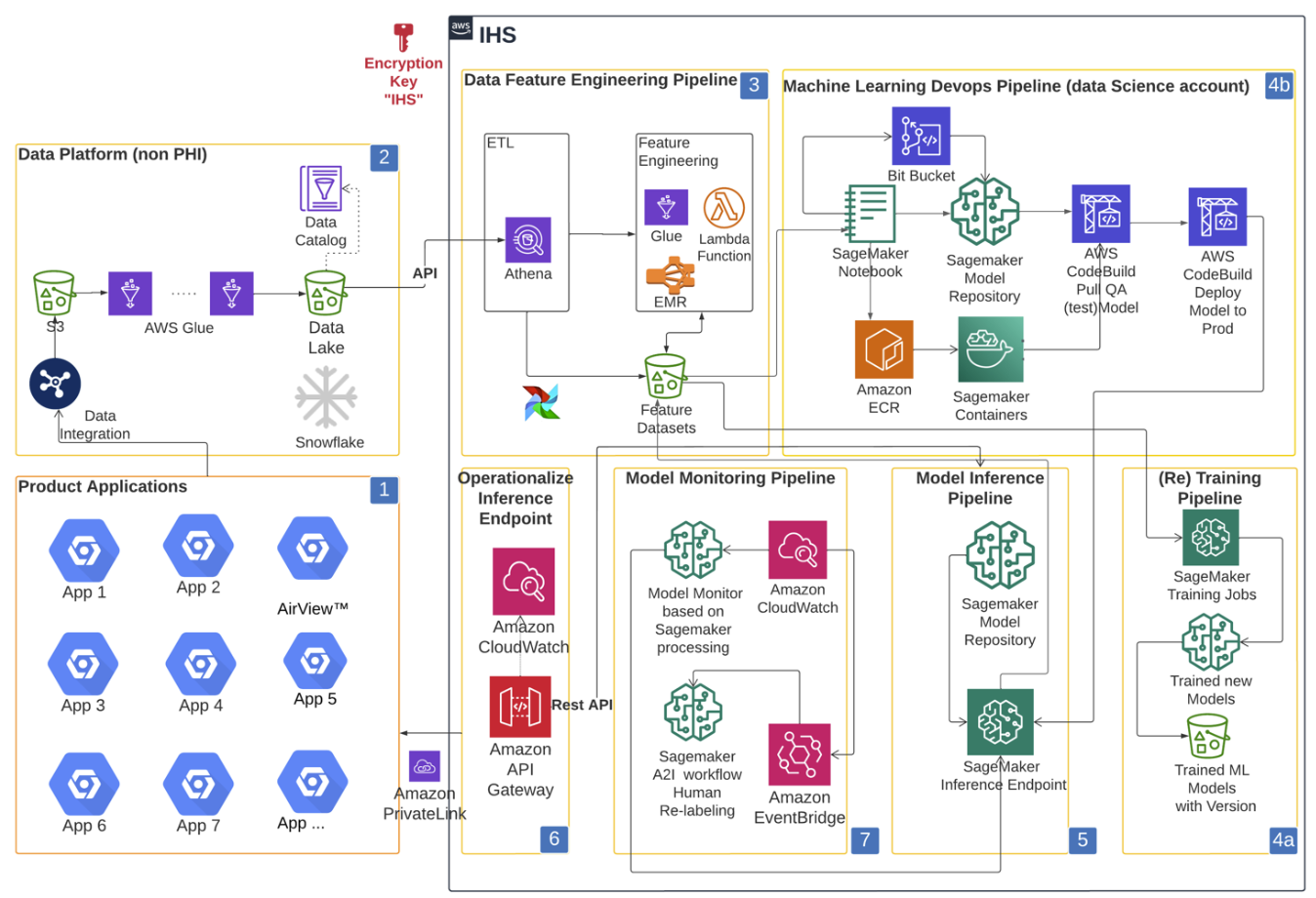

ResMed primarily uses Amazon Web Services for its data, AI and machine learning backbone. ResMed built its Intelligence Health Signals (IHS) platform on AWS so its data science team could build and deploy models.

In a 2022 case study, ResMed detailed its use of Amazon SageMaker for its artificial intelligence and machine learning platform. The company's data lake is also built on AWS and connects to SageMaker via AWS Glue.

Here's a look at ResMed's architecture circa 2022.

Based on job listings, Snowflake is a key vendor for ResMed. The company also leverages open-source technologies as well as Terraform from HashiCorp, now owned by IBM.
