AI data center building boom: Four themes to know

The tide has turned: Wall Street is starting to question the capital expenditures going into data centers built for generative AI. Some CEOs have answered better than others, but common themes have emerged around logistics, land and power, business models and efficiencies.

Here are the big themes to note amid this AI-fueled data center binge.

Logistics matter

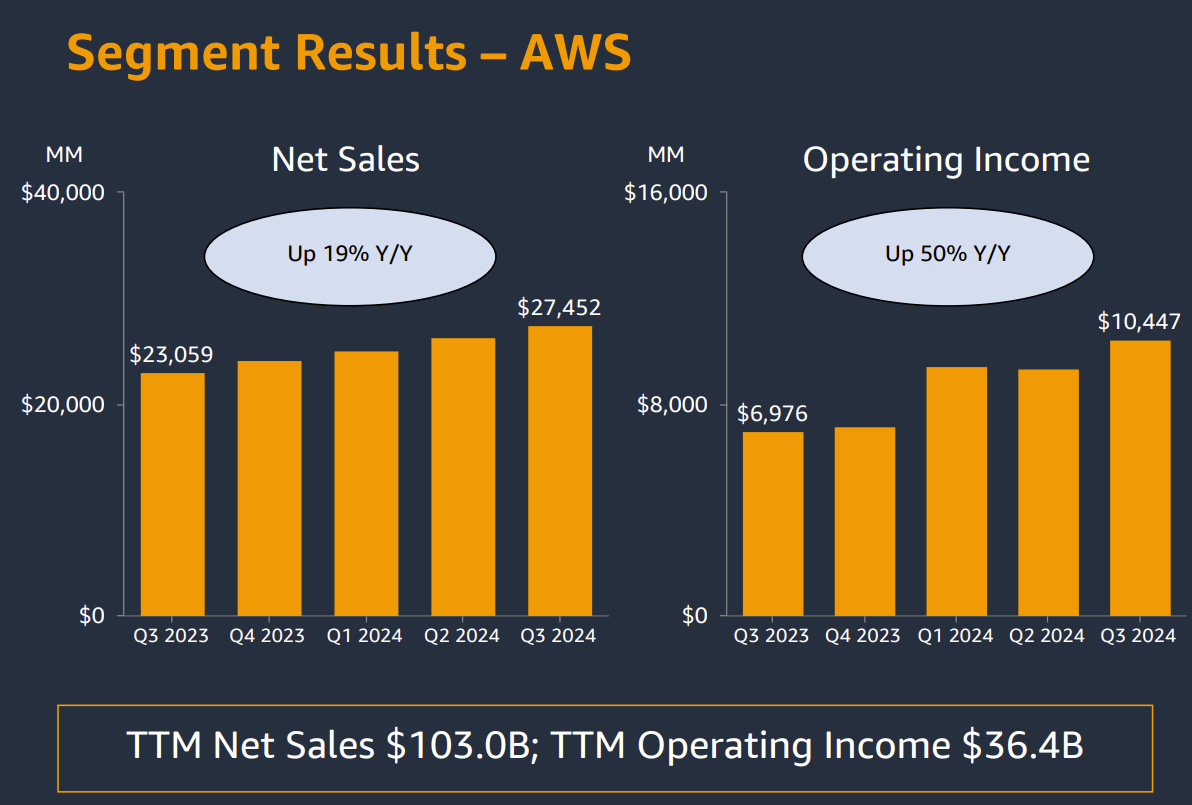

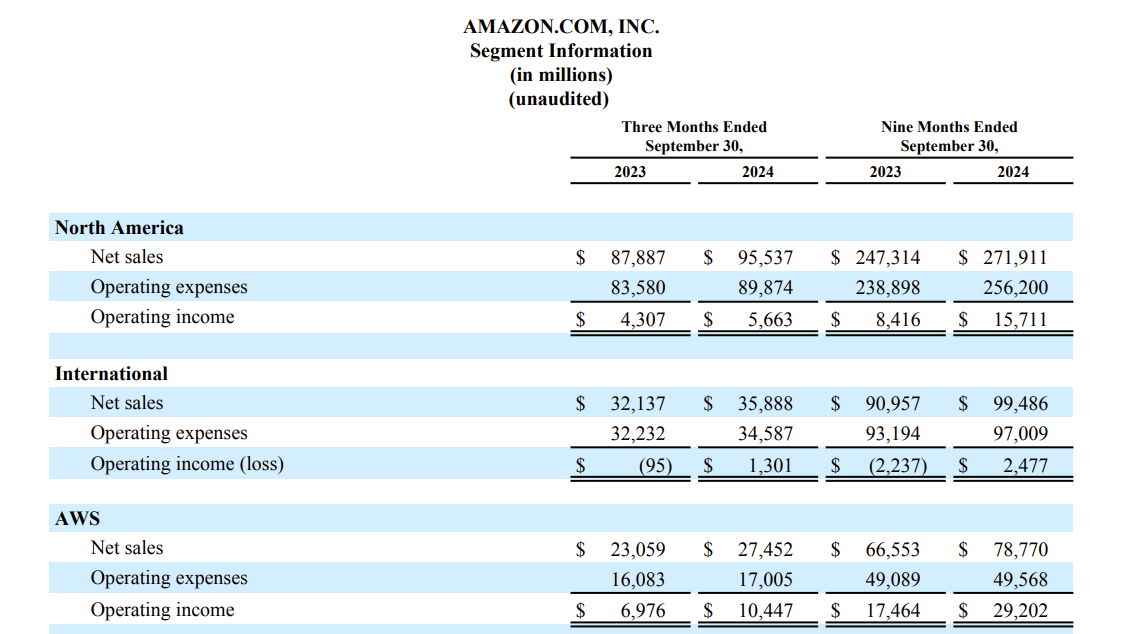

During Amazon's third quarter earnings call, CEO Andy Jassy made a few critical points about the data center buildout. First, he noted that data center assets last 20 to 30 years and have a long runway for monetization. But when asked about margins, Jassy said the following:

"I think one of the least understood parts about AWS, over time, is that it is a massive logistics challenge. If you think about it, we have 35 or so regions around the world, which is an area of the world where we have multiple data centers, and then probably about 130 availability zones through data centers, and then we have thousands of SKUs we have to land in all those facilities.

If you land too little of them, you end up with shortages, which end up in outages for customers. Most don't end up with too little, they end up with too much. And if you end up with too much, the economics are woefully inefficient."

Jassy added that AWS has sophisticated models to anticipate capacity and what services are offered. Given that it's early in the AI boom, demand is volatile and less predictable. "We have significant demand signals giving us an idea about how much we need," said Jassy, who acknowledged that there are lower margins because the AI market is immature.
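The trade-off Jassy describes, where too little capacity causes shortages and too much wrecks the economics, can be illustrated with a toy calculation. The sketch below is not AWS's model; the function names, demand scenarios and per-unit costs are all hypothetical, chosen only to show why volatile demand makes the capacity decision more expensive.

```python
# Illustrative only: every name and number here is a made-up assumption,
# not an AWS figure or an AWS method.

def expected_cost(capacity, demand_scenarios, idle_cost, shortage_cost):
    """Average cost of a capacity choice over equally likely demand scenarios."""
    total = 0.0
    for demand in demand_scenarios:
        idle = max(capacity - demand, 0)      # gear landed but not used
        shortage = max(demand - capacity, 0)  # demand that could not be served
        total += idle * idle_cost + shortage * shortage_cost
    return total / len(demand_scenarios)


def best_capacity(candidates, demand_scenarios, idle_cost, shortage_cost):
    """Pick the candidate capacity with the lowest expected cost."""
    return min(
        candidates,
        key=lambda c: expected_cost(c, demand_scenarios, idle_cost, shortage_cost),
    )


# Hypothetical inputs: a shortage is assumed to hurt 3x more than idle gear.
IDLE_COST = 1.0
SHORTAGE_COST = 3.0
CANDIDATES = range(50, 151, 5)
STABLE_DEMAND = [95, 100, 105]    # mature, predictable workload
VOLATILE_DEMAND = [60, 100, 140]  # early-market, hard-to-predict workload

for label, scenarios in [("stable", STABLE_DEMAND), ("volatile", VOLATILE_DEMAND)]:
    capacity = best_capacity(CANDIDATES, scenarios, IDLE_COST, SHORTAGE_COST)
    cost = expected_cost(capacity, scenarios, IDLE_COST, SHORTAGE_COST)
    print(f"{label}: build {capacity} units, expected cost {cost:.1f}")
```

Under these assumed inputs, both demand profiles average 100 units, yet the volatile one pushes the best choice to a larger buffer at a much higher expected cost. That is one simple way to read Jassy's point that an immature market comes with lower margins.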

Following the logic of Jassy's comments, you'd expect companies that are used to building out data centers (Meta and Alphabet) to have advantages over a company like Microsoft, which has historically been a software vendor.

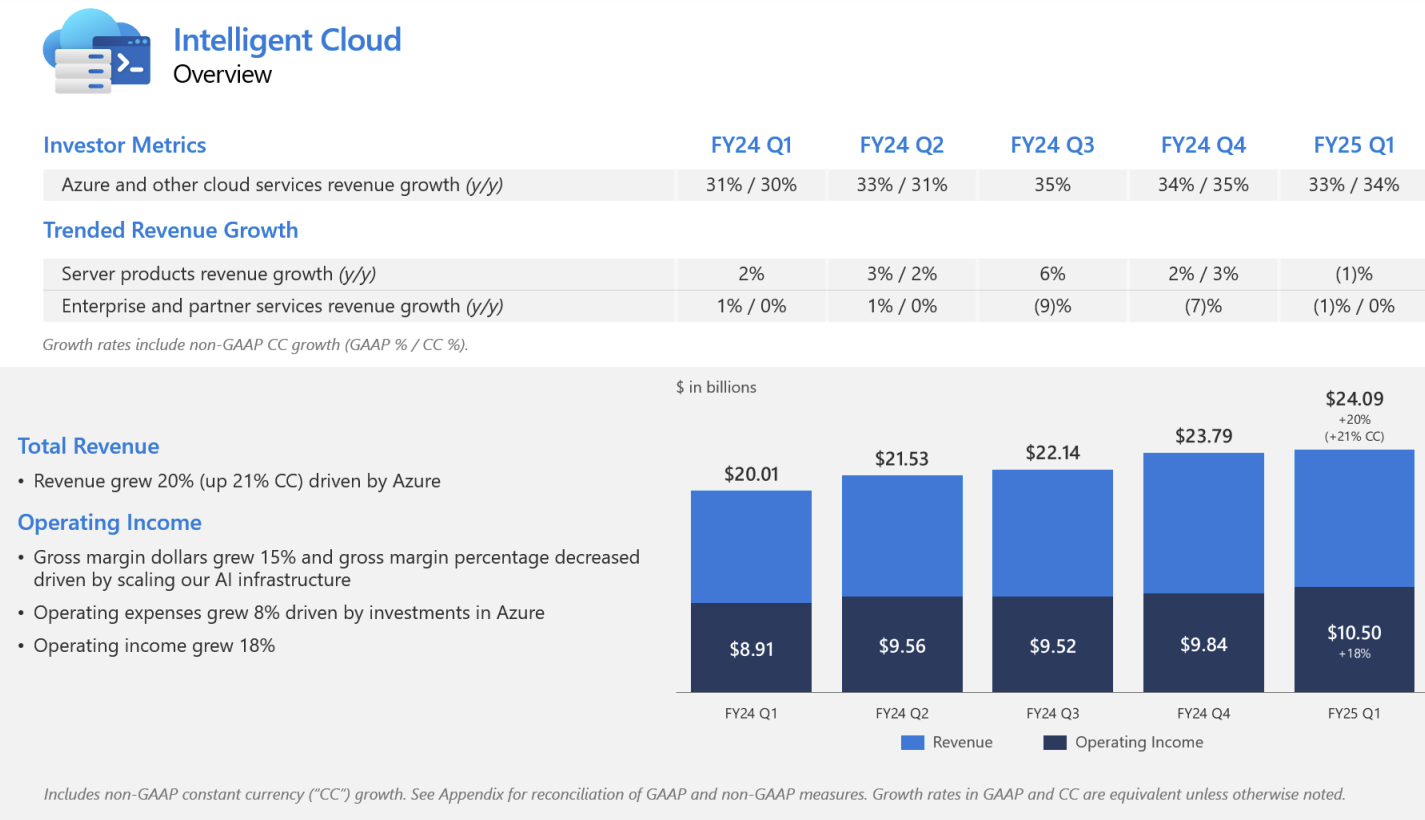

Indeed, Microsoft CEO Satya Nadella said:

"We have run into lots of external constraints because this demand all showed up pretty fast. DCs don't get built overnight. There is the DC, there is power. And so that's sort of been the short-term constraint. Even in Q2, for example, some of the demand issues we have or our ability to fulfill demand is because of external third-party stuff that we leased moving up. In the long run, we do need effective power and we need DCs. And some of these things are more long lead."

Lead times, land and power

In the end, AI data centers require a lot more than the gear that goes in them.

Comments from data center players like Equinix highlight that lead times for genAI facilities go well beyond GPU supplies. "Customer requirements and data center designs are evolving rapidly. Energy constraints and long-term development cycles pose challenges to our industry's ability to serve customers effectively," said Equinix CEO Adaire Fox-Martin, who noted that her company has the land and power commitments to continue to scale.

Digital Realty Trust CEO Andrew Power noted that hyperscalers are running to nuclear energy for future power needs, but these purchase agreements in many cases (small nuclear reactors, for instance) will take years to deliver.

- Amazon invests in X-Energy Reactor, fuels small modular nuclear reactor run

- Google plans to use Kairos Power small modular reactors for data centers

- Generative AI driving interest in nuclear power for data centers

- Constellation Energy, Microsoft ink nuclear power pact for AI data center

"Sourcing available power is just one piece of the data center infrastructure puzzle. Supply chain management, construction management and operating expertise are all challenges that customers rely on Digital Realty to solve," said Power.

- On-premises AI enterprise workloads? Infrastructure, budgets starting to align

- Blackstone's data center portfolio swells to $70 billion amid big AI buildout bet

Some business models lead to multiple AI wins

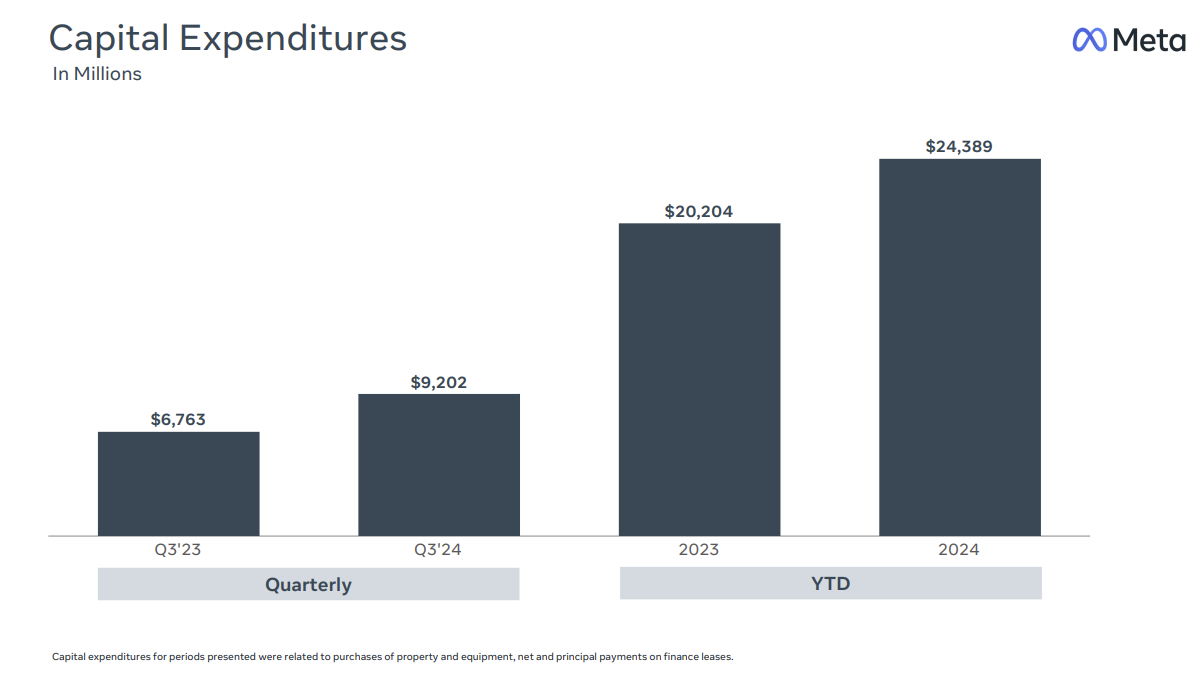

Alphabet and Meta are both spending massive amounts on data centers and have already told Wall Street 2025 capital expenditures are going higher.

Neither company talked about logistics or capacity issues with data center buildouts. These firms do depend on Nvidia GPUs, but also have their own processors. Amazon, Alphabet and Meta have been building data centers at scale since inception.

The big difference for Alphabet and Meta is that they have the models and business models to better justify the data center buildout. Both Alphabet's Google and Meta are using AI to better monetize their core properties.

Meta CEO Mark Zuckerberg said the company is leveraging generative AI across the properties to bolster efficiency and drive revenue.

Alphabet CEO Sundar Pichai had a similar refrain. Yes, AI is a big investment, but services like AI Overviews, Circle to Search, and Lens can drive revenue and engagement.

- DigitalOcean highlights the rise of boutique AI cloud providers

- Equinix, Digital Realty: AI workloads to pick up cloud baton amid data center boom

- The generative AI buildout, overcapacity and what history tells us

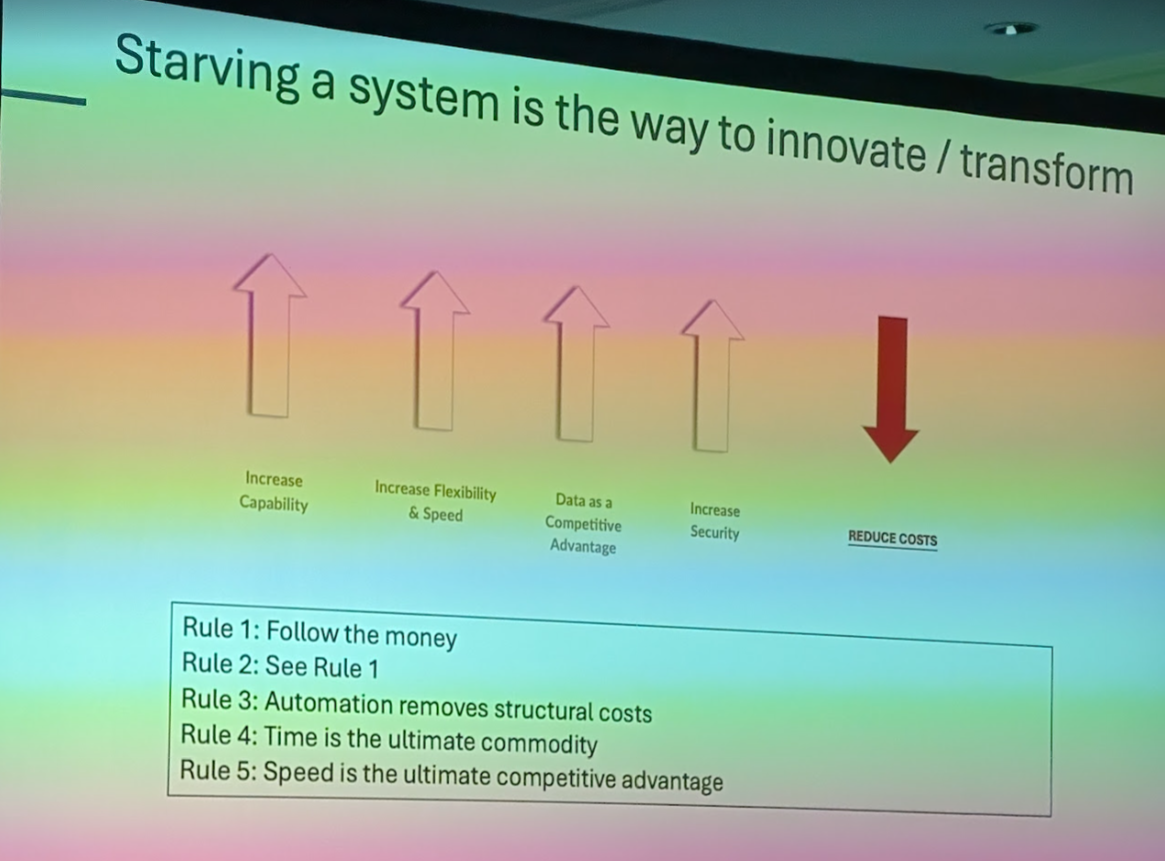

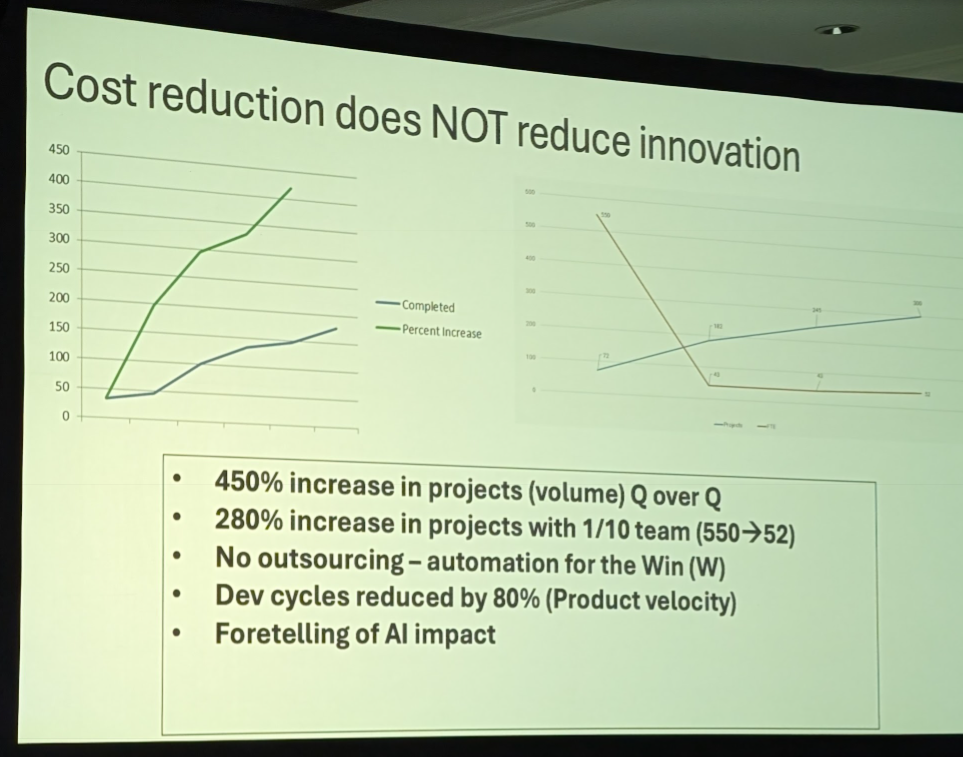

Massive efficiencies ahead

Feel free to question the theory that you need to build out a data center footprint based on today's relatively inefficient AI workloads.

AI workload efficiency is improving for a variety of reasons, but the primary one is money. Sure, Zuckerberg loves open-source AI and infrastructure, but he's also following the money.

Zuckerberg noted that infrastructure will become more efficient and that's why Meta has backed the Open Compute Project. He said:

"This stuff is obviously very expensive. When someone figures out a way to run this better, if they can run it 20% more effectively, then that will save us a huge amount of money. And that was sort of the experience that we had with open compute and part of why we are leaning so much into open source here."

Pichai made a similar point. He said:

"We are also doing important work inside our data centers to drive efficiencies while making significant hardware and model improvements. For example, we shared that since we first began testing AI Overviews, we have lowered machine cost per query significantly. In 18 months, we reduced cost by more than 90% for these queries through hardware, engineering and technical breakthroughs while doubling the size of our custom Gemini model."
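To put Pichai's figure in perspective, a quick back-of-the-envelope calculation shows what a 90% cost reduction over 18 months implies as a steady monthly rate. The 90% and 18-month figures come from the quote; the assumption that the decline was smooth and exponential is ours, purely for illustration, and the variable names are hypothetical.

```python
import math

# From the quote: cost per query fell by more than 90% over 18 months.
# Assumption (ours, not Google's): the decline was smooth and exponential.
REMAINING_FRACTION = 0.10  # 10% of the original cost is left
MONTHS = 18

# Constant monthly multiplier that compounds to 10% after 18 months.
monthly_factor = REMAINING_FRACTION ** (1 / MONTHS)
monthly_decline_pct = (1 - monthly_factor) * 100

# Months needed for the cost to halve at that steady rate.
halving_months = math.log(0.5) / math.log(monthly_factor)

print(f"Implied monthly decline: {monthly_decline_pct:.1f}%")
print(f"Implied cost-halving time: {halving_months:.1f} months")
```

Under that assumption, the cost per query would be falling by roughly 12% a month and halving about every five and a half months. That kind of pace is the reason to question sizing a data center footprint on today's workloads.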

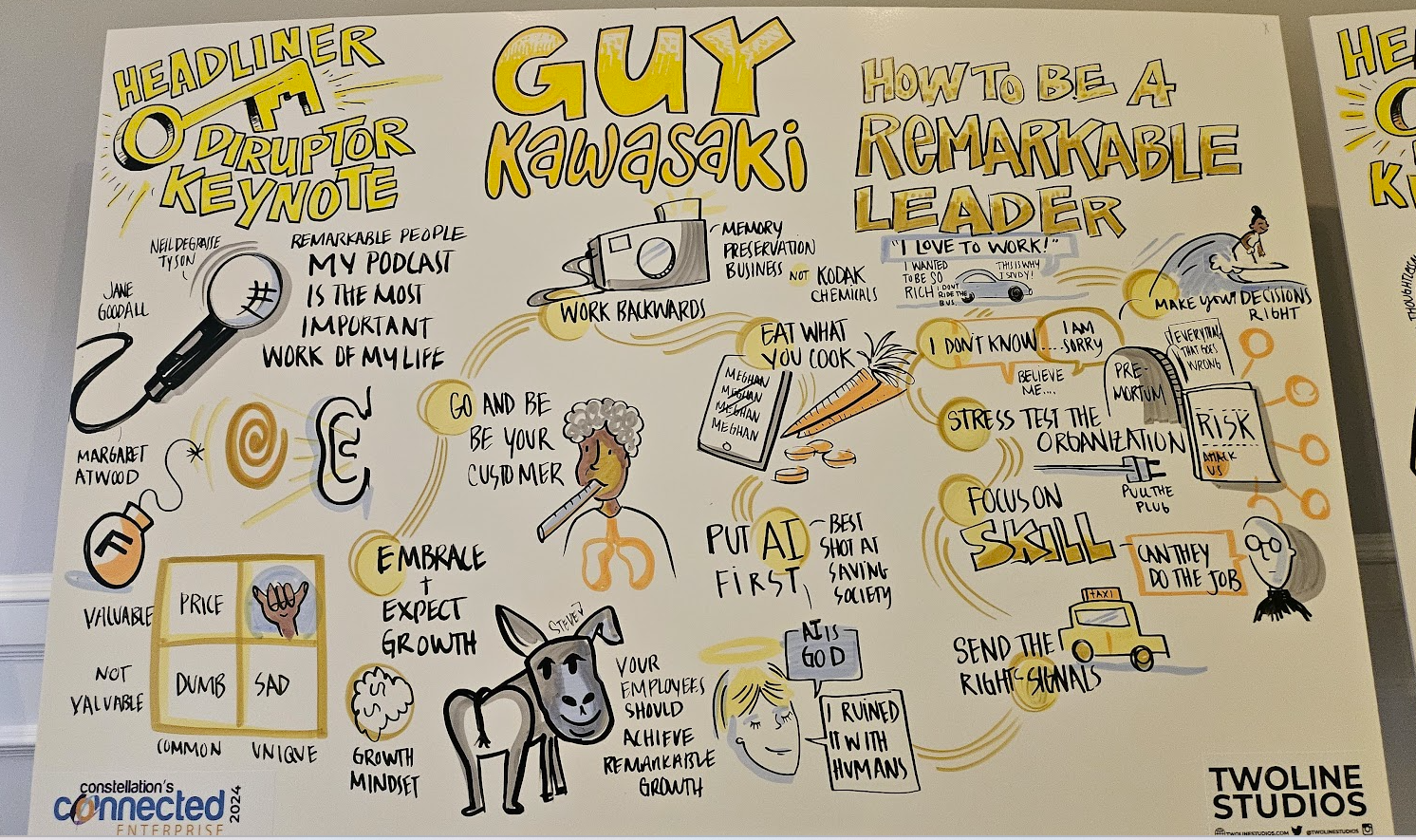

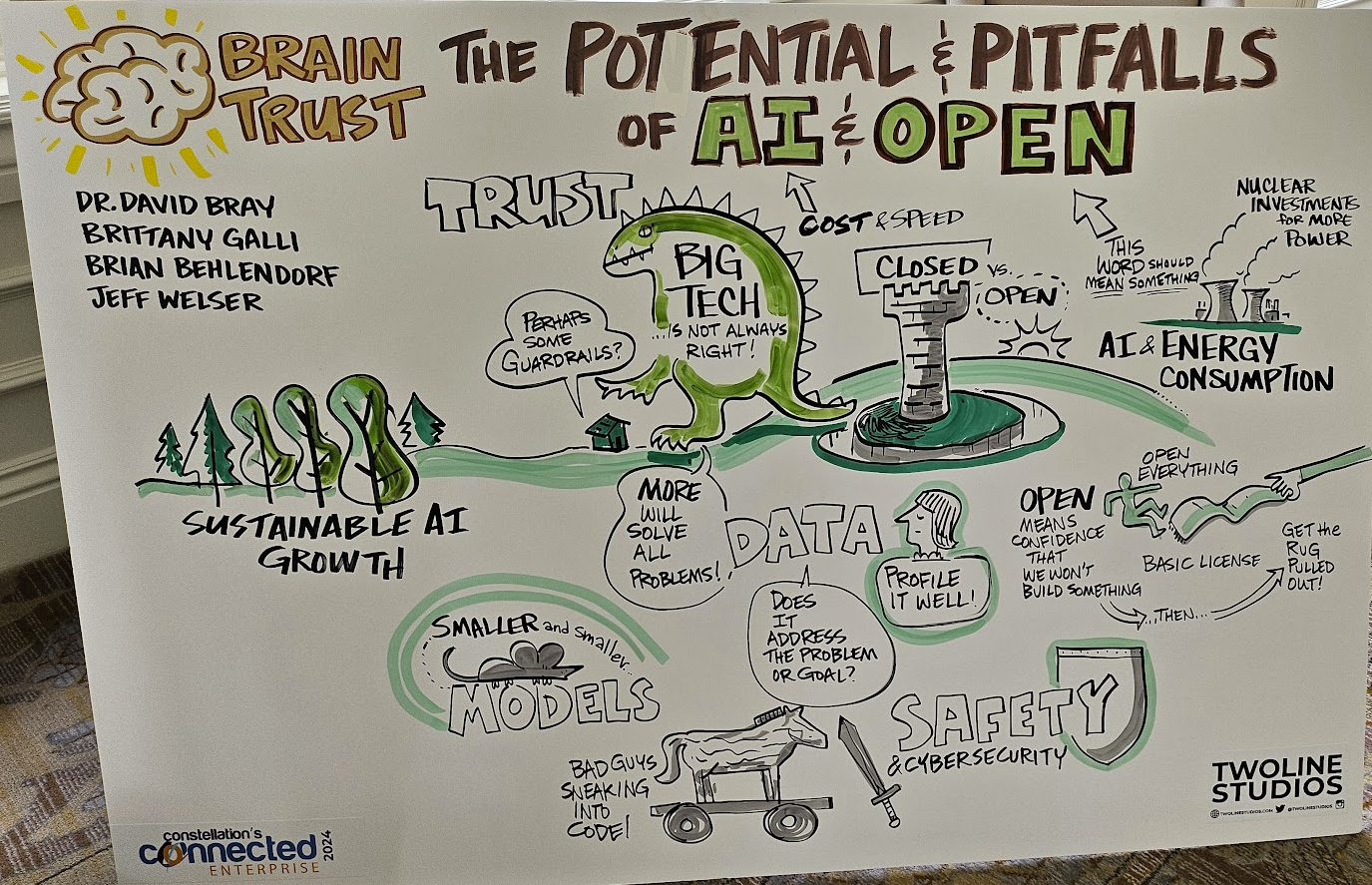

Speaking on a panel at Constellation Research's Connected Enterprise conference, Brian Behlendorf, CTO at the Open Wallet Foundation and Chief AI Strategist at The Linux Foundation, said "there's a lot of irrational exuberance about the amount of investment that's going to be required both to train models and do inference on them."

"The cost of training AI is going to come down dramatically. There is a raft of 10x improvements in training and inference costs, purely in software. We're also finding better structured data tends to lead to higher quality models at smaller token sizes," he said.
