HPE delivers fiscal Q2, joins AI server sales parade

Hewlett Packard Enterprise delivered stronger-than-expected fiscal second quarter earnings and appears to be joining the AI server sales parade.

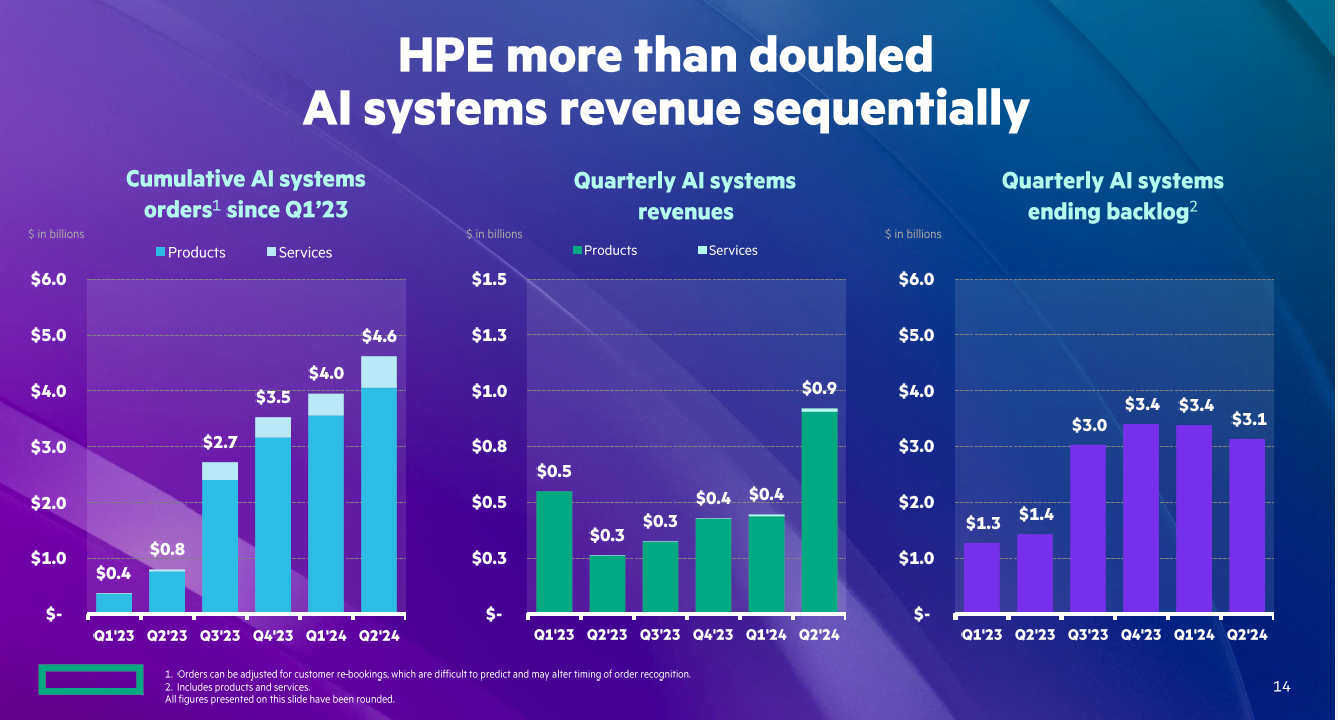

Last quarter, HPE took its lumps over supply chain issues that hampered its AI server sales. In the second quarter, AI systems revenue more than doubled sequentially.

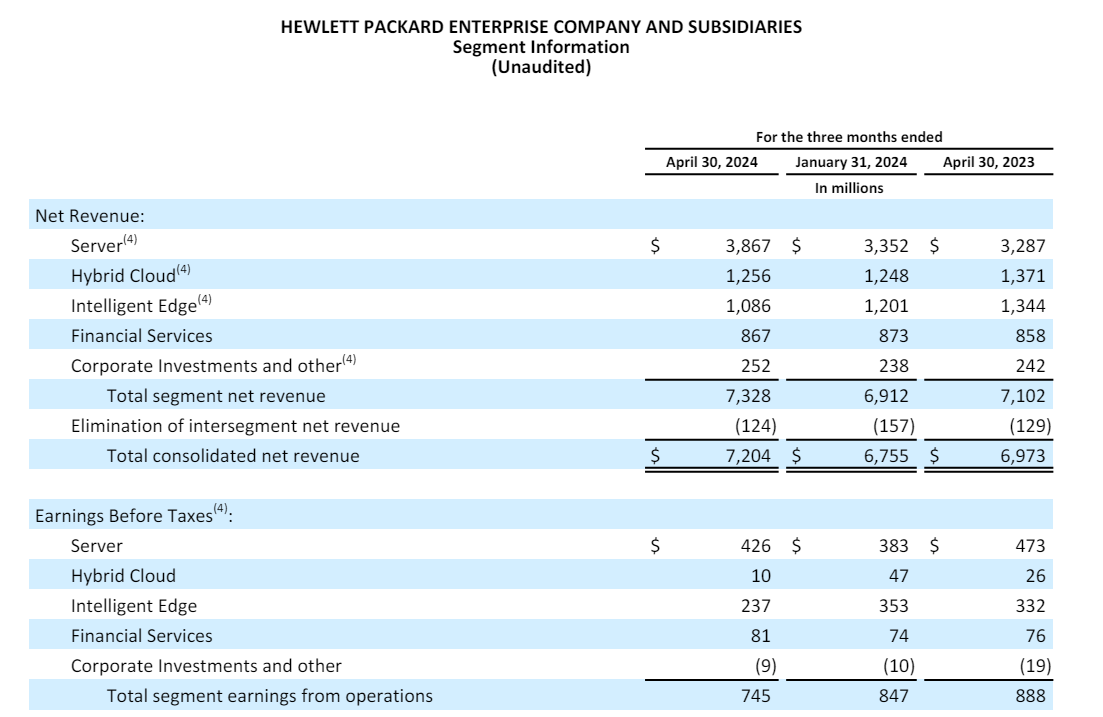

The company reported second-quarter earnings of 24 cents a share on revenue of $7.2 billion, up 3% from a year ago. Non-GAAP earnings in the quarter were 42 cents a share.

Wall Street was expecting HPE to report fiscal second quarter earnings of 39 cents a share on revenue of $6.83 billion.

HPE CEO Antonio Neri said:

"AI systems revenue more than doubled from the prior quarter, driven by our strong order book and better conversion from our supply chain. Our deep expertise in designing, manufacturing, and running AI systems at scale fueled growth of cumulative AI systems orders to $4.6 billion, with enterprise AI orders representing more than 15%."

CFO Marie Myers said AI systems order conversion along with cost discipline enabled HPE to raise its outlook.

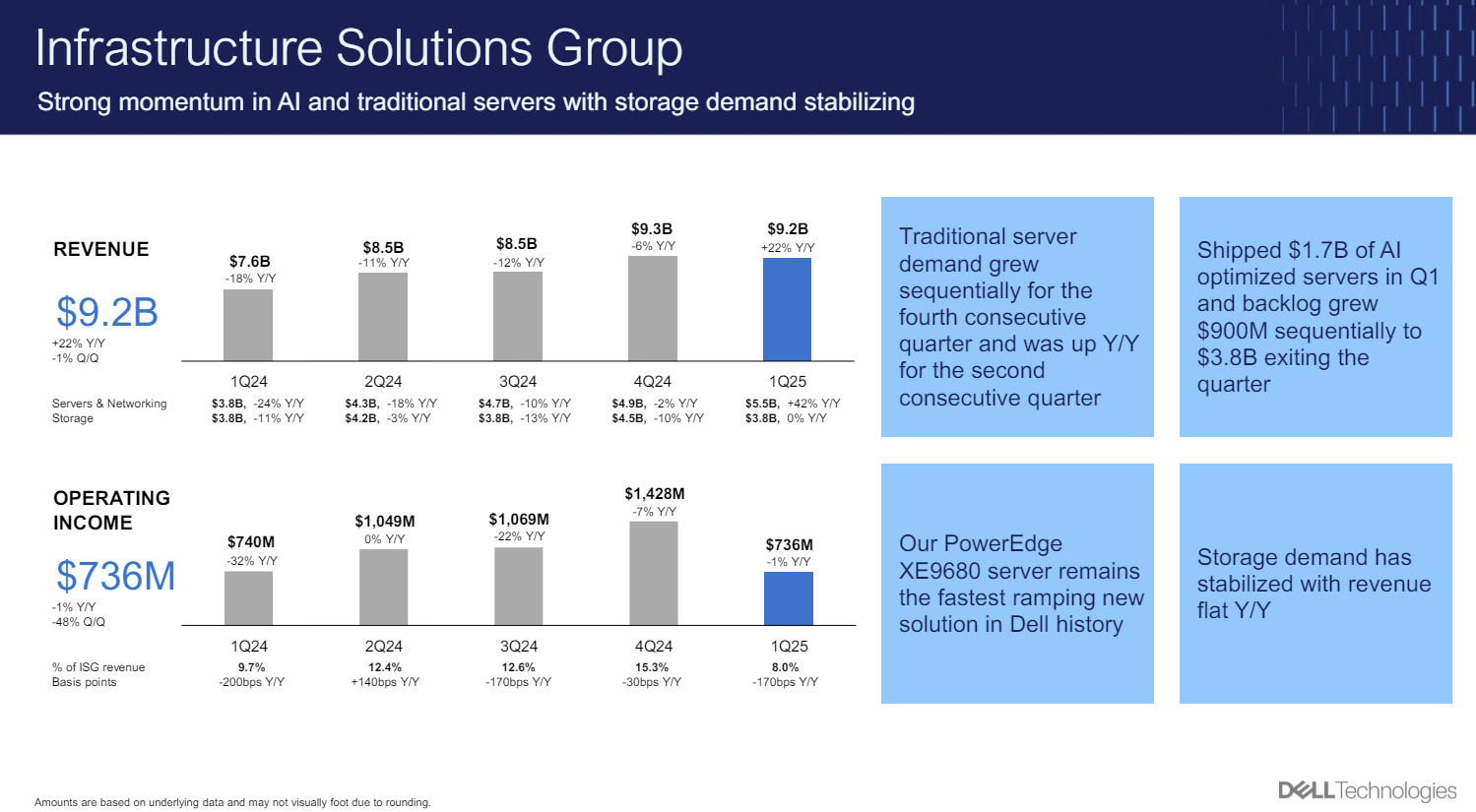

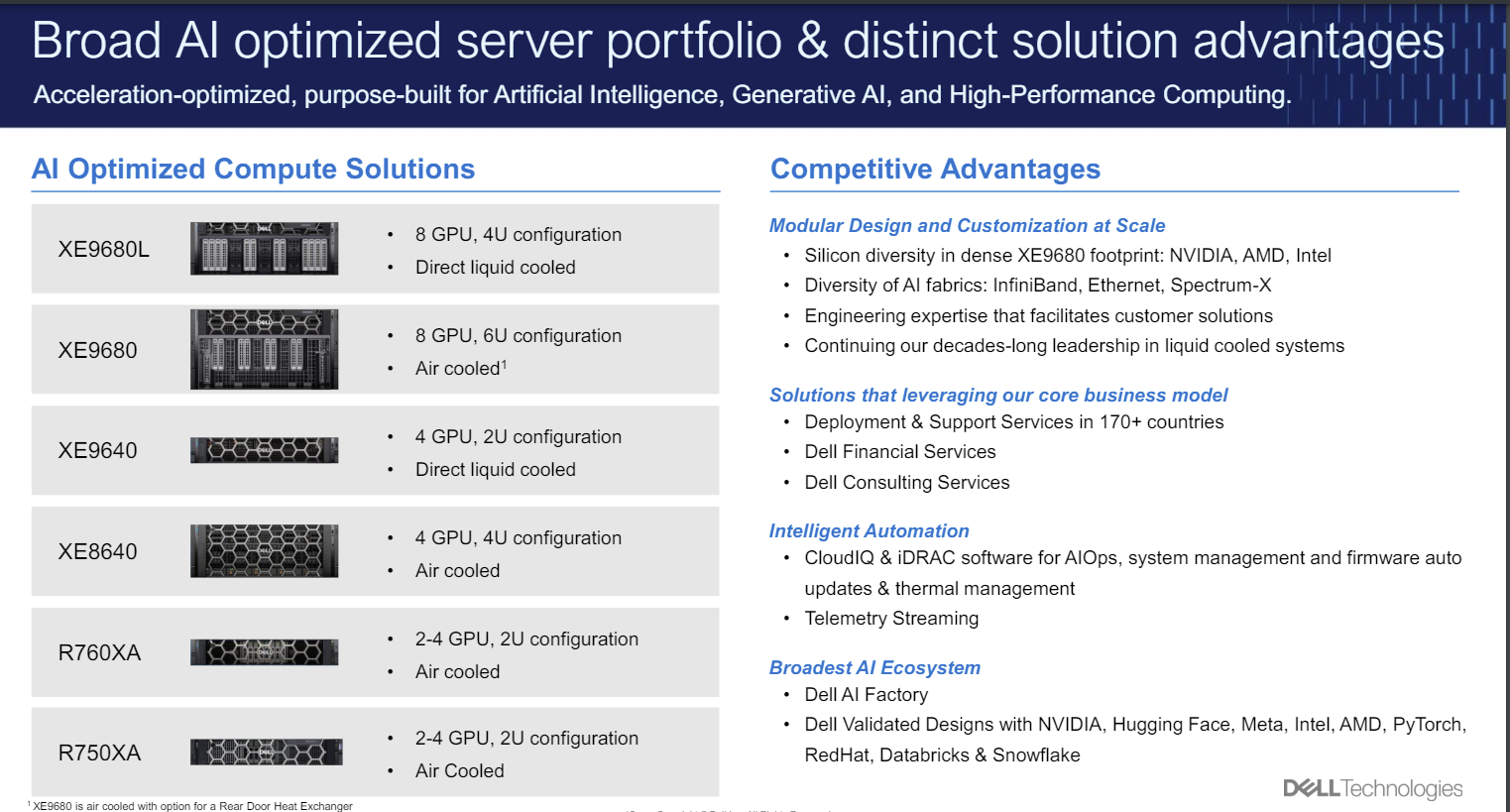

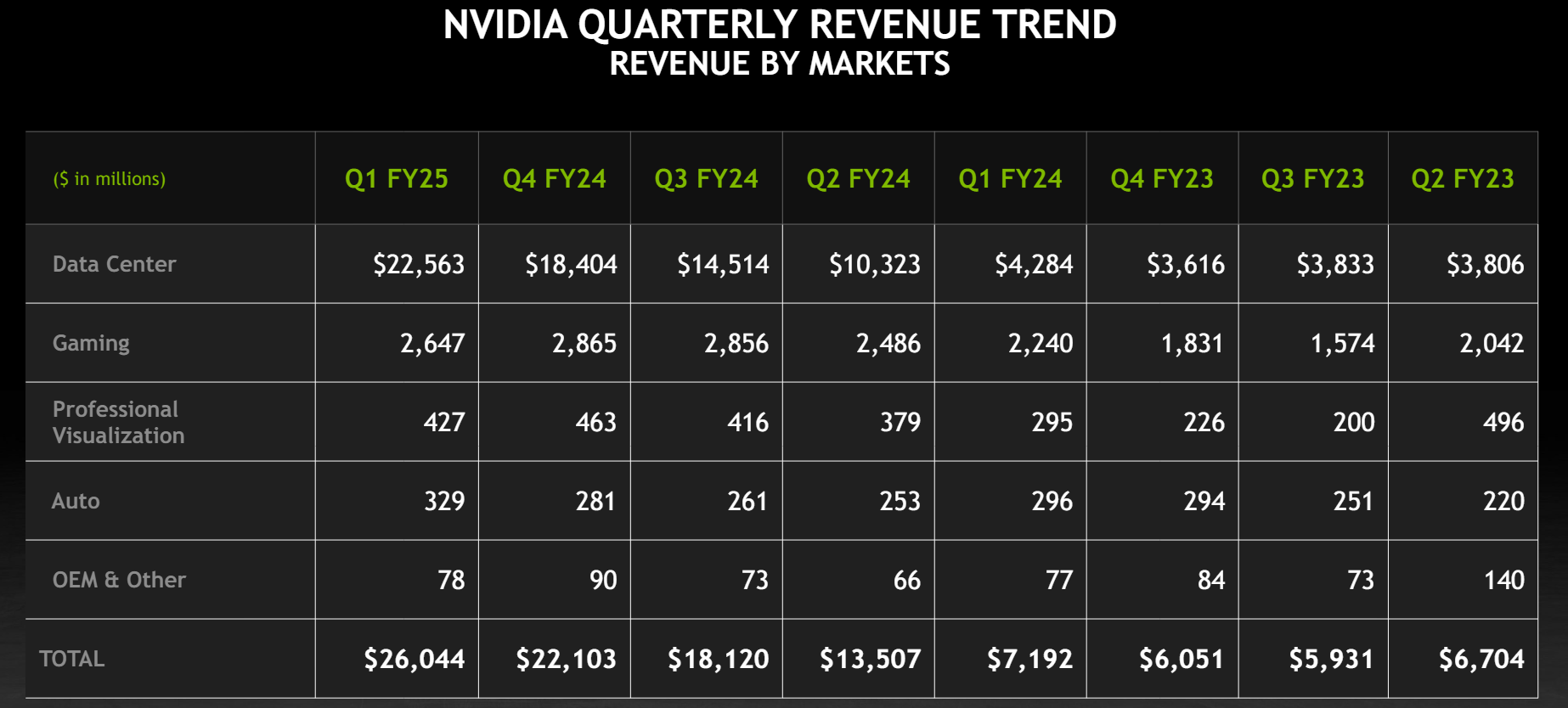

Going into the earnings results, HPE was seen as something of an AI-server also-ran given Dell Technologies' momentum. However, Dell's most recent quarter indicated expectations were overheated. HPE's results, along with comments from Dell, Supermicro and Lenovo, offer a fuller read on the state of the AI server market.

Neri said on a conference call:

"The demand for HPE AI systems is accelerating at a faster pace, and our solid execution enabled us to more than double our AI systems revenue sequentially to over $900 million, helped by supply chain conversion through improved GPU availability. Our lead time to deliver NVIDIA H100 solutions is now between six and 12 weeks, depending on order size and complexity. We expect this will provide a lift to our revenues in the second half of the year. Enterprise customer interest in AI is rapidly growing, and our sellers have seen a higher level of engagement. Enterprise orders now comprise more than 15% of our cumulative AI systems orders, with the number of enterprise AI customers nearly tripling year over year. As these engagements continue to progress from the exploration and discovery phase, we anticipate additional acceleration in enterprise AI systems orders through the end of the fiscal year."

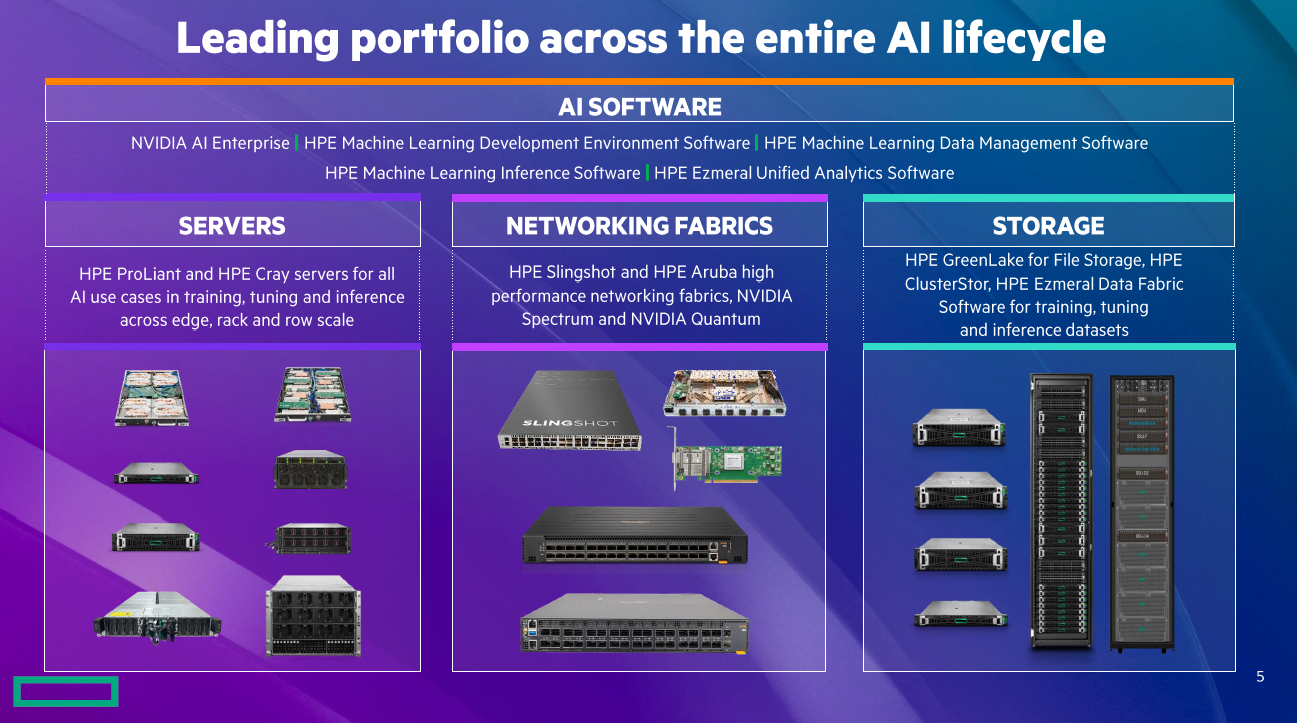

Neri added that HPE's experience in liquid cooling via its supercomputing business is also helping seal AI infrastructure deals.

"Building direct liquid cooling systems is complex and requires manufacturing expertise and infrastructure, including power, cooling and water. With more than 300 HPE patents in direct liquid cooling, HPE is well positioned to help customers meet the power demands for current and future accelerated compute silicon designs."

Neri added that HPE will launch a series of AI systems at its Discover conference in Las Vegas.

Servers were HPE's strongest segment this quarter, with second-quarter revenue of $3.9 billion, up 18% from a year ago. Intelligent edge revenue (Aruba) was $1.1 billion, down 19%. Hybrid cloud revenue was $1.3 billion, down 8%.

As for the outlook, HPE said third-quarter revenue will be between $7.4 billion and $7.8 billion, with non-GAAP earnings of 43 cents to 48 cents a share. For fiscal 2024, revenue is expected to grow 1% to 3%, with non-GAAP earnings of $1.85 to $1.95 a share.

- HPE to acquire Juniper Networks for $14B: What it means

- HPE Q1 server revenue falls 23% in mixed quarter

Constellation Research's take

Constellation Research analyst Holger Mueller said:

"Who would have thought 12 months ago that HPE would feature AI as the growth driver (and no longer hybrid cloud)? CEO Neri and CFO Myers said 'AI' seven times in their prepared statements, and 'cloud' just once. HPE's DNA in high performance computing plays well in the AI era since systems need high performance hardware. Also working in HPE's favor is that the company has the history, track record and image of knowing HPC. The concern is that intelligent edge revenue and hybrid cloud revenue, the previously designated growth engines, are down YoY. Neri and team also took $600 million of cost out of HPE, and now the question is whether AI can help the company grow again overall."

Speaking at SAP Sapphire in Orlando, CEO Christian Klein had a few missions. First, Klein had to convince customers that SAP's Business AI innovations were worth the upgrades and more than hype. Second, Klein wanted to ensure that SAP wasn't just doing AI because everyone else was. And third, Klein had to get SAP's customer base more excited about programs like RISE with SAP and upgrading to S/4HANA and cloud.