LLM giants need to build apps, ecosystems to go with the models

Editor in Chief of Constellation Insights

Constellation Research

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...

The race is on to put enterprise applications around large language models (LLMs) and the stakes couldn't be higher for the likes of OpenAI and Anthropic as well as other foundation model players.

And there's a good reason for the focus on applications to surround LLMs. Pricing for LLMs will tank and foundational models are going commodity in a hurry. Simply put, generic LLMs are good enough for multiple enterprise use cases.

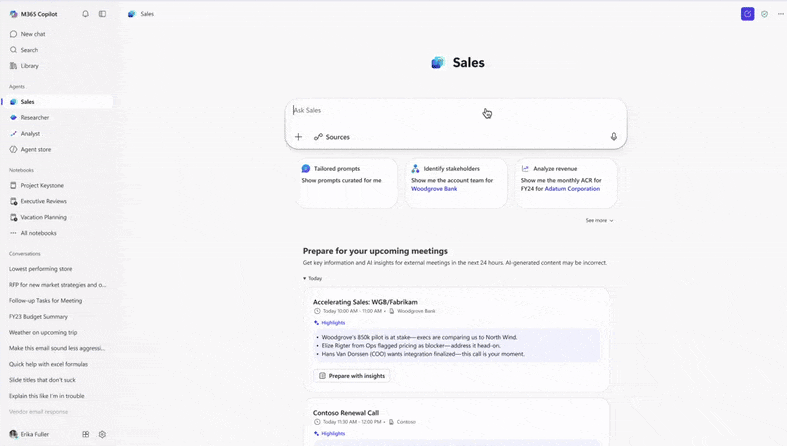

Consider that Microsoft became just the latest vendor to give the US government a sweet deal on software. Microsoft is offering the Feds its suite of productivity, cloud and AI services, including Microsoft 365 Copilot, at no cost for up to 12 months. And since Microsoft essentially resells OpenAI's ChatGPT, the offer undercuts its partner and frenemy's $1 a year deal.

Microsoft can afford to play the long game because the model (often ChatGPT) is just part of the application buffet.

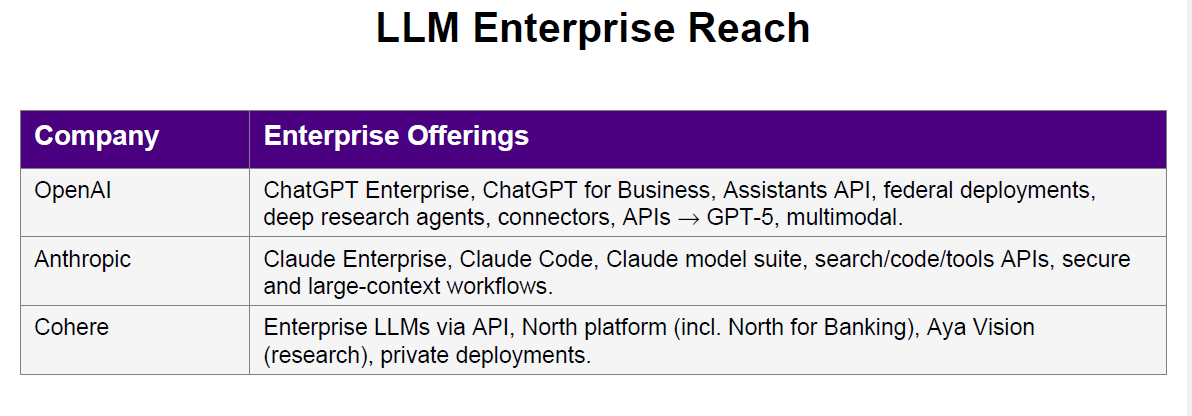

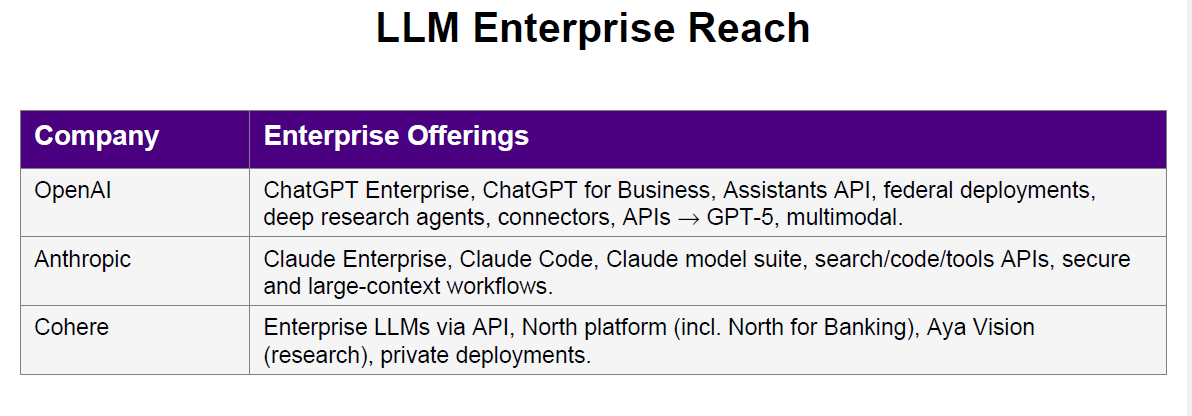

This application-meets-LLM reality isn't lost on the LLM providers, which need to prop up heady private market valuations. Here's a look at what the LLM giants are doing and a dark horse that seems to be ahead of the application curve.

OpenAI

OpenAI's acquisition of Statsig signals that the company realizes it needs to fast-track its plans to build applications around ChatGPT. OpenAI has launched Codex, one of its first applications, and now will have Statsig in the fold. Statsig's platform focuses on A/B testing, feature flagging and feedback loops that move products into production.

Statsig CEO Vijaye Raji made it clear that the company will continue to provide its services and invest in core products. Raji becomes OpenAI's CTO of applications and will report to Fidji Simo, CEO of OpenAI's applications unit.

Simo recently penned an introductory missive on OpenAI's application strategy. OpenAI CEO Sam Altman has noted that the company's enterprise business is surging, but the company's scaling plans revolve around consumer applications too. Altman seems to be cribbing a bit of Apple and a bit of Google in terms of business models with plans for consumer-business scale with enterprise extensions.

OpenAI also has its ChatGPT for Business and ChatGPT for Enterprise plans with the beginning of vertical extensions with ChatGPT for Government. The API Platform also drives revenue.

In many respects, OpenAI is starting to follow the enterprise software playbook with targeted efforts aimed at healthcare, financial services and the public sector. The challenge will be honing the sales ground game for industries as it scales its ChatGPT Team, Enterprise, Edu and Pro plans.

Anthropic

Anthropic is now valued at $183 billion after its latest funding round. The company is also on a $5 billion annual revenue run rate.

Focus on the enterprise is fueling that growth. Anthropic is closely following the enterprise software playbook and its tight partnership with AWS is embedding its Claude model family in businesses.

For instance, Anthropic recently hired Paul Smith, alum of ServiceNow, Microsoft and Salesforce, as Chief Commercial Officer. Anthropic also launched Claude for Financial Services.

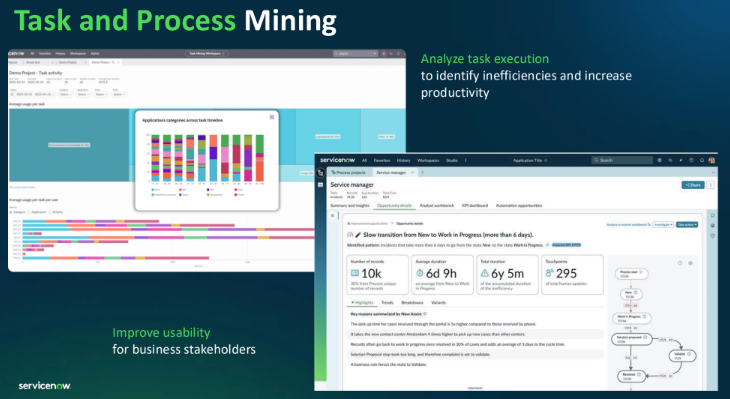

Enterprise software companies typically go horizontal and then drill down into industries. Once you land a big customer in one vertical, others often follow. Smith built out the go-to-market efforts at ServiceNow and will do the same for Anthropic.

Anthropic has specialized Claude versions for code, customer support, education, financial services and government. Claude Code is on a $500 million annual revenue run rate.

Like OpenAI, Anthropic has plans for businesses, including Claude Max, Claude Team and Claude Enterprise. Anthropic has been taking steps to give Claude a collaboration and future-of-work spin.

Cohere as the dark horse

Cohere, a Constellation Insights underwriter, doesn't play LLM leapfrog, but has been building out applications around its models, which are enterprise focused.

Cohere North is a collaboration platform worth watching. North recently launched and is focused on enterprise productivity. There's also a version of North for Banking.

Meanwhile, Cohere Compass is an enterprise search and discovery system designed to surface business insights. Cohere's models and associated tools are focused on enterprise pain points such as search quality and multimodal retrieval.

The company is focused on financial services, healthcare and life sciences, manufacturing, utilities and public sector verticals.

Cohere recently raised $500 million and hired a Chief AI Officer and CFO. Cohere said the funding will be used to accelerate agentic AI use cases in businesses and governments primarily through North. The company added that strategic partners such as Oracle, Dell, RBC, Bell, Fujitsu, LG CNS and SAP are using North as a platform.

Bottom line

For LLM players to even think about growing into their valuations, applications and developer ecosystems need to be scaled.

It appears that the foundation model players will crib some business inspiration from enterprise software providers. However, enterprise software giants have the sales ground games, entrenched technologies and corporate data stores. And if LLMs (and smaller, more focused models) are commodities, then the value will be in orchestration and automation, potentially through AI agents.

The future of enterprise software is being rewritten, but the business models underneath are likely to look very familiar.
