AI's boom and the questions few ask

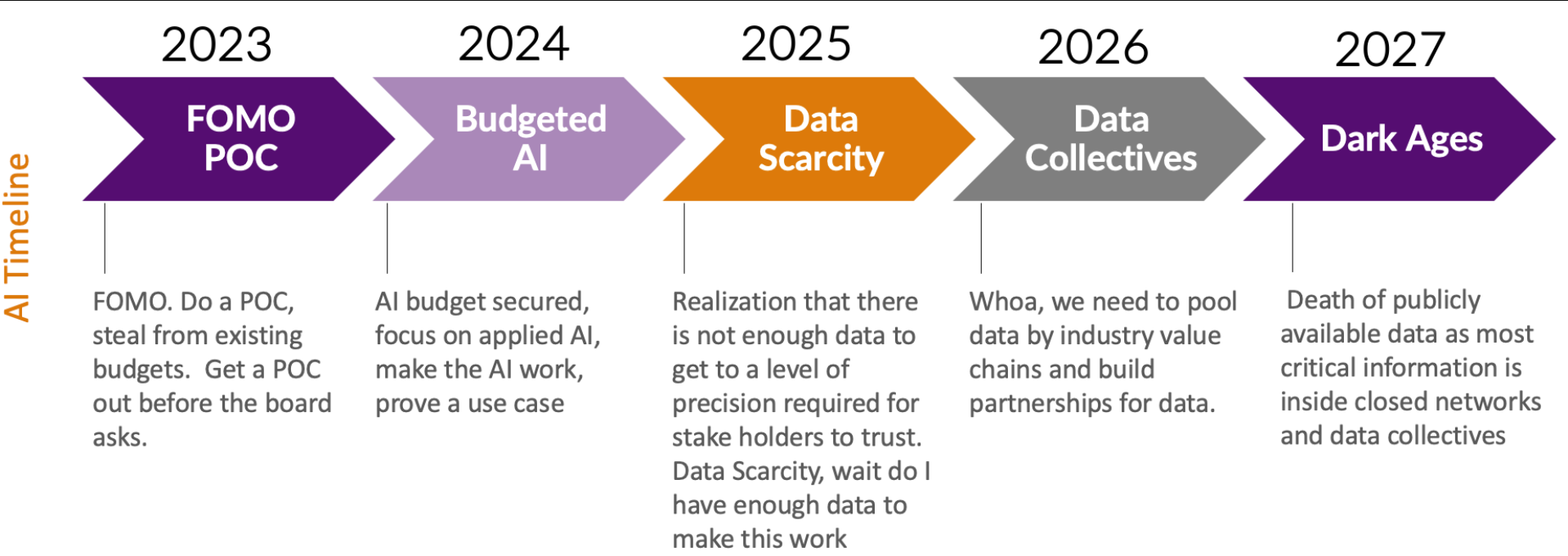

The money being thrown at AI talent and infrastructure is staggering, but the return on investment may look sketchy over longer time frames. What happens if AI demand doesn't deliver triple-digit growth forever?

In recent weeks, we've seen the following:

Oracle is predicting big revenue gains by fiscal 2028. CEO Safra Catz told employees that Oracle is off to a strong start in fiscal 2026 and that the company signed multiple large cloud deals "including one that is expected to contribute more than $30 billion in annual revenue starting in FY28." Bloomberg later reported that Oracle's big cloud deal was with OpenAI.

Meta CEO Mark Zuckerberg is trying to hire an AI dream team and is throwing billions at the effort. Zuckerberg is chasing superintelligence, but supergroups can be tough to manage.

CoreWeave said it’s the first AI cloud provider to deploy Nvidia's GB300 NVL72 systems for customers. CoreWeave has also signed an $11.9 billion deal with OpenAI for future compute capacity for model training. CoreWeave's model is fairly simple: Lever up with debt ($8.8 billion as of March 31) and grow your way out of it as future demand materializes. The issue: CoreWeave paid $460 million to service its debt in the first quarter ended March 31 and delivered overall net cash of just $61 million. Simply put, CoreWeave would be a great business if it didn't have to pay interest rates of 9% to 15%, depending on the credit facility. The company had cash and equivalents of $1.3 billion as of March 31.
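To put CoreWeave's interest burden in rough numbers, here is a back-of-the-envelope sketch in Python using only the figures cited above. It assumes, purely for illustration, that the entire $8.8 billion carried a single rate for a full year with no principal repayments, fees or refinancing; CoreWeave's actual facilities have different balances and terms, so treat the output as an order-of-magnitude check, not an analysis of its filings.

```python
# Back-of-the-envelope arithmetic using figures cited in the article.
# ASSUMPTION (illustrative only): the full $8.8B balance carries one rate
# for a whole year, with no principal repayments, fees or refinancing.

DEBT_BILLIONS = 8.8               # total debt as of March 31
RATE_LOW, RATE_HIGH = 0.09, 0.15  # interest-rate range across facilities


def annual_interest(principal: float, rate: float) -> float:
    """Simple (non-compounding) interest for one year."""
    return principal * rate


low = annual_interest(DEBT_BILLIONS, RATE_LOW)
high = annual_interest(DEBT_BILLIONS, RATE_HIGH)

print(f"Implied annual interest: ${low:.2f}B to ${high:.2f}B")
print(f"Implied quarterly interest: ${low / 4 * 1000:.0f}M to ${high / 4 * 1000:.0f}M")

# Reported first-quarter figures, in millions of dollars.
DEBT_SERVICE_Q1 = 460
NET_CASH_Q1 = 61
ratio = DEBT_SERVICE_Q1 / NET_CASH_Q1
print(f"Q1 debt service was about {ratio:.1f}x net cash")
```

Under these simplifications the implied interest works out to roughly $0.79 billion to $1.32 billion a year, or about $198 million to $330 million a quarter. The reported $460 million of quarterly debt service is higher than that range, which could reflect items such as principal payments that the sketch deliberately ignores. Either way, debt service ran at roughly 7.5 times the quarter's net cash.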

As previously noted, the AI infrastructure game is really just a big leveraged bet that's working for now, but it's worth asking a few questions.

- How much of the AI boom depends on OpenAI posting crazy growth years into the future? Turns out a good bit. Oracle is building like mad on the bet that OpenAI is going to be bigger, badder and superintelligent in two years. What could go wrong? Well, Google, China's AI champions, competition from Microsoft, hardware risks and a model training wall, to name a few. CoreWeave is betting that OpenAI will be "a significant customer in future periods." Let's hope so. Microsoft accounted for 72% of CoreWeave's revenue in the first quarter, and three customers accounted for 83%.

- Is Microsoft the smartest of the bunch? Microsoft is allowing OpenAI to diversify its infrastructure spending so Microsoft doesn't have to fork over as much dough. Meanwhile, Microsoft and OpenAI are bickering over the terms of their partnership as OpenAI seeks a new structure that would ultimately let it go public.

- Will Nvidia's rivals be good enough? The base of this AI infrastructure boom is Nvidia. Giants are spending mostly on Nvidia, but the market is diversifying toward the hyperscale cloud providers' custom silicon and AMD. Is it possible that levering up to buy Nvidia GPUs isn't a slam dunk?

- When will the AI infrastructure music stop? The only guarantee is that the spending boom will pause and there will be a glut. Timelines are debatable, but rest assured that deals based on demand years into the future are going to produce spectacular failures.

Add it up and AI infrastructure is looking a lot more like the sports world. Billionaires are spending hundreds of millions, if not billions, of dollars on players who may produce in the future (or not). A $300 million contract for a player often doesn't pay off. These AI deals aren't much different.
