Snowflake Summit 2025: The AI-Native Data Foundation Gets Real

Vice President and Principal Analyst

Constellation Research

Michael Ni is Vice President and Principal Analyst at Constellation Research, covering the evolving Data-to-Decisions landscape—where CDOs, CIOs, and CPOs must modernize data infrastructure, integrate AI into decision-making, and scale automation to improve business outcomes.

Ni’s research examines how enterprises operationalize AI, automate decision-making, and integrate data management and analytics into core business processes. He focuses on the challenges of scaling AI-driven decision systems, aligning data strategy with business goals, and the growing role of data and decisioning “products” in enterprise ecosystems.

With 25+ years as a product and GTM executive across enterprise software, AI platforms, and analytics-driven technologies, Ni brings a practitioner’s perspective to…


Last year, Snowflake made a bold pitch for an "AI Data Cloud." This year, they got down to business.

"We want to empower every enterprise on the planet to achieve its full potential through data and AI, and we think this moment makes it possible more than any such moment we have seen in a decade or even two decades." - Sridhar Ramaswamy, Summit 2025

CEO Sridhar Ramaswamy's message was clear: the age of AI-native data isn't coming—it's here. And with it, enterprise leaders no longer need to stitch together data lakes, BI tools, and AI pipelines themselves. Snowflake's platform is evolving to make data AI-ready from the moment it's created—and to empower business and technical users alike to act on it, securely and at scale.

While less flashy than 2024's announcements, this year's updates show Snowflake delivering the operational muscle to begin making Sridhar's vision a reality: faster performance, real integration, and tangible outcomes that will make or break AI investments.

1. AI-Native Analytics, Operationalized

Snowflake is delivering decision intelligence and automation at scale, turning data into action through governed, AI-powered workflows accessible to analysts and agents alike.

What's new:

- Cortex AISQL: AI callable in SQL—functions like AI_FILTER, AI_AGG, and AI_EXTRACT add the ability to handle unstructured text, images, and audio atop structured queries.

- Snowflake Intelligence: Natural language agents to access structured/unstructured data.

- Semantic Views: Define business logic and metrics in a reusable, governed layer, so SQL users, dashboards, and AI agents all use consistent logic.

- AI Observability: Trace AI behavior, audit responses, validate, and cite model outputs.
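The value of a semantic layer is that a metric is defined once and every consumer receives the same logic. The minimal Python sketch below illustrates that idea under stated assumptions: it is not Snowflake's Semantic View syntax or API, and the `Metric` class, the `net_revenue` definition, the `sales.orders` table, and the `compile_metric` helper are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A governed business metric: one definition shared by every consumer."""
    name: str
    expression: str  # aggregate SQL expression
    table: str
    filters: tuple[str, ...] = ()


# Hypothetical governed layer: each metric is defined exactly once.
SEMANTIC_LAYER = {
    "net_revenue": Metric(
        name="net_revenue",
        expression="SUM(amount - discount)",
        table="sales.orders",
        filters=("status = 'COMPLETED'",),
    ),
}


def compile_metric(metric_name: str, group_by: list[str] | None = None) -> str:
    """Render the governed definition as SQL for any client (BI tool, notebook, or agent)."""
    m = SEMANTIC_LAYER[metric_name]
    cols = list(group_by or [])
    select = ", ".join(cols + [f"{m.expression} AS {m.name}"])
    sql = f"SELECT {select} FROM {m.table}"
    if m.filters:
        sql += " WHERE " + " AND ".join(m.filters)
    if cols:
        sql += " GROUP BY " + ", ".join(cols)
    return sql


# A dashboard and an AI agent request the same metric and receive identical logic.
dashboard_sql = compile_metric("net_revenue", group_by=["region"])
agent_sql = compile_metric("net_revenue", group_by=["region"])
print(dashboard_sql)
```

Because the dashboard and the agent both resolve `net_revenue` through the same definition, a change to the governed expression propagates to every consumer at once, which is the consistency argument made for Semantic Views.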

Why it matters:

- BI-to-AI leap: Analysts get access to advanced models without leaving SQL. It flattens the skill gap and speeds delivery.

- Conversations, not just dashboards: BI shifts from static dashboards to conversations with data, giving business functions an AI assistant for exploring their data in Snowflake.

- Trust first: From semantic models to cited answers, Snowflake treats transparency and observability as part of its core architecture.

- Raises the question already being asked about BI and analytics investments: How can we empower analysts and decision-makers to safely leverage AI without introducing new silos or trust gaps?

2. Accelerated Data Modernization & Engineering

One of the top initiatives shared by customers and partners at the Summit was modernization — migrating and integrating diverse data sources, including structured, unstructured, and real-time data, into a single, governed platform. Snowflake listened.

What's new:

- OpenFlow (GA): Enterprise-grade, NiFi-based integration engine—handles batch, real-time, and unstructured ingestion.

- SnowConvert AI: Uses AI to automate code conversion, validation, and migration workflows from legacy warehouses.

- Workspaces & dbt-native Support: A single pane for SQL, Python, and Git-integrated pipelines.

Why it matters:

- Integration plane arrives: Snowflake isn't just a warehouse—it now competes in the ETL/iPaaS layer with observability, unstructured support, and BYOC options.

- Legacy migration simplified: Snowflake removes excuses for staying on legacy platforms, especially when performance or interactive access to the data requires centralizing it.

- Future-proofing pipelines: Snowflake is positioning itself as the one-stop shop for modernizing legacy data infrastructure and the AI workflows that come next.

- With so many data projects under way, data and AI leaders must answer the question: How do we modernize legacy systems without creating brittle pipelines or compromising migration quality?

3. Adaptive Compute: Simpler, Smarter, Cheaper

As the category matures, enterprises naturally shift their priorities toward interoperability, simplification, and cost control for unpredictable AI and analytics workloads. These considerations consistently ranked among the top five requests in my conversations with customers and partners during the Summit.

What's new:

- Adaptive Compute Warehouses: Policy-based runtime management. Snowflake dynamically assigns compute—no sizing or tuning needed.

- Gen2 Warehouses: 2.1x–4.4x faster performance with new hardware/software blends.

- Unified Batch & Streaming Support: Streaming ingestion at up to 10 gigabytes per second, with 0.5- to 10-second latency, without building separate pipelines or introducing external tools.

- Multiple FinOps tools: Capabilities range from reviewing workload performance and monitoring spending anomalies to setting tag-based spending limits on resources, all aimed at minimizing FinOps overhead.
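Tag-based spending limits work by rolling resource consumption up by tag and comparing each group against its budget. The Python sketch below shows only that roll-up logic; it does not use Snowflake's actual budget or resource-monitor interfaces, and the warehouse names, the `team` tag, the credit figures, the 80% alert threshold, and the `check_budgets` function are hypothetical.

```python
from collections import defaultdict

# Hypothetical usage records: (resource name, tags, credits consumed this period).
USAGE = [
    ("WH_ETL", {"team": "data-eng"}, 420.0),
    ("WH_BI", {"team": "analytics"}, 150.0),
    ("WH_AGENTS", {"team": "analytics"}, 380.0),
]

# Hypothetical spending limits per tag value, in credits.
LIMITS = {"data-eng": 500.0, "analytics": 400.0}


def check_budgets(usage, limits, tag_key="team", alert_ratio=0.8):
    """Roll credits up by tag value and flag groups nearing or exceeding their limit."""
    spend = defaultdict(float)
    for _resource, tags, credits in usage:
        # Resources missing the tag are grouped as "untagged" so spend is never lost.
        spend[tags.get(tag_key, "untagged")] += credits

    status = {}
    for group, total in spend.items():
        limit = limits.get(group)
        if limit is None:
            status[group] = "no limit set"
        elif total > limit:
            status[group] = "over limit"
        elif total >= alert_ratio * limit:
            status[group] = "approaching limit"
        else:
            status[group] = "ok"
    return dict(spend), status


spend, status = check_budgets(USAGE, LIMITS)
print(spend)
print(status)
```

Here the analytics group is flagged as over its limit (530 of 400 credits) even though neither of its warehouses exceeds 400 credits on its own, which is the practical case for tagging: budgets follow teams or projects rather than individual resources.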

Why it matters:

- Less infrastructure babysitting: Auto-scaling and intelligent routing reduce platform overhead and FinOps headaches, ensuring performance without waste.

- Foundation for unpredictable AI: Agentic and ML workloads are bursty and need near-real-time data. Adaptive Compute removes the configuration burden.

- The question posed by data and AI leaders was how to make platforms more responsive to the growing set of AI and analytics workloads without increasing operational complexity. For more mature leaders, the question was how AI would drive operational efficiency to free resources for the next initiatives.

4. Interoperability & Governance: A Unified Control Plane for the AI Era

With AI agents and self-service analytics growing rapidly, a unified orchestration layer is essential to enforce trust, consistency, and visibility across users, tools, and data products.

What's new:

- Iceberg & Polaris Interop: Full read/write support for open table formats and external catalogs.

- Horizon Copilot & Universal Search: Natural language access to lineage, metadata, and permissions across all data unified in Snowflake.

- AI Governance Frameworks: Native identity, MFA, and audit controls extended to agents and hybrid environments.

Why it matters:

- Centralize in an open lakehouse, then orchestrate: As data use cases multiply, from self-service analytics to autonomous agents, data and analytics leaders need a single orchestration layer to manage access, visibility, and consistency.

- Governance as infrastructure: Horizon makes governance user-facing and operational—so rules aren't just enforced, they're discoverable and explainable across all your mapped data assets.

- Interoperability is execution: Supporting Iceberg, Polaris, and open catalogs ensures flexibility without fragmentation—an essential trait as AI workloads touch more business domains.

Final Take: The Battle for the Data and AI Foundation Is Now in Its Next Phase

Satya Nadella said it best: "We are in the mid-innings of a major platform shift." (Note: I have also published a summary of MSFT Build 2025.)

If that's the case, Snowflake just stepped up to the plate and knocked a double into deep Data and AI platform territory.

Snowflake's announcements aren't just analytics with AI features; they mark the emergence of a unified execution layer where data, decisions, and intelligent agents converge to support a decision-centric architecture. The battlefield has shifted. It's not about who stores data better; it's about who activates it faster, governs it smarter, and enables AI to drive real outcomes.

With Cortex agents, Adaptive Compute, and OpenFlow integration, Snowflake is betting that the AI-native data foundation will be the next cloud OS. And in this next inning, the winners will be the ones who can orchestrate, not just visualize, enterprise intelligence.

For CDAOs, the implications are practical: Modernization no longer requires trade-offs between trust, scale, and agility. The race is no longer about who can analyze—it's about who can execute. And Snowflake's AI-native data foundation has just moved the line on execution, making it faster, safer, and more complete.

If You Want More on Snowflake Summit 2025

Related articles for more information:

- Watch Holger Mueller and me break down Snowflake Summit 2025: the customer/partner buzz from the floor, the OpenFlow acquisition, and Enterprise implications. Watch the recap here: https://bit.ly/443yPvp

- Snowflake makes its Postgres move, acquires Crunchy Data: bit.ly/4kvrzOG


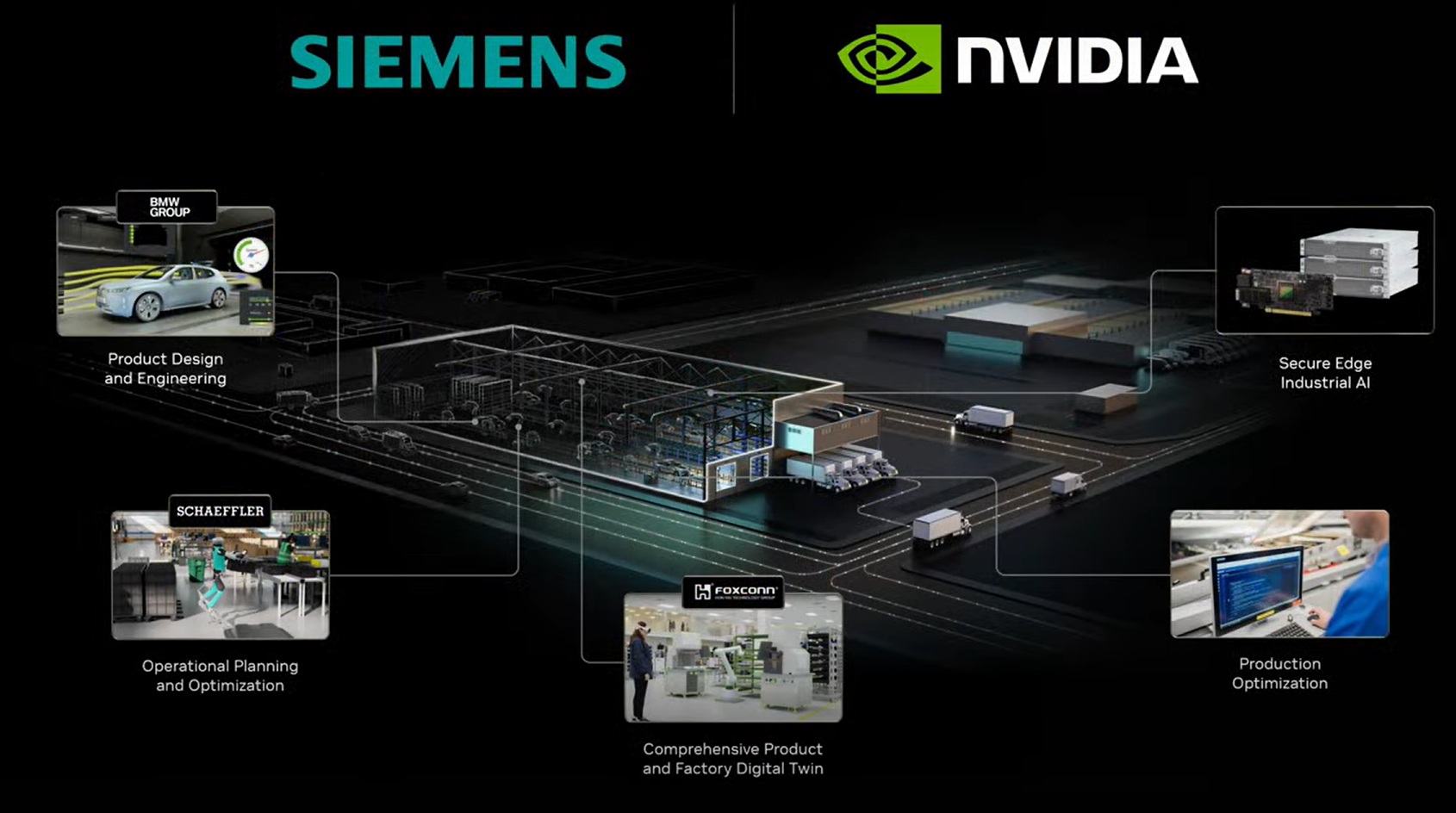

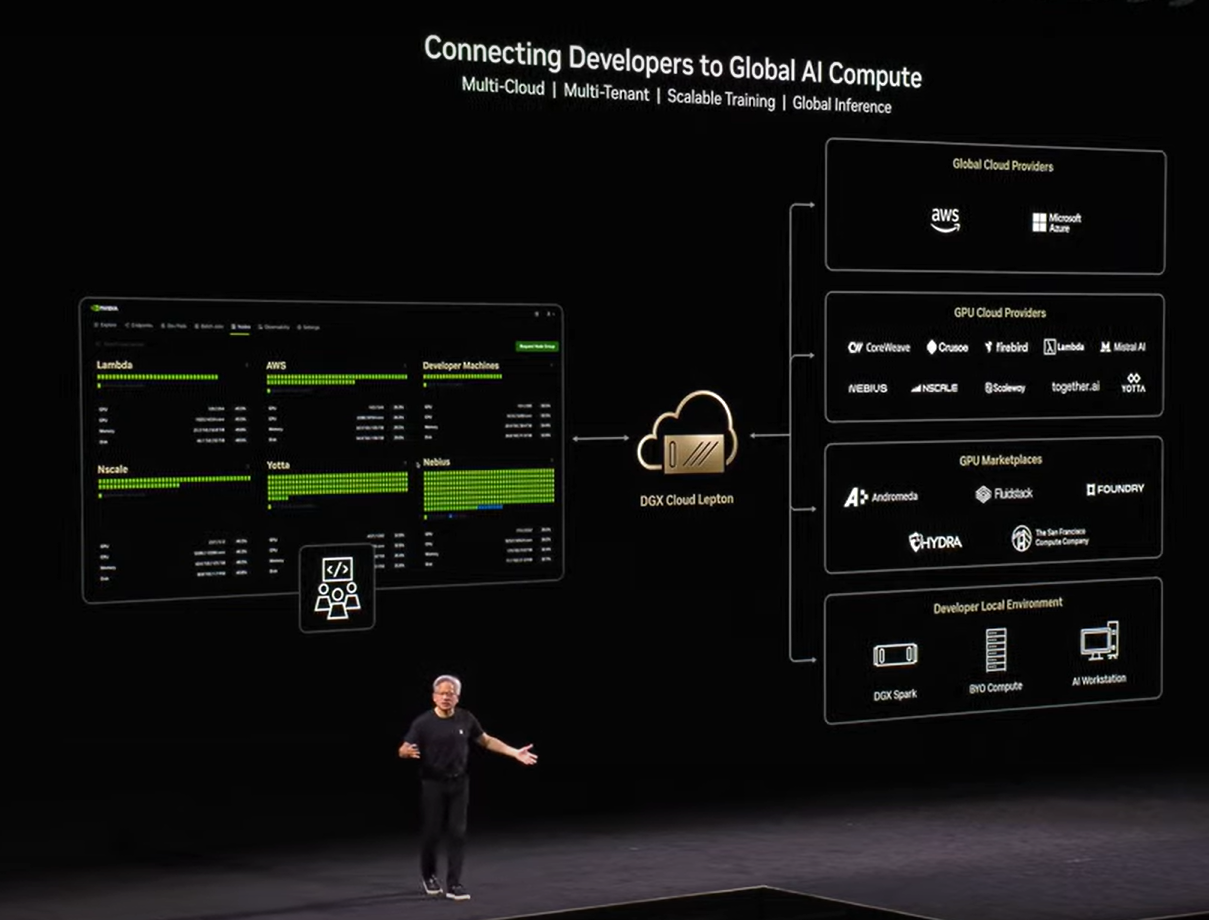

has more than 1.5 million developers. Nvidia also announced that European enterprises are adopting agentic AI including Novo Nordisk, Siemens, Shell, BT Group, SAP, Nestle, L'Oreal and BNP Paribas. The company also touted adoption of its Nvidia Drive autonomous vehicle platform at Volvo, Mercedes Benz and Jaguar as well as quantum computing efforts in the region.

has more than 1.5 million developers. Nvidia also announced that European enterprises are adopting agentic AI including Novo Nordisk, Siemens, Shell, BT Group, SAP, Nestle, L'Oreal and BNP Paribas. The company also touted adoption of its Nvidia Drive autonomous vehicle platform at Volvo, Mercedes Benz and Jaguar as well as quantum computing efforts in the region.