AI Investment Rising Significantly Among Early Adopters

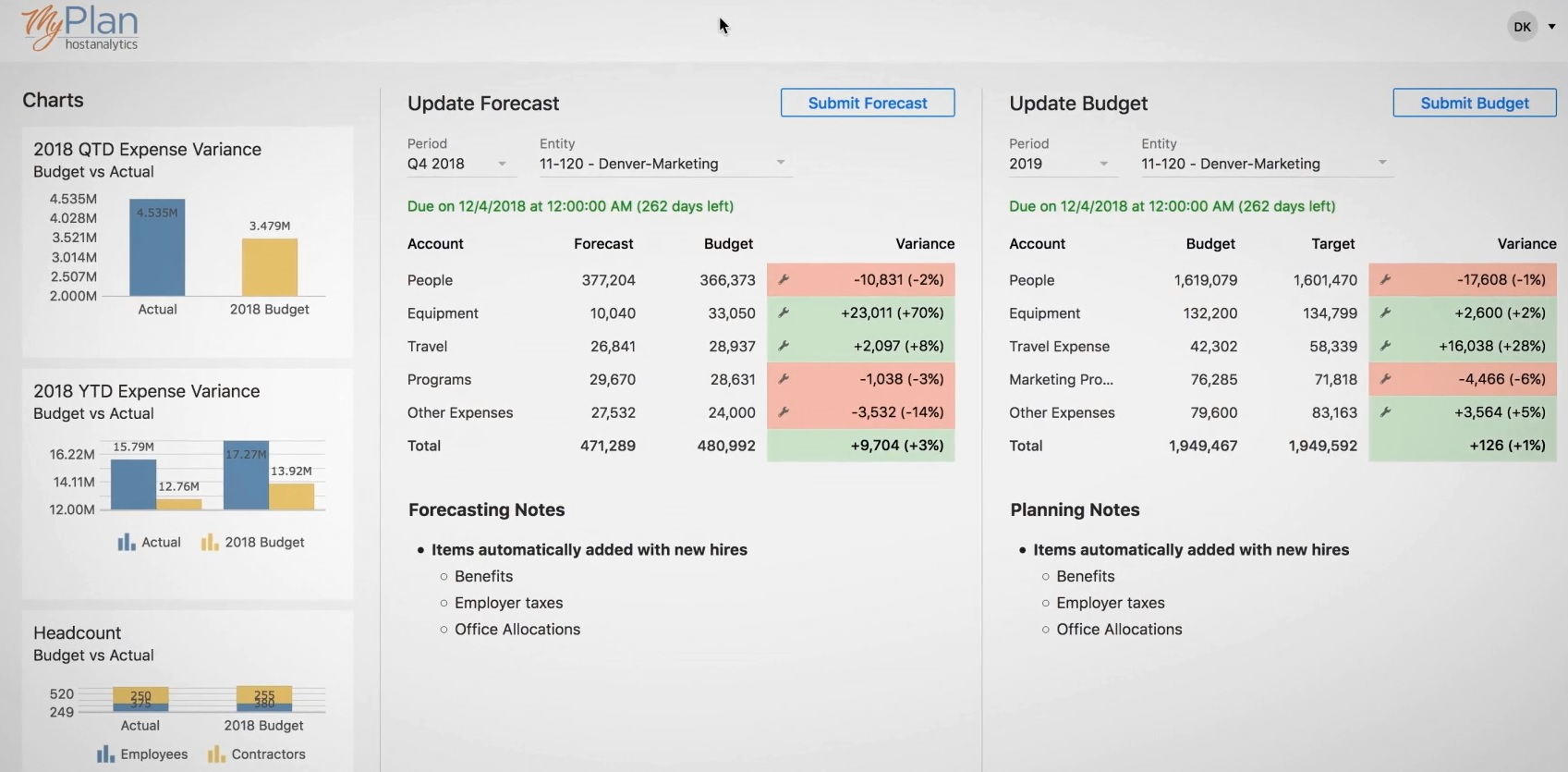

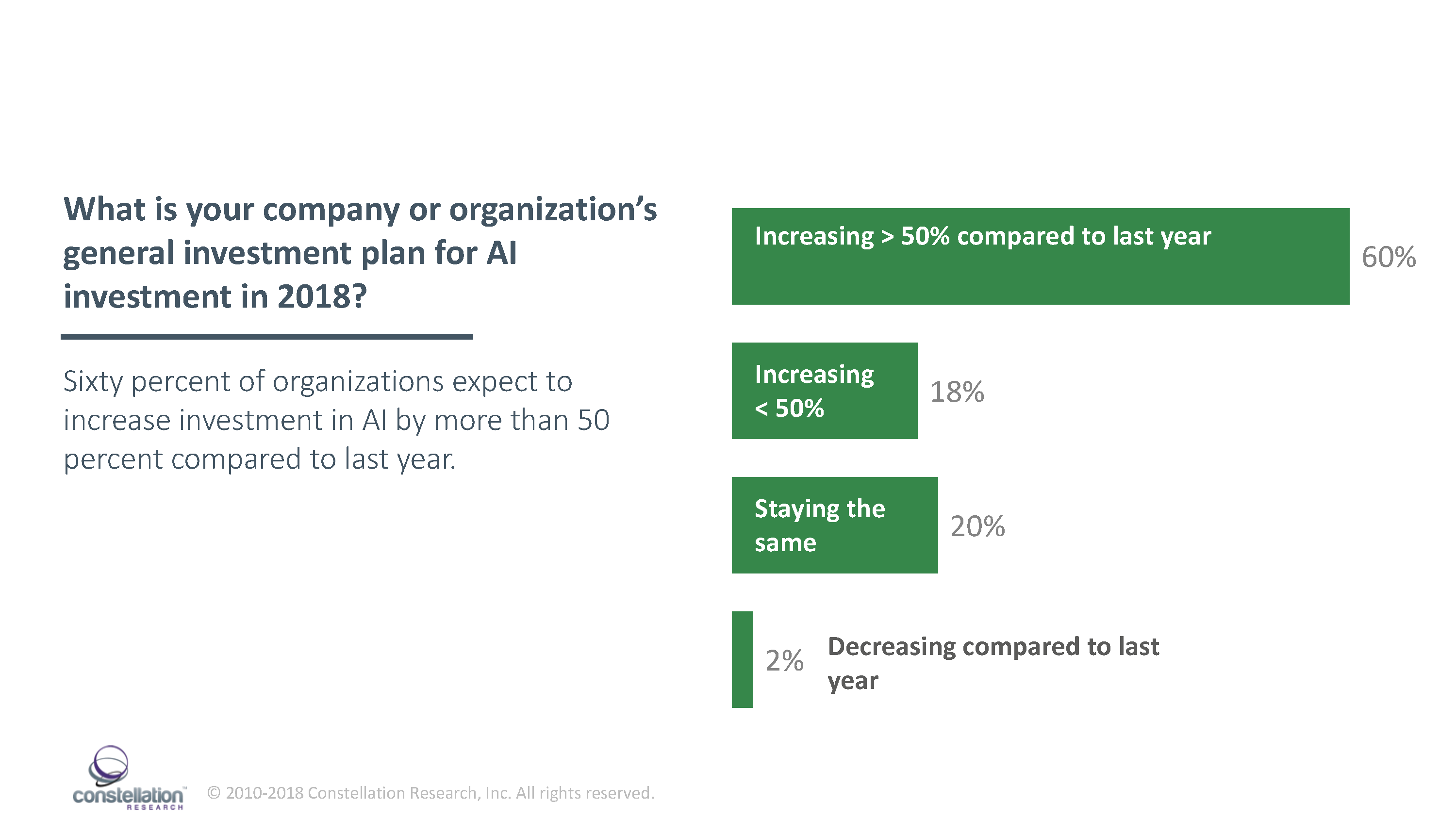

Sixty percent plan to increase investment by more than 50% compared to last year

Early adopters are ramping up investment in artificial intelligence (AI) technologies in 2018, according to an AI study conducted by Constellation Research. Sixty percent of C-level executives surveyed say their organizations plan to increase investment in AI by more than 50 percent compared to last year (Figure 1). AI budgets, however, remain relatively modest, with 92 percent of respondents expecting to spend less than $5 million on AI in 2018.

Figure 1. AI Budgets Rising in 2018

Modest AI budgets signal cautious adoption and deployment of foundational AI technologies for now. However, because AI often delivers successes exponentially, Constellation expects AI budgets to continue to rise by more than 50 percent annually for the next four to five years as AI R&D yields bigger successes at an increasing pace.
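To give a sense of what sustained growth of 50 percent a year implies, the short sketch below compounds a budget over four and five years. The $1 million starting figure is a hypothetical chosen purely for illustration and does not come from the study, and 50 percent is the lower bound of the growth Constellation expects, so the results are a floor rather than a forecast.

```python
def projected_budget(start: float, annual_growth: float, years: int) -> float:
    """Compound a starting budget by a fixed annual growth rate."""
    return start * (1 + annual_growth) ** years


if __name__ == "__main__":
    start = 1_000_000      # hypothetical starting AI budget, not a study figure
    annual_growth = 0.50   # lower bound of the growth rate cited in the text
    for years in (4, 5):
        total = projected_budget(start, annual_growth, years)
        print(f"After {years} years: ${total:,.0f}")
```

At that rate a budget grows roughly fivefold in four years and more than sevenfold in five, which is why budgets that look modest today can become substantial quickly.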

Firms investing in AI to improve the customer experience and drive growth

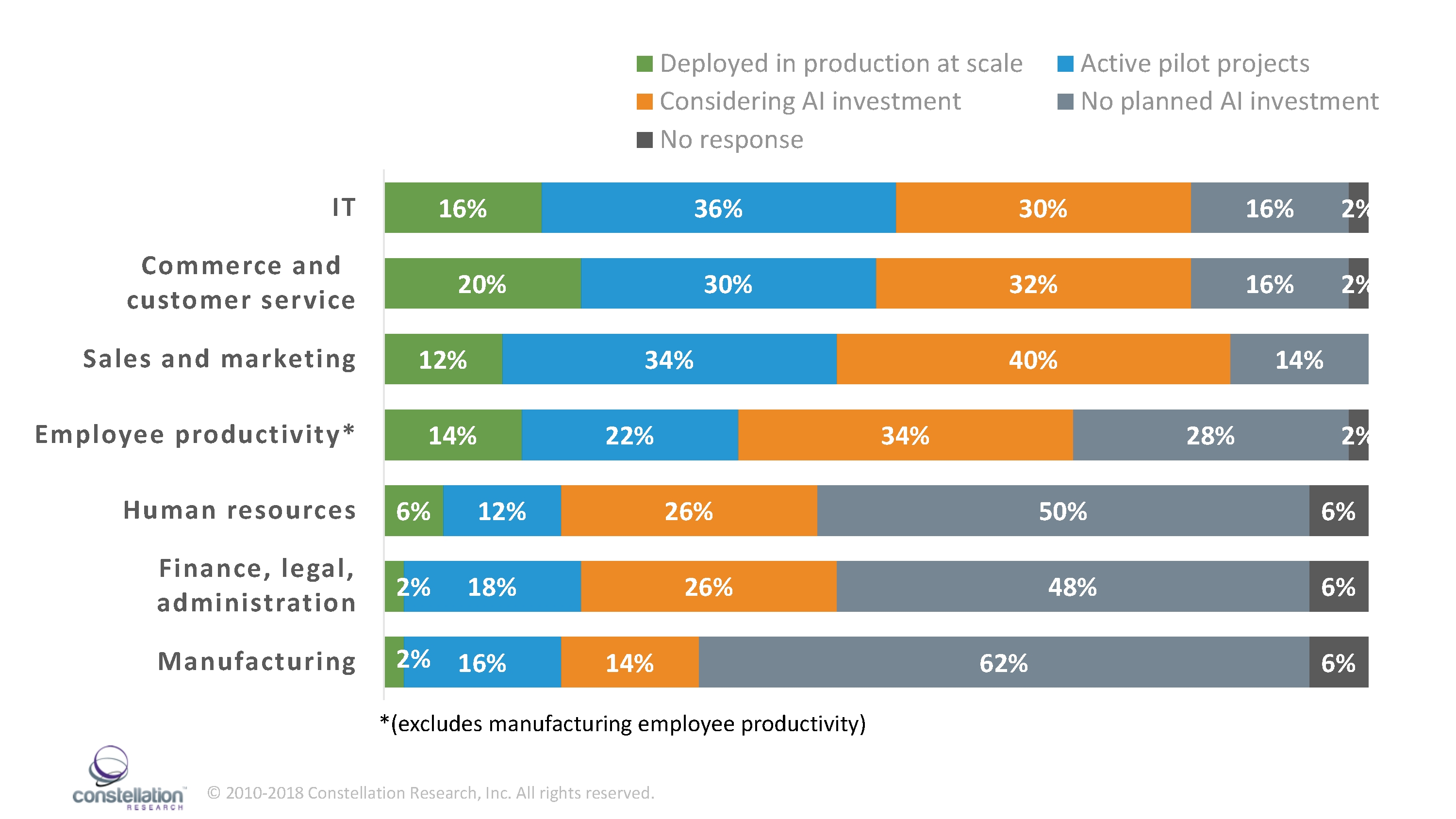

AI adoption highest among IT, customer service, and sales and marketing departments

The Constellation Research 2018 AI Study reveals firms are investing in AI to help improve the customer experience and drive growth. C-level executives surveyed by Constellation reported the highest levels of investment and adoption in the following departments: information technology; customer service/commerce; sales and marketing; and employee productivity.

Fifty-two percent of respondents report AI projects in production or in pilots in the IT department, 50 percent report production or pilot AI projects in customer service/commerce, 46 percent report AI projects in production or pilots in sales and marketing, and 36 percent report AI projects in production or in pilots in employee productivity (excludes manufacturing employee productivity) (Figure 2).

Figure 2. AI Spending By Department

Driving this trend, explains Cindy Zhou, a Constellation Research principal analyst, is the ease with which firms can acquire AI capabilities for these departments. Packaged apps with AI capabilities are widely available for sales, marketing, employee productivity and commerce, often as features of existing software or a platform that can simply be "turned on."

"As far as sales, marketing, and customer service organizations are concerned, AI is already here," says Zhou. "Salesforce and Microsoft, for example, sell applications with AI capabilities rolled into the core product, or they are sold as add-ons. Salesforce Lightning platform customers automatically get basic Salesforce Einstein capabilities with their contract. If customers need more robust AI capabilities, they can upgrade," she explains, speaking about Salesforce Einstein, the company's AI layer within the platform, which powers a variety of built-in and, in some cases, optional skills and application capabilities.

Highlights from the Study

- Widespread adoption with caveats: Seventy percent of respondents to the Constellation 2018 AI Survey indicate their organization currently employs some form of AI technology. This number, however, tells only part of the story. AI budgets remain relatively modest (see below), and, even among early adopters, no organizations in the study have deployed true AI.

- AI investment modest but growing fast: Ninety-two percent of respondents say they will spend less than $5 million on AI in 2018. However, respondents indicate significant year-over-year increases in AI budgets, with 60 percent of respondents planning an increase of more than 50 percent compared to last year.

- Firms employ three primary modes of AI development: developing homegrown applications by building out data science teams and using open source frameworks; developing homegrown applications using cloud-based machine learning (ML) and deep learning services; and adopting packaged applications with AI capabilities.

- Companies are investing in AI to help improve the customer experience and drive growth. When Constellation asked respondents to indicate the department(s) where AI is planned or implemented, IT, customer service/commerce, sales and marketing, and employee productivity ranked among the departments receiving the most planned or in-production AI spending. Driving this trend is the ease with which firms can acquire AI capabilities for these departments. Packaged apps with narrow AI capabilities are widely available for sales, marketing, employee productivity and commerce.

- Potential AI-proficient talent shortage. Rising demand for workforce talent with AI proficiency poses a potential challenge for organizations that want to implement AI solutions. Eighty percent of executives say their organizations need to hire additional human capital to implement AI solutions. Seventy-two percent of organizations say they obtain new talent for AI projects via recruiting. Taken together, these two trends have the potential to culminate in a talent war as more AI projects come online.

- Executives are feeling the pressure. Eighty-eight percent of executives say they expect their roles to change as their organizations adopt AI. Fifty-four percent of executives say they will need to understand how to restructure the business to accommodate new business models, 50 percent report a need to acquire data expertise and 48 percent report needing to learn how to motivate an AI-augmented team.

- Resistance to AI: Fifty-two percent of respondents report resistance to AI within the organization. Top sources of resistance among those who reported resistance to AI include lines of business at 67 percent, IT at 32 percent and HR at 25 percent.

- Data privacy strategies are not yet ubiquitous among firms using or developing AI. Among firms using AI, 23 percent say their organization does not have a data privacy strategy to protect personal information ingested by AI. Thirty-one percent of organizations currently using AI do not have an opt-in policy to handle personal information ingested by AI.

About the Study

The Constellation Research 2018 Artificial Intelligence Study draws on findings from the 2018 Constellation Research Artificial Intelligence Survey, which assesses the state of AI among the first movers, early adopters, and fast followers that make up Constellation's subscriber base.

The Survey asked C-level executives about the state of AI investment and deployment in their organizations, budgets for AI investment, technologies driving AI development, how AI might impact executives and the workforce, sources of internal resistance to AI, and privacy.

For the purposes of both the Survey and the Study, Constellation defines artificial intelligence as the culmination of technologies including deep learning, neural networks, natural language processing, and big data/predictive analytics to produce software that is self-improving, automatic, and emulates human intelligence.

Seventy-four percent of responses came from the C-suite, with CEOs making up 26 percent of the sample; CIOs, 20 percent; CTOs, 10 percent; CDOs, 6 percent; CMOs, 2 percent; and other C-level executives, 10 percent. There were no CFOs in the survey sample.

The sample consists of respondents from twelve different sectors, mostly in the United States. Sectors include automotive; consumer electronics; consulting/systems integration; finance/insurance/real estate; government; healthcare/medical/pharmaceutical; media/interactive/PR agency; news/entertainment; retail; technology (hardware, software, services); telecommunications; and travel/hospitality.

Total 2016 revenue of respondents' firms ranged from less than $10 million to more than $1 billion. Twenty-nine percent of respondents reported revenue of less than $10 million; 14 percent reported revenue between $10 million and $50 million; 29 percent reported revenue between $50 million and $500 million; and 29 percent reported revenue of over $1 billion.

Download a complimentary copy of the Constellation Research 2018 AI Study.
