Monday's Musings: Exponential Efficiency In The Age Of AI At Davos

AI Will Dominate The Davos Agenda, Yet Only A Privileged Few Can Pay For It

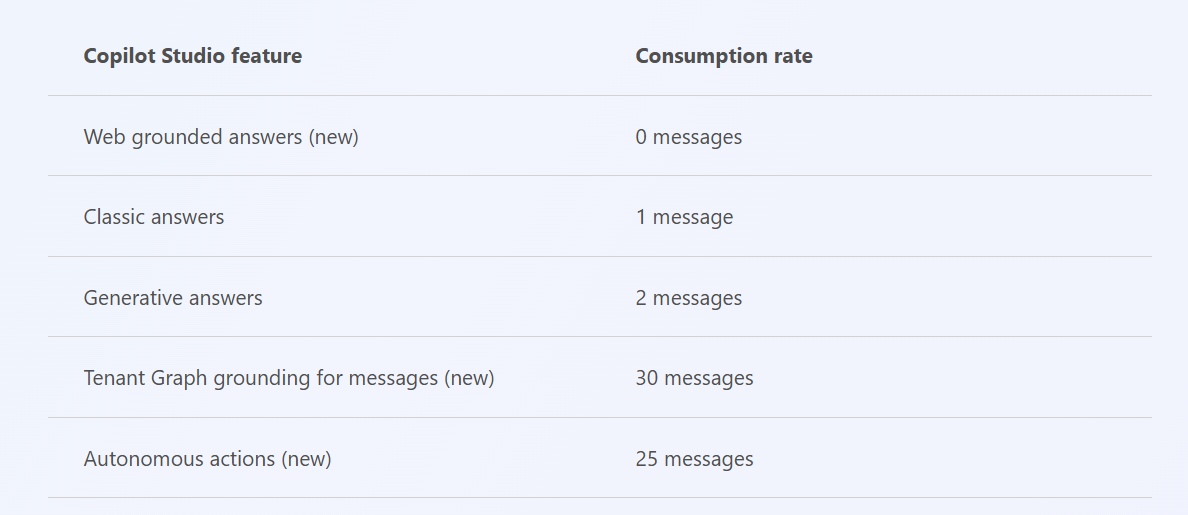

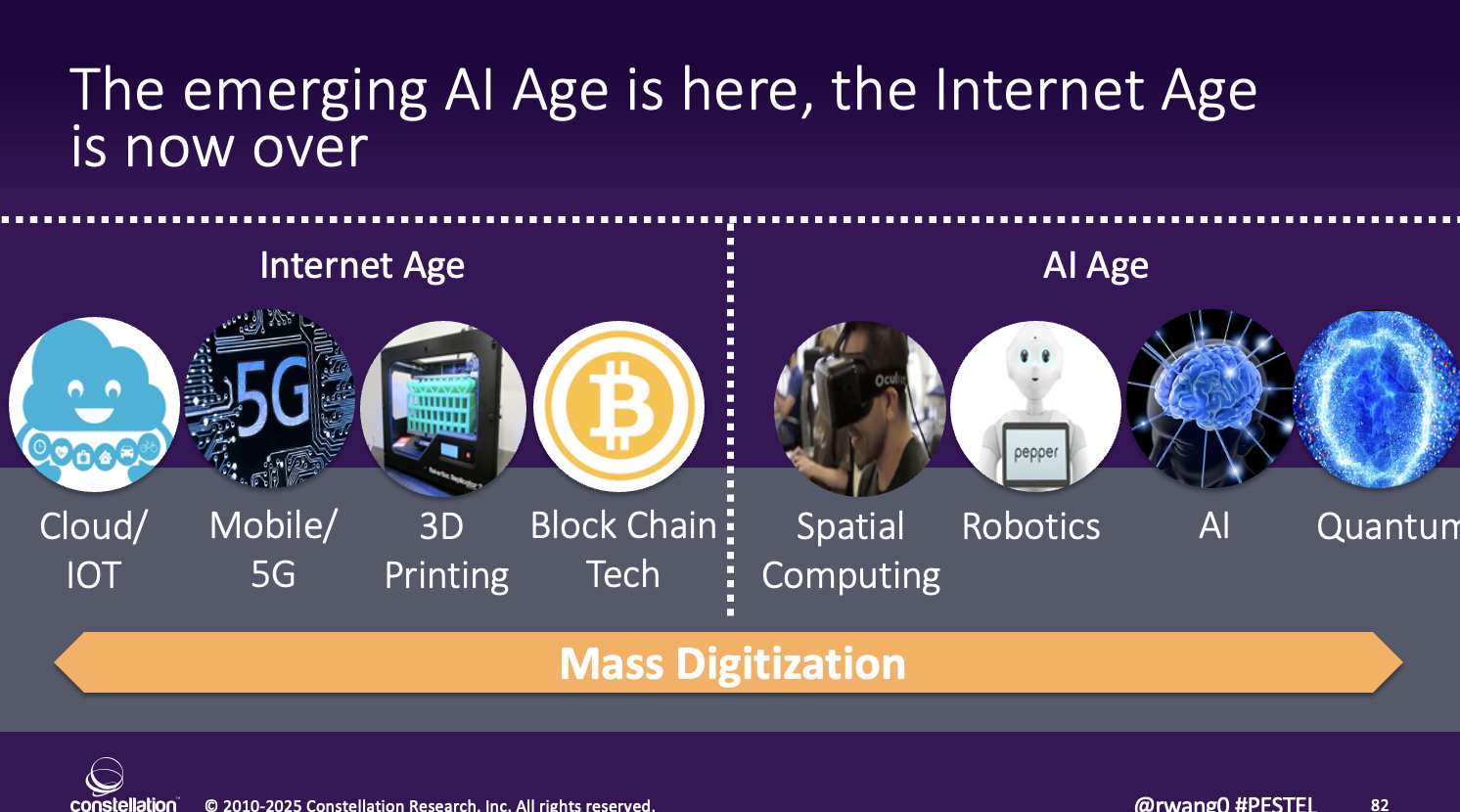

While Trump, China, and the global economy take center stage at this year’s World Economic Forum, AI will be the main topic du jour. Senior executives and policy wonks seek to understand how AI will transform businesses, societies, and economies. The AI Age truly is the 4th Industrial Revolution, not the other stuff that was peddled as conference fodder over the past five years in Davos (see Figure 1).

Figure 1. Welcome To The AI Era

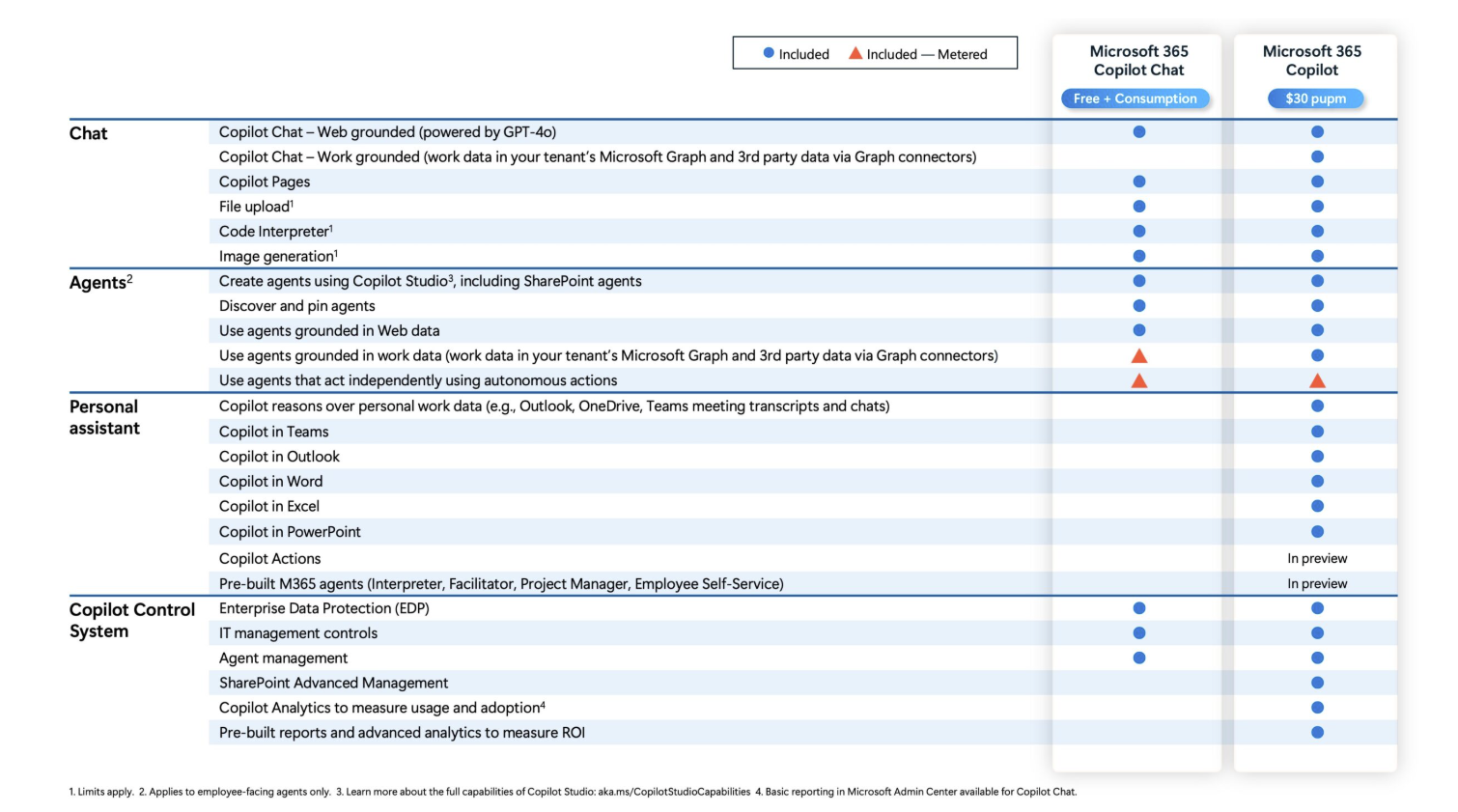

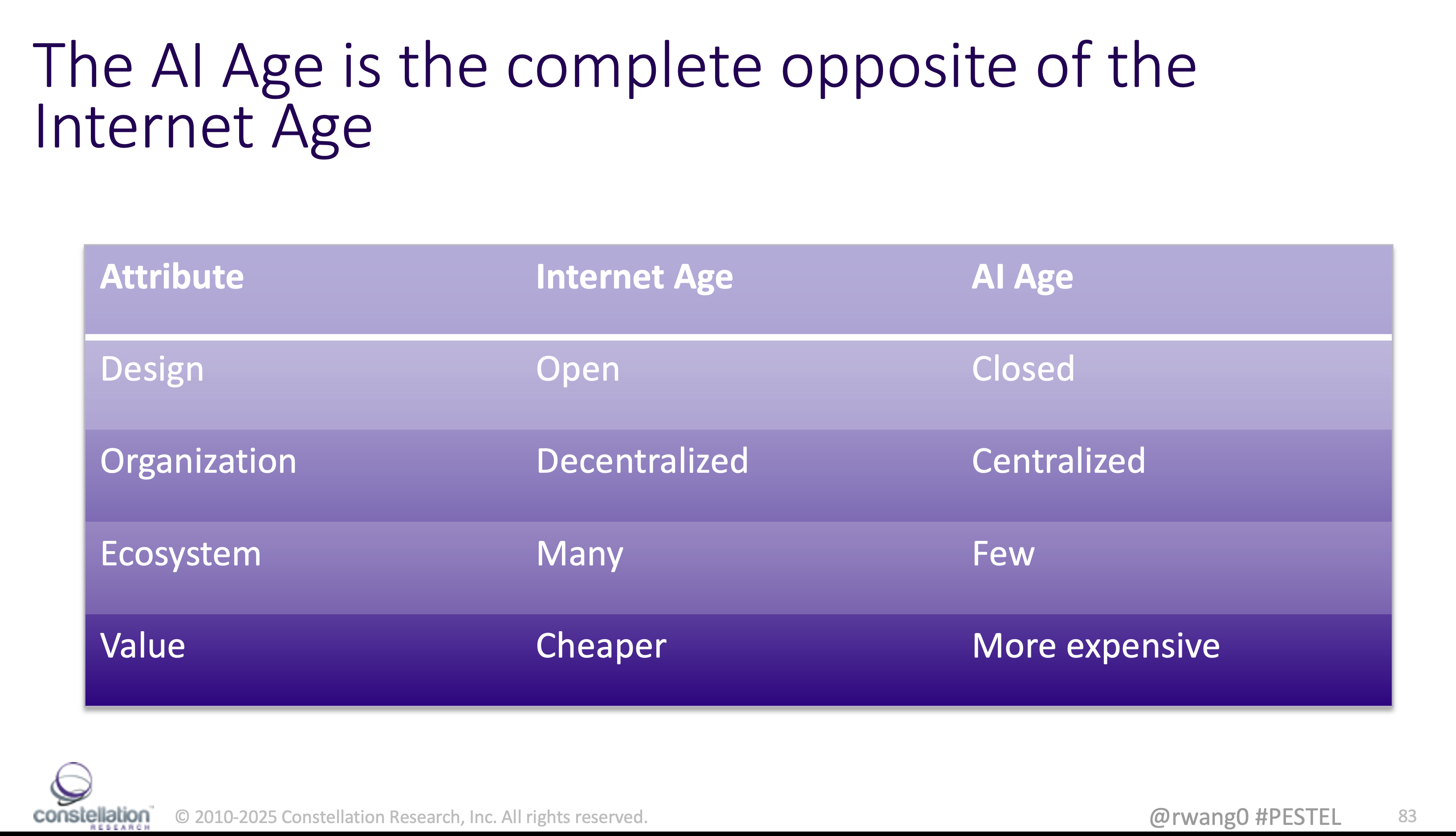

Unlike the Internet Age, where there was a push for massive decentralization, open systems, many players, and lower costs, the AI Age has so far become the opposite (see Figure 2).

Figure 2. AI Age Is Opposite of Internet Age

Today, AI systems are highly centralized and closed, with fewer players and much higher costs. In fact, Constellation Research expects the barriers to entry to create an AI divide worse than today’s digital divide. Many countries will not be able to afford the compute power, the energy, and the human power required to effectively manage AI. Even worse, while the world is abuzz about AI, the costs of AI continue to go up, not down.

Legacy Costs And Technical Debt Burden Most Organizations

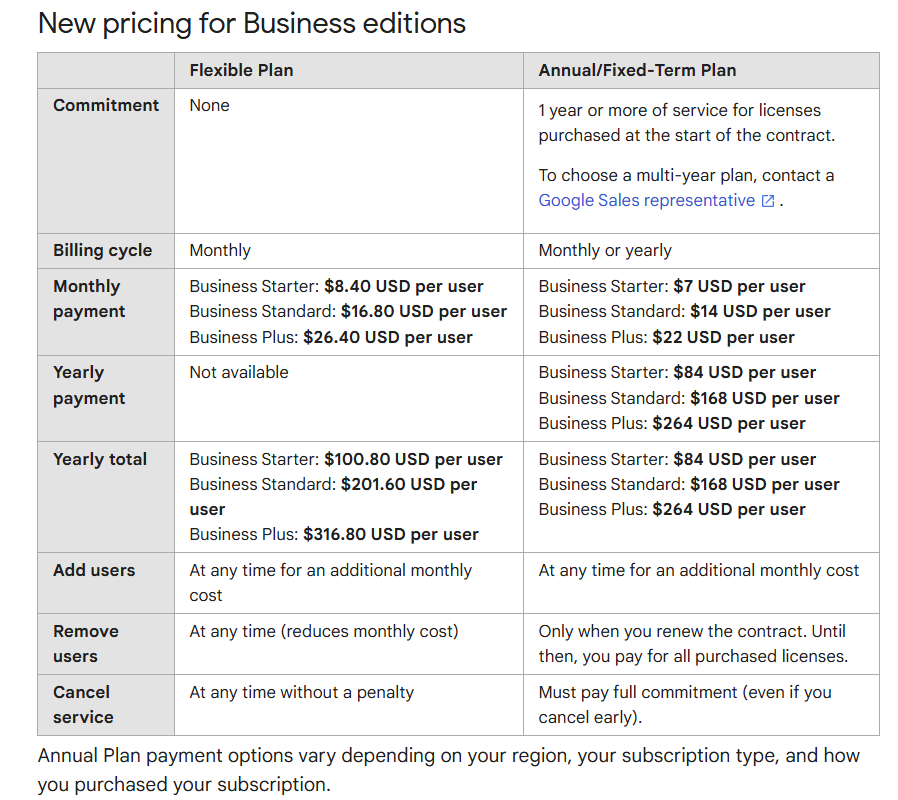

Concurrently, the legacy costs of the Internet Era and technical debt continue to pile up: hybrid cloud, legacy transactional systems, expensive and dated software, and rising maintenance fees from technology vendors trying to raise account values out of proportion to the innovation they deliver. HINT: most organizations are paying too much for technology without a proper return.

One favorite quote from a Constellation Executive Network (CEN) member:

"As a CFO, all my costs go down with volume and time, except for F!@#%G healthcare and tech (SaaS)."

This CFO’s astute comment reflects the current malaise in the SaaS world and the cost of hyperscaler compute under lift and shift. In fact, today’s cost structures are way too high, and organizations will continue to struggle to cut costs from the Internet Era to pay for the AI Era. The AI Era has yet to show that the investments will yield productivity gains for many. Hence the push for exponential efficiency to pay for AI and other innovations.

Exponential Efficiency Explained

In computer science, an exponential-time algorithm is one whose running time grows exponentially with its input size, so execution time balloons as the input gets larger. Exponential efficiency, as used here, is the reverse idea applied to business: gains in outcomes or reductions in cost measured in orders of magnitude, not in incremental percentages.

The simple rule for exponential efficiency in the first order: 10x better OR 1/10th the cost.

In the second order of exponential efficiency, organizations achieve: 10x better AND 1/10th the cost.
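To make the two orders concrete, here is a minimal sketch in Python. It assumes that efficiency can be summarized as value delivered per dollar spent, which is our simplification and not a formal definition from the research. The function name `efficiency_gain` is hypothetical.

```python
def efficiency_gain(value_multiplier: float, cost_multiplier: float) -> float:
    """Return the change in value delivered per dollar spent.

    value_multiplier: new value / old value (10.0 means "10x better")
    cost_multiplier:  new cost / old cost   (0.1 means "1/10th the cost")
    """
    if cost_multiplier <= 0:
        raise ValueError("cost_multiplier must be positive")
    return value_multiplier / cost_multiplier


# First order: 10x better OR 1/10th the cost.
first_order_better = efficiency_gain(10.0, 1.0)
first_order_cheaper = efficiency_gain(1.0, 0.1)

# Second order: 10x better AND 1/10th the cost.
second_order = efficiency_gain(10.0, 0.1)

print(round(first_order_better), round(first_order_cheaper), round(second_order))
```

Under this assumption, either first-order path yields roughly a tenfold gain in value per dollar, while the second order compounds the two into roughly a hundredfold gain.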

This continues as organizations achieve the holy trifecta of faster, better, AND cheaper. Yes, all three can be achieved when exponential efficiency is applied. Now, many readers may wonder whether this is possible. Recently both X (formerly Twitter) and Meta (formerly Facebook) found ways to cut their engineering teams with both AI and automation to achieve exponential efficiency. X cut two-thirds of its engineering staff and other roles with little detriment. Meta continues to cut one-third or more of its engineering teams and other roles with very little detriment. In fact, in back office financial operations, they may be able to get to 50% automation in the next two years.

Exponential Efficiency Will Help Pay For AI

Organizations see the potential of AI, but they need to fund more than its high cost: they must pay for innovation out of cost savings. Today’s cost structures are no longer sustainable for the AI era. Legacy infrastructure costs must be cut to 1/10th, or improvements must be 10 times better, in order to achieve exponential efficiency. In the Internet Era, telecommunications, commerce, distribution, and financial services costs were cut exponentially to make way for this new transformational technology. These innovations paved the way for thousands of new business models and monetization techniques, leading to explosive growth and societal advancement. In almost every industry, the dawn of exponential efficiency has arrived, yet legacy players struggle to grasp the impacts. Here are five industries where exponential efficiency has arrived:

- Financial services. SWIFT wires run about $25 a transaction. ACH costs about $2.50 per payment. ATM costs run about $1.00. In India, the government set up a payment system for over 1 billion people that costs next to nothing. Why does a Mastercard or Visa or Amex payment gateway exist? These merchant and user fees have been disrupted, and smart enterprises will stop paying soon.

- Defense and military. Expensive weapons programs create million-dollar missiles to be used on the battlefield. Meanwhile, $100 drones can be more effective. This consumerization of dual-use technologies will continue with robotics, as future kinetic wars may not be fought with human combatants. The AI-controlled technology behind today’s gorgeous drone shows is dual use and can be applied to warfare.

- Enterprise software. Users locked into legacy systems pay $500 to $1000 per user per month for CRM, ERP, collaboration, office productivity, video conferencing, etc. An Indian software pioneer, Zoho, offers all this for $10 per user per month. The cloud vendors who were the young Turks challenging legacy vendors are now the ones holding customers hostage and hindering innovation. The cycle has been repeated, with a lot of money wasted on slow innovation and very little return to enterprises.

- Energy and utilities. The cost of electricity continues to go up, while the raw carbon used to generate power is lower in cost when adjusted for inflation. Why? The cost of pensions and the capital expense of power plants and transmission continue to rise. Many localities pay up to $0.25/kWh for not just transmission, but generation. In less than a decade, China will be able to produce energy at close to zero cost with hydro, nuclear, and battery storage from solar. Nuclear costs China $0.05 per kWh. Any country that succeeds at lowering power costs will dominate economies with lower cost manufacturing, compute, and AI.

- Public sector. The US will begin an exercise to take the bloat out of government. The Department of Government Efficiency (not an official department) will focus on fraud, waste, abuse, and efficiency. With some of the best minds, this effort could create an efficiency in the public sector that would have ripple effects throughout the economy and free up funds for more quality of life spending for society. This would give the US another advantage on the world stage beyond digitization.
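The price points quoted in the list above can be checked against the 1/10th-the-cost rule with some quick arithmetic. The sketch below is illustrative only: it uses just the figures cited in this post, the pairings are rough comparisons and not strictly like-for-like products, and the names `quoted_prices` and `cost_ratio` are hypothetical.

```python
# Per-unit prices quoted in the list above (US dollars).
# Each pair is (incumbent price, lower-cost alternative).
quoted_prices = {
    "SWIFT wire vs. ACH payment": (25.00, 2.50),
    "Legacy software (low end) vs. Zoho, per user per month": (500.00, 10.00),
    "Legacy software (high end) vs. Zoho, per user per month": (1000.00, 10.00),
    "Electricity at $0.25/kWh vs. nuclear in China at $0.05/kWh": (0.25, 0.05),
}

TENFOLD = 10.0  # the first-order bar: 1/10th the cost


def cost_ratio(incumbent: float, alternative: float) -> float:
    """Return how many times more expensive the incumbent is."""
    return incumbent / alternative


for label, (incumbent, alternative) in quoted_prices.items():
    ratio = cost_ratio(incumbent, alternative)
    verdict = "meets" if ratio >= TENFOLD else "falls short of"
    print(f"{label}: {ratio:.0f}x, {verdict} the 1/10th-the-cost bar")
```

On these figures, the SWIFT-to-ACH gap is 10x and the enterprise software gap is 50x to 100x, while the two electricity prices quoted are 5x apart.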

Senior executives must prepare for a swath of new competitors to create exponential efficiency disruption. For example, in the consulting industry, the combination of automation and AI, a glut of young computer science graduates, and the carnage of layoffs for older workers will open the opportunity for new firms built on AI to operate with 1/10th the labor. Expect the first $1 billion consulting firm to achieve revenue per employee of $10 million to $25 million in the next five years.
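The headcount implied by that prediction is simple division. The sketch below assumes the $1 billion figure refers to annual revenue, which the prediction does not state explicitly. The function name `implied_headcount` is hypothetical.

```python
FIRM_REVENUE = 1_000_000_000  # assumed: $1 billion in annual revenue


def implied_headcount(revenue: float, revenue_per_employee: float) -> float:
    """Return the number of employees implied by a revenue-per-employee figure."""
    return revenue / revenue_per_employee


# Higher revenue per employee means fewer people are needed.
low = implied_headcount(FIRM_REVENUE, 25_000_000)
high = implied_headcount(FIRM_REVENUE, 10_000_000)

print(f"{low:.0f} to {high:.0f} employees")
```

Under that assumption, the prediction describes a firm of roughly 40 to 100 people.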

The Bottom Line: Put AI To Work On Exponential Efficiency

At the 2024 Constellation Connected Enterprise executive summit at the Ritz-Carlton Half Moon Bay, exponential efficiency pioneer David Giambruno showed how he achieved $712 million in hard cost savings across five different industries. David used a combination of AI and automation with bare metal cloud compute to deliver a 68% reduction in IT costs in 21 months. His teams were able to rewrite legacy code and reduce overall cloud costs with this novel approach.

Time is of the essence. Exponential efficiency funds the AI projects which set up the foundation for organizations achieving Second Order exponential efficiency gains. PE firms looking at turnaround playbooks will start with a thesis on exponential efficiency before starting the turnaround. Investment bankers will roll out their exponential efficiency playbooks for post-merger integration models. Be warned. Any Global 2000 company missing the exponential efficiency playbook will be left behind without the resources to fund their AI investments.

Your POV

How will you achieve exponential efficiency? Will you aim for 10x or 1/10th?

Add your comments to the blog or reach me via email: R (at) ConstellationR (dot) com or R (at) SoftwareInsider (dot) org. Please let us know if you need help with your strategy efforts. Here’s how we can assist:

- Developing your metaverse and digital business strategy

- Connecting with other pioneers

- Sharing best practices

- Vendor selection

- Implementation partner selection

- Providing contract negotiations and software licensing support

- Demystifying software licensing

Reprints can be purchased through Constellation Research, Inc. To request official reprints in PDF format, please contact Sales.

Disclosures

Although we work closely with many mega software vendors, we want you to trust us. For the full disclosure policy, stay tuned for the full client list on the Constellation Research website. * Not responsible for any factual errors or omissions. However, happy to correct any errors upon email receipt.

Constellation Research recommends that readers consult a stock professional for their investment guidance. Investors should understand the potential conflicts of interest analysts might face. Constellation does not underwrite or own the securities of the companies the analysts cover. Analysts themselves sometimes own stocks in the companies they cover—either directly or indirectly, such as through employee stock-purchase pools in which they and their colleagues participate. As a general matter, investors should not rely solely on an analyst’s recommendation when deciding whether to buy, hold, or sell a stock. Instead, they should also do their own research—such as reading the prospectus for new companies or for public companies, the quarterly and annual reports filed with the SEC—to confirm whether a particular investment is appropriate for them in light of their individual financial circumstances.

Copyright © 2001 – 2025 R Wang and Insider Associates, LLC All rights reserved.

Contact the Sales team to purchase this report on an a la carte basis or join the Constellation Executive Network.
