News Analysis – SAP slices and dices into more Cloud, and of course more HANA

Vice President and Principal Analyst

Constellation Research

Holger Mueller is VP and Principal Analyst for Constellation Research for the fundamental enablers of the cloud, IaaS, PaaS and next generation Applications, with forays up the tech stack into BigData and Analytics, HR Tech, and sometimes SaaS. Holger provides strategy and counsel to key clients, including Chief Information Officers, Chief Technology Officers, Chief Product Officers, Chief HR Officers, investment analysts, venture capitalists, sell-side firms, and technology buyers.

Coverage Areas:

Future of Work

Tech Optimization & Innovation

Background:

Before joining Constellation Research, Mueller was VP of Products for NorthgateArinso, a KKR company. There, he led the transformation of products to the cloud and laid the foundation for new Business Process as a…...

In a virtual press conference, SAP’s Vishal Sikka, in conversation with Jonathan Becher, walked through two press releases the company had just published.

Let’s look first at the key statements in the cloud press release:

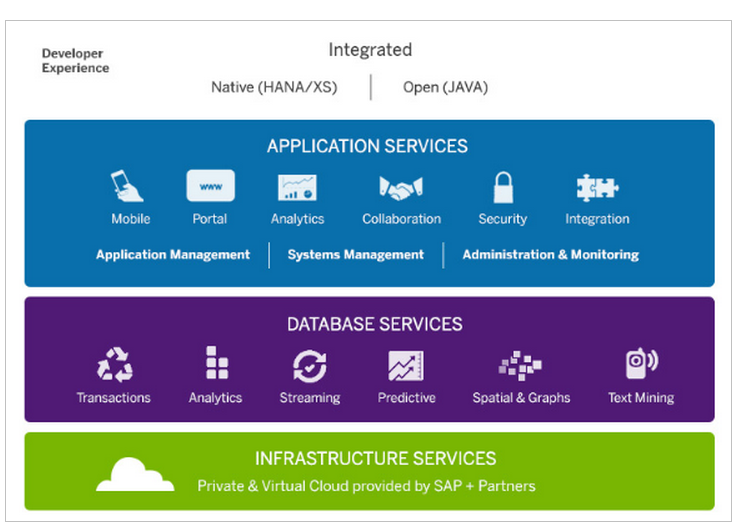

SAP announced new offerings for SAP HANA Cloud Platform. Customers now have the ability to choose from three offerings: SAP HANA AppServices, SAP HANA DBServices, and SAP HANA Infrastructure Services.

MyPOV –

It is good to see SAP making its products and services more consumable. The separation into app, database and infrastructure services makes sense from an SAP perspective, given HANA’s prominence in marketing and product plans. But it may confuse buyers who are used either to being completely shielded from physical details (e.g. Salesforce.com) or to much more granular details (e.g. Amazon’s AWS, Google's GCP).

SAP HANA Offers New Pricing and Consumption Model

New pricing options for SAP HANA intend to broaden the reach of the SAP HANA platform and make it easily accessible to everyone starting with a base price with add-on options available as desired. Customers buy through a consumption model and can either implement end-to-end platform use cases or choose additional options as needed such as predictive analytics, spatial processing and planning. This significantly increases the opportunities to get started with SAP HANA and affords customers the ultimate ability to innovate.

MyPOV –

More granular pricing is usually a good strategy, so kudos to SAP. But SAP needs to take care not to make pricing too complex, and to get the price points right. It is too early to tell whether this first release achieves that.

SAP HANA Cloud Platform Delivers Choice With New Offerings

To address the growing apps economy, SAP announced new and enhanced offerings for SAP HANA Cloud Platform. Startups, ISVs and customers can now build new data-driven applications in the cloud. This platform-as-a-service (PaaS) offers in memory-centric infrastructure, database and application services to build, extend, deploy and run applications. SAP HANA Cloud Platform is available today via SAP HANA Marketplace. Customers can gain access to SAP HANA in as little as 30 minutes and immediately benefit from a unified platform service for next-gen apps, and can easily buy, deploy and run with the flexibility of a subscription contract.

MyPOV –

Unfortunately, the press conference was light on details about SAP’s PaaS strategy. Earlier in the week I had hoped SAP might unveil something in conjunction with Pivotal’s Cloud Foundry, similar to what IBM has done with Cloud Foundry and BlueMix. And many of the app services (around analytics, mobile etc.) are similar to the ones offered on BlueMix. Overall, I remain undecided on SAP’s PaaS strategy until we hear more. SAP is still working through the River / RAD announcement and plans, which may be why it will come back with more PaaS details later in the year.

A very good representation of the services, by Matthias Steiner, from here.

SAP HANA Marketplace Simplifies Purchasing Experience

The new SAP HANA Cloud Platform offerings are available for a simplified trial and purchase experience in sizes ranging from 128 GB to 1 TB of memory on SAP HANA Marketplace, an online store that lets customers learn, try, and buy applications powered by SAP HANA. SAP Fiori™ apps and hundreds of startups and ISVs offerings are also available on the site.

MyPOV –

The improvements and new UI of the HANA Marketplace are probably among the key advances we saw today. Procuring apps and services looks simple and easy, though I have not purchased anything myself. Fiori looms prominently across all offerings, but I am not sure how Fiori will work through the marketplace, given its dependency on the Suite being available somewhere. Finally, the monthly prices do not bode well for the elasticity of the offering, which has been one of my concerns about HANA since the early days.

SAP HANA Innovation Breakthrough With SAP Genomic Analyzer

The SAP HANA platform continues to change the world of compute and what is possible. SAP is paving the way for real-time personalized medicine with SAP Genomic Analyzer, a new application powered by SAP HANA that aims to allow researchers and clinicians to find breakthrough insights from genomics data in real time. Currently in the early adoption phase, key planned benefits include faster data processing through various stages of genomics pipeline — alignment, annotation and analysis — and immediate analysis of data in minutes rather than days. Researchers are envisioned to be able to analyze genetic variants of large-scale cohorts to find patterns of variation within and between populations. With better identification of clinically actionable genetic variants and real-time visibility into “in-the moment” situations, clinicians shall be able to understand and personalize care to patients with diseases such as Type II diabetes.

MyPOV –

It looks like SAP is on to something with genetic processing. When the product was first announced, my unrepresentative sample of scientists I know who work in genetics, and whom I polled, shrugged their shoulders. Fast forward 6-8 months and they are asking me about HANA, a good turnaround for SAP.

In a separate press release, SAP said that it has broken, together with partners, the world record for the largest data warehouse:

This new world record demonstrates the ability of SAP HANA and SAP IQ to efficiently handle extreme-scale enterprise data warehouse and Big Data analytics. SAP and its partners had previously set a world record for loading and indexing Big Data at 34.3 Terabytes per hour.

A team of engineers from SAP, BMMsoft, HP, Intel, NetApp, and Red Hat, built the data warehouse using SAP HANA and SAP IQ 16, with BMMsoft Federated EDMT running on HP DL580 servers using Intel® Xeon® E7-4870 processors under Red Hat Enterprise Linux 6 and NetApp FAS6290 and E5460 storage. The development and testing of the 12.1PB data warehouse was conducted by the team at the SAP/Intel Petascale lab in Santa Clara, Calif., and audited by InfoSizing, an independent Transaction Processing Council certified auditor.

MyPOV –

This announcement is key, as HANA critics have been arguing that in-memory is too expensive for running large-scale data warehouses. We now have proof of scale. But the missing details on what was actually done, what the business benefit is, and what it costs to run a 12.1PB data warehouse are screaming for SAP to say more.

MyPOV

SAP delivered some key advances in moving its offerings to the cloud, in an entertaining and informative format. I hope to see the Sikka / Becher combo again soon, as it worked very well.

That said, I would have preferred SAP to provide a more practical slicing of services for HANA: price the cost of storing data in memory, of moving it onto or off another medium, of processing it, and of any networking needed to get it there. These are the intuitive parameters for cloud database pricing. It is fair and fine to bundle, but these are the parameters of the costs actually incurred. Hopefully, sooner or later SAP will move closer to these categories, or better ones.
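To make the four cost parameters above concrete, here is a minimal sketch of a usage-based bill that itemizes them. The class names, the function `monthly_bill` and all rates are hypothetical and chosen for illustration only; they are not SAP's (or any vendor's) actual prices or API.

```python
from dataclasses import dataclass


# Hypothetical per-unit rates, for illustration only (not actual SAP HANA prices).
@dataclass(frozen=True)
class Rates:
    memory_per_gb_hour: float     # keeping data in memory
    tiering_per_gb: float         # moving data onto / off another medium
    compute_per_core_hour: float  # processing the data
    network_per_gb: float         # getting data in and out


# Measured consumption for one billing period (hypothetical units).
@dataclass(frozen=True)
class Usage:
    memory_gb_hours: float
    gb_tiered: float
    core_hours: float
    gb_transferred: float


def monthly_bill(usage: Usage, rates: Rates) -> dict[str, float]:
    """Return the cost of each parameter plus the total, so a bundle can be compared line by line."""
    items = {
        "memory": usage.memory_gb_hours * rates.memory_per_gb_hour,
        "tiering": usage.gb_tiered * rates.tiering_per_gb,
        "processing": usage.core_hours * rates.compute_per_core_hour,
        "network": usage.gb_transferred * rates.network_per_gb,
    }
    # The right-hand side is evaluated before "total" is added, so it sums only the four items.
    items["total"] = sum(items.values())
    return items


if __name__ == "__main__":
    rates = Rates(memory_per_gb_hour=0.01, tiering_per_gb=0.05,
                  compute_per_core_hour=0.5, network_per_gb=0.1)
    # 128 GB held in memory and 16 cores busy for a 30-day month (720 hours).
    usage = Usage(memory_gb_hours=128 * 720, gb_tiered=500,
                  core_hours=16 * 720, gb_transferred=200)
    for item, cost in monthly_bill(usage, rates).items():
        print(f"{item:>10}: {cost:10.2f}")
```

Because every line item scales with measured usage, halving the in-memory footprint halves the memory line, which is the kind of elasticity that a flat monthly price does not convey.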

In the meantime, SAP deserves kudos for moving in the right direction.

Lastly, I still see SAP as an enterprise software automation company (despite my own findings), not primarily a technology company, so hearing SAP talk about hardware specs and performance is still something I need to get used to. But then SAP should be the one vendor that talks about the business impact and benefits of technology with flair, at least once every 10 minutes of a press conference.

I compiled a Storify tweet collection, too; you can find it here. Have a look: the Twitter conversation with the pundits is worth it.
