Europe and US move on from Safe Harbor privacy - but not far

For many years, American businesses have enjoyed a bit of special treatment under European data privacy laws. The so-called "Safe Harbor" arrangement was negotiated by the US Department of Commerce so that companies could self-declare broad compliance with data security rules. Normally organisations are not permitted to move Personally Identifiable Information (PII) about Europeans beyond the EU unless the destination has equivalent privacy measures in place. The "Safe Harbor" arrangement was a shortcut around full compliance; as such it was widely derided by privacy advocates outside the USA, and for some years had been questioned by the more activist regulators in Europe. And so it seemed inevitable that the arrangement would eventually be annulled, as it was last October.

With the threat of most personal data flows from Europe into America being halted, US and EU trade officials have worked overtime for five months to strike a new deal. Today (January 29) the US Department of Commerce announced the "EU-US Privacy Shield".

The Privacy Shield is good news for commerce, of course. But I hope that in the excitement, American businesses don't lose sight of the broader sweep of privacy law. Even better would be to look beyond compliance, and take the opportunity to rethink privacy, because there is more to it than security and regulatory shortcuts.

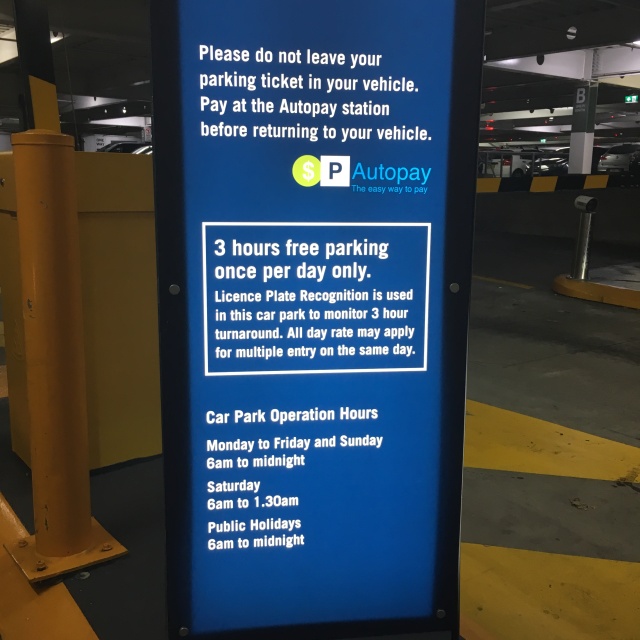

The Privacy Shield and the earlier Safe Harbor arrangement are really only about satisfying one corner of European data protection laws, namely transborder flows. The transborder data flow rules basically say you must not move personal data from an EU state into a jurisdiction where the privacy protections are weaker than in Europe. Many countries, including Australia, actually have the same sort of laws. Normally, as a business, you would have to demonstrate to a European data protection authority (DPA) that your information handling complies with EU laws, either by situating your data centre in a similar jurisdiction, or by implementing legally binding measures for safeguarding data to EU standards. This is why so many cloud service providers are now building fresh infrastructure in the EU.

But there is more to privacy than security and data centre location. American businesses must not think that just because there is a new get-out-of-jail clause for transborder flows, their privacy obligations are met. Much more important than raw data security are the bedrocks of privacy: Collection Limitation, Usage Limitation, and Transparency.

Basic data privacy laws the world over require organisations to exercise restraint and openness. That is, Personal Information must not be collected without a real demonstrated need (or without consent); once collected for a primary purpose, Personal Information should not be used for unrelated secondary purposes; and individuals must be given reasonable notice of what personal data is being collected about them, how it is collected, and why. It's worth repeating: general data protection is not unique to Europe; at last count, over 100 countries around the world had passed similar laws; see Prof Graham Greenleaf's Global Tables of Data Privacy Laws and Bills, January 2015.

Over and above Safe Harbor, American businesses have suffered some major privacy missteps. The Privacy Shield isn't going to make overall privacy better by magic.

For instance, Google in 2010 was caught over-collecting personal information through its StreetView cars. It is widely known (and perfectly acceptable) that mapping companies use the positions of unique WiFi routers for their geolocation databases. Google continuously collects WiFi IDs and coordinates via its StreetView cars. The privacy problem here was that some of the StreetView cars were also collecting unencrypted WiFi traffic (for "research purposes") whenever they came across it. In over a dozen countries around the world, Google admitted it had breached local privacy laws, apologised, and deleted the collected WiFi contents. The matter was settled in just a few months in places like Korea, Japan and Australia. But in the US, where there is no general collection limitation privacy rule, Google has been defending this practice. The strongest legislation that seems to apply is the wiretap laws, but their application to the Internet is complex. And so it's taken years, and the matter is still not resolved.

I don't know why Google doesn't see that a privacy breach in the rest of the world is a privacy breach in the US, and instead of fighting it, concede that the collection of WiFi traffic was unnecessary and wrong.

Other proof that European privacy law is deeper and broader than the Privacy Shield is found in social networking mishaps. Over the years, many of Facebook's business practices, for instance, have been found unlawful in the EU. Recently there was the final ruling against "Find Friends", which uploads the contact details of third parties without their consent. Before that there was the long-running dispute over biometric photo tagging. When Facebook generates tag suggestions, what they're doing is running facial recognition algorithms over photos in their vast store of albums, without the consent of the people in those photos. Identifying otherwise anonymous people, without consent (and without restraint as to what might be done next with that new PII), seems to be unlawful under the Collection Limitation and Usage Limitation principles.

In 2012, Facebook was required to shut down their photo tagging in Europe. They have been trying to re-introduce it ever since. Whether they are successful or not will have nothing to do with the "Privacy Shield".

The Privacy Shield comes into a troubled trans-Atlantic privacy environment. Whether or not the new EU-US arrangement fares better than the Safe Harbor remains to be seen. But in any case, since the Privacy Shield really aims to free up business access to data, sadly it's unlikely to do much good for true privacy.

The examples cited here are special cases of the collision of Big Data with data privacy, which is one of my special interest areas. See for example "Big Privacy" Rises to the Challenges of Big Data.
