Google Cloud Next 17 Recap

Google Cloud is adding must-have enterprise features and scaling the business to meet data platform, machine learning and AI demand. Here’s a progress report.

Down Report - Human error takes AWS S3 down in US-EAST-1 - and it is felt - 3.8 Cloud Load Toads

To make the effort a little more fun, we assign ‘Cloud Load Toads’ to the overall event and to each contributing circumstance. We mean no disrespect to the ‘load toads’ who work valiantly in the world’s air forces, but we liked the suggestion of our colleague Alan Lepofsky (@alanlepo), who came up with the term ‘Cloud Load Toad’.

On the ‘Cloud Load Toad’ scale, which goes from 1 (bad but OK, can happen) to 5 (very bad, should never ever happen), we rate the severity of the event overall and of the events that led to it.

AWS S3 Down in US-EAST-1

First of all, kudos to AWS, which published its post mortem (see here) about 48 hours after the event, faster than usual, judging from past downtime events. But then each cloud outage is different, and the root cause here, manual error, is easier to establish than, e.g., troubleshooting a battery fire that destroys its very evidence (think Samsung). But let’s dissect the post mortem report:

We’d like to give you some additional information about the service disruption that occurred in the Northern Virginia (US-EAST-1) Region on the morning of February 28th. The Amazon Simple Storage Service (S3) team was debugging an issue causing the S3 billing system to progress more slowly than expected.

MyPOV – Certainly, production and billing systems need to be connected, and in many scenarios the production system can create issues through the load it triggers on the billing system. But a production system should never be able to be stopped by an administrative system such as billing. Production should be kept running; billing can be worried about later. My speculation is that the S3 billing system uses S3, too, creating a potential recursive dependency. Needless to say, these systems should be isolated.

At 9:37AM PST, an authorized S3 team member using an established playbook executed a command which was intended to remove a small number of servers for one of the S3 subsystems that is used by the S3 billing process.

MyPOV – Related to the above, it is now obvious that the billing system also uses S3. It is good to drink your own champagne, but when it goes bad because of a mistake by the champagne maker, not only the customers but also the champagne maker get food poisoning, which is never what you want to happen. But humans can make mistakes.

Unfortunately, one of the inputs to the command was entered incorrectly and a larger set of servers was removed than intended. The servers that were inadvertently removed supported two other S3 subsystems. One of these subsystems, the index subsystem, manages the metadata and location information of all S3 objects in the region. This subsystem is necessary to serve all GET, LIST, PUT, and DELETE requests. The second subsystem, the placement subsystem, manages allocation of new storage and requires the index subsystem to be functioning properly to correctly operate. The placement subsystem is used during PUT requests to allocate storage for new objects. Removing a significant portion of the capacity caused each of these systems to require a full restart. While these subsystems were being restarted, S3 was unable to service requests.

MyPOV – Kudos to AWS for transparency. But anyone who has attended its re:Invent user conference knows how much the vendor prides itself on not letting humans make mistakes and on putting key, vital processes into code. Clearly, that approach and philosophy were not followed here. It would be good to chat with AWS CTO Werner Vogels about this one… I am sure plenty of people in Seattle are pondering how to ensure that, in the future, typos and other manual errors cannot take systems down. Of course, we still need a kill switch for the humans…

Other AWS services in the US-EAST-1 Region that rely on S3 for storage, including the S3 console, Amazon Elastic Compute Cloud (EC2) new instance launches, Amazon Elastic Block Store (EBS) volumes (when data was needed from a S3 snapshot), and AWS Lambda were also impacted while the S3 APIs were unavailable.

MyPOV – AWS recommends building critical processes to span multiple regions. Its own websites, amazon.com and subsidiary zappos.com, did not go down and were probably architected correctly. The question is (and sorry if I have not read the fine print): could an AWS client still use US-EAST-1 services like EC2, EBS, AWS Lambda etc. if pointed to other S3 stores, or does an S3 failure take the whole region out? This is a deeply critical issue for any IaaS tech stack in an IaaS data center. So, did customers have a chance here? A question to follow up on with AWS. Not Rated.

S3 subsystems are designed to support the removal or failure of significant capacity with little or no customer impact. We build our systems with the assumption that things will occasionally fail, and we rely on the ability to remove and replace capacity as one of our core operational processes. While this is an operation that we have relied on to maintain our systems since the launch of S3, we have not completely restarted the index subsystem or the placement subsystem in our larger regions for many years. S3 has experienced massive growth over the last several years and the process of restarting these services and running the necessary safety checks to validate the integrity of the metadata took longer than expected. The index subsystem was the first of the two affected subsystems that needed to be restarted. By 12:26PM PST, the index subsystem had activated enough capacity to begin servicing S3 GET, LIST, and DELETE requests. By 1:18PM PST, the index subsystem was fully recovered and GET, LIST, and DELETE APIs were functioning normally. The S3 PUT API also required the placement subsystem. The placement subsystem began recovery when the index subsystem was functional and finished recovery at 1:54PM PST. At this point, S3 was operating normally. Other AWS services that were impacted by this event began recovering. Some of these services had accumulated a backlog of work during the S3 disruption and required additional time to fully recover.

MyPOV – AWS describes well that things break all the time, and systems can even go down. But IaaS providers need to be certain they can come back up, and part of coming back is knowing how long it will take. S3 has been very popular, which makes it harder to take it down and test (or simulate) how long it needs to come back, but that is certainly something AWS could and should have done and known. When you run IT and cannot say with reasonable certainty when a system that is down will come back up, you are in a bad spot.

We are making several changes as a result of this operational event. While removal of capacity is a key operational practice, in this instance, the tool used allowed too much capacity to be removed too quickly. We have modified this tool to remove capacity more slowly and added safeguards to prevent capacity from being removed when it will take any subsystem below its minimum required capacity level. This will prevent an incorrect input from triggering a similar event in the future.

MyPOV – This section reads as if there was a software tool in place, but it malfunctioned. That, of course, is not good. Granted, it is hard to simulate and test systems of this scale, but that is not a good enough answer.
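To make the safeguards AWS describes more concrete, here is a minimal Python sketch of a capacity-removal guard. It is not AWS’s tool: the `Subsystem` type, the `plan_removal` function, the `min_required` floor and the `max_batch` size are all hypothetical. It shows the two changes named in the post mortem: refusing any request that would take a subsystem below its minimum required capacity, and removing capacity in small batches rather than all at once.

```python
"""Hypothetical capacity-removal guard (illustrative; not AWS's actual tool)."""
from dataclasses import dataclass
from typing import List


@dataclass
class Subsystem:
    name: str
    active_servers: int
    min_required: int  # assumed floor the subsystem must never drop below


class RemovalRefused(Exception):
    """Raised when a removal request would violate a safeguard."""


def plan_removal(subsystem: Subsystem, requested: int, max_batch: int) -> List[int]:
    """Return the batch sizes for removing `requested` servers.

    Refuses outright if the removal would leave the subsystem below its
    minimum capacity; otherwise splits the work into batches of at most
    `max_batch` servers so capacity is removed slowly.
    """
    if requested <= 0 or max_batch <= 0:
        raise RemovalRefused("requested and max_batch must be positive")
    remaining = subsystem.active_servers - requested
    if remaining < subsystem.min_required:
        raise RemovalRefused(
            f"{subsystem.name}: removing {requested} would leave {remaining}, "
            f"below the minimum of {subsystem.min_required}"
        )
    batches = []
    left = requested
    while left > 0:
        step = min(max_batch, left)
        batches.append(step)
        left -= step
    return batches


if __name__ == "__main__":
    index = Subsystem("index", active_servers=1000, min_required=800)
    print(plan_removal(index, requested=25, max_batch=10))  # [10, 10, 5]
    try:
        plan_removal(index, requested=500, max_batch=10)  # e.g. a mistyped input
    except RemovalRefused as err:
        print("refused:", err)
```

With these assumed numbers, a mistyped request for 500 servers is rejected up front instead of being executed, which is the class of error the post mortem describes.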

We are also auditing our other operational tools to ensure we have similar safety checks. We will also make changes to improve the recovery time of key S3 subsystems. We employ multiple techniques to allow our services to recover from any failure quickly. One of the most important involves breaking services into small partitions which we call cells. By factoring services into cells, engineering teams can assess and thoroughly test recovery processes of even the largest service or subsystem. As S3 has scaled, the team has done considerable work to refactor parts of the service into smaller cells to reduce blast radius and improve recovery. During this event, the recovery time of the index subsystem still took longer than we expected. The S3 team had planned further partitioning of the index subsystem later this year. We are reprioritizing that work to begin immediately.

MyPOV – Kudos to AWS for transparency, for explaining that it has a solution and for committing to get better going forward. This is a textbook response that all vendors with an outage should share, though not all do.
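The cell idea can be illustrated with a small Python sketch, assuming (hypothetically) that object keys are spread across cells by a stable hash. This is not how S3 is actually partitioned, which the post mortem does not detail; `cell_for_key` and `blast_radius` are illustrative names. The point it shows: the more cells, the smaller the share of keys affected when one cell fails or has to be restarted.

```python
"""Hypothetical cell-based partitioning (illustrative; not S3's actual design)."""
import hashlib
from typing import List


def cell_for_key(key: str, num_cells: int) -> int:
    """Map an object key to a cell using a stable hash.

    SHA-256 gives the same mapping in every process, unlike Python's
    built-in hash(), which is randomized per process for strings.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_cells


def blast_radius(keys: List[str], num_cells: int, failed_cell: int) -> float:
    """Fraction of keys that become unavailable if one cell fails."""
    if not keys:
        return 0.0
    affected = sum(1 for k in keys if cell_for_key(k, num_cells) == failed_cell)
    return affected / len(keys)


if __name__ == "__main__":
    keys = [f"bucket/object-{i}" for i in range(10_000)]
    for cells in (1, 4, 16):
        print(cells, "cells ->", round(blast_radius(keys, cells, failed_cell=0), 3))
```

Presumably, a smaller cell also means less metadata to validate on restart, which fits AWS’s argument that further partitioning of the index subsystem will improve recovery time.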

From the beginning of this event until 11:37AM PST, we were unable to update the individual services’ status on the AWS Service Health Dashboard (SHD) because of a dependency the SHD administration console has on Amazon S3. Instead, we used the AWS Twitter feed (@AWSCloud) and SHD banner text to communicate status until we were able to update the individual services’ status on the SHD. We understand that the SHD provides important visibility to our customers during operational events and we have changed the SHD administration console to run across multiple AWS regions.

MyPOV – This is probably the worst finding: an overly optimistic implementation of the key dashboard for overall AWS status. It should never have a single point of failure, yet we see this happen over and over in outages. Vendors need to learn not to rely on their own services to communicate with clients in an outage, as those services may not be able to respond. It is a cardinal mistake (see e.g. here for another outage with the same issue), yet vendors keep making it.

Finally, we want to apologize for the impact this event caused for our customers. While we are proud of our long track record of availability with Amazon S3, we know how critical this service is to our customers, their applications and end users, and their businesses. We will do everything we can to learn from this event and use it to improve our availability even further.

MyPOV – Kudos for acknowledging and owning the issue. No blame game and no scapegoating (which is often seen, too, with the most common scapegoat being the network or network provider).

A pretty severe event

Lessons for IaaS Customers

Here are the key lessons for customers from the AWS S3 outage:

Have you built for resilience? Sure, it costs, but all major IaaS providers offer strategies to avoid single location / data center failures. Far too many prominent internet properties did not choose to use them, and if ‘born on the web’ properties miss this, it is key to check that regular enterprises do not. Uptime has a price, so make it a rational decision; now is a good time to get budget and investment approved, where warranted and needed.
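One common way to build for resilience at the application level is to read from a replica in a second region when the primary region fails. The Python sketch below assumes the data is already replicated across regions; `read_with_failover` and the `fetch` callable are hypothetical placeholders for whatever storage client you use, not an AWS API.

```python
"""Hypothetical multi-region read path with failover (illustrative only)."""
from typing import Callable, Dict, Sequence, Tuple


class AllRegionsFailed(Exception):
    """Raised when no configured region could serve the request."""


def read_with_failover(
    key: str,
    regions: Sequence[str],
    fetch: Callable[[str, str], bytes],
) -> Tuple[str, bytes]:
    """Try each region in order; return (region, data) from the first success.

    `fetch(region, key)` stands in for a real storage client and is
    assumed to raise an exception when a region is unavailable.
    """
    errors: Dict[str, Exception] = {}
    for region in regions:
        try:
            return region, fetch(region, key)
        except Exception as exc:  # treat any failure as "try the next region"
            errors[region] = exc
    raise AllRegionsFailed(errors)


if __name__ == "__main__":
    def fake_fetch(region: str, key: str) -> bytes:
        # Simulates a US-EAST-1 outage while a replica in US-WEST-2 stays up.
        if region == "us-east-1":
            raise ConnectionError("service unavailable")
        return f"{key} from {region}".encode()

    print(read_with_failover("report.csv", ["us-east-1", "us-west-2"], fake_fetch))
```

Replication, extra storage and cross-region traffic are where the cost of this kind of resilience comes from, which is why it deserves a rational budget decision.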

Ask your IaaS vendor a few questions: Enterprises should not be shy about asking IaaS providers the following:

- Do you run your systems by hand or with software?

- Could the same issue that happened with AWS S3 in US-EAST-1 happen to you?

- How do you test your operational software?

- When did you last take your most popular services down?

- What is the expected up time of your most popular services?

- When did you last run that test of expected up time, and how much has system usage increased since then?

- How can we code for resilience – and what does it cost?

- What kind of remuneration / payment / cost relief can be expected for downtime?

- What single points of failure should we be aware of?

- How are your operation consoles built?

- How do you communicate in a downtime situation with customers?

- How often and when do you refresh your older datacenters, servers?

- How often have you reviewed and improved your operational procedures in the last 12 months? Give us a few examples of how you have increased resilience.

And some key internal questions that customers of IaaS vendors have to ask themselves:

- What are your customer / employee communication tools?

- When your IaaS vendor goes down, so may your customer and employee facing apps. How do you communicate then?

- Make sure to learn from AWS’s mistake: do not rely on the same point of failure / architecture as the production systems, as it will not be available. Simple, but always good to check, and even better to monitor.

MyPOV

Outages are always unfortunate. The key thing is to learn from them, and knowing AWS, it will be ruthless in addressing the issues (and hopefully update customers and analysts on its progress). Kudos for a fast post mortem, for taking responsibility and for sharing first strategies to avoid another occurrence.

On the concern side, AWS needs to ask itself how it recycles and reviews architecture and servers. US-EAST is a behemoth that nonetheless remains popular, but it may need more rejuvenation than AWS expects or has planned. In the race to dominate cloud locations, vendors might stretch aging infrastructure beyond the breaking point. Of course, it is easy to armchair everything afterwards, but this remains an area to watch.

Overall hopefully plenty of lessons learnt all around, for AWS, other IaaS providers and customers.

Google Cloud Invests In Data Services and ML/AI, Scales Business

Google Cloud is adding must-have enterprise features and scaling the business to meet data platform, machine learning and AI demand. Here’s a progress report.

Google’s reputation for big data, machine learning and artificial intelligence innovation is richly deserved. The challenge for Google Cloud – which exposes that innovation to enterprises through the Google Cloud Platform (GCP) – isn’t scaling the technology so much as scaling the business.

Last week’s Google Cloud Next '17 event in San Francisco was a case in point. The event was sold out, with more than 10,000 attendees packing the Moscone Center’s West hall. Many conference sessions were overbooked and had long standby lines. You got the feeling Google could have easily filled another massive conference hall.

So what is Google Cloud doing to meet fast-growing demand? For starters, parent company Alphabet Inc. is investing big money in the business — more than $30 billion, according to Chairman Eric Schmidt, a keynoter at the event. But Google Cloud is not only hiring on all fronts and expanding the ecosystem, it’s also doubling down on tech investments in data centers and network improvements; new big data, machine learning (ML) and artificial intelligence (AI) services; and enterprise-oriented migration, administrative, governance and security features. Here’s a closer look at the business- and data-tech-oriented announcements.

You may get started on a public cloud through clicks and credit card payments, but it takes lots of people-centric interaction and hand holding to move an entire business into the cloud at scale. That’s why Google Cloud saw the largest headcount increase of any Alphabet business in 2016, and it expects to add at least 1,000 more salespeople in the first six months of this year. What’s more, Google Cloud’s professional services team has seen 5X growth over the last year, and partnerships with systems integrators and resellers are quickly multiplying.

At Google Cloud Next, Diane Greene, senior VP of Google Cloud, announced a significant new partnership with SAP, adding to a growing list of enterprise software partners. SAP executive board member Bernd Leukert joined Greene on stage to highlight certification of SAP HANA as well as plans for joint work on enterprise-grade data governance and compliance in the cloud. The more such partnerships Google can forge with blue-chip enterprise software vendors, the better.

Of course, the big draw for many companies to Google is its expertise in ML and AI. This point was validated onstage at Google Next by C-level customer guests from Colgate-Palmolive, Disney, Home Depot, HSBC and Verizon. And bolstering the company’s case, Google Cloud Chief Scientist Fei-Fei Li announced the acquisition of Kaggle, a company that has been a magnet for more than 850,000 data scientists through its famed Kaggle competitions.

Bringing Kaggle inside the Google Cloud ecosystem “combines the world’s largest data science community with the world’s most powerful machine learning cloud,” wrote Kaggle CEO Anthony Goldbloom. It also gives Kaggle and all those data scientists access to (and, it’s hoped, familiarity and comfort using) Google Cloud infrastructure, scalable training and deployment services and the ability to store and query large data sets.

MyPOV on business-side investments: Google Cloud clearly has big momentum. But whatever it’s investing in people and customer support, the company could probably double the effort and still not match the scale of its chief rival, Amazon Web Services (AWS). Amazon does not break out AWS headcount from its far more labor-intensive retail business, so it’s not an apples-to-apples comparison, but Amazon is many times larger than Google, with nearly 350,000 employees and plans to hire more than 100,000 additional full-time employees over the next 18 months.

Suffice it to say that Google Cloud needs to stay aggressive on workforce expansion. My sense is that it’s growing as fast as it can without creating the sort of internal chaos that could negatively impact customer experience. Partnerships with systems integrators and high-scale vendors like SAP and acquisitions such as the Kaggle deal are smart ways to grow the ecosystem without putting all the pressure on internal development.

Data-Platform Investments

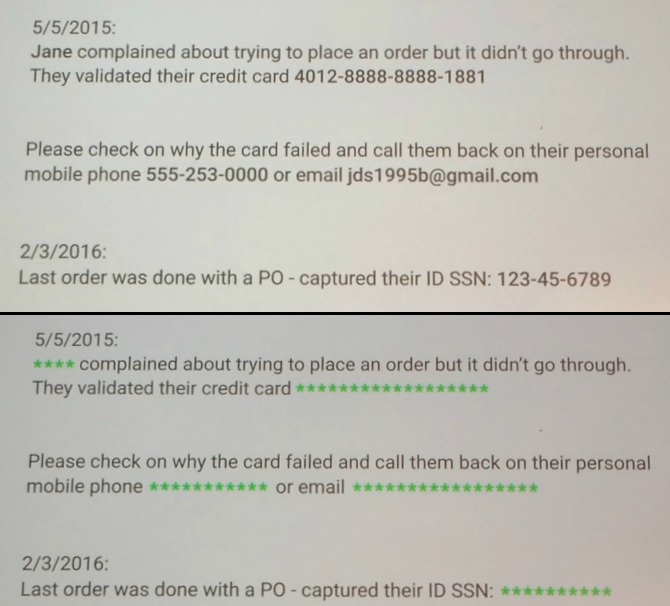

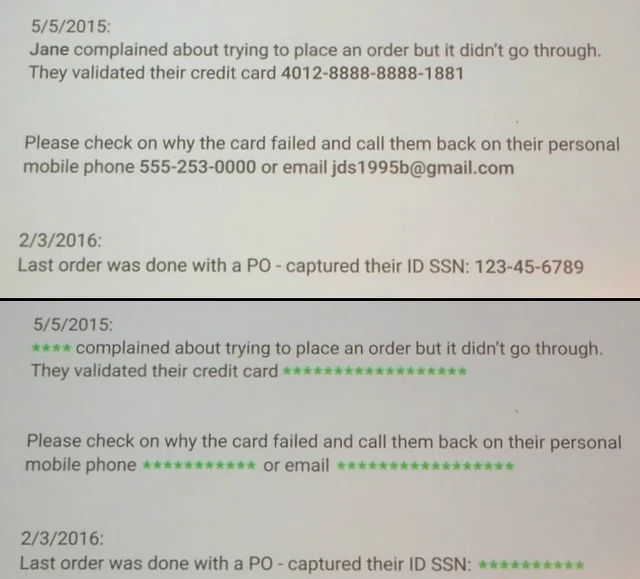

Google is investing on many technology fronts, but my focus is on data platforms, ML and AI, so I’m not going to get into the details of the three new data center regions, app developer news or the G Suite announcements (see Alan Lepofsky’s blog). There were also many infrastructure and security announcements, but the one most relevant to my data-to-decisions research is the new Data Loss Prevention (DLP) API. Now in beta, the DLP API promises to automatically discover, classify and redact sensitive information such as Social Security numbers, credit card numbers, phone numbers and more.

Together with security key and encryption developments, Google is addressing cloud security concerns that persist no matter how many times actual breaches show conventional corporate data centers to be far more vulnerable than clouds.

Google Cloud’s Data Loss Prevention API, now in beta, is designed to automatically discover, classify and redact sensitive data, as shown above.
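To illustrate what ‘discover, classify and redact’ means in practice, here is a toy Python sketch. It does not use or mimic the actual Cloud DLP API; the regular expressions, labels such as `US_SSN` and the `redact` function are simplified assumptions, and a managed service would use far more robust detection. The Luhn checksum is a standard way to filter out digit runs that cannot be valid card numbers.

```python
"""Toy discover/classify/redact pipeline (illustrative; not the Cloud DLP API)."""
import re
from typing import List, Tuple

# Hypothetical, deliberately simple detectors for three info types.
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13-16 digits, optional separators
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")      # US Social Security number format
PHONE = re.compile(r"\(?\b\d{3}\)?[ .-]\d{3}[ .-]\d{4}\b")  # North American phone


def luhn_ok(number: str) -> bool:
    """Return True if the digits in `number` pass the Luhn checksum."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(text: str) -> Tuple[str, List[str]]:
    """Replace detected values with [INFO_TYPE] tags; return text and labels found."""
    found: List[str] = []

    def card_sub(match):
        # Only redact card-like digit runs that pass the Luhn check.
        if luhn_ok(match.group()):
            found.append("CREDIT_CARD_NUMBER")
            return "[CREDIT_CARD_NUMBER]"
        return match.group()

    def tagger(label: str):
        def sub(match):
            found.append(label)
            return f"[{label}]"
        return sub

    # Card detection runs first; SSN and phone detection then run on the result.
    text = CARD.sub(card_sub, text)
    text = SSN.sub(tagger("US_SSN"), text)
    text = PHONE.sub(tagger("PHONE_NUMBER"), text)
    return text, found


if __name__ == "__main__":
    sample = "Card 4111 1111 1111 1111, SSN 123-45-6789, call (555) 123-4567."
    print(redact(sample))
```

For example, `redact("SSN 123-45-6789")` returns `("SSN [US_SSN]", ["US_SSN"])`.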

As for those data platform, ML and AI announcements, here’s a rundown of the highlights:

Cloud ML Engine goes GA. This managed machine learning service based on Google’s open sourced TensorFlow ML framework is now generally available. The service features automatic hyperparameter tuning and tools for job management and resource utilization. In beta are GPU-based training and online prediction, which promise state-of-the-art performance at scale.

Cloud Video Intelligence API. Now in private beta, this service is designed to search and automatically tag large libraries of videos. The service spots entities within videos and notes the timecode of their appearance, making sense of videos and large collections of videos in automated fashion. Developers need no knowledge of machine learning or deep learning, says Google. They simply invoke the API.

Cloud Dataprep. This service, now in beta, promises to bring self-service data preparation to structured and unstructured information. The no-code, point-and-click interface provides a guided approach to joining data and automated data-transformation suggestions.

Google BigQuery Data Transfer Service. Also in beta, this managed data-import service will launch with support for moving data from DoubleClick, Google AdWords and YouTube, but Google Cloud execs say they’re keen on providing managed, automated migration options from other high-volume data sources. Stay tuned.

Cloud SQL for PostgreSQL. This managed service, now in beta, supports the popular, enterprise-standard, open-source relational database, opening the door for a world of compatible enterprise applications.

MyPOV on the tech announcements: Google Next announcements were heavy on betas and light on general releases, although the company less than a month ago announced the GA of its Cloud Spanner service. Spanner is Google’s globally distributed relational database service. It’s unique (and distinguished from global NoSQL database services) in offering consistency for demanding financial services, advertising, retail, supply chain and other applications demanding synchronous replication.

Aside from its big data, ML and AI depth, another differentiator for Google Cloud is the open-source nature of Google Cloud Platform services. Cloud Dataproc, for example, is based on Apache Spark and Apache Hadoop, and the batch-and-stream-data processing capabilities of Cloud Dataflow were open sourced as Apache Beam. This means you could run all these technologies on premises, in other clouds or in hybrid scenarios, diminishing vendor lock-in. It’s a contrast with AWS services that are unique to that vendor, although I don’t recall any customers discussing actual hybrid deployments or moving between cloud and on-premises deployments.

The more practical and real differentiator with Google Cloud Platform, and the one that multiple customers at Google Cloud Next actually talked about, is the managed nature of its services. With BigQuery, for example, you load data and issue queries, and Google automatically makes all the necessary moves to ensure adequate compute and storage capacity. With unmanaged cloud services you have to think about, plan and take care of all the provisioning. And if you get it wrong, you either overpay for capacity or suffer from poor performance. This has been Google Cloud’s ace in the hole, and it’s a card the vendor is now playing up with every customer and at every opportunity.

Related Reading:

Cloud BI and Analytics Options Aren’t Just for Cloud Data

AWS Analytic and AI Services Are No Surprise, But They Will Succeed

Strata + Hadoop World Highlights Long-Term Bets on Cloud

Google Next: Analysis of G Suite News

From March 8-10, Google held its Google Next conference, where it shared news about Google Cloud, G Suite and more. My primary focus for the conference was G Suite (formerly known as Google Apps), Google's personal productivity and team collaboration platform. My colleagues Doug Henschen and Holger Mueller focused on the Google Cloud Platform (GCP) side, and you can see their reviews here and here.

My Quick Take: After several years of working on architecture improvements for Drive and Hangouts, G Suite is doing several things right for its enterprise customers. However, given Google's past reputation for innovation, I'm disappointed that most of the announcements were catch-up to features/applications that other vendors already offer in this space.

Below is a video in which I provide my full review and analysis of the G Suite news. I'm using the Acrossio video player, which allows you to jump back and forth to annotated moments of the video, as well as add your own annotations and comments. So if you're just interested in a specific section, find it in the conversation stream on the right side of the player, click and the video will start playing from there.

There were several announcements which you can read about here, including:

- Team Drives - Shared team folders

- Drive File Stream - files hosted in the cloud appear in Windows File Explorer or Mac Finder as if they were local on your hard drive. (Early Adopter Program for G Suite Enterprise, Business and Education customers)

- Vault for Drive - Audit, compliance, governance. (DLP was announced earlier, in January)

- Acquisition of AppBridge - for migrating content to G Suite

- Hangouts Meet - video / web conferencing

- Hangouts Chat - 1:1 and persistent group chat rooms. Available to G Suite Early Adopter customers.

- General Availability of Jamboard - white-boarding device and applications

- Gmail Add-ons - A new integration platform for Gmail that works across web and mobile devices (in Developer Preview)

Is your organization using G Suite? If so, what do you think of these announcements? If not, what are you using and how do you think it compares to G Suite?

Google Next - Review of GSuite News

Google announced several new features around Drive and Hangouts. What are they? How do they compare to the competition? Listen to find out.

Salesforce Einstein Rolls Out; IBM Watson Awaits

Salesforce this week detailed progress on Einstein artificial intelligence. Less clear was how a new IBM partnership will change Salesforce AI plans. Here’s what's behind the headlines.

What’s the state of Salesforce Einstein, the portfolio of artificial intelligence (AI) capabilities announced last fall? Salesforce generated plenty of media coverage this week with two big announcements. Here’s my take on the realities of a partnership with IBM and what we have yet to understand about the packaging and pricing of Einstein.

Watson Meets Einstein?

The first announcement from Salesforce this week was the unexpected bombshell on a new partnership with IBM that headlines simplified as "Watson meets Einstein." As described by Salesforce CEO Marc Benioff in this CNBC appearance, one key benefit for Salesforce, and perhaps the first and easiest to implement, will be access to IBM cloud-accessible data from the Weather Company and other high-scale sources.

Data is a key ingredient of accurate AI, and in the wake of Microsoft’s acquisition of LinkedIn last year (a real competitive threat), Salesforce can only benefit from significant data partnerships. Benioff cited a scenario in which weather data could be used by insurers to alert policy holders in the path of a predicted hail storm to park their cars indoors.

Benioff also noted that IBM offers cloud compute capacity and systems integration muscle. Here’s where joint IBM-Salesforce customers are most likely to benefit. IBM amped up its focus on CRM generally and Salesforce specifically last year by acquiring long-time Salesforce partner and systems integrator Bluewolf. And in yet another benefit to Salesforce, IBM committed to using the Salesforce Service Cloud internally. Given the scale of IBM’s 380,000-plus-employee workforce, that’s a significant customer win.

As for the potential combination of Watson and Einstein AI capabilities, we'll have to wait and see what the deal brings. Aside from application-integration and API-level ties expected as soon as next month (and, I suspect, already underway through Bluewolf), both companies stated that we won't see Watson-meets-Einstein synergies until the second half of this year – at the earliest.

MyPOV on the IBM Partnership: Salesforce has no lack of data science talent and capabilities, so I see IBM’s data assets and systems-integration muscle as the lure of this deal from Salesforce’s perspective. As for IBM, it’s a way to grow the systems integration business while bringing Watson into the conversation with joint customers.

Einstein Generally Available

This week’s second announcement came in the form of a one-hour “Year of Einstein” videocast led by Salesforce execs including CEO Benioff and co-founder Parker Harris. Digging into the details, Chief Product Officer Alex Dayon talked about the 10 new Einstein AI features released in last month’s “Spring 2017” release. Salesforce now has more than 20 Einstein features generally available (including 16 listed in the slide below).

This slide from the Salesforce "Year of Einstein" video streamed this week lists 17 of the 20 AI features now generally available from the CRM vendor.

All 45 of the Einstein features planned for release in 2017 are detailed in my latest research, “Inside Salesforce Einstein Artificial Intelligence.” This 19-page report explores the foundational requirements of AI success, which include high-scale data, massive compute capacity, advanced data-science capabilities and plenty of time to work with all of the above. The report explores Salesforce capabilities on all these fronts, including the two years it spent developing the machine-learning-based predictive data pipeline that will power the vast majority of Einstein capabilities. Finally, it looks at Einstein compared to the cognitive and AI capabilities currently available or, in some cases, in development, within IBM, Microsoft, Oracle and various public cloud service providers.

In Tuesday’s video, Salesforce execs stressed that Einstein is “built into the platform” and now “available to all Salesforce customers.” But packaging and pricing vary by feature and cloud. On the most popular clouds -- Sales, Service and Marketing -- Einstein entails extra cost, and in some cases it’s available only to certain license tiers.

For example, on the Sales Cloud, Einstein Opportunity Insights, Einstein Account Insights and Einstein Activity Capture are bundled together for $50 per user, per month, and they’re only available to those with Sales Cloud Enterprise or Unlimited licenses.

On the Service Cloud, Einstein Supervisor is a bundle of the pre-existing Omni-channel Supervisor and Service Wave Analytics apps with the Analytics Cloud Einstein Smart Data Discovery feature. In this case, Einstein is reserved for customers using Enterprise and Unlimited licensing levels and optional apps. Omni-channel Supervisor, for example, is included as part of Service Cloud Enterprise edition and higher. The Service Wave Analytics app is optional and starts at $75 per user per month. Analytics Cloud Einstein Smart Data Discovery, which is optional, is priced based on data volume and user counts. So Einstein Supervisor is “included” with other offerings, but they all happen to be available only to those with Enterprise-grade-plus licenses and an optional add-on application.

Marketing Cloud Einstein Journey Insights and Einstein Segmentation are available with the combination of any Marketing Cloud license and a Krux license. Pricing is based on Krux data collection volumes and storage and data processing use cases.

On some of the newer clouds that Salesforce is hoping to grow, Einstein features are included. Commerce Cloud Einstein, for example, is built into the Commerce Cloud Digital service. And in the Community Cloud, Einstein Recommendations and Einstein Trending Posts are included at no cost with any license. Other Einstein pricing is detailed in this press release.

MyPOV on the Einstein Rollout: It should be no surprise that Salesforce’s most sophisticated, in-demand and potentially labor-, time- and cost-saving capabilities are being tied to higher license levels or optional features. A key goal with Salesforce Einstein is to help people focus on what matters by getting redundant, time-consuming work out of the way or by presenting the lowest-hanging fruit, such as ripe sales leads, based on smart, predictive analysis. As I conclude in my report, the big challenge for Salesforce customers will be calculating the value of Einstein capabilities before turning those features on for lots of users.

Related Reading:

Inside Salesforce Einstein Artificial Intelligence

Oracle Preps AI Apps, Next Steps for Data Cloud

Salesforce Takes Apps-First Approach with Einstein AI

Event Report: #GoogleNext17 On Path To Enterprise Ready

Google Cloud Takes Key Steps To Enterprise Class

Over 10,000 customers, prospects, partners, Google developers, and employees gathered in San Francisco’s Moscone West Conference Center for Google’s flagship enterprise event. Of note, Alphabet Executive Chairman Eric Schmidt bookended the event by highlighting the $30 billion capital infrastructure investment in the overall Google Cloud Platform. The conference brought together Google’s ecosystem and highlighted Google’s commitment to invest in the enterprise market. A few proof points of enterprise readiness were on hand:

VIDEO: The 60 Second LowDown On #GoogleNext17

- Google showcases enterprise cred on stage. Customers such as Disney, eBay, Home Depot, HSBC, and Verizon were on hand to share how they made the move to GCP. Verizon shared plans to move 115,000 employees to G Suite by year’s end. HSBC’s CIO Darryl West noted how their data scientists found the tools easy to use. Disney’s CTO and SVP Michael White highlighted Google’s machine learning capabilities on their road to AI. Home Depot’s SVP of Technology Paul Gaffney talked about how Google handled “the smoothest Black Friday ever”. Colgate-Palmolive shared how they migrated 28,000 employees onto G Suite.

Point of View (POV): While customers first started using the Google Cloud Platform for G Suite, big data, analytics, machine learning, translation, and BigQuery, that success has led to land-and-expand strategies. Constellation sees customers and prospects moving legacy workloads in a “lift and shift” fashion. Organizations fearing Amazon’s cannibalization of their market and those unsure of Microsoft’s ability to deliver on AI have begun pilots on GCP, and have sought a choice of partners as Google beefed up its enterprise capabilities. At the leadership summit, many customers and prospects expressed significant interest in setting up POCs.

- SAP expands HANA distribution with Google. SAP’s Bernd Leukert appeared on stage with Diane Greene to announce the official certification that Google Cloud Platform (GCP) can run the SAP HANA database and other mission-critical SAP applications. Certification is critical for customers, as SAP and Google will ensure that specific performance enhancements for SAP apps and HANA are maintained. SAP intends to have HANA Cloud Platform working on Google Cloud in the next two months.

(POV): The partnership shows how Google seeks to attract large enterprise workloads and enterprise customers onto the platform. The deal also addresses key privacy concerns, as SAP remains the custodian of the data in the cloud, allowing customers to meet data governance and compliance requirements. Longer term, SAP must decide whether to operate its own data centers or rely on Google as its partner. SAP gains a key ally in delivering much-needed compute power and distribution of SAP’s HANA technologies. SAP will also resell Google’s G Suite to its 345,000 customers.

- Google improves support offerings. New tiered engineering support plans, a Rackspace partnership, and a Pivotal partnership bolster support. Three new engineering support plans were announced for Google Cloud based on response times. Level 1, with a four-to-eight-hour response, was set at $100 per user per month. Level 2, with a one-hour response, was set at $250 per user per month, and Level 3, with a 15-minute response at any hour of the day, was set at $1,500 per user per month.

(POV): Constellation sees the new support plans as key to driving value for clients based on their need for and usage of engineering services. The Rackspace relationship gives customers better managed support options. The Pivotal partnership gives customers running mixed clouds better support through Customer Reliability Engineering.

The Bottom Line: Google Taking Steps In The Right Direction For Customers

Just two years ago, analysis of Google’s enterprise efforts showed very little enterprise credibility. The sales team barely understood enterprise, the product teams were rife with transient talent, and customers had no input into the product direction. With the recent house cleaning in the management and product teams, a commitment to enterprise by Eric Schmidt, and a host of new enterprise-class alliances and partnerships, Google is rapidly building a worthwhile option for clients across the five entry points to cloud maturity (see Figure 1):

- Migrate workloads. From test and dev, to key production workloads, organizations can start reducing data center costs and driving down the cost of compute power.

- Migrate enterprise. While certain systems will remain on-premises or in a mix of compute power environments, over time, organizations will shift their enterprise systems onto the cloud.

- Access SaaS Apps. While workloads and enterprises are making shifts, new capabilities in apps will come from a surround strategy.

- Manage hybrid cloud. Organizations will operate multiple clouds on multiple deployment options and this hybrid environment will require governance and management.

- Innovate with the cloud. While the cloud will provide many core capabilities to clients, innovation will come from application development on platforms in the cloud.

As customers use the cost savings in the cloud to fund innovation, expect Google to emerge as an option among the 5 Amigos (Amazon, Microsoft, IBM, Google, Oracle) for full stack cloud capabilities (i.e. IaaS, PaaS, DaaS, SaaS). Customers are drawn to the machine learning, translation, maps, and analytics opportunities.

However, Google will have to make more acquisitions such as Kaggle and Apigee in order to round out key enterprise requirements. For this reason, customers and prospects should watch carefully what partnerships and alliances emerge in the next six to twelve months. Google is taking steps in the right direction but must move quickly in order to keep its momentum as a worthwhile enterprise-class option.

Your POV.

Looking at cloud options? What do you think of Google Cloud Platform? Will you rely on Google for your digital transformation efforts?

Add your comments to the blog or reach me via email: R (at) ConstellationR (dot) com or R (at) SoftwareInsider (dot) org.

Please let us know if you need help with your Digital Business transformation efforts. Here’s how we can assist:

- Developing your digital business strategy

- Connecting with other pioneers

- Sharing best practices

- Vendor selection

- Implementation partner selection

- Providing contract negotiations and software licensing support

- Demystifying software licensing

Resources

- Monday’s Musings: Dynamic Leadership – A Responsive And Responsible Approach #Davos #WEF17

- Monday’s Musings: Why A Bi-Modal Approach to Digital Transformation Is Just Stupid

- Monday’s Musings: Secrets Behind Building Any AI Driven Smart Service

- Trends: Five Data Center Trends For 2017

- News Analysis: America In An @RealDonaldTrump Era – Everything International Clients Need To Know PESTEL Part 1.

- Research Summary: Constellation’s AstroChart For Business Trends, Q4 2016

- Research Summary: Constellation’s AstroChart For Tech Trends

- News Analysis: @Adobe @Microsoft Partner Up For Marketing Cloud, Azure and #AI #MSIgnite

- Monday’s Musings: Understand The Spectrum Of Seven Artificial Intelligence Outcomes

- Tuesday’s Tip: Seven Factors For Precision Decisions In Artificial Intelligence

- Monday’s Musings: Data – The Foundation Of Real-Time Digital Business

- Monday’s Musings: Who Gets To Be A Chief Digital Officer?

- Monday’s Musings: The Seven Rules For Digital Business And Digital Transformation

- Tuesday’s Tip: Five Steps To Starting Your Digital Transformation Initiative

- Monday’s Musings: What Organizations Want From Mobile

- Research Summary: Economic Trends Exacerbate Digital Business Disruption And Digital Transformation (The Futurist Framework Part 3)

- Research Summary: Five Societal Shifts Showcase The Digital Divide Ahead (The Futurist Framework Part 2)

- Research Summary: Sneak Peaks From Constellation’s Futurist Framework And 2014 Outlook On Digital Disruption

- Research Report: Digital ARTISANs – The Seven Building Blocks Behind Building A Digital Business DNA

- Research Summary: Next Generation CIOs Aspire To Focus More On Innovation And The Chief Digital Officer Role

- Trends: [VIDEO] The Digital Business Disruption Ahead Preview – NASSCOM India Leadership Forum (#NASSCOM_ILF)

- News Analysis: New #IBMWatson Business Group Heralds The Commercialization Of Cognitive Computing. Ready For Augmented Humanity?

- Harvard Business Review: What a Big Data Business Model Looks Like

- Monday’s Musings: How The Five Consumer Tech Macro Pillars Influence Enterprise Software Innovation

- Tuesday’s Tip: Understand The Five Generation Of Digital Workers And Customers

- Monday’s Musings: The Chief Digital Officer In The Age Of Digital Business

- Slide Share: The CMO vs CIO – Pathways To Collaboration

- Event Report: CRM Evolution 2013 – Seven Trends In The Return To Digital Business And Customer Centricity

- News Analysis: Sitecore Acquires Commerce Server In Quest Towards Customer Experience Management

- News Analysis: Salesforce 1 Signals Support For Digital Business at #DF13

- Research Summary And Speaker Notes: The Identity Manifesto – Why Identity Is At The Heart of Digital Business

Reprints

Reprints can be purchased through Constellation Research, Inc. To request official reprints in PDF format, please contact Sales.

Disclosure

Although we work closely with many mega software vendors, we want you to trust us. For the full disclosure policy, stay tuned for the full client list on the Constellation Research website.

* Not responsible for any factual errors or omissions. However, happy to correct any errors upon email receipt.

Copyright © 2001-2017 R Wang and Insider Associates, LLC All rights reserved.

Contact the Sales team to purchase this report on an à la carte basis or join the Constellation Customer Experience.

Event Report - Google Next 2017 - Google makes progress - but is it fast enough?

So take a look at my musings on the event here: (if the video doesn’t show up, check here)

No time to watch – here is the 1-2 slide condensation (if the slide doesn’t show up, check here):

Want to read on?

(Photo: Schmidt shares his three-step program for moving to the cloud)

Google is serious about Enterprise – It was already clear from the hiring of Greene and last year’s event. But it’s one thing to say it and another to deliver on it. Greene shared that the division is the fastest growing in headcount, with over 1,000 new hires, and Schmidt shared today that Google has invested more than US$30 billion in its cloud infrastructure over time. As Greene said earlier, the ‘business’ things are easy (the basic ‘blocking and tackling’), but they still need to be executed. So for the first time Google held a partner event alongside the conference and showed that it has attracted interest from all the large SIs. That is a lot of progress on partner numbers, but still a totally different ball game compared with the other two key players. It was also good to have more enterprise customers on stage, talking with Greene about carefully selected use cases: Disney for an ‘All in’, HSBC for a data warehouse decommission and move to the cloud, Home Depot as a website / commerce reference, and eBay as an enterprise showcase for an in-house built next-gen app, a Google Home powered eBay application.

(Photo: Greene talks Google Cloud)

Machine Learning remains the carrot – Not surprisingly, Machine Learning remains the core attraction for Google Cloud. Disney’s CTO clearly labelled it as the reason why Disney is building apps on Google Cloud. Newly minted head of AI / Machine Learning Fei Fei Li shared the vision and unveiled the new Video Intelligence API, alongside the Cloud Speech, Vision, Translate and Natural Language APIs. No surprise given YouTube is part of Alphabet, but very powerful for indexing and finding things in video, e.g. all the beach scenes in your vacation videos. Can’t wait for it to come to Google Photos, or to find out how many of my videos were shot in cars, at airports or somewhere else. But the key announcement, with long-term impact, is Google’s acquisition of Kaggle, the analytics, machine learning and AI community famous for its competitions. It is a key acquisition that could, if well executed, further bolster Google’s Machine Learning / AI leadership: it’s not enough to have great software capabilities, you also need to educate people about them and win their hearts and minds as a community. The strategy is clear here; now Google will have to show that it can create and foster a vendor-independent ecosystem around Machine Learning.

(Photo: Google’s Fei Fei Li announces the Kaggle acquisition)

Google gets SAP HANA – The major news with regard to ‘meeting the enterprise, where the enterprise is’ (the slogan from last year) was that SAP HANA is now certified on Google Cloud. Leukert was there to unveil the news with Greene, certainly a good move that shows SAP’s multi-vendor cloud strategy. You can / will be able to run SAP products on all three major IaaS providers. The SAP HANA Express developer product is available, too, with SAP Cloud Platform coming later. And when Colgate came on stage, it was clear that SAP to G Suite integration is also on the roadmap. More options for SAP customers is a good development, and it may make it easier for SAP to sell more HANA going forward. And Google gets load for Google Cloud. The usual ‘SaaS vendor picks IaaS’ move. The only downside: SAP is working hard to get its customers to HANA, so HANA is not where the bulk of the current load from SAP’s on-premises install base sits. That would be SAP customers running mySAP ERP etc., mostly on Oracle.

(Photo: Google’s Greene and SAP’s Leukert on SAP HANA coming to GCP)

MyPOV

A lot of good progress from Google on the Google products for the enterprise, both Cloud and G Suite. The conference has only started, so more news is coming for both products in the days ahead. The Google Enterprise team is working hard, no doubt, and has made progress in the last 12 months with partners, support and more unique offerings, e.g. the site reliability engineer for customers, a startup program etc. On the concern side, it is not clear if this will be enough. I asked Greene’s leadership team what they will do to catch up from ‘Bronze to Gold’ and they all came up with good and plausible answers (check out my Storify of the analyst event here). But in a year where we may see VMware running on AWS and Oracle making its cloud work for the first time, this may not be enough for Google to attract on-premises load at scale. So it all comes back to how fast Google can attract enterprise load over the next few years, and how fast enterprises move to the cloud.

In the meantime, when enterprises have to build new software, the Google capabilities are very attractive for the next-generation application use cases the 21st century demands. So in the short run, Google may well attract more next-generation application use cases than traditional enterprise IT loads. We will be watching, stay tuned.

Want to learn more? Check out the Storify collection below (if it doesn’t show up, check here). And if you are interested in the Analyst Summit from Tuesday this week, there is a Storify collection here.

- News Analysis – Google enables citizen developers and developers with Google App Maker - read here

- First Take - Google enters enterprise software space with Google Jobs API - read here

- Event Report - Google I/O 2016 - Android N soon, Google assistant sooner and VR / AR later - read here

- First Take - Google I/O 2016 - Day #1 Keynote - Enterprise Takeaways - read here

- Event Preview - Google's Google I/O 2016 - read here

- Event Report – Google Cloud Platform Next – Key Offerings for (some of) the enterprise - read here

- First Take - Google Cloud Platform - Takeaways Day #1 Keynote - read here

- News Analysis - Google launches Cloud Dataproc - read here

- Musings - Google re-organizes - will it be about Alpha or Alphabet Soup? Read here

- Event Report - Google I/O - Google wants developers to first & foremost build more Android apps - read here

- First Take - Google I/O Day #1 Keynote - it is all about Android - read here

- News Analysis - Google does it again (lower prices for Google Cloud Platform), enterprises take notice - read here

- News Analysis - Google I/O Takeaways Value Propositions for the enterprise - read here

- Google gets serious about the cloud and it is different - read here

- A tale of two clouds - Google and HP - read here

- Why Google acquired Talaria - efficiency matters - read here

Find more coverage on the Constellation Research website here and check out my magazine on Flipboard and my YouTube channel here.

20170308 The 60 second LowDown on #GoogleNext17

Insights into Day 1 at #GoogleNext17 from Constellation Research Analyst R "Ray" Wang.
