Digital Business Distributed Business and Technology Models Part Three; Distributed Services Business Management

Vice President and Principal Analyst, Constellation Research

Andy Mulholland is Vice President and Principal Analyst focusing on cloud business models. Formerly the Global Chief Technology Officer for the Capgemini Group from 2001 to 2011, Mulholland successfully led the organization through a period of mass disruption. Mulholland brings this experience to Constellation’s clients seeking to understand how Digital Business models will be built and deployed in conjunction with existing IT systems.

Digital Business takes place within distributed network-based ecosystems using interactions in the form of various ‘Services’ to create Business value and revenues. The ability to dynamically orchestrate a set of Services involving various partners within the Business Ecosystem brings the requirement for a new kind of ‘Middleware’. Distributed Service Management ensures that partners in orchestrated Business Service activities have their individual transactions recorded and settled as necessary.

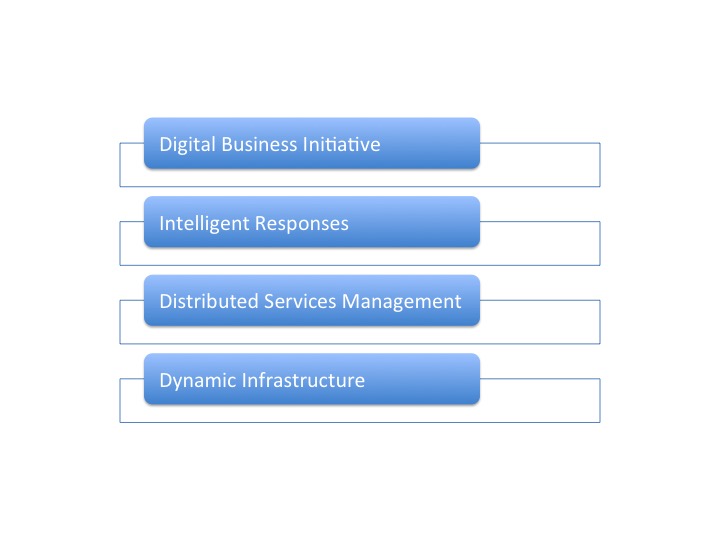

Previous parts of this series started with an examination of the Business activities and actions of an Enterprise, followed by Part 2, which defined the need for a new kind of dynamic infrastructure. Part 3a explored the need for Distributed Services Technology Management; this Part 3b adds Distributed Services Business Management. (It is recommended to read the parts sequentially from Part 1, though a brief overview is included in the summary at the end of this part.)

Blockchain Technology is seen as the most likely solution for providing Distributed Service Management, hence the recent wave of interest in the topic. However, knowledge of what Blockchain Technology is, and how it can be applied to Digital Business, is generally poor. Bitcoin tends to be seen as synonymous with Blockchain, whereas it is a specific implementation using aspects of Blockchain technology.

Considerable effort is being devoted to developing Blockchain Technology into Distributed Ledger implementations that will provide transactional synchronization across a Distributed System, or Business Ecosystem, without requiring any single or centralized point of control. Some significant issues remain to be addressed, leading some experts to express concern about the level of enthusiasm for Blockchain as the basis for Distributed Ledgers, particularly for IoT.

Consider a Smart City example in which many businesses and consumers interact through many Smart Services, creating a complex web of commercial settlements. The intention is that new developments of Distributed Ledger will ensure all financial settlements, and possibly other forms of transactions, are recorded across the community without the need for a central brokering Service operated by the City.
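To make the "web of commercial settlements" concrete, the following is a minimal sketch, assuming a shared list of recorded transactions that every participant can read. The participant names and amounts are hypothetical and purely illustrative. It shows that any participant holding the same records can derive the same net positions without a central broker.

```python
from collections import defaultdict

# Hypothetical Smart City transactions: (payer, payee, amount in cents).
# Names and amounts are illustrative assumptions, not data from any real city.
transactions = [
    ("parking_operator", "city_council", 1200),
    ("resident_a", "parking_operator", 450),
    ("resident_a", "ev_charging", 900),
    ("ev_charging", "energy_supplier", 700),
    ("city_council", "energy_supplier", 300),
]


def net_positions(entries):
    """Return each participant's net balance implied by the shared records."""
    balances = defaultdict(int)
    for payer, payee, amount in entries:
        balances[payer] -= amount
        balances[payee] += amount
    return dict(balances)


positions = net_positions(transactions)
for participant, balance in sorted(positions.items()):
    print(f"{participant}: {balance}")
```

Because the calculation depends only on the shared records, the net positions always sum to zero, which gives each participant a simple consistency check on its own copy.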

There are some serious issues to be addressed in the development of Blockchain-based Distributed Ledgers for widespread use. As well as the usual constraints relating to scale and operational speed, there is a complicated core issue around the issuing and management of the secure ‘keys’ that lie at the heart of the technology.

Owners of any part of the value chain covering Sensors, Services, Resources, Assets and general Infrastructure all require a reliable, trusted, shared and decentralized service to record transactions. Smart Services has been widely used as a term to describe the Business value offered in these systems, but the underlying technology is correctly called Distributed Apps (DApps), in recognition of the distributed, or shared, nature of its operating environment.

Airbnb currently uses the familiar technologies of the Internet + Web, but has a stated ambition to develop its Service as a fully functional DApp to allow global levels of scale and interactions. Other popular Smart Services are likely to migrate into DApps over time to extend their business value propositions and scale.

The role of a Distributed Ledger (which is itself a DApp) is to provide a decentralized trust model that prevents fraud, or assumption of control, by allowing all participants to ‘check’ every transaction. (Note that an expert on the detail of Blockchain technology would wish this term to be expanded into much greater detail.) The term ‘every transaction’ means that all members of the specific business ecosystem record every transaction, even when they are not direct participants in it.
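One common way to let participants ‘check’ every transaction is to chain the entries together with cryptographic hashes, so that altering an earlier entry invalidates everything recorded after it. The sketch below is an illustrative assumption about how such a check could work, not a description of any specific Blockchain product; the function names, device names and record layout are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash used before the first entry


def entry_hash(previous_hash, transaction):
    """Hash a transaction together with the hash of the entry before it."""
    payload = json.dumps({"prev": previous_hash, "tx": transaction}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(ledger, transaction):
    """Add a transaction, linking it to the current last entry."""
    previous_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    ledger.append({
        "tx": transaction,
        "prev": previous_hash,
        "hash": entry_hash(previous_hash, transaction),
    })


def verify(ledger):
    """Recompute every link; return False if any entry has been altered."""
    previous_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev"] != previous_hash:
            return False
        if entry["hash"] != entry_hash(previous_hash, entry["tx"]):
            return False
        previous_hash = entry["hash"]
    return True


ledger = []
append(ledger, {"from": "sensor_17", "to": "parking_service", "amount": 5})
append(ledger, {"from": "parking_service", "to": "city_energy", "amount": 2})
intact = verify(ledger)

# Simulate a participant quietly rewriting an old transaction.
ledger[0]["tx"]["amount"] = 500
tampering_detected = not verify(ledger)
print(intact, tampering_detected)
```

Any participant holding a copy can run the same verification, which is why a participant does not need to be party to a transaction in order to check it.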

A Distributed Ledger would treat each IoT Device, or set of Devices that corresponds to a chargeable business Service element, as a Node with its own copy of the common Distributed Ledger. Whenever a pair, or group, of such IoT Devices/Nodes carries out an interaction ending in a transaction, all registered Nodes are provided with a copy. A distributed consensus algorithm leads to shared agreement on the legitimacy of the latest transactions and the overall state of the network, at which point all Nodes update their copy of the Distributed Ledger to include the new transaction.
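The flow described above can be sketched as follows. This is a deliberately simplified illustration that uses a plain majority vote in place of a real distributed consensus algorithm; the `Node` class, the `propose` function and the device names are hypothetical, and production systems use far more robust protocols.

```python
class Node:
    """A participant holding its own copy of the shared ledger."""

    def __init__(self, name, opening_balances):
        self.name = name
        self.balances = dict(opening_balances)
        self.ledger = []

    def validate(self, tx):
        """Accept a transaction only if the payer can cover the amount."""
        payer, _payee, amount = tx
        return amount > 0 and self.balances.get(payer, 0) >= amount

    def commit(self, tx):
        """Apply an agreed transaction to this node's copy of the ledger."""
        payer, payee, amount = tx
        self.balances[payer] = self.balances.get(payer, 0) - amount
        self.balances[payee] = self.balances.get(payee, 0) + amount
        self.ledger.append(tx)


def propose(nodes, tx):
    """Send a transaction to every node; commit everywhere on a majority vote.

    A simple majority stands in for a real consensus algorithm here.
    """
    votes = sum(1 for node in nodes if node.validate(tx))
    accepted = votes * 2 > len(nodes)
    if accepted:
        for node in nodes:
            node.commit(tx)
    return accepted


opening = {"meter_1": 10, "charger_7": 0}
nodes = [Node(f"node_{i}", opening) for i in range(5)]

payment_accepted = propose(nodes, ("meter_1", "charger_7", 4))
overspend_accepted = propose(nodes, ("meter_1", "charger_7", 50))
print(payment_accepted, overspend_accepted)
```

After the two proposals every node holds an identical ledger containing only the first transaction, because the second would overdraw the payer and fails validation on every node.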

Because so many copies of the ledger are maintained and compared independently, fraudulent interactions are, depending on the quality of the Distributed Ledger implementation, somewhere between very difficult and impossible. However, there are several practical difficulties around scale: first, the number of nodes and the size of the ledger; second, the number of transactions, which determines the frequency of updates. As a comparison, the Bitcoin Blockchain deployment provides ledger updates roughly every ten minutes, introducing significant latency for real-time applications, and volume is limited to no more than five entries per second.
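A rough back-of-the-envelope calculation shows why these figures matter for IoT. The update interval and the per-second ceiling below are the figures quoted above; the device count and transaction rate are hypothetical assumptions chosen only to illustrate the arithmetic.

```python
# Figures quoted in the text for the Bitcoin Blockchain deployment.
UPDATE_INTERVAL_SECONDS = 10 * 60   # ledger update roughly every ten minutes
MAX_ENTRIES_PER_SECOND = 5          # quoted upper bound on volume

# Capacity implied by those figures.
entries_per_update = UPDATE_INTERVAL_SECONDS * MAX_ENTRIES_PER_SECOND
entries_per_day = MAX_ENTRIES_PER_SECOND * 24 * 60 * 60

# Hypothetical Smart City workload (assumed values, not from the article).
devices = 50_000
transactions_per_device_per_hour = 2
required_per_second = devices * transactions_per_device_per_hour / 3600

# How many times over capacity the assumed workload would be.
shortfall_factor = required_per_second / MAX_ENTRIES_PER_SECOND

print(f"entries per update: {entries_per_update}")
print(f"entries per day: {entries_per_day}")
print(f"required per second: {required_per_second:.2f}")
print(f"shortfall factor: {shortfall_factor:.2f}")
```

Under these assumed numbers the workload needs roughly 28 entries per second, more than five times the quoted ceiling, and each transaction could still wait up to about ten minutes before appearing in the ledger.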

The scaling issue currently sets the requirements for brand new Distributed Ledger Technology (DLT) developments. The expectation is that initial IoT deployments will be able to achieve acceptable operating parameters. The Business Services ecosystem covering a Smart City, for example, would be expected to operate with an acceptable number of nodes and transactions in its early years.

The development of Smart Cities, other IoT business networks and DApps is driving the requirement for suitable Distributed Ledger solutions. By their very nature, Open Source approaches would seem to be an attractive proposition. Two Open Source projects, Ethereum and Hyperledger, have each built a sizable community, though their focuses are different.

Ethereum, founded in 2014, supports an eclectic mix of activities, often crowdfunded, where the focus is on DApps that require a decentralized transaction architecture. The Ethereum name will often come up in conversations about Blockchain or DApps, but the community is not aimed at supporting large-scale IoT requirements for Distributed Ledger solutions. A sample of Ethereum projects can be found here.

The focus of Hyperledger is distinctly different, aiming to support the broader needs of IoT Distributed Systems that can be served by Blockchain-based technology. The Hyperledger Fabric core project describes its mission as: ‘the fabric is an implementation of blockchain technology that is intended as a foundation for developing blockchain applications or solutions. It offers a modular architecture allowing components, such as consensus and membership services, to be plug-and-play. It leverages container technology to host smart contracts called “chaincode” that comprise the application logic of the system’ (sic).

Hyperledger has significant support from well-known industry names, both Business users and Technology Vendors. Premier Members include Accenture, Airbus, Deutsche Börse, Fujitsu, IBM, Intel and J.P. Morgan. (A full membership list can be found here.)

There are four Hyperledger projects underway that contribute in different ways to making Distributed Ledgers a reality for commercial operations: Blockchain Explorer, Fabric, Iroha, and Sawtooth Lake. Summaries of each project and its aims can be found here. It would seem that Hyperledger Fabric is destined to become the most significant in 2017, with its stated aim:

Fabric is an implementation of block chain technology that is intended as a foundation for developing block chain applications or solutions. It offers a modular architecture allowing components, such as consensus and membership services, to be plug-and-play. It leverages container technology to host smart contracts called “chaincode” that comprise the application logic of the system.

IBM aims in 2017 to accelerate the Hyperledger Fabric project by recruiting and supporting an Ecosystem of Developers who will be offered various IBM-provided Facilities. This ambitious plan makes it worth understanding the details, which can be found in a Constellation post, Why IBM’s Blockchain Plans Make Sense.

Clearly, effective decentralized transaction management using Blockchain Distributed Ledger technology is a highly important part of the development of Services-based ‘Trading’ Business Networks. However, thanks to the coverage of the topic, it has become only the most visible element in the overall challenge of building, operating and maintaining a new generation of decentralized systems.

Summary; Background

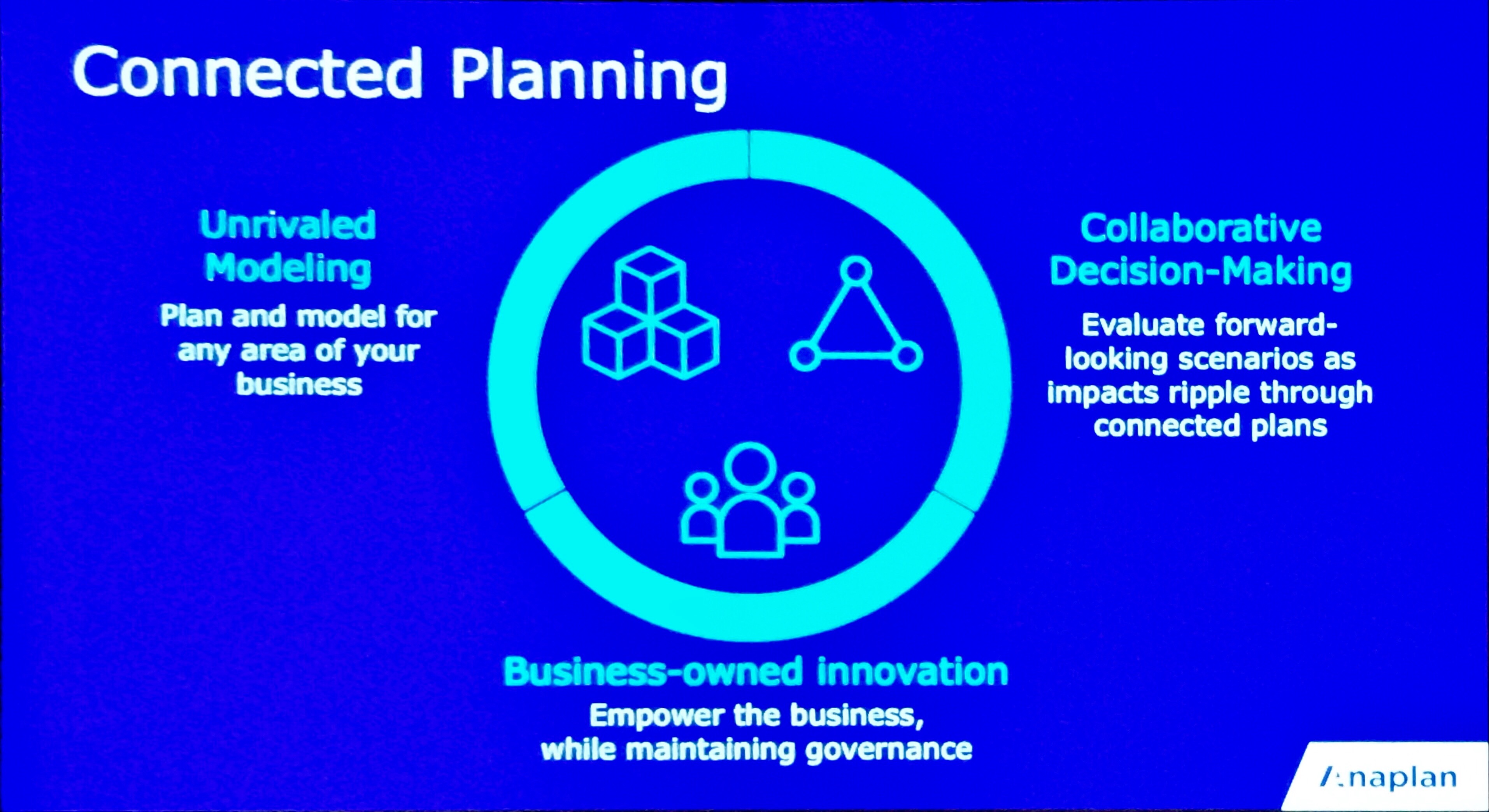

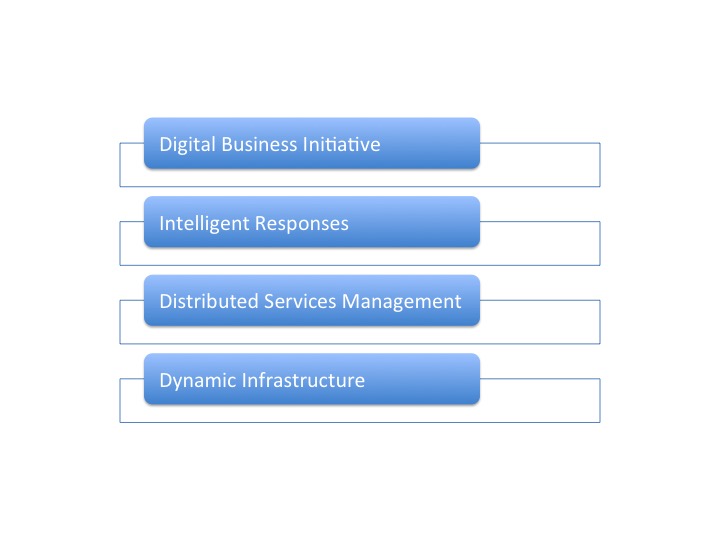

This is the third part in a series on Digital Business and the Technology required to support the ability of an Enterprise to do Digital Business. The series explains why a simple definition, shown in the accompanying diagram, has been adopted to classify the technology requirements rather than attempting any form of conventional detailed Architecture, and provides a fuller explanation of the Business requirements.

Part 1 - Digital Business Distributed Business and Technology Models;

Understanding the Business Operating Model

Part 2 - Digital Business Distributed Business and Technology Models;

The Dynamic Infrastructure

Part 3a - Digital Business Distributed Business and Technology Models;

Distributed Services Technology Management
