News Analysis: ServiceNow Buys Armis, Top 5 Trends For 2026

Five Market Trends for 2026

Today on Fox Business Network with Ashley Webster on Varney and Co, 5 Market Trends for 2026 were shared (watch the full program here):

- Exponential Efficiency and Infinite Possibilities with AI. AI will boost productivity and create new types of companies that we have not yet imagined. Tiny teams will be able to operate at massive scale with few people.

- IPO boom ahead. Lower interest rates meet an IPO boom and AI investments. As Scott Shellady mentioned in the program earlier in the day, the effects of the One Big Beautiful Bill will start to show up in 2026. This stimulus will have a bigger impact and potentially move GDP past the 4.6% growth we saw this past month.

- Battle for cheap energy. Europe's $0.25/kWh costs and America's $0.15/kWh costs cannot compete with China's $0.08/kWh costs. The ideological green programs have left the West behind. Further, China is moving to $0.04/kWh. This means that China’s energy dominance will give it an advantage in AI compute, manufacturing, and wartime capacity.

- Return to real asset basics. AI has shown that there is a battle for assets – water, power, gold, rare earths, and real estate. The focus on tangible assets will grow into 2030.

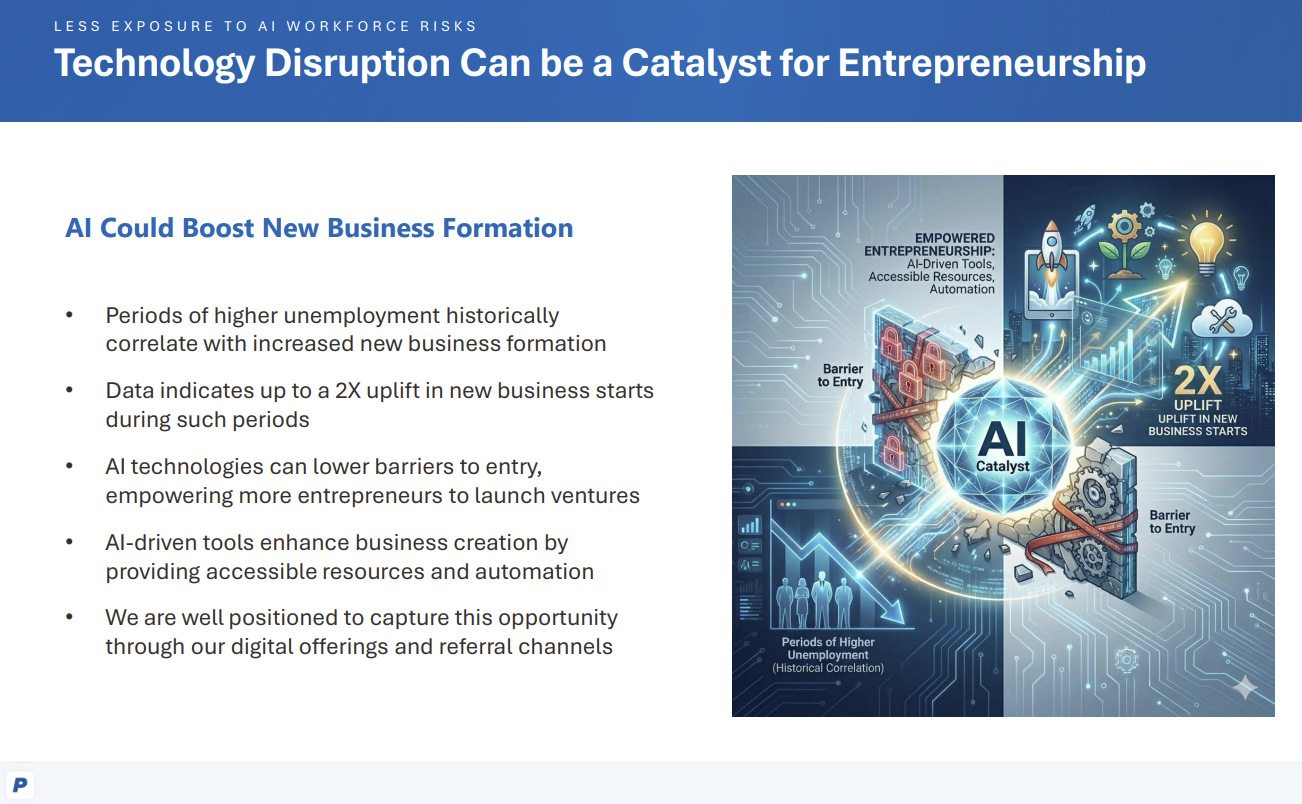

- Massive shift in the workforce. This is the last generation of managers to manage only humans. Human managers will be managing agents, robots, and devices, and in some cases vice versa. This will create a cultural shift, but it also arrives just in time as overall populations decline in the West.

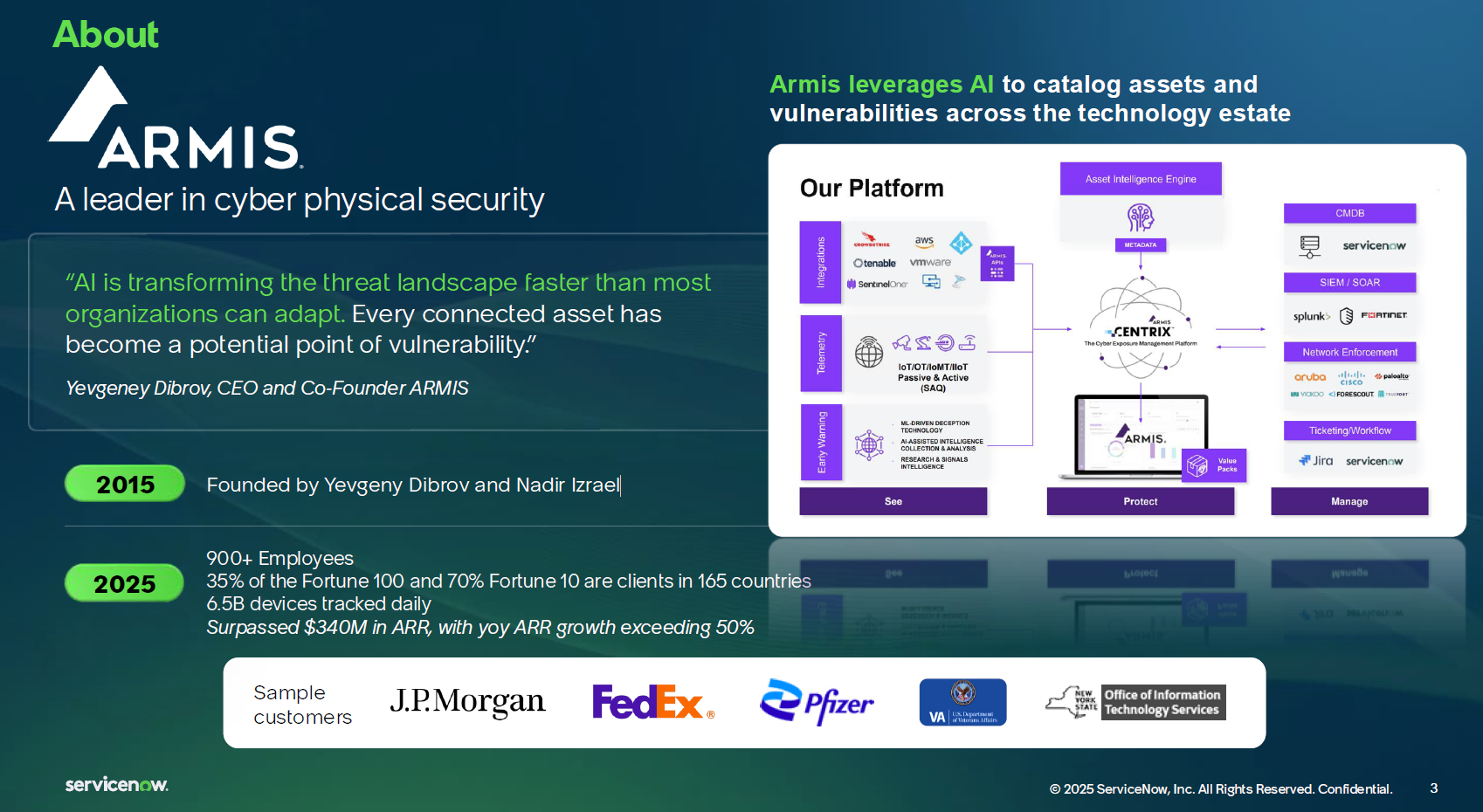

ServiceNow Buys Armis For $7.75B

On December 23rd, ServiceNow announced its acquisition of Armis for $7.75B. Armis was reaching $300M in ARR in mid-2025 and was on a trajectory to $1B in ARR by 2026. ServiceNow has delivered organic growth in line with the Rule of 50. Together, ServiceNow's customers can now bring operational technology (OT) together with information technology (IT) and manage post-breach resilience across the cybersecurity landscape. As my colleague Chirag Mehta often mentions, this is the main priority in cybersecurity today: what happens after a breach and how to stem the damage.

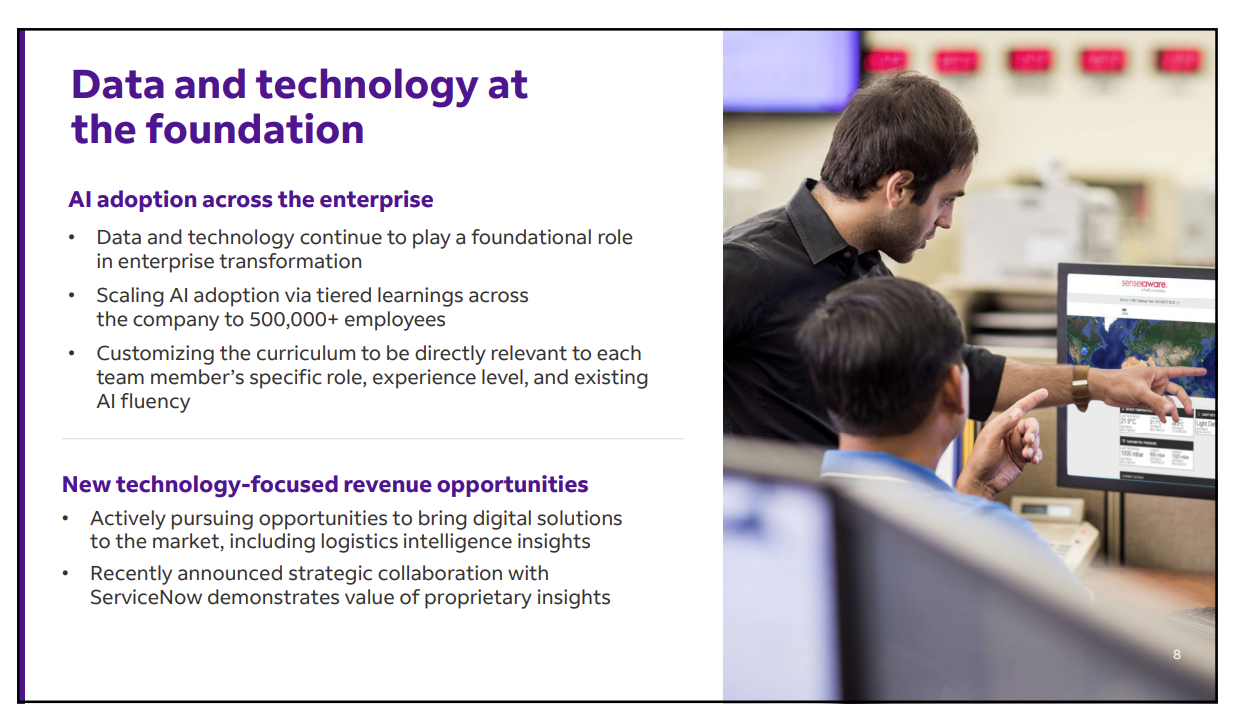

Leveraging workflows and the CMDB from ServiceNow and security from Armis, customers will be able to secure both physical and digital assets. The AI control towers give companies visibility into their environment. The long-term goal is situational awareness across the physical and digital world. Overall, a great deal.

For more details, read the latest analysis from Larry Dignan in Constellation Insights.

Your POV

Are you ready for 2026? What do you think about the ServiceNow - Armis deal?

Add your comments to the blog or reach me via email: R (at) ConstellationR (dot) com or R (at) SoftwareInsider (dot) org. Please let us know if you need help with your strategy efforts. Here’s how we can assist:

- Working with your boards to keep them up to date on technology and governance.

- Connecting with other innovation minded leaders

- Sharing best practices

- Vendor selection

- Implementation partner selection

- Providing contract negotiations and software licensing support

- Demystifying software licensing

Reprints can be purchased through Constellation Research, Inc. To request official reprints in PDF format, please contact Sales.

Disclosures

Although we work closely with many mega software vendors, we want you to trust us. For the full disclosure policy, stay tuned for the full client list on the Constellation Research website. * Not responsible for any factual errors or omissions. However, happy to correct any errors upon email receipt.

Constellation Research recommends that readers consult a stock professional for their investment guidance. Investors should understand the potential conflicts of interest analysts might face. Constellation does not underwrite or own the securities of the companies the analysts cover. Analysts themselves sometimes own stocks in the companies they cover—either directly or indirectly, such as through employee stock-purchase pools in which they and their colleagues participate. As a general matter, investors should not rely solely on an analyst’s recommendation when deciding whether to buy, hold, or sell a stock. Instead, they should also do their own research—such as reading the prospectus for new companies or for public companies, the quarterly and annual reports filed with the SEC—to confirm whether a particular investment is appropriate for them in light of their individual financial circumstances.

Copyright © 2001 – 2025 R Wang and Insider Associates, LLC All rights reserved.

Contact the Sales team to purchase this report on an a la carte basis or join the Constellation Executive Network.