Hershey finishes SAP S/4HANA implementation: Is it sweet or suite?

Hershey has to navigate inflation, supply chain disruptions, shifting consumer tastes and the impact of GLP-1 obesity drugs on demand. But at least the company has reached the finish line in its move to SAP S/4HANA on a single instance.

The Hershey journey is worth noting since it's just one example of how SAP's large enterprise customer base is migrating. Yes, SAP launched a program that can give companies more time, but the migration to cloud ERP instances is going to happen.

Hershey is using SAP S/4HANA, SAP Business Technology Platform (BTP), SAP Analytics Cloud and SAP Datasphere.


Hershey's latest SAP upgrade is also notable since it has gone well relative to the company's history. In the 1990s, the Hershey SAP implementation was a disaster, hurt financial results and wound up being a business school case study on the importance of change management.

We'll let Hershey CFO Steve Voskuil highlight how that SAP finish line feels. Let's just say finishing an ERP implementation is sweet (or maybe suite). Speaking at an investor conference, Voskuil said: "As we look forward, we can look at what we did last year and we talked about ERP. No one's happier to have the ERP program behind us than me and that's a big one for us."

Voskuil also said Hershey is seeing benefits from the SAP upgrade. "Over 95% of our global business is on one instance of that ERP platform, and there are more efficiencies to be had in the go-to-market space," he said. "It's also allowed us to accelerate innovation and the focus this year on bigger, bolder, more impactful innovation."

There's also a financial tailwind now that Hershey won't have to spend on the ERP implementation and the consultants and integrators that tag along. Here are Hershey's annual capital expenditures, per its SEC filings. The totals include capitalized software and the ERP implementation.

- 2024 capex: $605.9 million.

- 2023 capex: $771.1 million.

- 2022 capex: $519.5 million.

- 2021 capex: $495.9 million.

- 2020 capex: $441.6 million.

In 2023, Hershey explained that its capex increased due to "continued investments in digital infrastructure including the build and upgrade of the new ERP system across the enterprise." The 2024 capex figures fell due to the completion of the SAP project. Based on Hershey's SEC filings, the company has been spending in earnest on the SAP ERP implementation since 2020.
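To put the capex swing in perspective, here is a minimal sketch that computes the year-over-year change from the totals listed above. The variable and function names are illustrative, and the figures are Hershey's total capex, not ERP spending alone, so the changes only hint at the size of the SAP project.

```python
# Total annual capex in millions of USD, copied from the list above.
# Note: these are company-wide totals, not ERP-only spending.
capex_musd = {2020: 441.6, 2021: 495.9, 2022: 519.5, 2023: 771.1, 2024: 605.9}


def yoy_changes(series):
    """Return {year: (absolute change, percent change)} versus the prior year."""
    years = sorted(series)
    changes = {}
    for prev, cur in zip(years, years[1:]):
        delta = series[cur] - series[prev]
        pct = 100 * delta / series[prev]
        changes[cur] = (round(delta, 1), round(pct, 1))
    return changes


changes = yoy_changes(capex_musd)
for year, (delta, pct) in changes.items():
    print(f"{year}: {delta:+.1f}M USD ({pct:+.1f}%)")
```

By this arithmetic, capex rose about 48% in 2023 and fell about 21% in 2024, which lines up with Hershey's explanation of the ERP build and its completion.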

Now that the SAP implementation is complete, here's a look at Hershey's digital plans on tap.

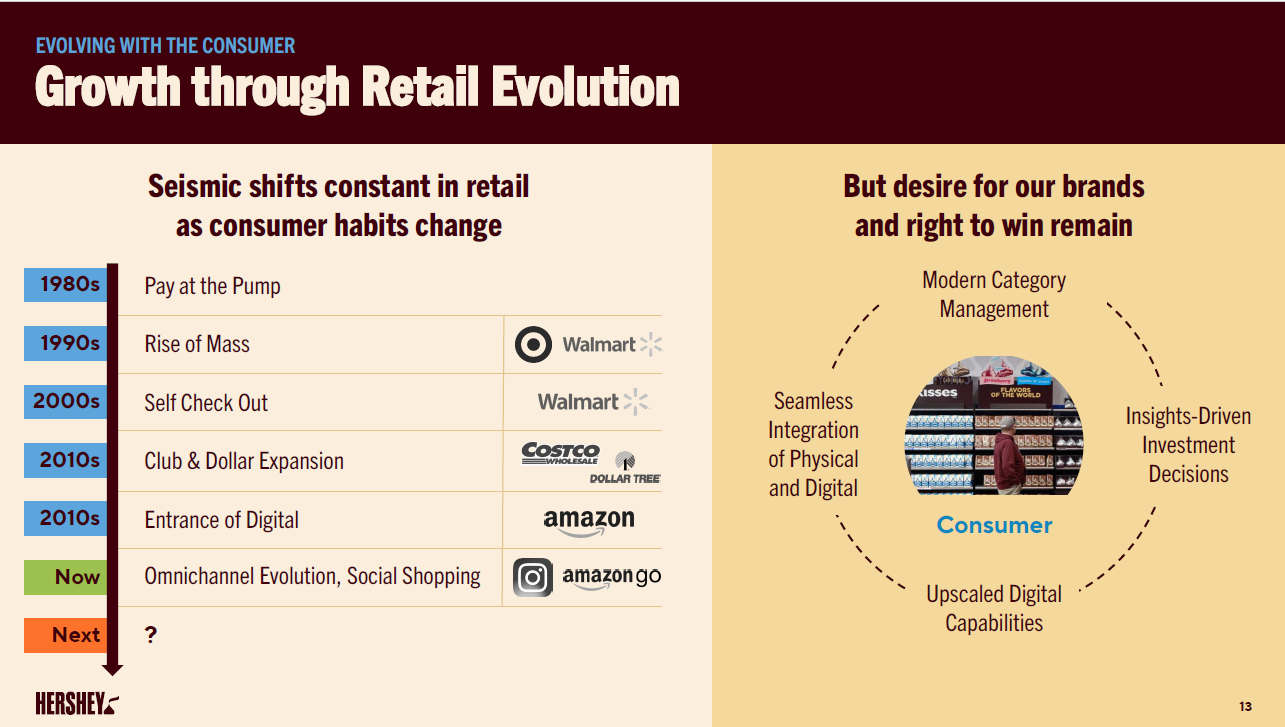

Planogram wins. Michelle Buck, CEO of Hershey, said the company is putting science behind its shelves. "We're implementing our gold standard planogram in at least 40% of C-stores this year. That planogram uses a shopper decision tree along with some other proprietary pieces of information to get to an optimized shelf set that can drive results at retail," said Buck. "What we've seen with that shelf is where we have implemented that we see the category doing two times the growth versus where we have not implemented it."

Integrated demand planning. Voskuil said Hershey is planning to tie demand signals to supply chain and fulfillment processes. "In this case, we're using a product from Kinaxis, a best-in-class tool that uses a combination of machine language, data and some AI to make real time adjustments to the way that manufacturing and supply chain responds to demand," he said.

Data, analytics and AI-driven optimization. Hershey is looking to blend data on shopping and consumer behaviors, promotion feedback and effectiveness and AI to optimize the company's promotional spend via an in-house system. Hershey has deployed this system in about 40% of its portfolio. "We're going to continue to expand a proprietary solution that we have built with an outside partner," said Voskuil.

The integrated demand planning and promotional optimization efforts account for 15% to 20% of Hershey's transformation savings today, said Voskuil.