Southwest Power Pool building small AI factory to better simulate electric grid

Southwest Power Pool (SPP) is building a mini AI factory with Nvidia, Hitachi Vantara and other Hitachi units to better simulate future demand on the electric grid in its region.

The first phase of the SPP effort is expected to become operational in the fourth quarter of 2025 or early 2026. Hitachi Vantara announced the partnership with SPP in June. The stated goal at the time was to develop industrial AI infrastructure that speeds up the simulations SPP uses to resolve energy shortages, boost grid reliability and respond to outages.

Speaking at Hitachi Vantara's Analyst Live 2025 event, Felek Abbas, CTO and CISO for SPP, outlined the collaboration, which aims to cut the time of the simulations SPP runs to predict future demand on the electric grid in its core states by at least 80%.

"The preliminary tests we've done show us getting an 80% reduction in time and we're hoping to beat that once we're on the final hardware," said Felek. "We'll essentially have a small AI factory within our facility."

SPP is a regional transmission organization that aims to improve the reliability of the electric grid while keeping costs low. The organization's footprint runs from Northwest Texas to the Canadian border. SPP also provides energy services such as reliability coordination and market facilitation in Arizona and Colorado.

SPP has about 125 stakeholders and member companies. Before the surge in AI data center demand, peak electric loads in SPP's region grew by about 1.5% a year, Felek said. Today, growth is anywhere from 4% to 5% a year.

"One of the things we absolutely need to do is bring more generation to the grid and in order to serve that load from AI, data centers and crypto mining is to run studies to determine what's feasible, whether the transmission system is robust enough and will it require additional construction," explained Felek.

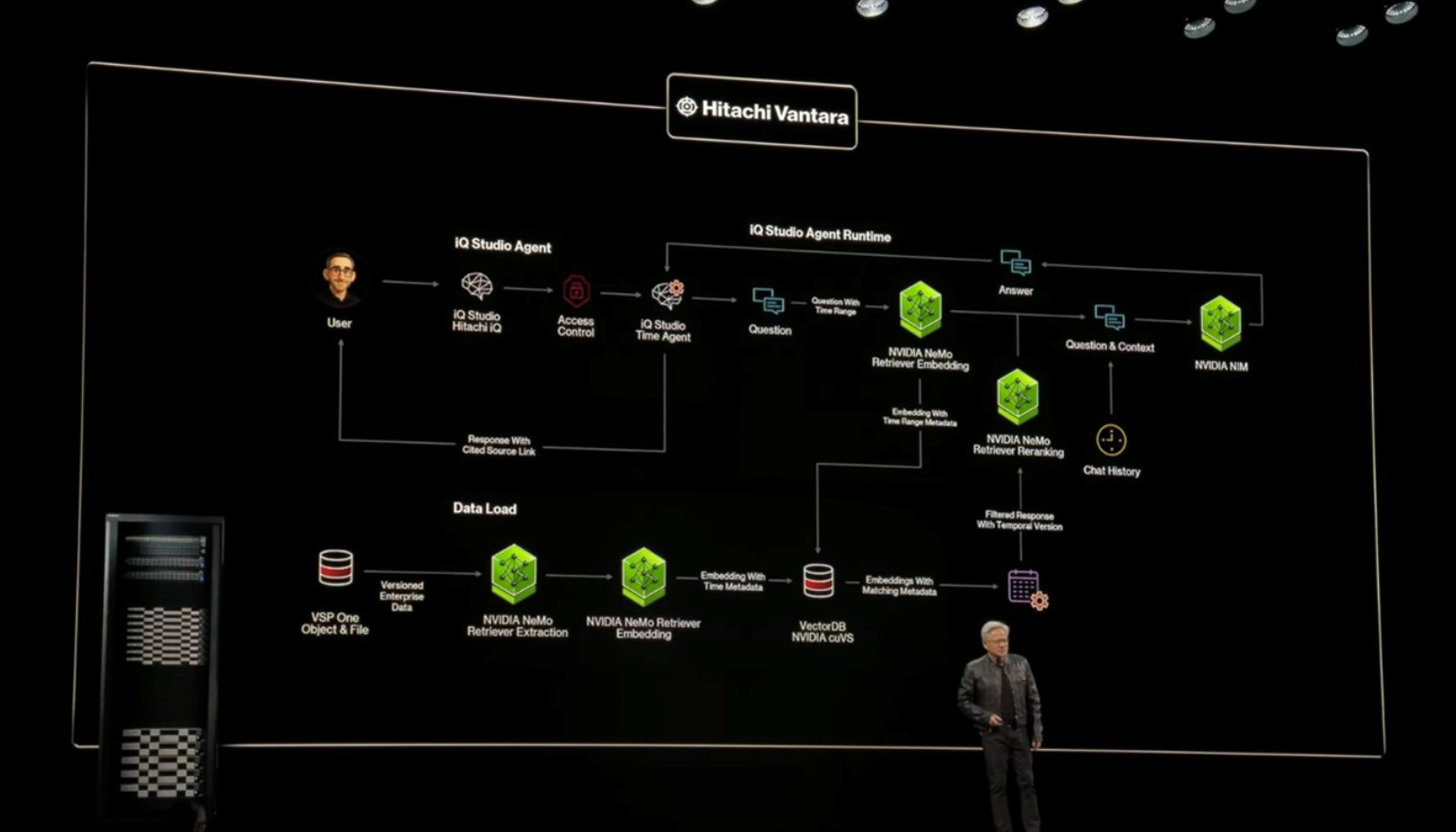

The AI infrastructure deployed for SPP combines Hitachi Vantara's Hitachi-IQ with Nvidia's accelerated computing platform. Hitachi Vantara and Nvidia have been collaborating on enterprise AI and industrial AI systems, and Hitachi Vantara has been called out in Nvidia CEO Jensen Huang's GTC keynotes.

Hitachi Vantara, along with sibling companies such as Hitachi's GlobalLogic unit and Hitachi Energy, can bring expertise to multiple industrial AI use cases. The SPP project is a collaboration among Hitachi Vantara, Hitachi Digital Services and Nvidia.

Felek said SPP's previous simulation process, which was largely manual, took 27 months. He added that SPP would run three of these processes simultaneously so it could deliver decisions on project requests once a year. "While this project is about speed, it's also about gaining more information, analyzing more areas of the grid and providing data to generation builders so they have expectations on the economic returns," said Felek. "To do that you need to know all the scenarios over the 20-year to 40-year lifespans."

This project with SPP, Hitachi Vantara and Nvidia is in its early stages but highlights on-premises AI and industrial AI use cases. Here are the key points:

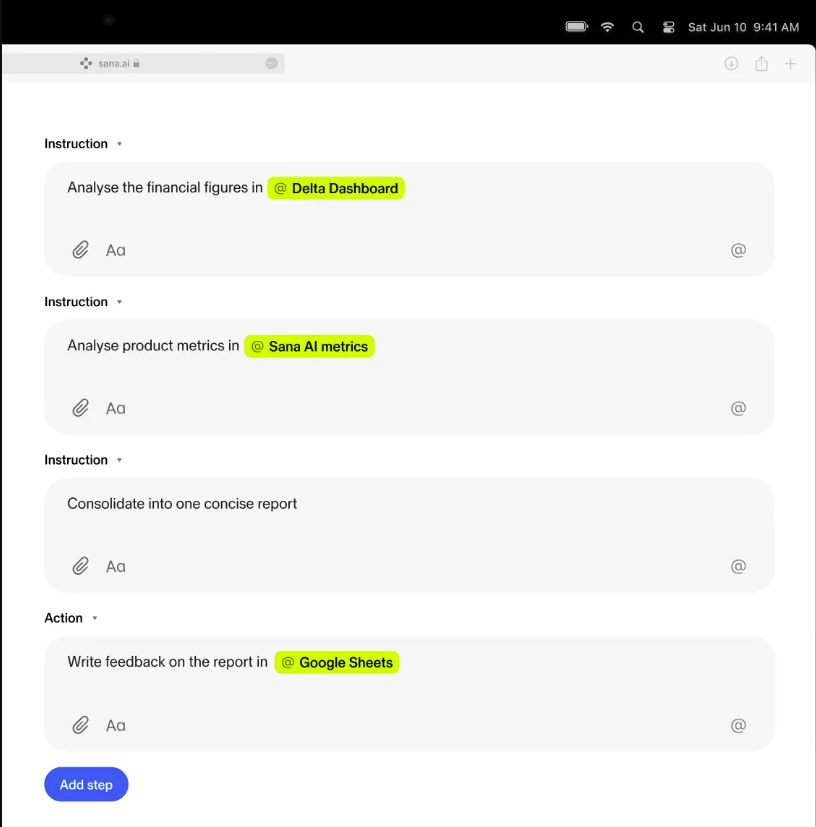

- Felek said SPP is reinventing the entire simulation process.

- SPP, Nvidia and Hitachi Vantara are looking to consolidate the data intake process and "have AI analyze the data as it is coming in and provide feedback to our stakeholders on quality." The previous process required human back-and-forth on data quality and corrections.

- SPP is planning to automate reports and analysis to free up engineers.

- Once the data is consolidated in a single repository, SPP can build models. Today, SPP takes six months to build models, but Felek said that process can be dramatically sped up.

- Felek added that SPP plans to use an AI agent to build models from the data repository in real time. "The AI agent is going to determine issues with the models and automatically fix them," he said.

- Leveraging Nvidia GPUs, SPP is building an inference engine that can deliver analysis on power flows dynamically and also provide economic analysis.

SPP will oversee the systems and technology implementation, while the Hitachi-IQ and Nvidia enterprise stack will cover process automation, predictive analysis and communication systems integration. The Nvidia and Hitachi partnership runs alongside the SPP project and focuses on transmission planning processes and future needs for interconnections, planning, forecasting and analysis.
