Private Cloud a Compelling Option for CIOs: Insights from New Research

Vice President and Principal Analyst

Constellation Research

Title: About Dion Hinchcliffe

Dion Hinchcliffe is an internationally recognized business strategist, bestselling author, enterprise architect, industry analyst, and noted keynote speaker. He is widely regarded as one of the most influential figures in digital strategy, the future of work, and enterprise IT. He works with the leadership teams of large enterprises as well as software vendors to raise the bar for the art-of-the-possible in their digital capabilities.

He is currently Vice President and Principal Analyst at Constellation Research. Dion is also currently an executive fellow at the Tuck School of Business Center for Digital Strategies. He is a globally recognized industry expert on the topics of digital transformation, digital workplace, enterprise collaboration, API…...

As the world finds itself hurtling ever-further into the digital age, the race towards the public cloud seems to be a foregone conclusion for most IT leaders. The public cloud, with its allure of almost infinitely scalable resources and minimal maintenance, has long been heralded as the inevitable destination for the vast majority of IT workloads. As a long-time proponent of the public cloud, I've always understood its many benefits: elasticity, modern architecture, quick provisioning, and a bulwark against technical debt.

There has been an undeniably gilded narrative surrounding the public cloud in recent years, viewing it as a digital knight in shining armor that has come to liberate our data from the parochial and inefficient clutches of traditional data centers.

The Private/Public Cloud Decision Point Has Changed

But what if the story turned out to be not quite as simple as that? What if, like all good journeys of self-discovery, there were unexpected plot twists as the real story actually emerged? This is in fact the case today when it comes to a fuller and clearer view of private cloud vs. public cloud. I discovered this during a recent study I embarked upon, involving in-depth interviews with 22 Chief Information Officers (CIOs) and senior IT executives in the spring of 2023, uncovering an unexpected protagonist: The private cloud.

This study sought to venture beyond the buzzwords and industry assumptions, to dig into the reality of cloud solutions in today's organizations, exploring aspects ranging from cost and control to data movement and the handling of high-cost, always-on workloads. The findings, as seen in the summary below, challenge much of the prevailing wisdom about public cloud. They paint a more nuanced picture, one where the private cloud, often overlooked in our rush towards the hyperscalers, emerges as a compelling, and in many cases, superior choice.

I've just published a detailed new research report that delves into the study and its findings. It certainly challenges many long-held assumptions and will perhaps help rewrite the narrative of our IT future. As I noted in a recent industry podcast, "to increase cloud value, it's essential to manage complexity and control costs."

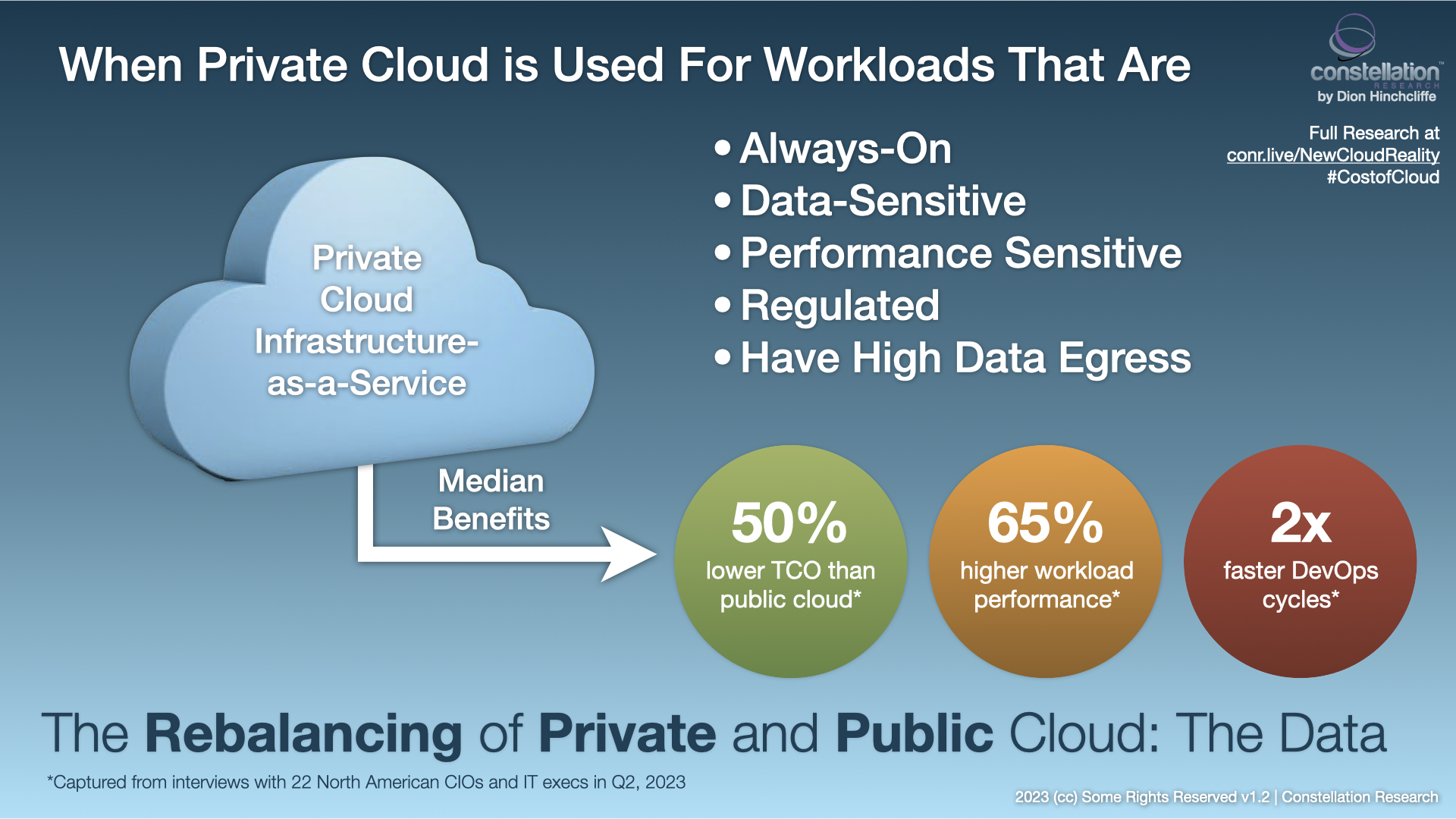

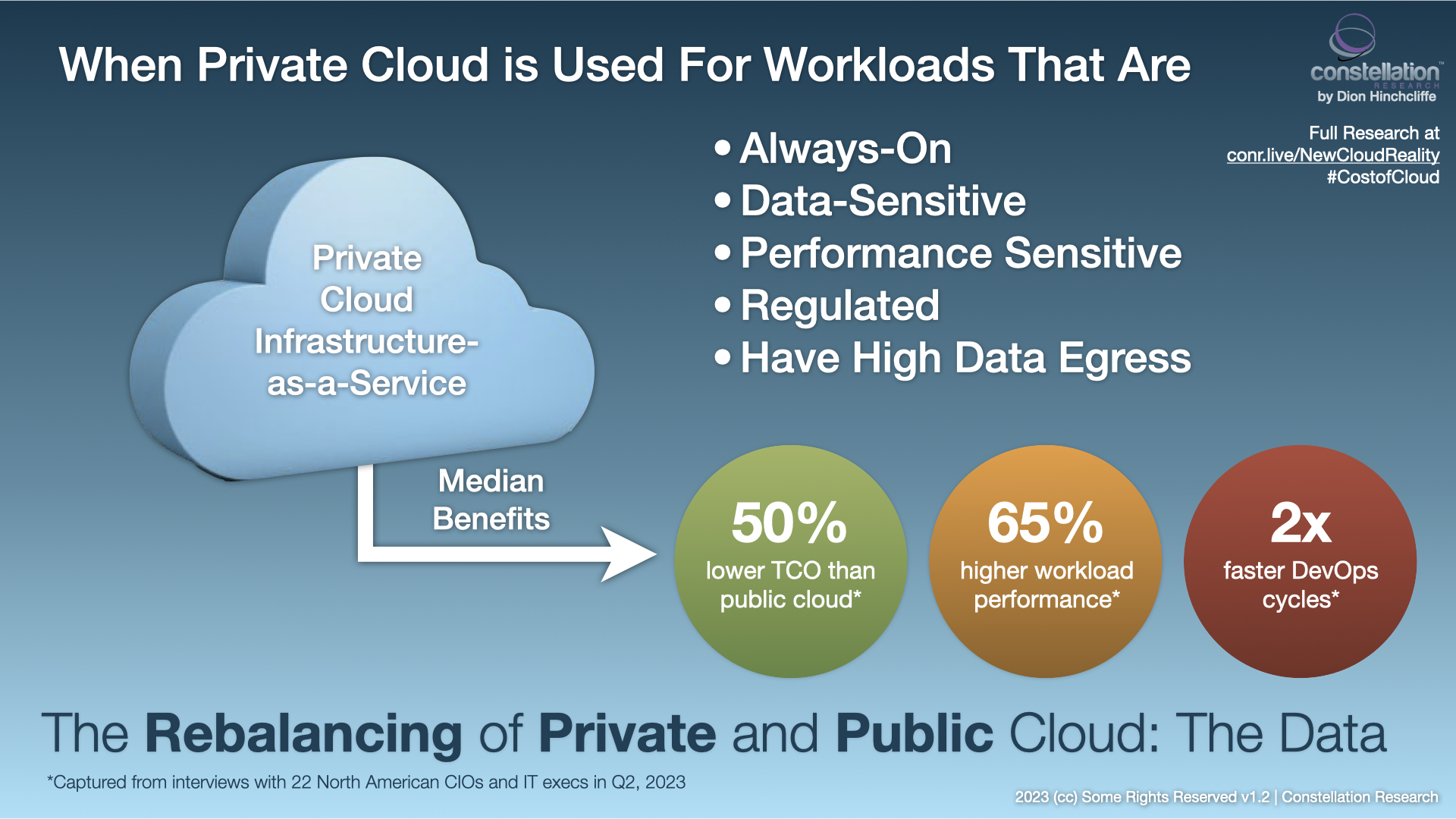

The key findings of the report, for those IT organizations that have begun to proactively incorporate private cloud into their workload mix, include:

- 50% lower cost

- 65% more performant

- Twice as agile for development/DevOps cycles

These are surprising outcomes, and not necessarily what I was expecting to uncover. Private cloud of yore was often a so-called "brownfield" of highly heterogeneous legacy IT, was often not consistently managed or governed, and did not use a modern cloud architecture such as cloud-native. Private cloud was seen as rife with high costs and inefficiency. It was to be avoided if possible. However, data from serious adopters show that this is often no longer the case. Private cloud today is in fact often as sleek and contemporary as public cloud from a technology and run-time perspective. And it turns out that in particular use cases it can genuinely shine.

Note: These numbers are median figures, and are only for workloads in which private cloud is specifically advantageous.

My video exploration of the private/public cloud workload placement research.

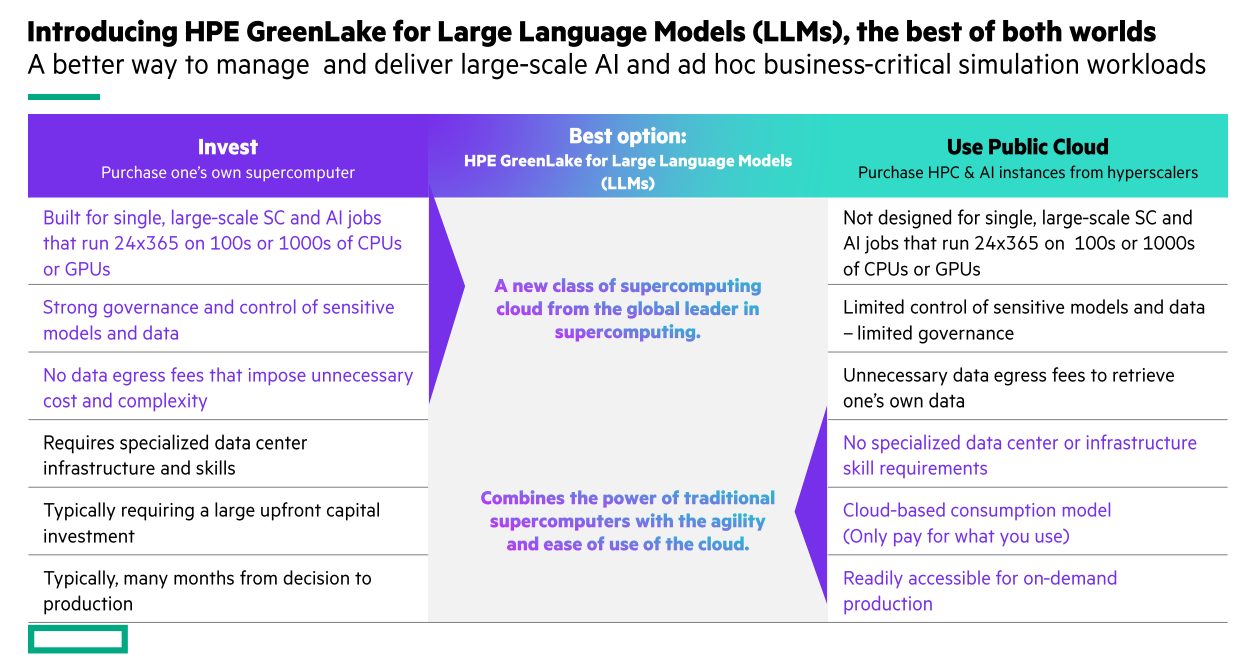

Private Cloud Is Closing the Workload Decision Gap

Thus, we can now see that many of the disadvantages of private cloud have dropped away, while it appears that some of its other attributes have gained in comparison with public cloud. This is especially the case in certain increasingly well-understood yet vital scenarios. The interviewees I spoke with often struggled with public cloud for certain key use cases. The result was costly bills for frequently-on workloads, poorly performing workloads they had trouble optimizing, needs for data residency, poor multi-year cloud budget predictability, or high data egress to support analytics or high bandwidth digital experiences.

It's important to emphasize that this study did not delve into the causal factors involved, merely what the CIOs and senior IT leaders experienced in terms of benefits as they rebalanced their IT portfolio with a combination of public and private cloud, putting their workloads where they made the most sense for them at the time. I will seek to explore the root causes for these benefits in a future report.

There is no question that some of these results can seem counterintuitive: Don't hyperscalers have higher economies of scale and shouldn't they be cheaper? Isn't it more agile to be able to spin up instances quickly in public cloud? The findings were that no, for various reasons hyperscalers now appear not to be passing their full cost savings to customers (perhaps putting them into R&D, marketing, growth, or profit). And FinOps and the cloud operations bureaucracies that have accumulated around cloud usage in organizations are putting considerable red tape between teams trying to innovate and the ability to easily spin up new cloud instances. There was much less worry about such budget risks with private cloud in this study.

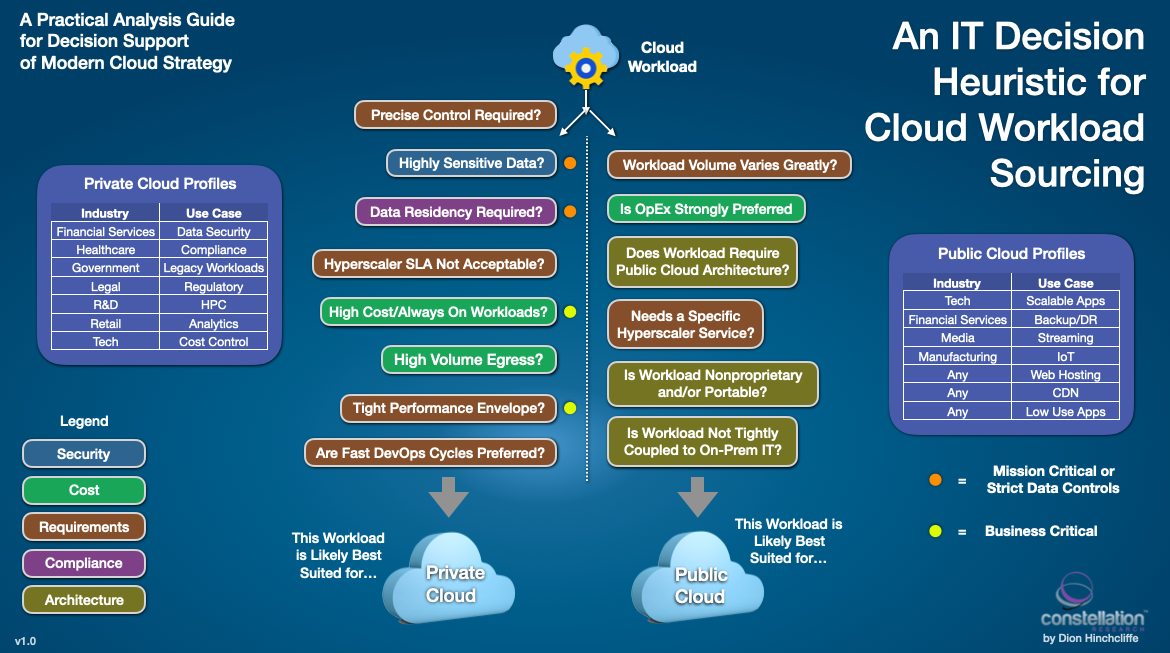

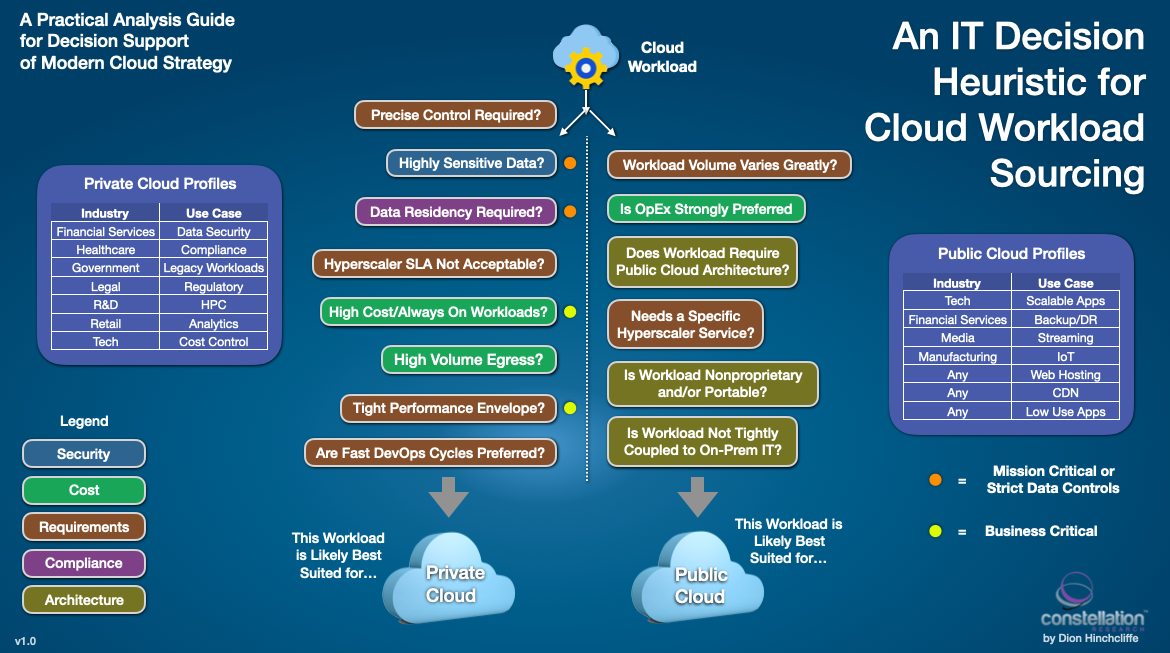

Determining Where Your Cloud Workloads Belong

In the course of conducting this study, I realized we would also be able to use the rich data from the interviews to create an up-to-date cloud workload heuristic that can help identify when a workload might be a good candidate for private cloud vs. public cloud today. You can see this in the figure above. The hope is that it will serve as a lighthouse in an era of more pragmatic and nuanced adoption of cloud in the enterprise.
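As a rough illustration only, the pain points the interviewees described earlier (frequently-on workloads, hard-to-optimize performance, data residency, budget predictability, and high data egress) could be expressed as a simple checklist. The sketch below is not the heuristic from the report: the field names, the equal weighting of signals, and the threshold of two signals are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workload:
    """Hypothetical description of a workload; every field is an illustrative assumption."""
    name: str
    always_on: bool                     # runs most or all of the time
    hard_to_optimize_in_public: bool    # performance has been difficult to tune in public cloud
    needs_data_residency: bool          # data must stay in a specific location
    needs_budget_predictability: bool   # multi-year cost predictability matters
    high_data_egress: bool              # moves large volumes of data out (analytics, rich experiences)


def private_cloud_signals(w: Workload) -> List[str]:
    """Return the pain points named in the article that apply to this workload."""
    checks = [
        (w.always_on, "frequently-on workload"),
        (w.hard_to_optimize_in_public, "hard to optimize in public cloud"),
        (w.needs_data_residency, "data residency requirement"),
        (w.needs_budget_predictability, "needs multi-year budget predictability"),
        (w.high_data_egress, "high data egress"),
    ]
    return [label for applies, label in checks if applies]


def suggest_placement(w: Workload, threshold: int = 2) -> str:
    """Flag a workload as a private cloud candidate once enough signals apply.

    The threshold is an arbitrary assumption, not a figure from the study.
    """
    if len(private_cloud_signals(w)) >= threshold:
        return "private cloud candidate"
    return "public cloud candidate"


if __name__ == "__main__":
    analytics = Workload("analytics-pipeline", True, False, False, False, True)
    print(analytics.name, "->", suggest_placement(analytics))
    print("signals:", private_cloud_signals(analytics))
```

A real placement decision would weigh these factors against each other and against the benefits of public cloud, so a checklist like this is at most a starting point for discussion.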

Be sure to read the report itself, with many quotes, more data, and additional color. The path to cloud is not only more complex but more fascinating than we might have first thought. Subscribers to Constellation Research's Research Unlimited subscription can download the new report, The New 2023 Cloud Reality: A Rebalancing Between Private and Public.

Update: HPE has graciously made this report available to the general public for a limited time. You can download a copy here.

Findings Summary for this Cloud Computing Research

My Related Research

Digital Assets in the Cloud for Public Sector CIOs

An Update on IBM Cloud for the CIO

An Oracle NetSuite Roadmap for the CIO and CFO

Digital Transformation Target Platforms: A ShortList

AWS re:Invent 2022: Perspectives for the CIO

The Cloud Reaches an Inflection Point for the CIO

How a Transformation Platform Reimagines Success

Digital Transformation Blueprint for the Office of the CFO

The CIO Must Lead Business Strategy Now

The Strategic New Digital Commerce Category of Product-to-Consumer (P2C) Management

How DataStax is Emerging as a Strategic Anchor in Cloud Data Management