IoT and Cloud Computing; the killer combination for Services; But not always a well defined relationship!


It has been stated that IoT is the killer App for Cloud Computing, but given that Cloud Computing itself is often difficult to define, this only adds to the difficulty of defining Cloud based IoT Services. Add the fact that just about every IoT offering seems to be called a Platform, even those that are not genuine Platforms, and understanding the relationship between Clouds and different IoT capabilities becomes even more complex.

The Internet of ‘Things’, meaning the connection and interaction of intelligent devices, produces a flow of Data triggered by current ‘Events’ as an unpredictable activity stream. The unpredictable nature of the resulting demand for computational services relates directly to one of the major benefits of Cloud Computing. In reality Clouds are just further examples of the ‘Things’ that are part of IoT.

The business value doesn’t necessarily reside directly in either Clouds or IoT, but in their integration to create Services as the valuable Business outcome.

Unsurprisingly the similarities between IoT and Clouds are many, for as the opening paragraph suggests they are inherently part of the same technology environment. Both are part of the same Network Based Architecture that ‘functions’ in response to a particular demand, rather than the predicted steady loading of IT style applications. Add that both are deployed as a mixture of Public, Private, Hybrid and Community Business solutions and the commonality of the two technologies should be recognizable, even if the exact definitions are less so.

Mixing the diversity of classifications for Cloud Computing deployment and Service models with the classification of IoT enabled Business Services offers too many possible combinations to support any simple labeling. However, not all Cloud based provisioning is deployed to support an IoT integrated Business Service; in fact, currently perhaps most of it is not. Recognizing this simplifies classification by removing many Cloud Services from consideration as part of IoT.

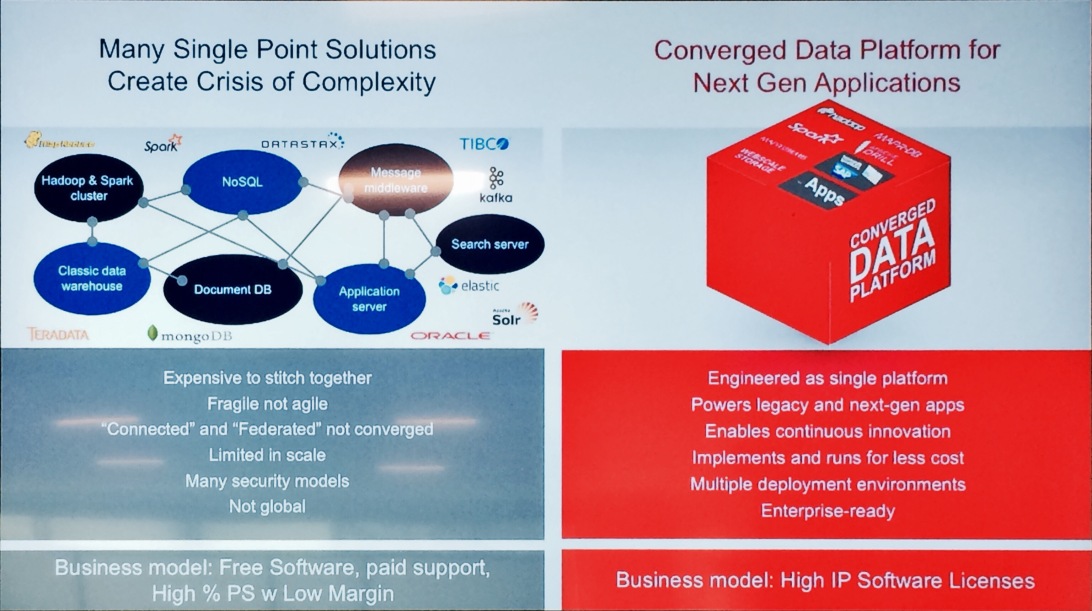

Commercial Cloud Service provisioning models were originally designed to be a cost reducing replacement for traditional computing resources in an Enterprise Data Centre, or to run occasional high loads such as Data Rich Analytics. As the advantages offered by Cloud Data Centre models became better understood, three definable major hosting, or provisioning, models started to dominate: Infrastructure as a Service (IaaS), Platform as a Service (PaaS) and Software as a Service (SaaS). Each model could be used in a Private, Public or Hybrid deployment.

IoT solutions that use Clouds don’t fit so readily into these definitions, which assume more predictable demands and a foreseeable operational consumption of MIPS. In contrast, IoT solutions are circumstance or event driven activities, often a mass of small consumption demands created by invoking specific services to run in different combinations, and so are radically different. So different in fact that it is better to regard them as a fourth hosting model, with some Cloud Service providers offering different hosting and charging models for them.

To understand this in more detail it is necessary to look more closely at the types of IoT and Smart Services being run with Cloud Hosting companies. There are Web Sites that attempt to list all, or at least as many as possible, Cloud Based IoT Service providers (see the appendix for some examples). This blog is focused on noting the impacts of IoT on commercial Cloud hosting.

A further clarification is necessary: the IoT Cloud Services referred to in this blog all offer capabilities with direct Business value. This is unlike the subject of the previous blog, the large-scale infrastructural IoT connectivity Services Platforms, which interconnect and integrate hundreds of thousands to millions of IoT Devices with the various Business Services referred to earlier in this blog.

However it should be noted that current smaller scale IoT Business Services, or dedicated single function solutions, do make direct connections to their dedicated cluster of IoT Devices to collect event data.

So IoT poses an interesting commercial challenge to the providers of the basic Cloud computational capacity. IoT enabled Services are light but frequent users of Cloud processing power, in a way that doesn’t fit conventional Cloud hosting tariffs, which expect longer usage periods at moderate to heavy computational power usage.
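To make the mismatch concrete, here is a minimal sketch comparing a time-based tariff with an event-based one for a light but frequent workload. The workload size, both rates and the function names are invented purely for illustration and are not taken from any provider's price list.

```python
def hourly_cost(hours_reserved: float, rate_per_hour: float) -> float:
    """Cost when capacity is billed for the time it is kept available."""
    return hours_reserved * rate_per_hour


def per_event_cost(events: int, rate_per_million_events: float) -> float:
    """Cost when billing follows the number of events handled."""
    return events / 1_000_000 * rate_per_million_events


# Hypothetical workload: 2 million small events spread across a 30-day month.
HOURS_IN_MONTH = 30 * 24      # capacity has to stay available all month
EVENTS_IN_MONTH = 2_000_000

# Illustrative rates only (assumptions, not real tariffs).
always_on = hourly_cost(HOURS_IN_MONTH, rate_per_hour=0.10)
event_based = per_event_cost(EVENTS_IN_MONTH, rate_per_million_events=5.00)

print(f"Time-based tariff:  {always_on:.2f}")
print(f"Event-based tariff: {event_based:.2f}")
```

With these made-up figures the time-based tariff charges for a full month of availability even though the events themselves consume very little processing, which is the gap that event-based charging is meant to close. Real outcomes depend entirely on the actual rates and event volumes.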

Amazon Web Services (AWS) provides a published tariff as an example of charging on the basis of events handled, and in what manner (there are of course similar offers from their competitors).

However AWS, just like other large Cloud Service providers, is keen to offer more than just compute power, however they charge for it. Instead they are aiming to re-bundle IoT offerings, merging compute power with direct IoT Business Services that deliver a much higher value Business outcome. These may take the form of IoT Service Templates, as in this Microsoft Azure example, aimed at particular Industry sectors, or logic templates to simplify defining unique IoT services. Either way the aim is to offer ‘pay as you profit’ simplicity whilst avoiding the complexity and cost of an Enterprise building its own IoT Services.

In line with this shift to new commercial models for IoT, a growing number of IoT Cloud Service providers are offering sophisticated high value Services charged on the basis of the number of events handled. (Salesforce Thunder Cloud for IoT is an example of approaching IoT from the direction of Business outcomes.) As events relate directly to valuable business outcomes and insights, this is generally seen as a welcome move by both the customer and the provider, but it does require some care in establishing the exact cost model, as few customers have the real experience needed to negotiate on the expected numbers.

Charging on the basis of the received Business Value outcomes is at an early stage of innovation, as shown by the example of the IBM Watson IoT Service working in conjunction with Aerialtronics drones. Equipment installed in inaccessible places, such as telecommunication towers, can now have an annual survey by a drone, with the results interpreted by IBM Watson visual recognition. This is a straight substitution of the lower cost of a drone visit for the cost of a manual visit, but notice that little to nothing in this is concerned with IoT event driven activities.

More conventional IoT examples around preventative maintenance come from several sources, Software AG being one example, and allow a clearer and more immediate linkage to beneficial business value. Finding and fixing potential failures at a convenient time, and so avoiding the cost of an expensive failure, makes this a particularly popular IoT business target.

The coming together of Cloud Computing and IoT certainly creates a new era of technology capabilities, with genuinely new business value. Add in Augmented Intelligence, AI, and the stage is set to deliver market transformation to the Digital Economy. Combined, Clouds and IoT lead a move away from ‘transaction recording’ Enterprise Application IT and towards a Business Services driven capability to ‘read and react’ to opportunities. The result is a new generation of business models offering innovative new competitive abilities.

However, this new Business environment is still in its early stages and, as such, the commercial terms for outcome value based charging are still emerging. Buyers of IoT Services are buyers of Cloud hosting Services, but that doesn’t mean they buy on the same terms as those for Enterprise Application IT. At this early stage of the IoT enabled Business Services model those responsible for deployment should take a very careful look at the various commercial options being offered.

Appendix: Sites that list Cloud Based IoT Service Providers

https://iot-analytics.com/product/internet-of-things-company-list-2015/

http://postscapes.com/internet-of-things-platforms/


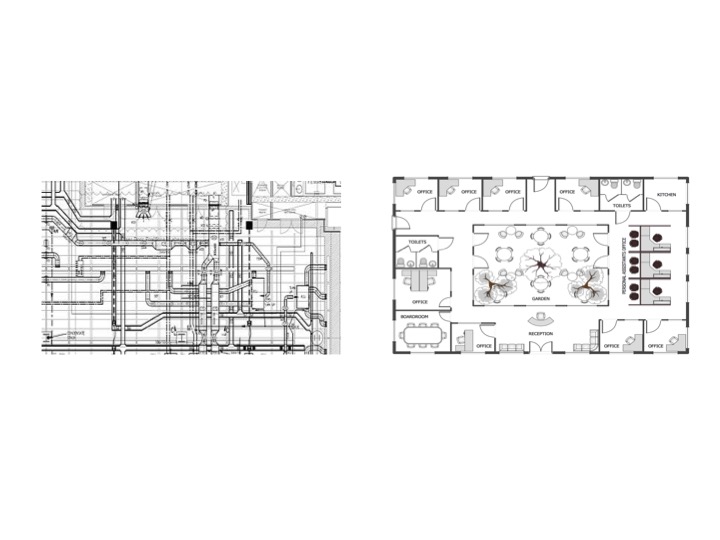

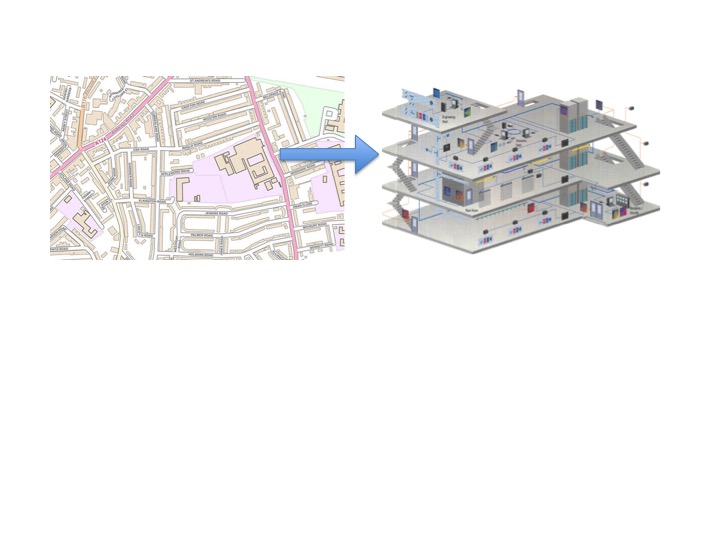

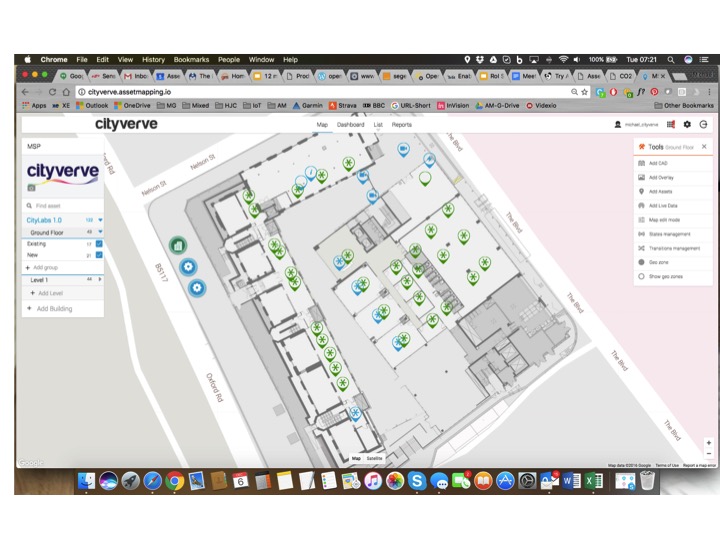

Manchester Smart City Project Verve showing IoT Devices locations combined with context data within a building using the capabilities provided by the UK company Asset Mapping
