Combining Two Modern Practices Propels E-Commerce Success: Product Content Syndication (PCS) and Product-to-Consumer (P2C)

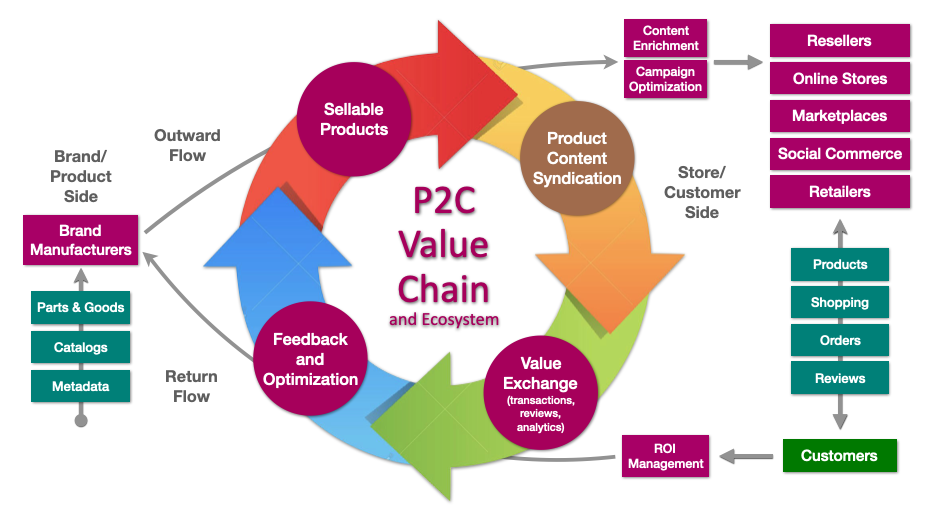

As I noted last year when describing the emerging Product-to-Consumer (P2C) category, as the e-commerce sector has matured it has accumulated "ever-growing overhead in time, resources, and management attention on making the many moving pieces -- product catalogs, commerce systems, feeds, channels, and marketplaces -- fit together and properly operational in a way that is truly sustainable as a business." E-commerce is projected to be a $7.3 trillion global industry by 2023, but only those prepared to evolve and modernize their ecosystems will thrive in an ever more digitally sophisticated operating environment.

This is particularly true of the activity that is the lifeblood of e-commerce: the process of optimizing and maximizing product content to create sales. This process, known as product content syndication (PCS), is increasingly seen as central to driving growth. The flow of product data has become both a technical and a strategic advantage for omnichannel sales in today’s fast-paced e-commerce space, particularly in competitive environments. In short, having the best, richest, and most accurate set of product listings is now of preeminent importance for capturing market share and attracting buyers.

Related Research Report: The New E-Commerce Category of P2C Management

Creating a Winning Product Content Syndication Approach

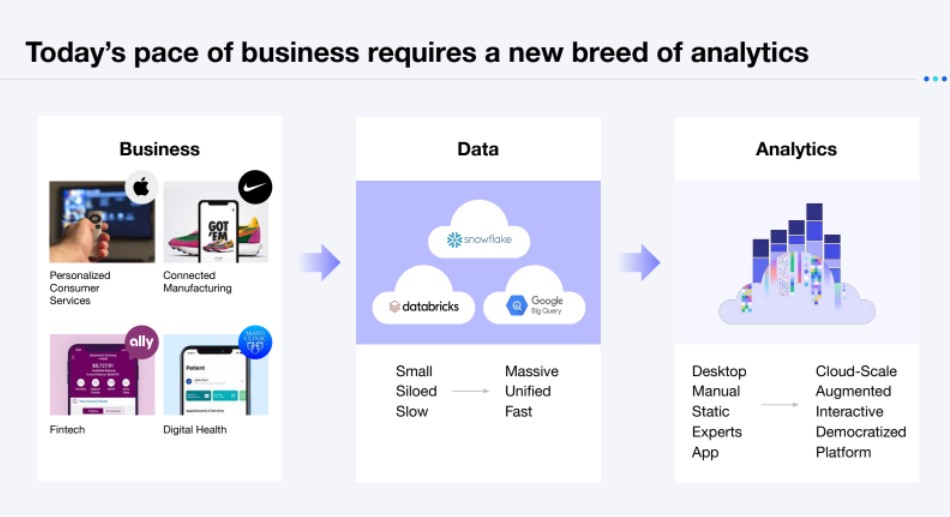

Because a rich variety of product content, combined with a maximal distribution strategy for that content, makes the difference between merely surviving and actually competing, e-commerce managers are looking beyond the more static product information management approach of the last decade toward more dynamic methods that understand the individual details and optimal operating needs of the full end-to-end ecosystem.

To help navigate the choices, I've researched some of the top means of syndicating product content and identified the best and most capable methods in a new research report, Driving E-Commerce Growth With Product Content Syndication. As noted in the report, syndication puts product content into motion and is therefore the key step that brings an e-commerce ecosystem to life. Selecting the approach and technical solution for PCS is thus a decision that largely determines the ultimate success of a digital business and all of its often far-flung constituent elements.

Key Takeaway: Make PCS an Integral Part of an E-Commerce Strategy

Without a more systemic and contextual approach -- I found the overall approach of using P2C management to be the most effective -- every kind of digital business, from traditional e-commerce stores to the hot new D2C channels emerging from manufacturers, faces the same set of management challenges in keeping its product content updated and optimally used across its ecosystem. Namely, the challenge is not just to maximize the product content but also to fundamentally transform engagement with the market in a far more dynamic, intelligent, and compelling way.

The syndication options outlined in my report range from the most basic and fundamental all the way up to the most holistic vision currently available to e-commerce firms. Brands, retailers, and stores will succeed primarily through their effective management of the product content ecosystem. In fact, it is by making PCS a fully integrated aspect of an e-commerce strategy that they can properly realize the insights and knowledge contained within the feedback loops that link them to the market. Harnessing this feedback with PCS in a contextual and data-driven way is how to achieve the highest-impact results.

The best sustainable strategy for succeeding with product content is to contextually address each and every channel via automation. E-commerce firms and digital businesses that can do this from a holistic strategy and a matching platform will be in a better position to seize opportunity and survive rapid shifts in the market. Digital business has moved away from the simplistic models of years past to much more deeply integrated new systems that can cope with today’s operating requirements, regardless of how sophisticated they are. For most organizations, developing and operating a more robust, enlightened, and sustainable PCS strategy will be essential to their long-term growth, maturity, and success.

Additional Reading

The Strategic New Digital Commerce Category of Product-to-Consumer (P2C) Management

The P2C Management Vendor ShortList for 2021

Realizing a Decisive Advantage in Digital Commerce Through Economic Flexibility

How Headless Revolutionized Content Management

The Future of Enterprise Content Management

A New Digital Experience Maturity Model for Improved Business Outcomes

How CXOs Can Attain Minimum Viable Digital Experience for Customers, Employees, and Partners

To Strategically Scale Digital, Enterprises Must Have a Multicloud Experience Integration Stack
