CoreWeave raises $7.5 billion in debt financing for AI data center buildout

CoreWeave, a hyperscale cloud provider focused on AI workloads, raised $7.5 billion in debt financing to build out data centers equipped primarily with Nvidia GPUs. The debt round follows $1.1 billion in equity funding raised earlier this month.

In the last 12 months, CoreWeave has raised more than $12 billion in equity and debt financing. CoreWeave's funding highlights how the generative AI boom has fueled a new category of specialized cloud vendors.

The debt financing, led by Blackstone, Magnetar and Coatue, will be used to develop compute infrastructure for existing contracts. CoreWeave customers are using its infrastructure to train models.

CoreWeave is also building out its European footprint, which includes a headquarters in London and a commitment to invest $1.25 billion in the region.

By the end of 2024, CoreWeave expects to have 28 data centers, double its 2023 footprint, which was itself up from 3 data centers in 2022.

Michael Intrator, CoreWeave CEO and co-founder, said the company is looking to provide specialized GPU cloud infrastructure that can "reshape the cloud landscape, accelerate the AI race, and power the next generation of AI innovation." CoreWeave's infrastructure is designed for AI and machine learning, graphics and rendering, life sciences and other intensive workloads.

Nvidia is a CoreWeave investor, and the cloud provider is adopting Nvidia's latest technology in its data centers, whether it's GPUs, networking systems, applications or architecture. For Nvidia, CoreWeave is a key cog in its plans to scale AI factories. Microsoft is reportedly among CoreWeave’s largest customers.

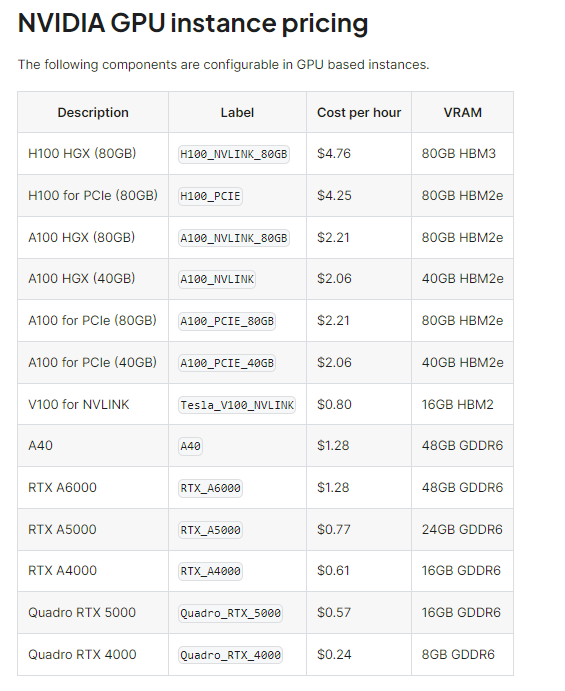

According to CoreWeave, its infrastructure is purpose-built for intensive workloads and can deliver performance 35 times faster while costing 80% less than general-purpose clouds. Here's a look at CoreWeave's Nvidia GPU pricing.

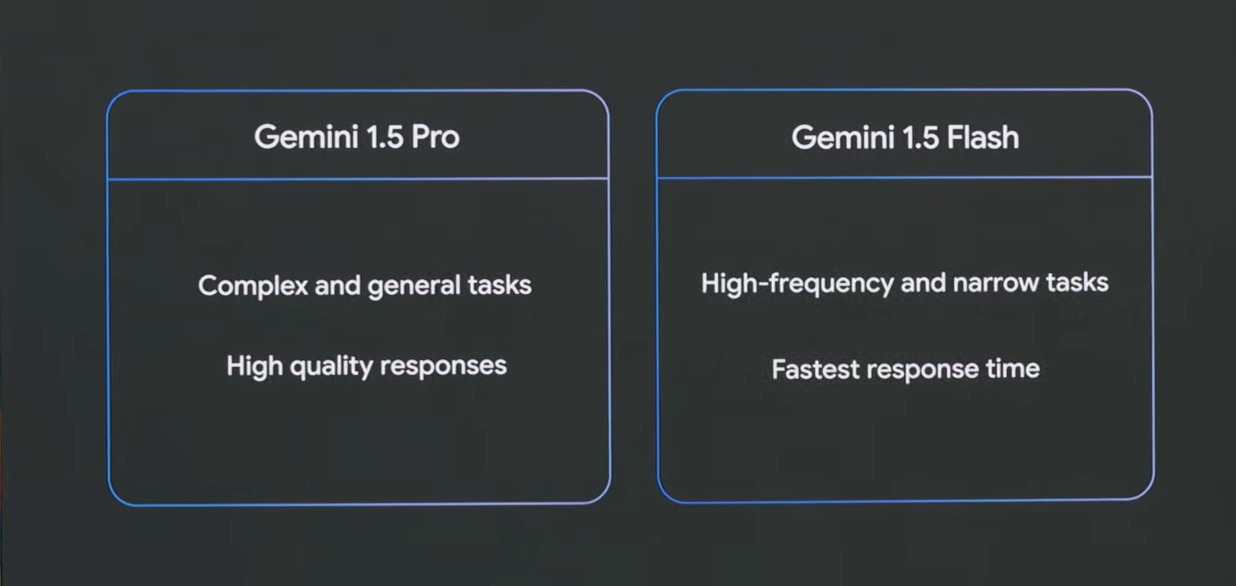

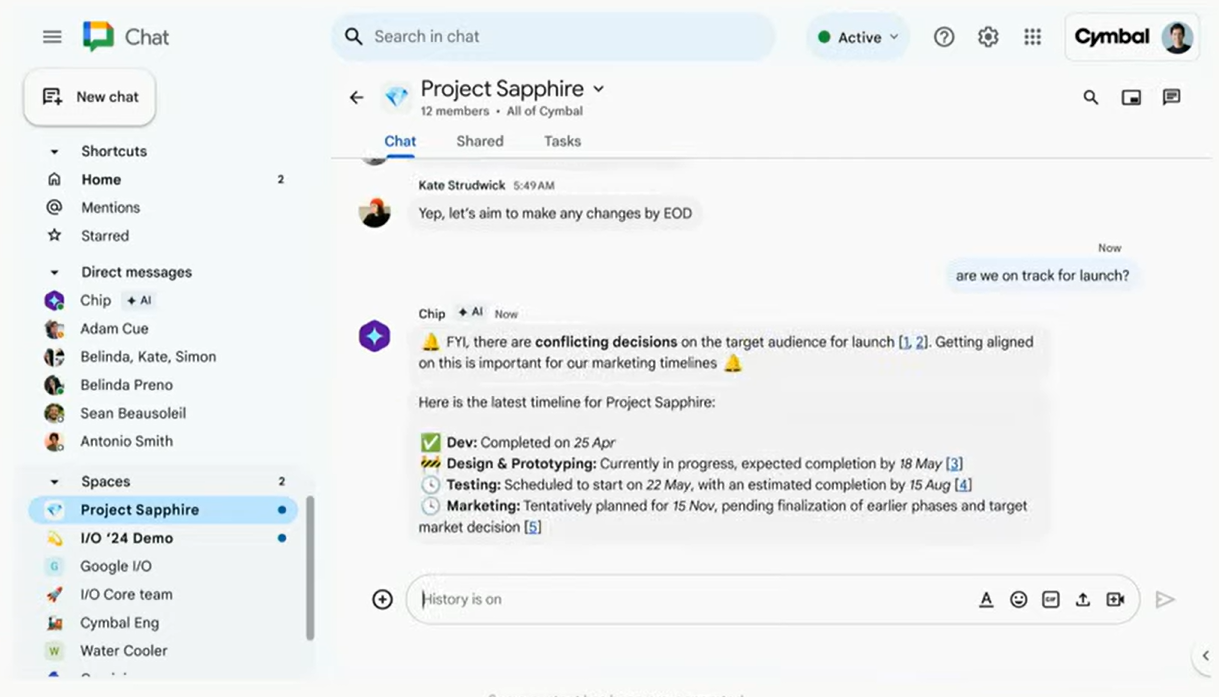

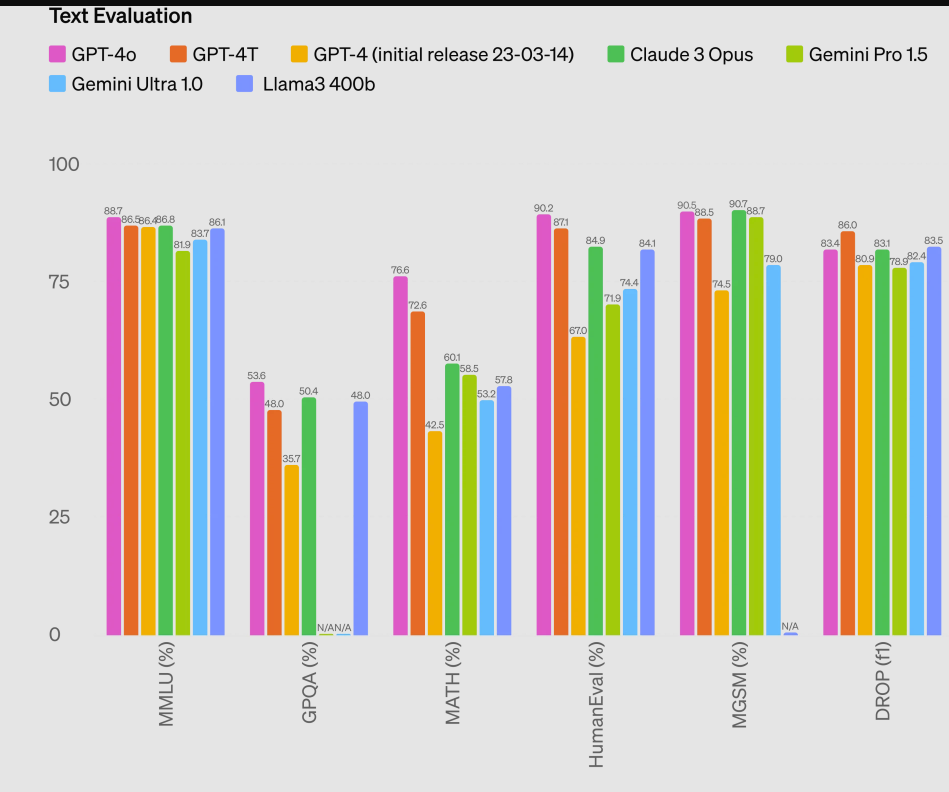

Google I/O's barrage of news, which served as a sequel to Google Cloud Next.

"...delivers a 4.7x improvement in compute performance per chip over the previous generation. So [it's our] most efficient and performant TPU to date. [We'll] make Trillium available to our cloud customers in late 2024," said Pichai, who noted that Google Cloud will offer Nvidia Blackwell GPUs too. Pichai's message was that Google has the scale to keep building out infrastructure for the AI arms race, whether it's in liquid cooling advances, custom processors or fiber cables around the world.