Where’s tokenomics for the rest of us?

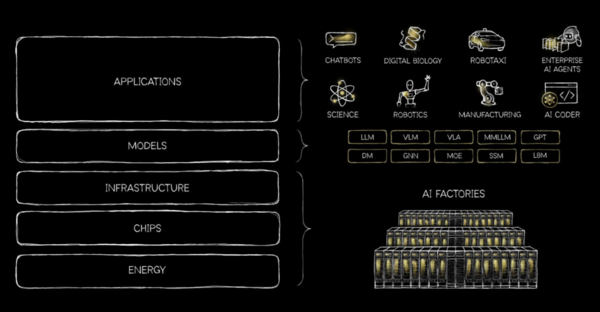

Nvidia CEO Jensen Huang has a go-to riff about the company's full-stack AI approach and architecture. It goes like this: Nvidia's AI stack creates tokens, and tokens turn into revenue. Therefore, you should spend more on Nvidia's continually optimized stack.

Every company will become an AI factory and grow revenue. Nvidia has reframed AI infrastructure as an industrial system that converts energy and compute into tokens, which are the fundamental units of AI output.

"Compute with the right architecture translates to maximizing revenues and compute equals revenues. Without investing capacity today, without investing in compute, there cannot be revenue growth. And that, I think everybody understands. Compute equals revenues, choosing the right architecture is incredibly important. It's more than strategic now. It directly affects their earnings and choosing the right architecture, the one with the best performance per watt is literally everything," said Huang on Nvidia's fourth quarter earnings call.

The argument is convenient for selling AI infrastructure to the likes of OpenAI, Anthropic, Meta, AI-focused clouds like CoreWeave and hyperscalers including AWS, Microsoft Azure, Google Cloud and Oracle. The capital expenditure devoted to AI infrastructure is mind-boggling, and most of it is going to Nvidia. The reality is that most of the AI world has bought into tokenomics, which makes sense if you're one of the companies monetizing tokens or selling the compute to create them.

Here's the rub: Most enterprises don't sell tokens and won't sell tokens. At some point, enterprises may be able to sell their own data and industry-specific know-how, but at last check, JPMorgan Chase sells financial services, Walmart sells retail goods, and GM and Ford sell vehicles.

In this world, revenue doesn't equate to tokens generated because revenue comes from products or services. Tokens are a cost input, not a product. A token is a piece of a word, a picture, or a chunk of audio or video, and for most companies it is the equivalent of scrap metal left on the manufacturing floor.

Huang's tokenomics argument doesn't get much pushback from inside the AI bubble, where tokenomics is effectively the GDP of the industry. The rest of us need to cut AI costs to get a return. Tokens are a cost center for most enterprises, and CIOs are looking for on-prem deployments, smaller models, cheaper compute and more efficient AI inference.

At Nvidia GTC 2026, Huang and company need to reach these enterprises that don’t conveniently fit into a token-based model. I've run this tokenomics theme past at least a dozen CxOs and they all say the same thing: "It's a cost, not a revenue generator." Tokenomics works for a handful of companies, and even for them only potentially, because revenue hasn't caught up to what's being spent.

In other words, the rest of us need returns pronto. That's why Nvidia is likely to speak to AI inference efficiency, architecture and ways to drive down costs. Salesforce cooked up the Agentic Work Unit in an effort to advance tokenomics and translate it into actual work being conducted. It's a start. For most companies, surging token consumption just means you’re wildly inefficient.
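To make the cost-center view concrete, here is a minimal arithmetic sketch. Every figure in it (token volumes, the price per million tokens, task counts) and the `Workload` class itself are hypothetical and chosen only for illustration. The point is that an enterprise would track cost per unit of completed work, not tokens generated.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """A hypothetical AI workload; all figures are illustrative assumptions."""
    name: str
    tokens_used: int          # total tokens consumed in the period
    price_per_million: float  # assumed USD price per 1M tokens
    tasks_completed: int      # units of useful work actually delivered

    def token_cost(self) -> float:
        # Total spend on tokens for the period.
        return self.tokens_used / 1_000_000 * self.price_per_million

    def cost_per_task(self) -> float:
        # The number an enterprise cares about: spend per unit of work.
        return self.token_cost() / self.tasks_completed


# Token consumption triples, but completed work grows only 20%.
before = Workload("agent v1", 400_000_000, 5.0, 100_000)
after = Workload("agent v2", 1_200_000_000, 5.0, 120_000)

for w in (before, after):
    print(f"{w.name}: ${w.token_cost():,.0f} total, "
          f"${w.cost_per_task():.3f} per task")
```

Under these assumed numbers, token volume is up threefold while the cost of each completed task is up 2.5 times. That is a great result in a tokens-equal-revenue model and a bad one for a company that pays for tokens rather than sells them.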

At some point, Nvidia is going to need enterprises to standardize on its stack. It's starting to happen, as earnings from Dell Technologies show, but tokenomics hasn’t expanded beyond a few companies.

Total cost of ownership (TCO) is likely to influence other themes at Nvidia GTC. Here's a look at what I'm expecting to learn more about.

- Nvidia's take on its latest GPU platform and how it improves TCO and validates a full-stack approach. Nvidia is the Apple of AI, but its large customers, notably hyperscalers, are also developing custom silicon to improve efficiency. See: Palantir and Nvidia build sovereign, on-premises AI reference architecture

- A lot of talk about AI inference and an architecture that includes Groq technology. Huang set up the architectural themes with his 5-layer cake blog. On Nvidia's fourth quarter earnings call, Huang said: "As we did with Mellanox, we will extend Nvidia's architecture with Groq's innovations to enable new levels of AI infrastructure performance and value." Huang said the word architecture 23 times on the earnings call.

- Nvidia will likely spend a lot of time talking about how the software stack and CUDA architecture drive the full-stack approach. Nvidia's moat is its CUDA architecture, and Huang's promise is that the company will continually innovate with software engineering and optimization for AI workloads.

- Open source. Nvidia has become the champion of open-source models with its Nemotron family, and new versions have launched. Huang will also reportedly launch a platform for enterprise AI agents that rhymes with OpenClaw. China is the headliner in open-source LLMs, but Nvidia leads US efforts and that work is critical. See: Why enterprise AI leaders need to bank on open-source LLMs

- The next big thing. Nvidia GTC will feature a lot of robotics and the company will sit at the intersection of robots and AI. Real industrial use cases for humanoids will be worth watching.