AI inference costs are going to be a big concern: What's the fix?

AI inference costs are turning up on earnings calls as enterprises try to get a handle on token costs as they ramp AI agents. The bigger question is whether the context layer is the way to keep a lid on AI costs or you just kick it old school with budgeting.

First, AI inference is starting to matter as a budget item. Based on an analysis of earnings call transcripts, it's clear that AI inference and token costs are top of mind for the technology companies most likely to be running AI agents at scale. Here's a sampling of AI inference costs comments on the most recent earnings calls from technology companies, which are probably a quarter or two ahead of the curve.

Cognizant CEO Ravi Kumar said the company is using token metering on projects and at the individual levels. Kumar said:

"As our AI productivity capabilities mature, we are increasingly applying token metering at a project or an individual level to provide early insights into usage patterns, model management and optimization of inference costs. Token metering is a reality, both at a project level and at an individual level. We have token metering for fixed price programs as well as for time and material. For fixed price programs, we have the opportunity to reduce the cost and keep the margin with us."

Kumar said customers are starting to become more sophisticated about metering token costs and analyzing human and digital efforts delivered by AI. Enterprises are finding that they could meter token usage for frontier model companies like Anthropic and OpenAI, but increasingly want to hand off the economics to Cognizant.

Spotify Co-CEO Gustav Söderström said the company is integrating AI across the company and it is "accelerating how we build and deliver at a pace we haven't seen before." Söderström added that Spotify is shipping faster with greater efficiency and lowering the cost per feature, but inference costs are a real budget item. "You can see some of the inference costs behind that acceleration in our OpEx, but we have tremendous confidence in what we're building," he said.

Twilio CFO Aidan Viggiano said the company is seeing AI productivity gains, but he's also watching costs. "I'd say while it's an area of spend that we're watching, our inference costs are manageable," he said.

ServiceNow CFO Gina Mastantuono said on the company's Investor Day that AI reasoning is "less than 10% of our cost to serve." "Customers aren't paying us for tokens. They're paying for a resolved outcome. Reasoning is one input. Workflow orchestration, governance, context, cross-system action, that's where the other 90% of the value and costs sit," she said.

Bill McDermott, CEO of ServiceNow added that "a lot of customers are getting a little bit surprised on the tokenization of models and how that is surprising their budget landscape." McDermott said those unpleasant budget surprises are good for ServiceNow's business and hybrid seat and consumption plans.

Can context layers help with AI costs?

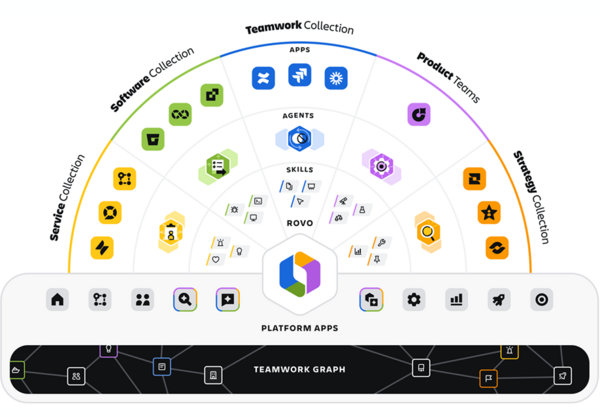

Like ServiceNow, Atlassian sees itself as a fix to AI inference costs. Atlassian CEO Michael Cannon-Brookes recently outlined the company's traction with using its platform as a context layer with the company's Teamwork Graph. In other words, the right context at the right time with the right latency is going to lower your AI inference costs.

"We showed a stat that talked about how we were getting 44% of better quality in terms of output and 48% cheaper rate. You're using 48% fewer tokens to get to that same or better outcome. That's a conversation with your CIO. That's a conversation with your CFO that's going to resonate. And so, I think both things are true," said Cannon-Brookes. "I'm not sure most CFOs really like the token maxing meme, to be honest. I think they are starting to see and pay bills that this magical revenue pile is not coming from nowhere."

Is the context layer the cure for your potentially runaway AI inference and token costs? Perhaps. Enterprise technology buyers will need some way to evaluate the returns on context layers. After all, every vendor--Atlassian, ServiceNow, Boomi, Microsoft, Salesforce, Workday, SAP, Celonis and a bevy of others--has a context layer now.

I could run a dozen slides of various context layers, but here’s Atlassian’s play.

What follows is a framework that could be used to start to evaluate context layers. The working theory here--and it is early--is that a context layer should save you money by getting you the right answers faster and with as few tokens as possible. There won't be one context layer winner, but you should be able to maximize returns without a dozen vendors playing the context game.

There are caveats with boiling context layers down to a metric. For starters, a context layer is really enterprise AI infrastructure. The context layer is there to give AI systems the right business meaning, data, permissions, memory and provenance at the right time with the lowest costs and risk. Ultimately, a context layer has to give AI agents the right answer to act with accuracy and minimal rework.

Context layers also need to deliver precision, recall, a strong hit rate with minimal noise and good sourcing. On the tech level, a context layer needs to be judged on grounded answer relevance, citation accuracy as well as human acceptance rate. Databricks' RetrievalGroundedness judge is worth a look.

With those table stakes checked off, context layers will differentiate on accuracy, system of record adherence, ontology and complete data lineage. The money round for context layers will likely boil down to a scorecard of metrics. This context layer scorecard could include the following:

- Task completion rates.

- Percent of cases resolved without human intervention.

- Reduced rework.

- Cycle time reduction.

- Decision accuracy.

- Token cost and cost per successful task.

- Cost avoidance where AI costs are compared to a baseline human.

The costs boil down to context layer platforms, graph infrastructure, data integration, ontology work, governance and security, observability, model and token costs and maintenance and human review.

What will make or break context layers will be cost per accepted outcome. Other metrics like number of AI queries or tokens processed are merely vanity plays.

Will on-prem or open weight models the answer?

One of the more interesting riffs of the week at Boomi World 2026 was CEO Steve Lucas' take on open models and economics.

The short version:

- Proprietary models at the present moment are sucking you in. Prices will go up. And you'll be locked-in before you know it.

- That concern is partly what drove Boomi's Lunar.dev acquisition. The idea is that Boomi's platform will need to route to the most economically effective models.

- In the end, open weight models win, argued Lucas.

"They cost money to run from a compute standpoint, but they are profoundly less expensive. Do we continue to pay every year 10 times what we did last year for a proprietary frontier model? Or do we start to do what enterprises always do, which is pursue the lowest economic option with the highest rate of return economics? ROI always wins. It will profoundly supersede AI at all times," said Lucas.

- The AI world according to Boomi CEO Steve Lucas

- Boomi to buy Lunar.dev, eyes intelligent prompt and model routing

- Boomi builds out data-to-agent platform, says welcome to headless party

Open weight models are also closing the gap on frontier models. "You'll need enterprise grade intelligent prompt routing that distributes prompts, requests, actions and activities to the right model with the most economically productive outcome," said Lucas.

And don't forget the on-prem play with open weight models. Boomi is emphasizing Distributed Agent Runtime with a focus on hybrid cloud and on-prem deployments. Boomi Distributed Agent Runtime enables customers to run agents locally in their own infrastructure, solves for data residency, resiliency and cloud cost concerns and runs agents behind the firewall.

That announcement was overlooked as was Boomi's partnership with Red Hat. Boomi said it will integrate Boomi Agentstudio with Red Hat AI and create an integrated stack for on-prem deployments. Rest assured open weight and low cost models will be in that Red Hat and Boomi bundle.

Why AI FinOps will explode

Whether it's context layers or platforms such as what was outlined by Boomi at Boomi World 2026, the AI budget is going to be scrutinized and it's a bit fuzzy how the ROI equations will look. Today, the idea that AI agents are going to deliver returns is partly based on blind faith.

Mike McLarty, CTO of Boomi's Innovation Group, said customers are spending heavily on AI on migration, process and development work, but the biggest wild card is token costs. "Token cost is probably the biggest variable and the biggest risk factor," said McLarty.

The biggest challenge for AI costs will be the end of what is a "heavily subsidized market right now for tokens," said McLarty. Subsidized? How can you be subsidized for token costs when you're paying Anthropic 10x more than you were a year ago?

Circular deals in the AI industry between hyperscalers, chipmakers and foundational model players are subsidizing token costs. At some point, that subsidy will run out. The bet by the industry is that enterprises will be locked in before the costs really surge.

"Who knows where the token costs are going to go, but we know it's going to be a big line item for CFOs," said McLarty.