Google Cloud presses full-stack AI edge with new TPUs, Agentic Data Cloud, Gemini Enterprise Agent Platform

Google Cloud is making its stack more specialized across the board as it launched two 8th-generation TPUs aimed at model training and inference, outlined a data platform that will compete with Snowflake and Databricks and broadened its platform for AI agents across industries and use cases.

At Google Cloud Next 2026, the company's broad theme is to move enterprises from merely chatting with models to building full-blown agentic enterprises. Another key theme: Google plans to be the vertically integrated AI stack of choice.

"The early versions of AI models and systems were really focused on answering questions that people had and assisting them with creative tasks," said Google Cloud CEO Thomas Kurian in an analyst briefing. "Now we're seeing as the models evolve, people want to delegate tasks and sequence of tasks or workflows to agent and then being able to turn around and use a computer, use all of GCP and Workspace."

- Merck inks Google Cloud agentic AI deal worth up to $1 billion

- The big picture behind Ask Macy’s, a Gemini powered AI agent

Google Cloud is also adding a few infrastructure twists to optimize for AI inference workloads, which AI agents depend on and which can quickly blow through token budgets. Google is also using its AI infrastructure to be more efficient with power and memory.

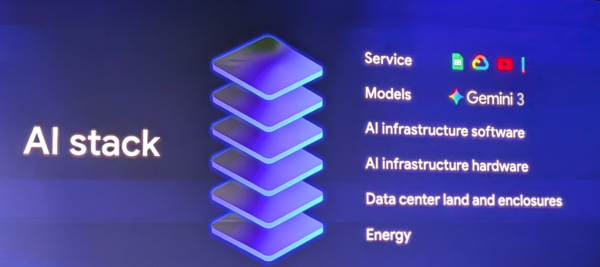

The goal for Google Cloud is clear: be the hyperscale cloud provider that offers an integrated first-party AI stack from infrastructure to data to AI agent production.

Here's what was announced at Google Cloud Next and a few thoughts.

8th Gen TPUs for training and inference

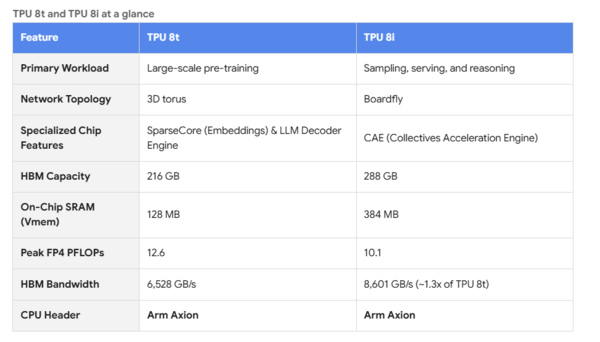

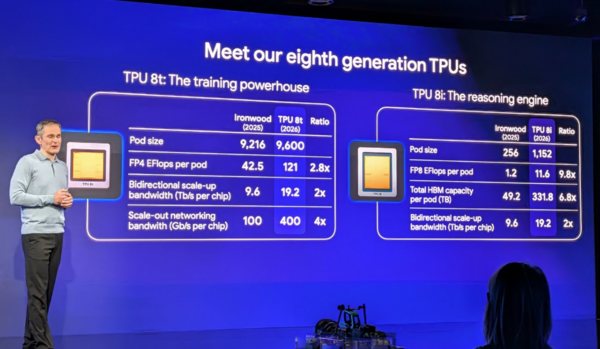

Google Cloud launched two versions of its 8th generation TPUs. The TPU 8t is aimed at training and provides TPU Direct Storage and Google Cloud's Virgo Networking. Virgo Network is Google Cloud's new AI-optimized network that connects either Nvidia Vera Rubin NVL72 systems or TPU 8t superpods into massive supercomputers for training frontier models.

Google Cloud's TPU 8i features Boardfly, a new architecture to scale agents, and Collectives Acceleration Engine, which optimizes the chip and lowers latency.

For scaling CPUs, Google Cloud's Axion Arm-based processor will power N4A compute instances. Google Cloud also outlined high-performance storage with a Managed Lustre 10T offering and Rapid Storage for inference.

Google Cloud also launched an AI-optimized networking fabric with 250K-node RDMA virtual private clouds, RDMA Direct Storage and petabit interconnect.

Kurian also noted that Nvidia was a strong partner and Google Cloud launched Nvidia Vera Rubin instances with Virgo Networking to run the largest AI clusters.

- Anthropic to use Google Cloud TPUs as it diversifies capacity

- Anthropic inks deal with Google, Broadcom for TPU capacity

- Alphabet plots massive CapEx increase for 2026

These components make up Google Cloud's next-gen AI Hypercomputer. Google Cloud also has consumption models for long-running batch workloads, on-demand and spot pricing. The new TPUs and infrastructure advances create gains in training and inference. Kurian said TPU 8t is 3x better than the previous generation based on flops per watt. TPU 8i is "an 80% improvement in the amount of memory you get with SRAM."

"We felt that people would want systems that were more optimized for training, and separately, systems that were more optimized for inference," said Kurian. "We also designed them to be super-efficient in terms of how much power they use, because we felt that power efficiency would become a constraint as people continue to scale both training and inference."

Key points about additions to AI Hypercomputer:

- TPU 8t uses Inter-Chip Interconnect technology to scale up to 9,600 TPUs and 2 PB of shared high-bandwidth memory in one superpod.

- TPU 8i can use the Boardfly topology to directly connect 1,152 TPUs in one pod.
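
For a rough sense of scale, the sketch below works out the average high-bandwidth memory per chip implied by the TPU 8t superpod figures, reading the chip count as 9,600. It assumes decimal units (1 PB = 10^15 bytes) and an even split across chips, neither of which Google Cloud has stated; the function name is purely illustrative.

```python
def hbm_per_chip_gb(total_hbm_pb: float, chips: int) -> float:
    """Average HBM per chip in GB, assuming decimal units and an even split."""
    total_bytes = total_hbm_pb * 10**15   # assumption: 1 PB = 10^15 bytes
    return total_bytes / chips / 10**9    # convert bytes to GB (10^9 bytes)


# Figures quoted for one TPU 8t superpod: 2 PB shared HBM, 9,600 TPUs.
per_chip = hbm_per_chip_gb(2, 9_600)
print(f"Roughly {per_chip:.0f} GB of HBM per TPU 8t")
```

Under those assumptions the superpod works out to a little over 200 GB of HBM per chip. That is an inferred average, not a published specification.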

What it means: Google Cloud’s competitive advantage is its TPUs, and if the company were ever to sell its silicon to third parties, it would be a fierce Nvidia rival. Simply put, everything starts with the TPU.

The vertical integration play

At a launch event for Google's next-gen TPUs, Amin Vahdat, SVP and Chief Technologist of AI and Infrastructure at Google, made the case for the company's vertically integrated AI stack strategy.

"We have a unique opportunity to really solve the problem and get vertically integrated. We don't just insert at one layer of the stack. We ensure that everything is integrated end to end, for the highest levels of efficiency, the highest levels of reliability and the highest layers of security," said Vahdat.

Vahdat also argued that TPUs were a requirement back in 2013 when they originated to handle workloads unique to Google. Going forward, the need for specialization will become the norm for infrastructure and computing stacks overall. That need for specialization explains why Google launched two TPUs focused on training and inference with completely different specifications.

"These chips were both custom designed from the ground up for training and inference separately. These are not simple derivatives of one another," said Vahdat.

Vahdat added that CPUs are likely to make a comeback because they are useful for running AI agents. "The age of specialization is going to continue. You have to specialize if you're really going to go after these hard problems," said Vahdat.

That specialization for AI workloads applies to every segment of Google's AI stack.

Data cloud advances aimed at Databricks

Google Cloud launched its Agentic Data Cloud that includes a cross-cloud lakehouse with zero-copy access to Iceberg data across Google Cloud, AWS, Microsoft Azure and SaaS applications.

The effort also includes a knowledge catalog that builds a semantic graph over structured and unstructured data and a new Lightning Engine for Spark that Google Cloud says offers twice the price-performance.

Google Cloud also said the Agentic Data Cloud includes Gemini-powered notebooks and a deep research agent that works across structured and unstructured data.

"A big shift we see is how models are being now used to execute tasks on behalf of people," said Kurian. "In the first step, agents need to access context, which in turn requires them to understand the data that the company has."

Google Cloud said that its Lightning Engine for Apache Spark has performance parity and 2x price-performance compared to Databricks Photon on AWS S3 for large datasets.
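
To make that claim concrete, here is a minimal sketch of what "performance parity and 2x price-performance" would mean for a single job, assuming price-performance is defined as work done per dollar. The baseline cost and runtime are hypothetical placeholders, not benchmark results, and the function is illustrative only.

```python
def compare_job(baseline_cost: float, baseline_hours: float,
                perf_ratio: float, price_perf_ratio: float) -> tuple[float, float]:
    """Return (hours, cost) for the same job on the challenger engine.

    perf_ratio: challenger speed relative to baseline (1.0 = parity).
    price_perf_ratio: challenger work-per-dollar relative to baseline.
    """
    hours = baseline_hours / perf_ratio      # same work, scaled by relative speed
    cost = baseline_cost / price_perf_ratio  # same work, scaled by work-per-dollar
    return hours, cost


# Hypothetical baseline job: $100 and 4 hours on the incumbent engine.
hours, cost = compare_job(100.0, 4.0, perf_ratio=1.0, price_perf_ratio=2.0)
print(f"{hours:.1f} hours, ${cost:.2f}")
```

Read this way, the claim amounts to the same runtime at about half the cost. Actual savings would depend on workload, dataset size and pricing terms.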

Enterprises using Agentic Data Cloud include Flipkart, Lowe's, American Express and Vodafone.

What it means: When Google Cloud Next 2026 is analyzed, it’s possible that Agentic Data Cloud will be the most disruptive announcement. Yes, Google Cloud had the parts of its data cloud in place already, but its approach is a direct shot at Databricks. The move to work across multiple clouds makes it more of a competitor. The wild card is whether AWS and Microsoft Azure play along with what Google Cloud announced.

Wiz integration

Google Cloud said it will combine Google's Threat Intelligence and Security Operations with Wiz's Cloud and AI Security Platform to create the Agentic Security Operations Center, which is designed to prevent, detect and respond to threats. Kurian said the combined platform will include Gemini-powered threat intelligence that will scan the dark web.

"We're introducing new Gemini powered threat intelligence agents that use Google's expertise to scan the dark web and understand threats and prioritize alerts for you," said Kurian. "Our dark web intelligence and threat intelligence agents are incredibly accurate, detecting over 98% of the issues on the dark web accurately."

In addition, Google Cloud is launching agentic AI security operations for alert triage, investigation, detection and threat hunting. Google and Wiz will create red, blue and green agents for continuous testing and remediation.

The overall goal is to secure agentic workloads via multiple components and Cloud Armor, which protects models from internal and external threats. Agents are governed for identity and policies and audited.

What it means: Wiz rounds out an already strong security stack from Google Cloud and there’s potential to take market share. However, the cybersecurity industry is in flux.

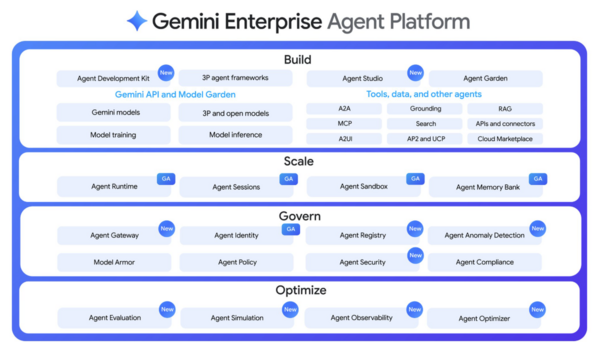

Gemini Enterprise Agent Platform evolves

With last year's launch of Gemini Enterprise, Google Cloud was early in its efforts to become a platform to build, orchestrate and govern agents. At Google Cloud Next 2026, Gemini Enterprise Agent Platform takes more of a full-stack approach that includes systems connectivity, registries for skills, tools and agents, universal context, agent engine runtime, governance and marketplace.

Gemini Enterprise Agent Platform includes multiple surfaces ranging from personal accounts, Workspace and Gemini Enterprise to any third-party application, as well as Agent Engine, universal context, observability and various connections via MCP.

The platform comprises multiple Google Cloud services including Agent Studio, Agent Registry, Agent Identity and Agent Observability.

Google Cloud also noted that Gemini is embedded across Google Workspace including Gmail, Meet, Chat, Docs, Slides and Sheets. Ultimately, Chat becomes the hub for interacting with people and agents. As for the broader platform, Workspace becomes a semantic layer across documents, meetings and files.

At the base of the agent platform there are a variety of core layers to build enterprise applications. Gemini Enterprise Application includes:

- A core platform with Gemini 3.1 model advances, Agent Builder updates and an advanced memory bank.

- The ability to leverage Anthropic models.

- Context via Workspace and Knowledge Engine as well as personal and team intelligence.

- Tools with universal MCP connectors, pre-packaged connectors and grounding.

- Projects with collaboration tools and Canvas Mode to create and collaborate on artifacts.

- Notification and inbox to manage a taskforce of agents that do the work and notify you.

What it means: Gemini Enterprise Agent Platform now looks fully formed. What remains to be seen is whether enterprises favor hyperscalers to build and manage agents or one of their go-to vendors like Salesforce or ServiceNow.

Gemini Enterprise for Customer Experience

Google Cloud also updated Gemini Enterprise for CX to manage a suite of agents and provide merchant insights with Agent Assist and Customer Experience Insights.

Going forward, Kurian said Gemini Enterprise for CX will also hone industry-focused workflows to cover retailers, restaurants and financial services.

The platform will also have connections to point-of-sale, sales, service and other systems.

What it means: The Gemini Enterprise for Customer Experience announcements will be overshadowed, but are key to the Google Cloud ground game. The move to specialize by industries and use cases is a smart one.

Building out the ground game

Google Cloud is spending $750 million to develop its partner network for agentic AI as it announced a bevy of partnerships with integrators as well as enterprise software giants such as Salesforce and SAP.

The bottom line is Google Cloud is building out its ground game for AI agent deployment. Key announcements at Google Cloud Next include:

- Google Cloud will spend $750 million to accelerate customer deployments, AI prototyping and the use of Google forward-deployed engineers (FDEs).

- Deloitte launched a Google Cloud Agentic Transformation practice and Accenture expanded its Google Cloud efforts on agentic AI. BCG and Google Cloud also expanded a partnership as did McKinsey.

- Salesforce and Google Cloud said their agents will be able to collaborate with context and end-to-end workflows across their platforms.

- SAP and Google Cloud outlined a multi-agent integration pact with Gemini Enterprise and SAP’s Joule Agents. Customers can deploy Joule Agents in SAP CX Solutions to build, launch and optimize marketing campaigns. Gemini Enterprise acts as a central hub for agents to take action across SAP and Google Cloud platforms.