AWS' Kiro launches autonomous agents for individual developers

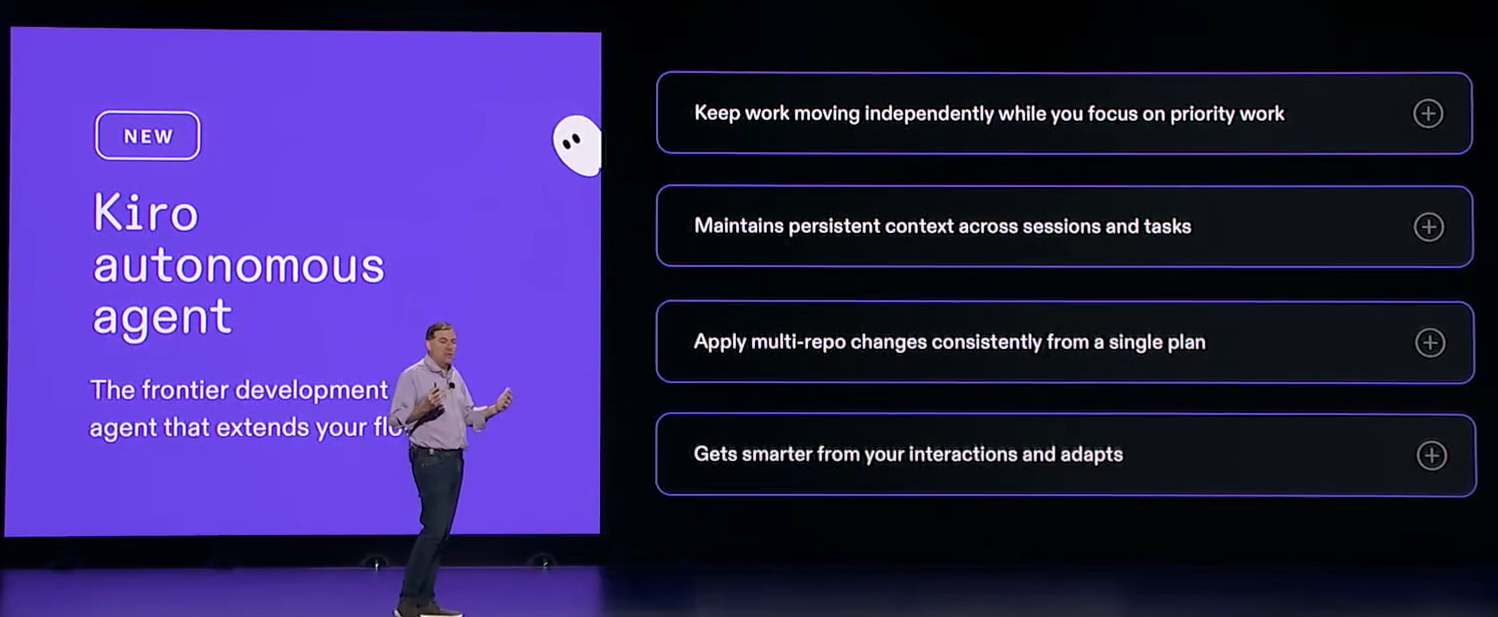

Kiro, Amazon Web Services' next-gen AI developer platform, is launching an autonomous agent in preview for individual users, with one agent for each developer, integration with GitHub and issue assignments.

Kiro, which recently became generally available, has been expanding tools and features on a two-week cadence since being introduced in July as an agentic AI integrated development environment. Amazon is standardizing its developers on Kiro.

Speaking during a re:Invent 2025 keynote, CEO Matt Garman said:

"We really see the potential for the entire developer experience, and frankly, the way that software is built to be completely reimagined. We're taking what's exciting about AI and software development and adding structure to it. This is why we launched Kiro, the agentic development environment for structured AI coding."

More from re:Invent 2025

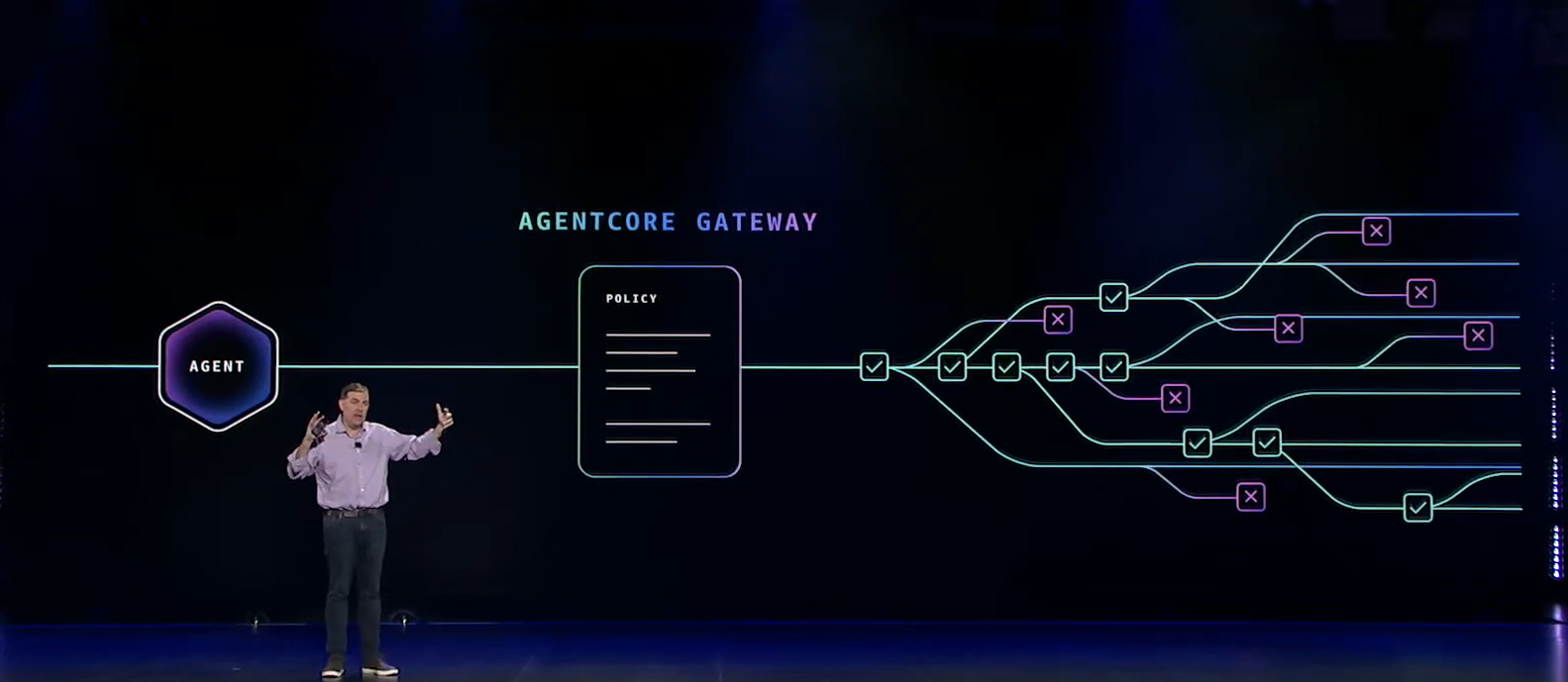

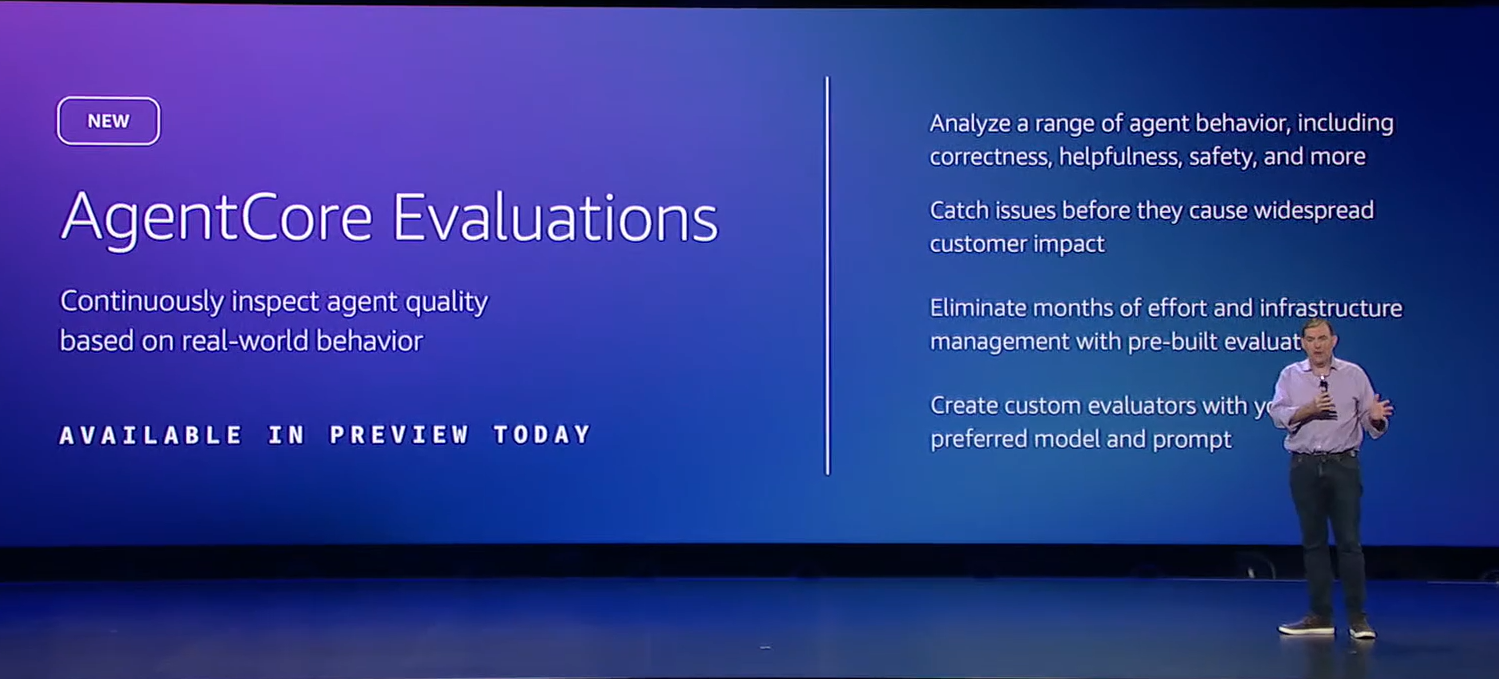

- AWS adds AI agent policy, evaluation tools to Amazon Bedrock AgentCore

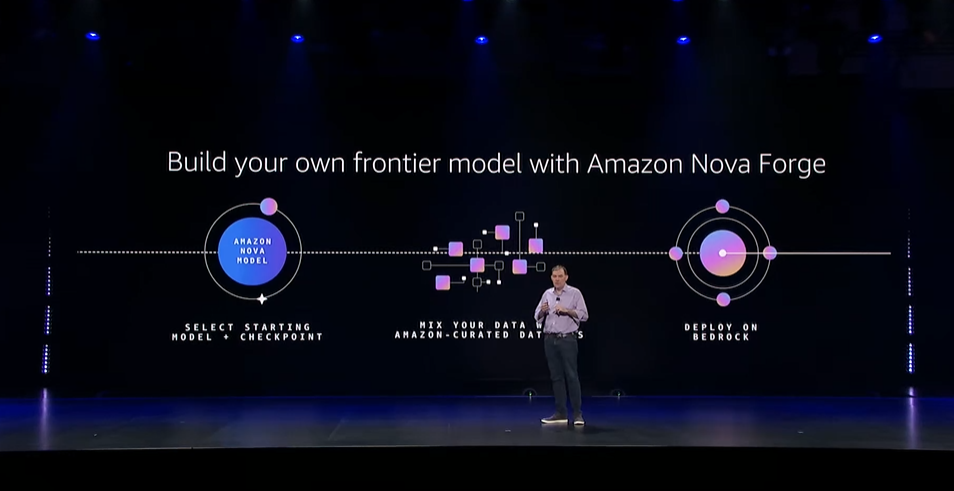

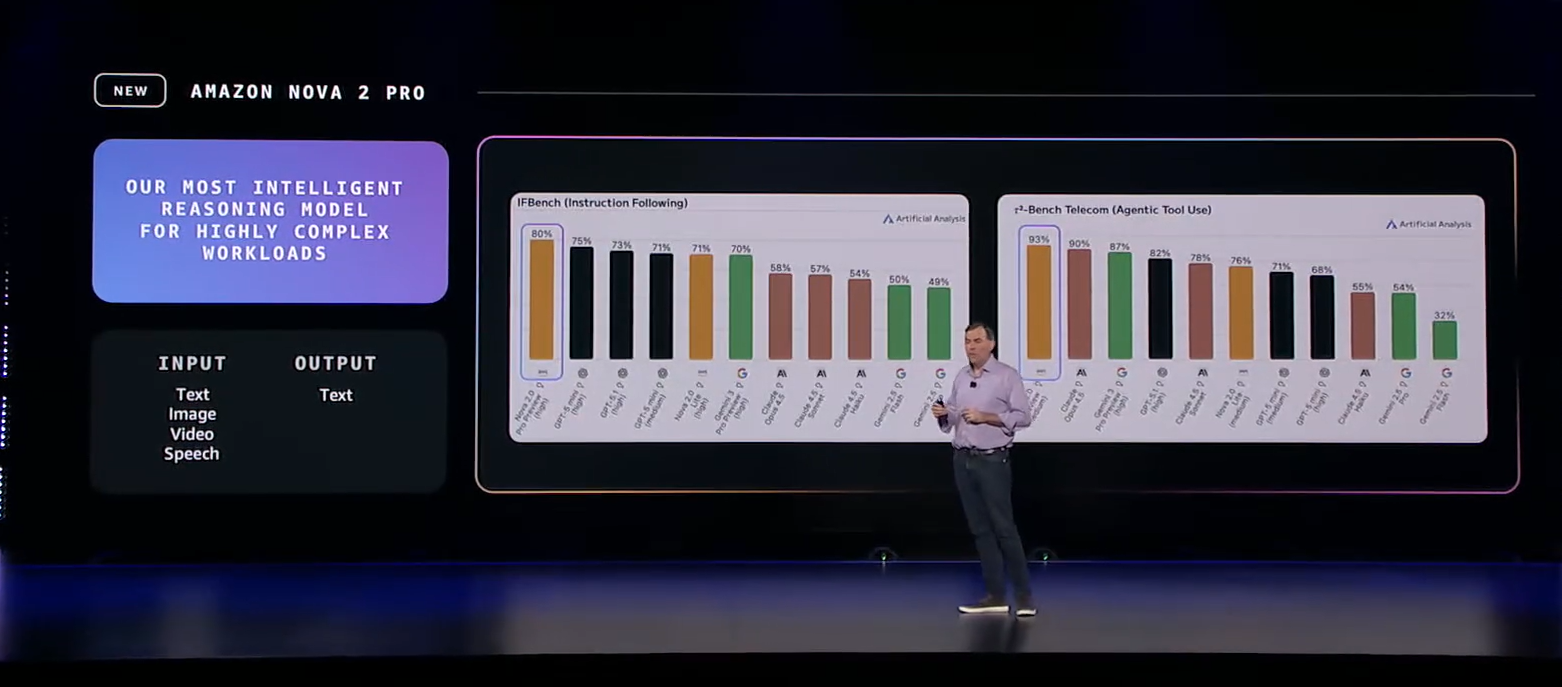

- AWS launches Amazon Nova Forge, Nova 2 Omni

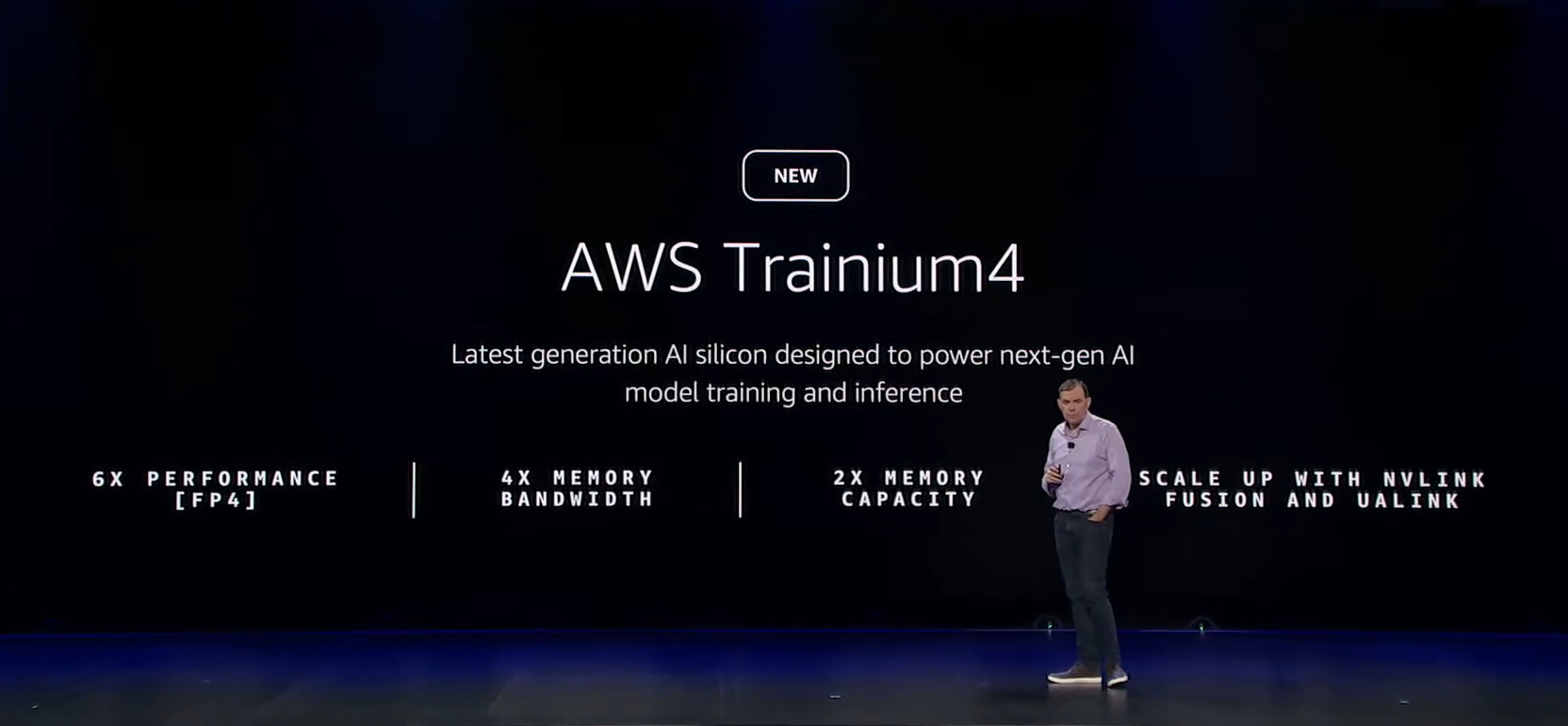

- AWS launches AI factory service, Trainium 4 with Trainium 4 on deck

- AWS Transform aims for custom code, enterprise tech debt

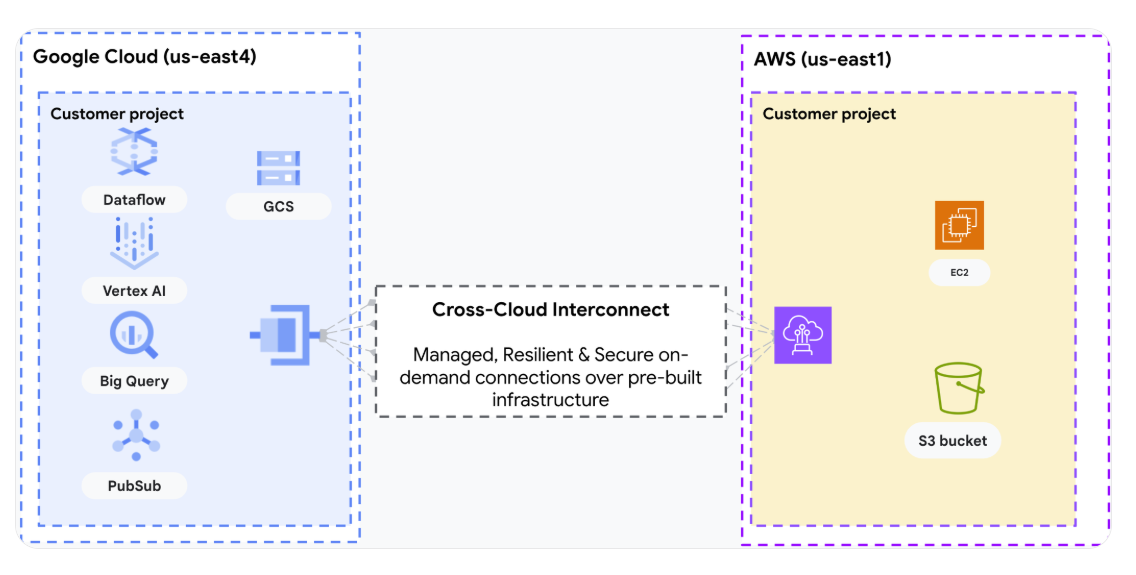

- AWS, Google Cloud engineer interconnect between their clouds

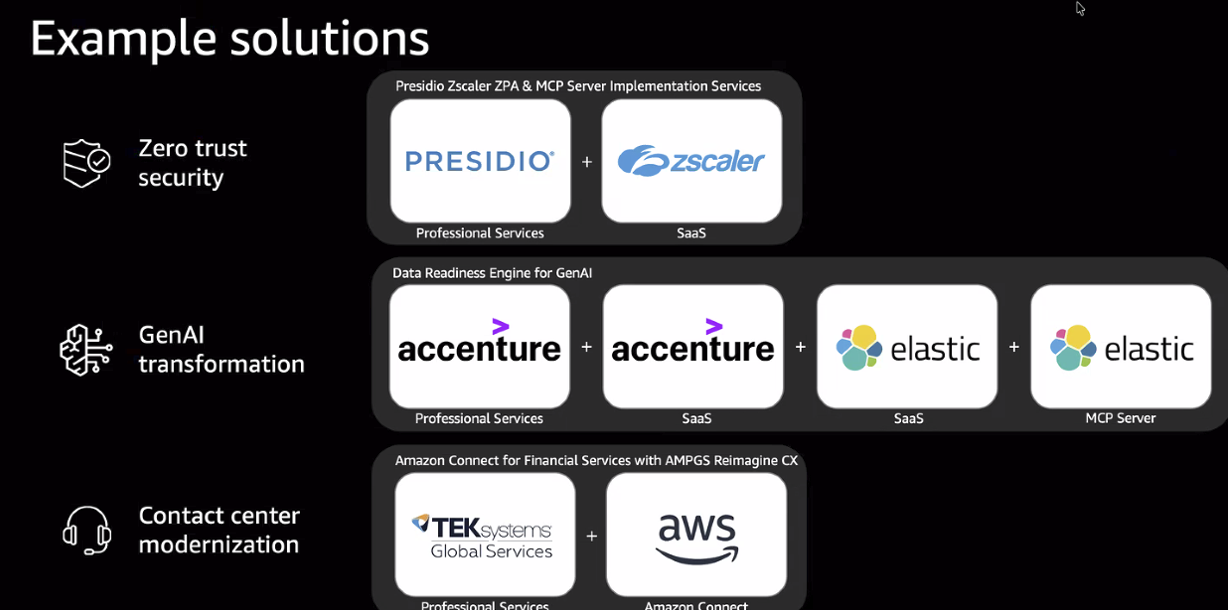

- AWS Marketplace adds solutions-based offers, Agent Mode

With individual accounts and an autonomous agent that learns how you develop, Kiro is getting closer to its goal of moving developers from prompt to prototype faster. Key points about the Kiro autonomous agent, announced at AWS re:Invent 2025, include:

- The agent is designed for persistent operation beyond individual coding sessions.

- This AI-powered companion can manage work across multiple repositories and tools like GitHub and Jira.

- Kiro’s autonomous agent can research an implementation approach to a new feature for an existing code base.

- It learns from user preferences, orchestrates sub-agents for specialized tasks, and maintains context throughout projects. Early use cases range from bug triage and multi-repo refactoring to maintenance campaigns, tasks that are often too routine or time-consuming for human developers.

AWS' Kiro autonomous agent is part of a broader plan to offer a unified software development platform with one interface covering everything from planning to deployment. Nikhil Swaminathan, Kiro’s product lead, said the autonomous agent will be able to spin up sub-agents, complete tasks and judge quality in order to move work to production.

Garman said the autonomous agent in Kiro is part of what AWS calls a frontier agent. Frontier agents can carry out longer projects autonomously by operating in the background.

The idea is that the autonomous agent in Kiro will move from tasks to being able to give feedback to a developer. “Just being able to have feedback come through and making it very personalized will be a win,” said Swaminathan. “We’re launching with one agent per developer and will have a private beta. We’ll be expanding more from there.”

"This is AWS' first foray into the autonomous AI world when it comes to its largest user population: developers. Good to see the focus, it is likely to increase developer velocity. Leaders of developers and CxOs are now waiting (desperately) for an SDLC-focused autonomous AI offering from AWS," said Holger Mueller, a Constellation Research analyst.

Kiro autonomous agents for teams will launch eventually.

Kiro is also getting Powers, extensions that let developers quickly augment a Kiro agent for workflows including design integration, hosting and data handling. Powers gives agents only the tools and context they need. Powers is being launched with partners such as Figma and Netlify.

“The concept of a power is to give developers the ability to extend the core agent. Often what happens with frameworks and tools and technologies is that people keep shipping improvements and the model itself is not trained to understand, there's a lot of trial and error with figuring out how to configure the agent rules in the right way to get the output that you need,” said Swaminathan.

These re:Invent 2025 announcements build on new Kiro features announced in November alongside general availability. Those additions include:

- Kiro has added Anthropic's Opus 4.5 model.

- The new version of the Kiro IDE can measure whether code is up to specifications with property-based testing. Kiro will go into a project's specs, extract properties that indicate how a system should work and then test against them.

- The Kiro agent is available in your terminal. Developers can use the command line interface (CLI) to build features, automate workflows, analyze errors and trace bugs in multiple terminals. Kiro CLI works with the same steering files and Model Context Protocol (MCP) settings that are in the Kiro IDE.

- Kiro Teams. Kiro is available for teams via the AWS IAM Identity Center with support for other identity providers on deck.

- A startup program for Kiro Pro+. Startups that have raised funds up to Series B can apply for AWS credits for Kiro until Dec. 31.
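
For readers unfamiliar with the property-based testing mentioned in the list above, here is a minimal sketch of the general technique in plain Python. It is not Kiro's implementation or output. The function `dedupe_keep_order`, the helper `check_properties` and the three properties are hypothetical examples, standing in for rules a spec might state, such as "the result contains no duplicates." The idea is to check those rules against many randomly generated inputs rather than a handful of hand-picked cases.

```python
import random


def dedupe_keep_order(items):
    """Hypothetical function under test: drop repeats, keep first occurrences."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def check_properties(func, trials=200, seed=0):
    """Run func on random integer lists.

    Returns the first input that violates a property, or None if all pass.
    """
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    for _ in range(trials):
        data = [rng.randint(-5, 5) for _ in range(rng.randint(0, 20))]
        out = func(data)
        # Properties a spec might state for a de-duplication step:
        no_duplicates = len(out) == len(set(out))  # nothing appears twice
        same_members = set(out) == set(data)       # nothing lost or invented
        idempotent = func(out) == out              # a second pass changes nothing
        if not (no_duplicates and same_members and idempotent):
            return data
    return None


if __name__ == "__main__":
    print(check_properties(dedupe_keep_order))      # correct implementation
    print(check_properties(lambda xs: list(xs)))    # buggy: never removes repeats
```

A correct implementation passes every trial, while the deliberately broken one is caught as soon as a random list contains a repeated value. Dedicated libraries add features such as shrinking a failing input to a minimal case, which this sketch leaves out.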