Humanoid robots near inflection point courtesy of AI

Editor in Chief of Constellation Insights

Constellation Research

Larry Dignan is Editor in Chief of Constellation Insights at Constellation Research, where he leads editorial coverage focused on enterprise technology, digital transformation, and emerging trends shaping the future of business. He oversees research-driven news, analysis, interviews, and event coverage designed to help technology buyers and vendors navigate complex markets with clarity and context. ...


AI models are quickly expanding into the physical world and multiple modalities, and a humanoid robot inflection point may soon follow.

That's the gist coming out of Nvidia's GTC 2025 conference and recent developments. The arrival of foundation AI models that apply to robotics means enterprises need to start thinking through the key concepts. Nvidia CEO Jensen Huang told investors during GTC that "the business opportunity is well upstream of the robot."

Huang said: "Before you have a robot, you have to create the AI for the robot. Before you have a chat bot, you have to create the AI for the chat bot. That chat bot is just the last end of it. And so, in order for us to enable the world's robotics industry, upstream is a bunch of AI infrastructure we have to go create to teach the robot how to be a robot. Now, teaching a robot how to be a robot is much harder than in fact, even chat bots, for obvious reasons, it has to manipulate physical things, and it has to understand the world physically. We have to invent new technologies for that, the amount of data you have to train with is gigantic. It's not words, it's video, it's not world, it's not just words and numbers, it's video and physical interactions, cause and effects, physics and so. So that's the new adventure we've been on for several years, and now it's starting to grow quite fast."

Yes, folks. Nvidia is looking for its next big thing, and robotics may be it. Nvidia has launched Cosmos, a family of customizable physical AI world models. 1X, Agility Robotics, Figure AI, Foretellix, Skild AI and Uber are adopting Cosmos, which now includes Cosmos Transfer, a model that can ingest video inputs such as segmentation maps, depth maps and lidar scans to create photoreal video outputs. The dream is that models will know the ground truth needed to train robots.

Nvidia also followed up with Nvidia Isaac GR00T N1, a foundation model for generalized humanoid reasoning and skills. In addition, Nvidia announced the Isaac GR00T Blueprint and Newton, an open-source physics engine being developed with Google DeepMind and Disney Research. Nvidia also plans to release Jetson Thor, a computing platform designed to power humanoid robots.

For good measure, Hyundai said it will work with its Boston Dynamics unit to "expand the U.S. ecosystem for robotics components and establish a mass-production system" and partner with Nvidia on AI for robotics. Hyundai's total investment for expanding robotics, AI and autonomous driving in the US is $6 billion. Google DeepMind launched Gemini Robotics, a Gemini 2.0 model designed for robotics. Robotics developments have popped up repeatedly at technology conferences. At AWS re:Invent 2024, Amazon CEO Andy Jassy talked about the 750,000 robots in fulfillment centers that are leveraging generative AI.

The continuum ahead runs from AI to agentic AI to an ecosystem that extends into robotics, starting with autonomous vehicles and ultimately reaching humanoid robots.


Is it too early to start thinking about humanoid robots? Probably not. If you're already thinking through agentic AI and its implications for your company, humanoid robots are the next step on the digital labor continuum.

Deloitte sees humanoid robotics ultimately scaling in 7 to 10 years with experiments starting today. A panel at GTC 2025 highlighted the following timeline.

That was followed by a range of considerations for planning today and executing in the years ahead.

Although Huang's keynote kicker featured a small robot that could react to humans and follow instructions via its embedded AI models, there are real implications to ponder. If you thought generative AI was disruptive, robotics will also have a wide impact. Here's a look at what you need to know.

Humanoid robotics are a collection of technologies. Tim Gaus, Principal and Smart Manufacturing Business Leader at Deloitte, noted that humanoid robots will share features of humans, but be powered by AI that makes them more functional.

"It's not just about the robot itself. It actually takes an entire ecosystem to make this come to life," said Gaus, who explained that a robot operating system governs movement, while AI enables the robot to be trained and to work with other robots. "It's not going to be just one robot or one humanoid that's out there. It's actually the interaction model between classic robots, non-humanoid, humanoid, multi humanoids coming together and the that integrates the entire enterprise itself."

These technologies are all coming together and enabling experimentation ahead of real value and actual use cases for humanoid robots, which will be software-defined.

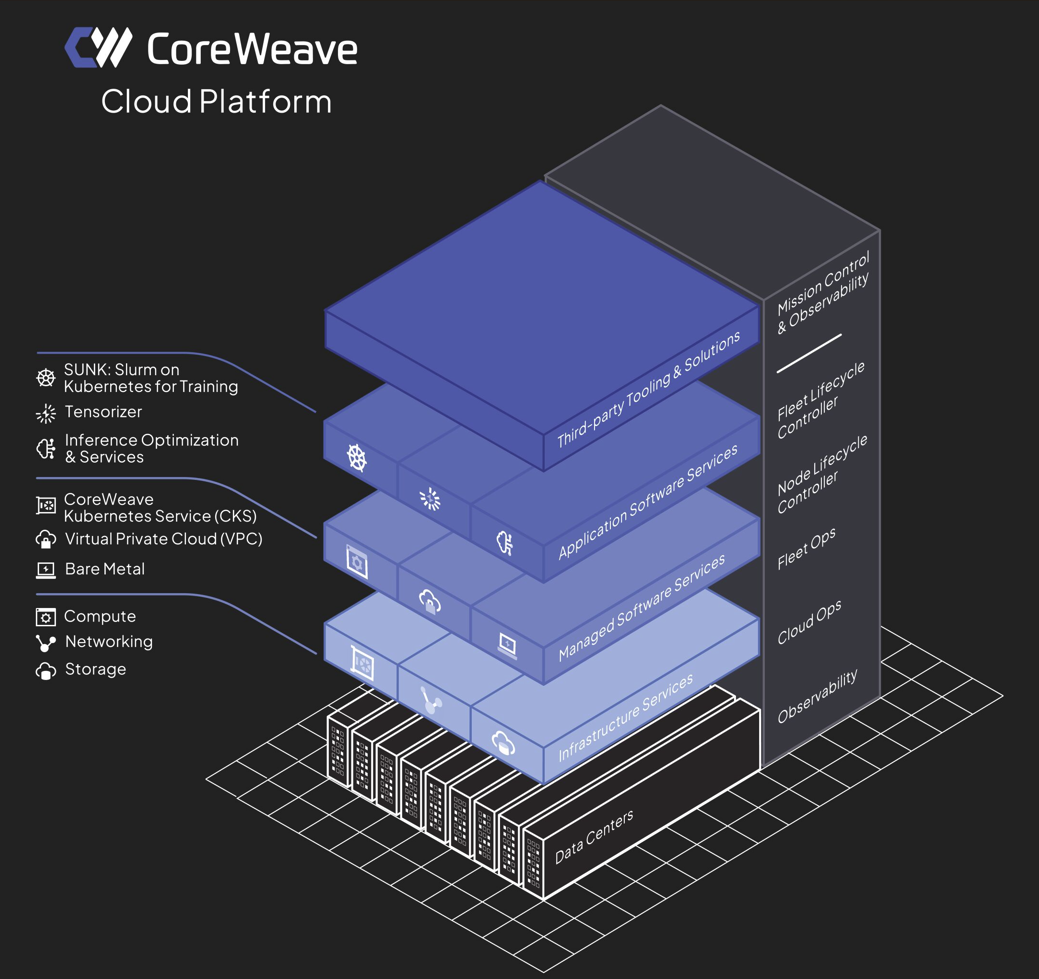

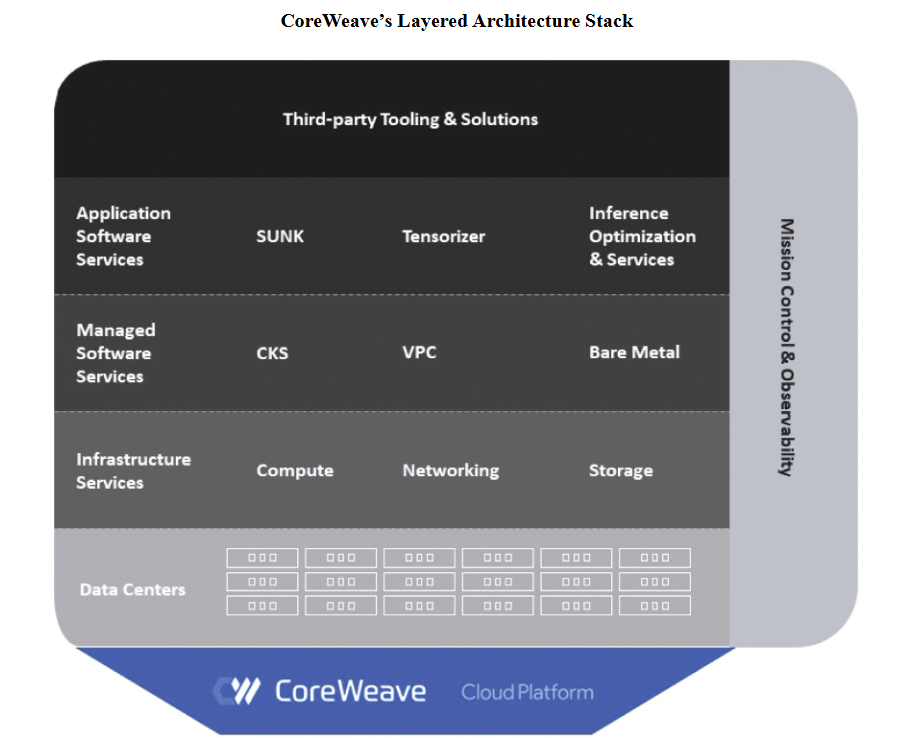

The stack will look like this:

- Robot mechanical form (hardware).
- Robot operating system (open source and proprietary).
- Robotics training (Nvidia, OpenAI, Google DeepMind and model providers).
- Fleet management.
- Enterprise integration via enterprise technology vendors.

Humanoid robots will collaborate with humans more than replace them. We're already hearing the agentic AI spin on digital labor and how it will make humans more productive. But remember, agentic AI is going to mean that you need to hire fewer people. The thinking in the humanoid robot crowd is that these devices will fill roles humans don't want to do anyway.

Huang said the world is already short of "10s of millions of workers" and that "we need lots of robots."

Gaus noted that humanoid robots are "hitting on one of the most important challenges that we have in this space, which is we just don't have enough people who want to do these types of jobs." Gaus was speaking about manufacturing, logistics and a host of other industries. The future of work will include a lot of robots.

Orchestration will be everything. Tomer Gal, Managing Director, NVIDIA Alliance, AI and Accelerated Computing at Deloitte, said fleet management and orchestration will be a big challenge. Cybersecurity, communication interfaces, orchestration and upgrades across thousands of robots will all be issues. Meanwhile, enterprises will need to transform their systems just as they will for generative and agentic AI. In fact, the data and AI work done today can enable humanoid robots in the future.

"I think we should start now because we're not going to catch up when humanoid robots are all around us. We need to catch up already now, meaning there is the aspect of the simulation, the reinforcement learning, all of these technologies in place when we have the humanoids," said Gal.

Edge computing integration with core enterprise systems will be essential. Franz Gilbert, Global Growth Leader for Human Capital Ecosystems and Alliances at Deloitte, said enterprises need to think beyond just training a humanoid robot to pick up a can. Humanoids will be able to pick up that can and tie into the inventory system. "Every client's infrastructure, tech stack and environment will be different. How do you train the robot and what does the integration look like?" asked Gilbert.

The future of work. As with AI, enterprise value will require a lot of culture and change management. With humanoid robots, Gilbert noted that "over 87% of the roles will be redesigned in order to take advantage of what a humanoid robot can do."

Like AI and automation, the big decision revolves around where you insert the human into the process. Gilbert noted that complex decision-making will rest with humans. Emotional intelligence will also require humans. "There's also a social interaction piece. Humanoid robots can't read facial expressions at this point," said Gilbert, who added that tasks that need to be done quickly may be suited for humanoids, but humans will need to handle EQ-heavy items. "We're going to have to start dividing those tasks within roles and redesigning them."

For instance, think of healthcare and humanoid robots making patient checks instead of nurses. Think of the guardrails required as humanoid robots are connected to electronic health records. How much do these robots need to look like humans? Do they scale?

These humanoid robotic roles will vary by industry. Humanoid robots will apply to multiple industries, but the markets that will develop faster will be manufacturing, industrial, logistics, warehousing, retail and hospitality.

Humanoid robots will become a geopolitical issue. Speaking on DisrupTV, Constellation Research CEO Ray "R" Wang noted that humanoids are going to be a geopolitical issue just like AI and energy, along with the tariffs that go with those categories. Wang said: "This is a game about AI, energy and humanoids. China doesn't care if the population dynamics go in reverse. In fact, they're going to replace everything with humanoids and they have the supply chain. If you're getting a $1,000 robot from China vs. a $5,000 robot from the US and you're on the battlefield you're going to lose every time on the US side. You're seeing protective tariffs come out against the humanoid supply."

Crawford Del Prete, President of IDC, said China could lead on humanoid robots. "When you get into places like China, you've got a very different legal landscape and amount of data that a humanoid can actually record," he said. "And so, they're able to make different kinds of decisions with those humanoid robots, because they're collecting a lot more data. China could end up pretty far ahead here."

