Google Cloud, AWS, Microsoft Azure: The AI vertical integration race

Amazon Web Services’ launch of the Connect family of business applications and Amazon Quick, the Google Cloud Next 2026 announcements and hyperscale cloud first-quarter results all point to one thing: AI vertical integration is in.

Cloud providers are racing to create a full stack of AI-optimized systems and to shore up weak spots. AWS has dominated in infrastructure as a service and has moved up the stack in recent years. Amazon Connect, now a family of business applications focused on Customer AI, Talent, Decisions and Health, targets areas where AWS can differentiate and connect enterprise applications in a way that rhymes with Anthropic's Claude Cowork.

The AWS move shores up the top of what it hopes is an integrated cloud and AI stack.

Google Cloud Next featured integration and full AI stack themes at nearly every turn. Google Cloud, with strength in custom silicon via TPUs, AI models, platforms, applications and services, makes the best case for a full AI stack.

"At Google, we actually have a unique opportunity to really solve the problem and get vertically innovated. We don't just insert at one layer of the stack. We ensure that everything is integrated end to end, for the highest levels of efficiency, the highest levels of reliability and the highest layers of security," said Google Cloud SVP and Chief Technologist Amin Vahdat.

Google Cloud CEO Thomas Kurian emphasizes that the company has an integrated AI stack that’s also open. "Google Cloud is the only provider to offer first party solutions across the entire stack," said Kurian, who added that the company also fosters open connections to other infrastructure and data. See: Google Cloud CEO Kurian on AI model evolution, CPUs importance and being open

AWS CEO Matt Garman emphasized a similar theme in relation to the company's partnership with OpenAI, which will include its models on Amazon Bedrock and other services. "Companies always want the best options," said Garman. "When customers are looking at their AI applications, they want the broadest set of choices."

Microsoft is coming at this vertically integrated AI stack trend from the other end, with dominance in applications and a plan to connect them all with AI agents. Microsoft's Azure infrastructure layer is strong and growing, but it lacks maturity in custom silicon relative to Google's TPUs and AWS' Graviton, Inferentia and Trainium. Both Google and AWS would have chip businesses that rival companies like AMD if spun out.

Nevertheless, Microsoft has its own vertically integrated AI stack story that revolves around context and process. Context layers like Microsoft's WorkIQ are designed to differentiate. On Microsoft's third quarter earnings call, CEO Satya Nadella said: "WorkIQ now spans more than 17 exabytes of data, growing 35% year over year. The liquidity and freshness of that data matters. That context is getting even richer as Copilot adoption grows."

AI stack strengths and weaknesses

Turns out every cloud wants to be a complete AI stack and integrated in a way that can go horizontal or vertical. The big thing to note is that each hyperscaler is coming to a complete AI stack from different directions. Here's a look at the moving parts.

- AWS: The core integration thesis revolves around owning the infrastructure (S3, EC2, Lambda) and exposing modular services including Amazon Bedrock, SageMaker and AgentCore as well as Kiro so it is the best place to build and run anything. AWS is strongest in infrastructure, primitives, storage, databases, cloud operations and cost/performance. The weakness in the vertical integration story is the business application and productivity layer. AWS also doesn't own the end-user workflows. That business app story is what the What's Next with AWS event was all about. Can AWS make AI-led workflows as easy and native as Google Cloud and Microsoft? AWS will need to reinvent the app layer since it lacks any ERP, CRM, collaboration or productivity footprint.

- Google Cloud: The big theme for Google Cloud is that it has been an AI and machine learning company from the start and has developed its stack accordingly. It has designed its AI stack from silicon to model to data platform to power YouTube and Google Search. Google at its core is an AI/data company. Strengths revolve around TPUs, Gemini, BigQuery and its AI, data and security services. Google Cloud via Workspace also has a good footing at the business application and productivity layer. Gemini Enterprise Agent Platform has the potential to be a workflow orchestrator. Google Cloud lacks back-office application gravity, but the company is increasingly the AI engine in multi-cloud environments.

- Microsoft: The strategy for Microsoft revolves around integrating AI throughout the enterprise work surface and owning the context layer. Microsoft's existing enterprise footprint and distribution are unmatched via Microsoft 365, Copilot, GitHub, Teams, Power Platform and Dynamics. Azure Foundry, SQL Server/Azure SQL, OneLake and WorkIQ are also strengths. Microsoft wants to be the operating system for enterprise work with Azure being the AI infrastructure layer. Microsoft is moving into custom silicon with Maia and Cobalt chips, with the former focused on AI inference and the latter on general workloads. Microsoft's distribution and ecosystem are huge.

What the numbers say

Based on earnings reports from Alphabet (owner of Google), Amazon (owner of AWS) and Microsoft, it's safe to say that the companies are growing their cloud and AI businesses at a breakneck pace.

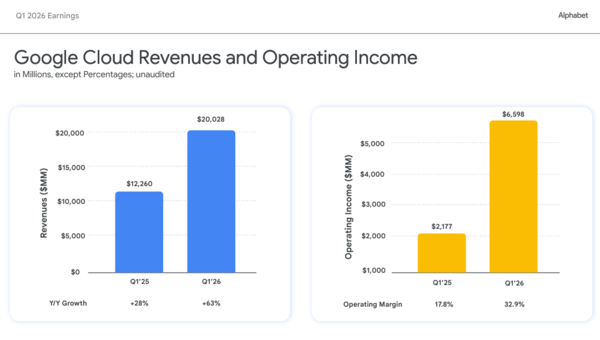

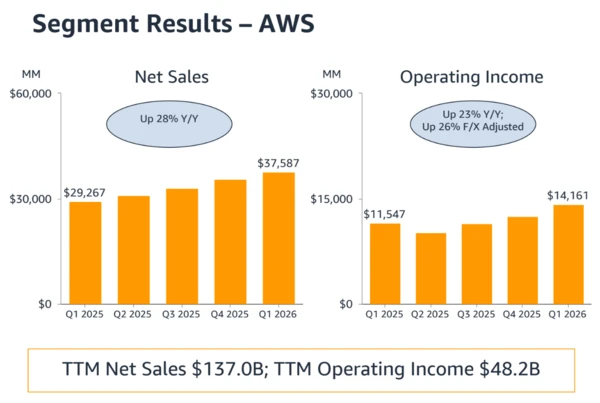

Google Cloud's first-quarter revenue growth was 63%, Microsoft Azure put up fiscal third-quarter revenue growth of 40% and AWS, which is working off a larger base, had revenue growth of 28%.

The integrated AI stack approach for Google Cloud led to a growth explosion. Google Cloud is now on an $80 billion annual revenue run rate.

Alphabet CEO Sundar Pichai reiterated the integrated AI stack theme that emerged from Google Cloud Next 2026. “Gemini Enterprise has great momentum with 40% quarter on quarter growth in paid monthly active users,” said Pichai. “These outstanding results are built on our differentiated, full stack approach. Our first-party models, like Gemini, are now processing more than 16 billion tokens per minute via direct API use by our customers, up 60% from last quarter.”

For Google Cloud, TPUs are a huge part of that integrated stack story. Google Cloud is selling its TPUs as a service, using them internally for Alphabet's various businesses and, in some cases, adding them to racks to host at customer sites. "TPUs are viewed overall as an opportunity for Google Cloud but at times it's direct sales of TPU hardware to a select group of customers. We take a ROIC approach and TPUs help us get more economies of scale in our overall compute environment," said Pichai.

Amazon CEO Andy Jassy also touted the AWS integrated stack. "Our custom silicon business is now one of the top three data center chip businesses in the world. We have momentum for our custom AI silicon," said Jassy.

He added that the app layer of the AWS stack can also change how people work.

"We continue to see people choosing AWS for AI, in part because of our really broad full stack functionality, in part because people want their inference as they scale it to be close to their data and their applications, so much more of it lives in AWS than elsewhere," said Jassy.

Nadella spent Microsoft's earnings call talking about agentic AI, WorkIQ and efficiency both internally and for customers. He also noted that Microsoft is a systems company and emphasized infrastructure knowhow.

He said:

"We are at the beginning of one of the most consequential platform shifts that will change the entire tech stack as agents proliferate and become the dominant workload. This will drive TAM expansion and change the value creation equation across the entire economy. To capture this opportunity, we are executing against 2 priorities. First, we are building the world's leading cloud and AI infrastructure for agentic computing era. Second, we are building high-value agentic systems across core domains such as productivity, coding and security. These two layers reinforce each other, and we are focused on driving competitive value and differentiation for customers across each so that they can eval-max their outcomes."

Key figures:

- Microsoft has reduced dock-to-live times for GPUs in its biggest regions by nearly 20% since the beginning of the year.

- The company's Fairwater data center in Wisconsin came online 6 weeks ahead of schedule.

- Microsoft delivered a 40% improvement in inference throughput for its most used models across Copilot.

- The Maia 200 AI accelerator is live in Microsoft's Iowa and Arizona data centers and delivers a 30% improvement in tokens per dollar compared to the latest silicon in Microsoft's fleet.

- More than 10,000 customers have used more than one model on Foundry and half of those have used open-source models.

The catch? These vertical integration efforts don’t come cheap.

- Microsoft said capital expenditures for calendar 2026 will be about $190 billion with $25 billion of that sum related to higher component costs.

- Alphabet said 2026 capital expenditures will be $180 billion to $190 billion, up from the previous $175 billion to $185 billion.

- Amazon's first quarter capital expenditures were $43.2 billion, primarily due to AWS and AI investments to meet demand. Amazon previously projected $200 billion in capital expenditures for 2026.
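
To put the three spending plans on one scale, here is a rough back-of-the-envelope sketch in Python. It uses only the figures in the bullets above and assumes they are comparable, which is a simplification: the numbers mix company guidance with an earlier projection, and the variable names are illustrative.

```python
# Figures in billions of US dollars, taken from the bullets above.
# Each entry is a (low, high) range; single-point figures repeat the value.
capex_2026 = {
    "Microsoft": (190, 190),  # "about $190 billion" for calendar 2026
    "Alphabet": (180, 190),   # current guided range
    "Amazon": (200, 200),     # earlier full-year projection
}

low = sum(lo for lo, _ in capex_2026.values())
high = sum(hi for _, hi in capex_2026.values())
print(f"Combined 2026 capex: ${low}B to ${high}B")

# Amazon's first-quarter spend as a share of its full-year projection.
amazon_q1 = 43.2
amazon_share = amazon_q1 / capex_2026["Amazon"][1]
print(f"Amazon Q1 share of full-year projection: {amazon_share:.1%}")
```

On those assumptions, the three companies together are planning roughly $570 billion to $580 billion in 2026 capital expenditures, and Amazon's first quarter accounts for a little over a fifth of its full-year projection.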

What's in it for you? Early benefits and risks later

Vertical integration from Google Cloud, AWS and Microsoft Azure isn't necessarily bad for enterprises, but it is a risk that requires planning. These emerging integrated AI stacks lower friction, simplify AI adoption and enable you to innovate faster. In theory, these vertically integrated AI stacks are more efficient and pass along savings to customers.

But vertical integration among cloud giants has the following risks:

- Lock-in shifts from compute, storage and databases to models, agents, vector stores, data catalogs and workflow automation. Exiting a cloud isn't easy and exiting an AI platform will be harder.

- As hyperscalers control each layer they'll have more monetization opportunities and pricing power. You may get bundle economics today and pay for hyperscalers' margin expansion later via consumption models and potential orchestration charges.

- Data gravity is AI gravity. Once your agents are grounded in one cloud you'll likely stay. Cloud providers are vertically integrating across the entire stack and that means you'll be more dependent on them at every level.

These high-level risks also have cloud-specific concerns.

For instance, the enterprise risk with AWS is infrastructure dependency that starts with dozens of services that accumulate in the architecture. AWS continually optimizes, but the overall architecture is hard to move. AWS is reducing your dependence on Nvidia for infrastructure, but you'll be more dependent on Trainium, Inferentia and Amazon Bedrock with related AI agent offerings.

The vertical integration risks with Google Cloud are similar, and they stem from the fact that the AI stack is technically elegant. If your semantic layer, datasets and governance are in Google Cloud's abstraction layers you could be stuck. Migration is more than moving tables because agentic AI means you'll have to move embeddings, prompts, model behavior, semantic layers, agent registries and a bevy of other things.
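
One way to make that migration surface concrete is to keep an inventory of AI assets and flag the ones with no documented export path. The Python sketch below is illustrative only: the `AIAsset` fields, the asset names and the `cloud-a` label are hypothetical, and a real inventory would come from your own catalogs rather than a hard-coded list.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AIAsset:
    name: str         # hypothetical asset name
    kind: str         # e.g. "embedding", "prompt", "semantic_layer"
    provider: str     # where the asset lives today
    exportable: bool  # is there a documented export path in an open format?


def exit_gaps(assets: List[AIAsset]) -> Dict[str, List[str]]:
    """Group assets that lack an export path by asset kind."""
    gaps: Dict[str, List[str]] = {}
    for asset in assets:
        if not asset.exportable:
            gaps.setdefault(asset.kind, []).append(asset.name)
    return gaps


# Hypothetical inventory for a single provider.
inventory = [
    AIAsset("support-faq-index", "embedding", "cloud-a", exportable=True),
    AIAsset("refund-policy-prompt", "prompt", "cloud-a", exportable=True),
    AIAsset("sales-metrics", "semantic_layer", "cloud-a", exportable=False),
    AIAsset("ops-agents", "agent_registry", "cloud-a", exportable=False),
]

gaps = exit_gaps(inventory)
print(gaps)
```

Anything that shows up in `gaps` is a candidate for lock-in: it either needs an export path or a plan to rebuild it elsewhere.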

Microsoft's vertical integration risks are a bit more subtle, but you've been dealing with them for years because chances are the company is already your dominant work surface. Microsoft can integrate AI where your employees already work.

Should Microsoft's AI offerings, notably something like WorkIQ, become embedded into business processes and workflows, it's going to be hard to move a context layer. Microsoft can become your enterprise AI control plane before neutral architectures are created.

As hyperscale clouds race to vertically integrate, CXOs need to think about architecture and mitigate future lock-in risks as much as they do the latest and greatest model. Here are a few things to think about:

- Separate data control from AI consumption. Your data layer needs to be as neutral as possible.

- Document prompts, evaluations, embeddings, vector stores, models and agent tools so you can exit a cloud provider if required.

- Maintain multiple clouds and continually benchmark them.

- Create plans that ensure your AI operating model won't be dependent on any one vendor.
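
As a minimal sketch of what vendor independence and continual benchmarking could look like in code, the Python example below puts every provider behind one small interface and runs the same prompts through each. Everything here is an assumption for illustration: `TextModel`, `EchoModel`, `benchmark` and the scoring function are hypothetical names, and the stand-in models do not call any real cloud SDK.

```python
import time
from typing import Callable, Dict, List, Protocol


class TextModel(Protocol):
    """Provider-neutral interface that application code depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in provider; a real adapter would wrap a vendor SDK here."""

    def __init__(self, tag: str) -> None:
        self.tag = tag

    def complete(self, prompt: str) -> str:
        return f"[{self.tag}] {prompt}"


def benchmark(
    models: Dict[str, TextModel],
    prompts: List[str],
    score: Callable[[str, str], float],
) -> Dict[str, Dict[str, float]]:
    """Run the same prompts through each model; record mean score and time."""
    results: Dict[str, Dict[str, float]] = {}
    for name, model in models.items():
        start = time.perf_counter()
        scores = [score(p, model.complete(p)) for p in prompts]
        elapsed = time.perf_counter() - start
        results[name] = {
            "mean_score": sum(scores) / len(scores),
            "seconds": elapsed,
        }
    return results


def contains_prompt(prompt: str, answer: str) -> float:
    """Toy evaluation: 1.0 if the answer repeats the prompt, else 0.0."""
    return 1.0 if prompt in answer else 0.0


results = benchmark(
    {"cloud-a": EchoModel("a"), "cloud-b": EchoModel("b")},
    ["Summarize first-quarter capital expenditures."],
    contains_prompt,
)
print(results)
```

The design choice is that prompts and evaluations live in your code rather than in a provider's console, so swapping or adding a provider means writing one adapter rather than reworking the application.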